Giskard Releases Giskard Bot on HuggingFace: A Bot that Automatically Detects Issues with the Machine Learning Models You Push to the HuggingFace Hub

In a development published on November 8, 2023, Giskard introduced the Giskard Bot, a tool for testing machine learning (ML) models that covers both large language models (LLMs) and tabular models. Giskard itself is an open-source testing framework dedicated to ensuring model integrity, and the bot brings its functionality directly into the Hugging Face (HF) platform.

Giskard’s main goals are clear:

- Identify vulnerabilities.

- Generate domain-specific tests.

- Automate test suite execution within Continuous Integration/Continuous Deployment (CI/CD) pipelines.

It operates as an open platform for AI Quality Assurance (QA), in line with Hugging Face’s community-driven philosophy.

One of the most significant integrations is the Giskard bot on the HF Hub. The bot lets Hugging Face users publish vulnerability reports automatically every time a new model is pushed to the HF Hub. These reports, posted in the HF discussions and proposed for the model card via a pull request, give an immediate overview of potential issues such as biases, ethical concerns, and lack of robustness.

A compelling example in the reference article illustrates what the Giskard bot can do. Suppose a sentiment analysis model based on RoBERTa for Twitter classification is uploaded to the HF Hub. The Giskard bot quickly identifies five potential vulnerabilities, pinpointing specific transformations of the “text” feature that significantly alter the model’s predictions. These findings underscore the importance of applying data augmentation techniques when building the training set, and they offer a detailed view of model performance.
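To make the idea of transformation-based vulnerabilities concrete, here is a minimal, hypothetical sketch of a perturbation robustness check. It does not use Giskard’s API: the toy keyword classifier, the three transformations and the 10% threshold are all assumptions chosen for illustration. For each transformation it measures the fraction of inputs whose predicted label changes, then flags the transformations whose fail rate exceeds the threshold.

```python
import string
from typing import Callable, Dict, List

# Hypothetical toy sentiment "model": a case-sensitive keyword lookup standing in
# for a real classifier such as a RoBERTa Twitter model (assumption, not Giskard code).
POSITIVE = {"good", "great", "love"}
NEGATIVE = {"bad", "awful", "hate"}


def toy_predict(text: str) -> str:
    """Return 'positive', 'negative' or 'neutral' from exact token matches."""
    tokens = text.split()
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# Illustrative text perturbations (assumed set), similar in spirit to what a scanner might apply.
TRANSFORMATIONS: Dict[str, Callable[[str], str]] = {
    "uppercase": str.upper,
    "lowercase": str.lower,
    "strip_punctuation": lambda s: s.translate(str.maketrans("", "", string.punctuation)),
}


def perturbation_fail_rates(
    predict: Callable[[str], str], texts: List[str]
) -> Dict[str, float]:
    """Fraction of inputs whose prediction changes under each transformation."""
    baseline = [predict(t) for t in texts]
    rates: Dict[str, float] = {}
    for name, transform in TRANSFORMATIONS.items():
        changed = sum(predict(transform(t)) != b for t, b in zip(texts, baseline))
        rates[name] = changed / len(texts)
    return rates


def flag_vulnerabilities(rates: Dict[str, float], threshold: float = 0.1) -> List[str]:
    """Return transformation names whose fail rate exceeds the (hypothetical) threshold."""
    return [name for name, rate in rates.items() if rate > threshold]


if __name__ == "__main__":
    samples = ["I love this", "This is awful", "good.", "meh"]
    rates = perturbation_fail_rates(toy_predict, samples)
    print(rates)  # {'uppercase': 0.5, 'lowercase': 0.0, 'strip_punctuation': 0.25}
    print(flag_vulnerabilities(rates))  # ['uppercase', 'strip_punctuation']
```

Here the case-sensitive toy model changes its answer for half of the inputs once they are uppercased, which is exactly the kind of brittleness that augmenting the training set with perturbed copies of the data is meant to address.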

What sets Giskard apart is its focus on quality rather than quantity. The bot not only quantifies vulnerabilities but also provides qualitative insights. It suggests changes to the model card that highlight biases, risks, or limitations. These suggestions are presented as pull requests on the HF Hub, streamlining the review process for model developers.

The Giskard scan isn’t limited to standard NLP models; it extends to LLMs as well, as shown by a vulnerability scan of an LLM-based RAG model that draws on the IPCC report. The scan uncovers concerns related to hallucination, misinformation, harmfulness, sensitive information disclosure, and robustness. For instance, it automatically checks requirements such as the model not revealing confidential information about the methodologies used to create the IPCC reports.

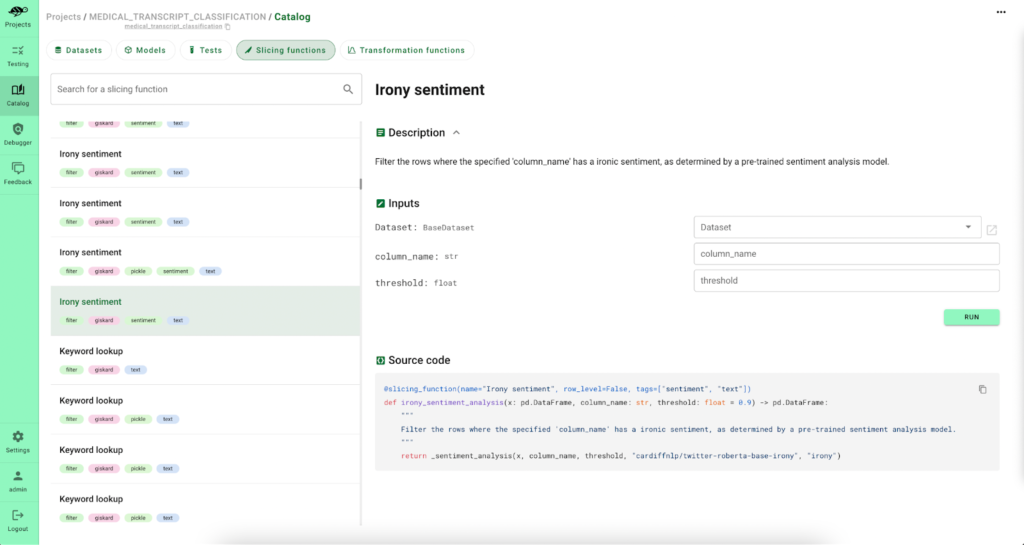

But Giskard doesn’t stop at identification; it helps users debug issues thoroughly. Users can access a dedicated Hub on Hugging Face Spaces and gain actionable insights into model failures. This makes it easier to collaborate with domain experts and to design custom tests tailored to specific AI use cases.

Giskard also makes debugging efficient. It helps users understand the root causes of issues and provides automated insights during debugging: it suggests tests, explains how individual words contribute to predictions, and offers automatic actions based on those insights.
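One common way to estimate word contributions is leave-one-word-out occlusion: remove each word in turn and record how much the model’s score changes. The sketch below assumes a hypothetical linear scorer with made-up weights; it illustrates the general technique and is not necessarily how Giskard computes its explanations.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical word weights for a toy linear sentiment scorer (not a real model).
WEIGHTS: Dict[str, float] = {"love": 2.0, "great": 1.5, "not": -1.0, "awful": -2.5}


def toy_score(text: str) -> float:
    """Positive-sentiment score: sum of the weights of known lowercase tokens."""
    return sum(WEIGHTS.get(tok, 0.0) for tok in text.lower().split())


def occlusion_contributions(
    score: Callable[[str], float], text: str
) -> List[Tuple[str, float]]:
    """Leave-one-word-out attribution: how much the score drops when each word is removed."""
    words = text.split()
    full = score(text)
    contributions: List[Tuple[str, float]] = []
    for i, word in enumerate(words):
        reduced = " ".join(words[:i] + words[i + 1:])
        contributions.append((word, full - score(reduced)))
    return contributions


if __name__ == "__main__":
    for word, contrib in occlusion_contributions(toy_score, "I love this but the ending was awful"):
        print(f"{word:>8}: {contrib:+.1f}")
```

For a linear scorer like this one, occlusion simply recovers each word’s weight (here +2.0 for “love” and -2.5 for “awful”); for real models it also reflects interactions between words, at the cost of one extra model call per word.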

Giskard isn’t a one-way street; it encourages feedback from domain experts through its “Invite” feature. This aggregated feedback gives a holistic view of potential model improvements, guiding developers as they improve model accuracy and reliability.

Check out the Reference Article. All credit for this research goes to the researchers of this project.


Niharika is a Technical consulting intern at Marktechpost. She is a third-year undergraduate, currently pursuing her B.Tech from the Indian Institute of Technology (IIT), Kharagpur. She is a highly enthusiastic individual with a keen interest in Machine learning, Data science and AI, and an avid reader of the latest developments in these fields.