Optimize AWS Inferentia utilization with FastAPI and PyTorch fashions on Amazon EC2 Inf1 & Inf2 cases

When deploying Deep Studying fashions at scale, it’s essential to successfully make the most of the underlying {hardware} to maximise efficiency and value advantages. For manufacturing workloads requiring excessive throughput and low latency, the collection of the Amazon Elastic Compute Cloud (EC2) occasion, mannequin serving stack, and deployment structure is essential. Inefficient structure can result in suboptimal utilization of the accelerators and unnecessarily excessive manufacturing price.

On this submit we stroll you thru the method of deploying FastAPI mannequin servers on AWS Inferentia units (discovered on Amazon EC2 Inf1 and Amazon EC Inf2 cases). We additionally display internet hosting a pattern mannequin that’s deployed in parallel throughout all NeuronCores for max {hardware} utilization.

Resolution overview

FastAPI is an open-source net framework for serving Python functions that’s a lot quicker than conventional frameworks like Flask and Django. It makes use of an Asynchronous Server Gateway Interface (ASGI) as an alternative of the broadly used Web Server Gateway Interface (WSGI). ASGI processes incoming requests asynchronously versus WSGI which processes requests sequentially. This makes FastAPI the perfect option to deal with latency delicate requests. You should use FastAPI to deploy a server that hosts an endpoint on an Inferentia (Inf1/Inf2) cases that listens to consumer requests by means of a delegated port.

Our goal is to realize highest efficiency at lowest price by means of most utilization of the {hardware}. This enables us to deal with extra inference requests with fewer accelerators. Every AWS Inferentia1 gadget incorporates 4 NeuronCores-v1 and every AWS Inferentia2 gadget incorporates two NeuronCores-v2. The AWS Neuron SDK permits us to make the most of every of the NeuronCores in parallel, which supplies us extra management in loading and inferring 4 or extra fashions in parallel with out sacrificing throughput.

With FastAPI, you may have your selection of Python net server (Gunicorn, Uvicorn, Hypercorn, Daphne). These net servers present and abstraction layer on high of the underlying Machine Studying (ML) mannequin. The requesting consumer has the good thing about being oblivious to the hosted mannequin. A consumer doesn’t must know the mannequin’s identify or model that has been deployed underneath the server; the endpoint identify is now only a proxy to a operate that masses and runs the mannequin. In distinction, in a framework-specific serving software, comparable to TensorFlow Serving, the mannequin’s identify and model are a part of the endpoint identify. If the mannequin modifications on the server facet, the consumer has to know and alter its API name to the brand new endpoint accordingly. Due to this fact, in case you are constantly evolving the model fashions, comparable to within the case of A/B testing, then utilizing a generic Python net server with FastAPI is a handy manner of serving fashions, as a result of the endpoint identify is static.

An ASGI server’s function is to spawn a specified variety of employees that hear for consumer requests and run the inference code. An essential functionality of the server is to ensure the requested variety of employees can be found and energetic. In case a employee is killed, the server should launch a brand new employee. On this context, the server and employees could also be recognized by their Unix course of ID (PID). For this submit, we use a Hypercorn server, which is a well-liked selection for Python net servers.

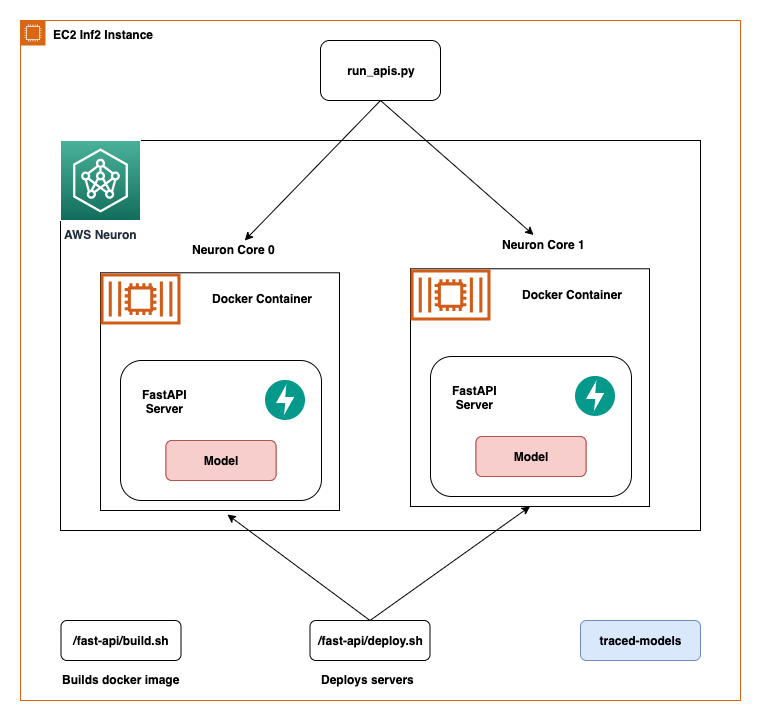

On this submit, we share finest practices to deploy deep studying fashions with FastAPI on AWS Inferentia NeuronCores. We present that you would be able to deploy a number of fashions on separate NeuronCores that may be known as concurrently. This setup will increase throughput as a result of a number of fashions could be inferred concurrently and NeuronCore utilization is totally optimized. The code could be discovered on the GitHub repo. The next determine reveals the structure of the best way to arrange the answer on an EC2 Inf2 occasion.

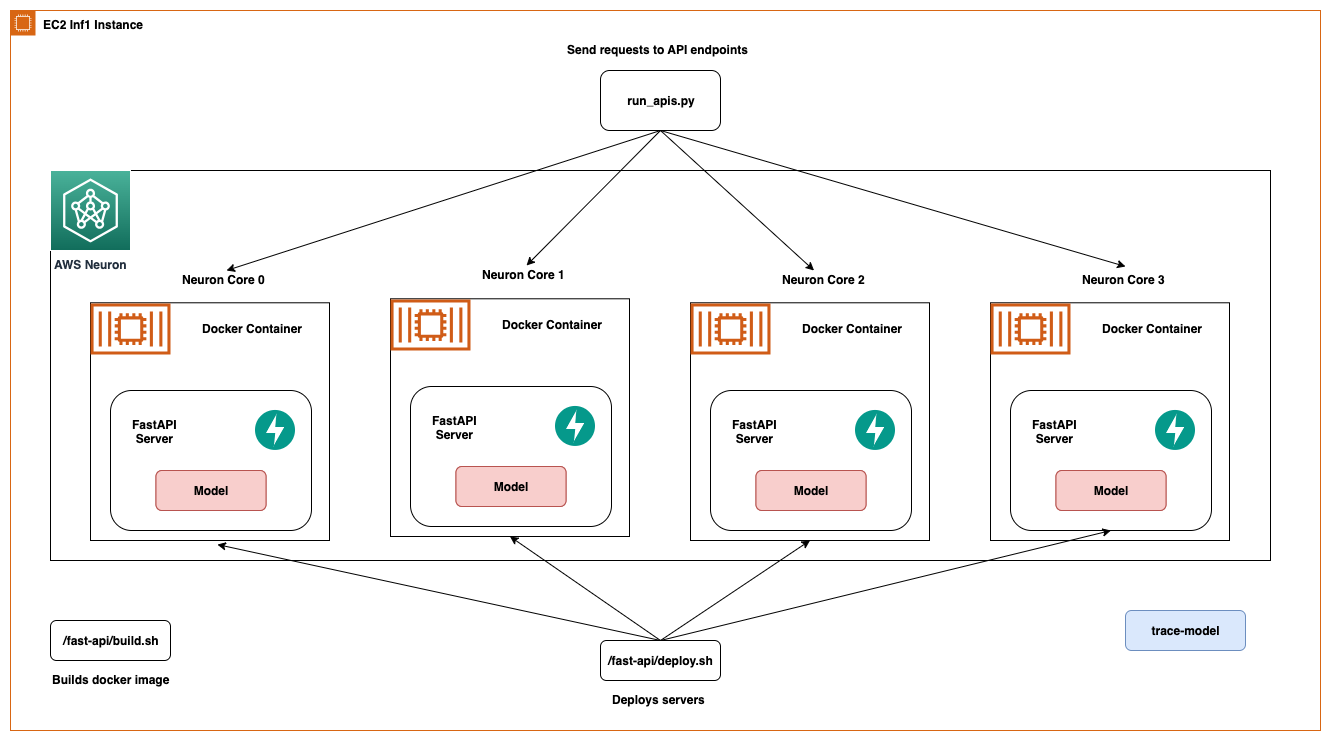

The identical structure applies to an EC2 Inf1 occasion kind besides it has 4 cores. In order that modifications the structure diagram just a little bit.

AWS Inferentia NeuronCores

Let’s dig just a little deeper into instruments supplied by AWS Neuron to have interaction with the NeuronCores. The next tables reveals the variety of NeuronCores in every Inf1 and Inf2 occasion kind. The host vCPUs and the system reminiscence are shared throughout all obtainable NeuronCores.

| Occasion Measurement | # Inferentia Accelerators | # NeuronCores-v1 | vCPUs | Reminiscence (GiB) |

| Inf1.xlarge | 1 | 4 | 4 | 8 |

| Inf1.2xlarge | 1 | 4 | 8 | 16 |

| Inf1.6xlarge | 4 | 16 | 24 | 48 |

| Inf1.24xlarge | 16 | 64 | 96 | 192 |

| Occasion Measurement | # Inferentia Accelerators | # NeuronCores-v2 | vCPUs | Reminiscence (GiB) |

| Inf2.xlarge | 1 | 2 | 4 | 32 |

| Inf2.8xlarge | 1 | 2 | 32 | 32 |

| Inf2.24xlarge | 6 | 12 | 96 | 192 |

| Inf2.48xlarge | 12 | 24 | 192 | 384 |

Inf2 cases comprise the brand new NeuronCores-v2 compared to the NeuronCore-v1 within the Inf1 cases. Regardless of fewer cores, they’re able to supply 4x larger throughput and 10x decrease latency than Inf1 cases. Inf2 cases are perfect for Deep Studying workloads like Generative AI, Giant Language Fashions (LLM) in OPT/GPT household and imaginative and prescient transformers like Secure Diffusion.

The Neuron Runtime is chargeable for working fashions on Neuron units. Neuron Runtime determines which NeuronCore will run which mannequin and the best way to run it. Configuration of Neuron Runtime is managed by means of the usage of environment variables on the course of stage. By default, Neuron framework extensions will care for Neuron Runtime configuration on the consumer’s behalf; nonetheless, specific configurations are additionally attainable to realize extra optimized habits.

Two standard surroundings variables are NEURON_RT_NUM_CORES and NEURON_RT_VISIBLE_CORES. With these surroundings variables, Python processes could be tied to a NeuronCore. With NEURON_RT_NUM_CORES, a specified variety of cores could be reserved for a course of, and with NEURON_RT_VISIBLE_CORES, a variety of NeuronCores could be reserved. For instance, NEURON_RT_NUM_CORES=2 myapp.py will reserve two cores and NEURON_RT_VISIBLE_CORES=’0-2’ myapp.py will reserve zero, one, and two cores for myapp.py. You possibly can reserve NeuronCores throughout units (AWS Inferentia chips) as properly. So, NEURON_RT_VISIBLE_CORES=’0-5’ myapp.py will reserve the primary 4 cores on device1 and one core on device2 in an Ec2 Inf1 occasion kind. Equally, on an EC2 Inf2 occasion kind, this configuration will reserve two cores throughout device1 and device2 and one core on device3. The next desk summarizes the configuration of those variables.

| Title | Description | Kind | Anticipated Values | Default Worth | RT Model |

NEURON_RT_VISIBLE_CORES |

Vary of particular NeuronCores wanted by the method | Integer vary (like 1-3) | Any worth or vary between 0 to Max NeuronCore within the system | None | 2.0+ |

NEURON_RT_NUM_CORES |

Variety of NeuronCores required by the method | Integer | A price from 1 to Max NeuronCore within the system | 0, which is interpreted as “all” | 2.0+ |

For a listing of all surroundings variables, consult with Neuron Runtime Configuration.

By default, when loading fashions, fashions get loaded onto NeuronCore 0 after which NeuronCore 1 except explicitly acknowledged by the previous surroundings variables. As specified earlier, the NeuronCores share the obtainable host vCPUs and system reminiscence. Due to this fact, fashions deployed on every NeuronCore will compete for the obtainable sources. This gained’t be a problem if the mannequin is using the NeuronCores to a big extent. But when a mannequin is working solely partly on the NeuronCores and the remaining on host vCPUs then contemplating CPU availability per NeuronCore develop into essential. This impacts the selection of the occasion as properly.

The next desk reveals variety of host vCPUs and system reminiscence obtainable per mannequin if one mannequin was deployed to every NeuronCore. Relying in your software’s NeuronCore utilization, vCPU, and reminiscence utilization, it’s endorsed to run checks to seek out out which configuration is most performant on your software. The Neuron Top tool may help in visualizing core utilization and gadget and host reminiscence utilization. Primarily based on these metrics an knowledgeable resolution could be made. We display the usage of Neuron Prime on the finish of this weblog.

| Occasion Measurement | # Inferentia Accelerators | # Fashions | vCPUs/Mannequin | Reminiscence/Mannequin (GiB) |

| Inf1.xlarge | 1 | 4 | 1 | 2 |

| Inf1.2xlarge | 1 | 4 | 2 | 4 |

| Inf1.6xlarge | 4 | 16 | 1.5 | 3 |

| Inf1.24xlarge | 16 | 64 | 1.5 | 3 |

| Occasion Measurement | # Inferentia Accelerators | # Fashions | vCPUs/Mannequin | Reminiscence/Mannequin (GiB) |

| Inf2.xlarge | 1 | 2 | 2 | 8 |

| Inf2.8xlarge | 1 | 2 | 16 | 64 |

| Inf2.24xlarge | 6 | 12 | 8 | 32 |

| Inf2.48xlarge | 12 | 24 | 8 | 32 |

To check out the Neuron SDK options your self, try the newest Neuron capabilities for PyTorch.

System setup

The next is the system setup used for this answer:

Arrange the answer

There are a few issues we have to do to setup the answer. Begin by creating an IAM function that your EC2 occasion goes to imagine that can enable it to push and pull from Amazon Elastic Container Registry.

Step 1: Setup the IAM function

- Begin by logging into the console and accessing IAM > Roles > Create Function

- Choose Trusted entity kind

AWS Service - Choose EC2 because the service underneath use-case

- Click on Subsequent and also you’ll be capable to see all insurance policies obtainable

- For the aim of this answer, we’re going to provide our EC2 occasion full entry to ECR. Filter for AmazonEC2ContainerRegistryFullAccess and choose it.

- Press subsequent and identify the function

inf-ecr-access

Word: the coverage we connected provides the EC2 occasion full entry to Amazon ECR. We strongly advocate following the principal of least-privilege for manufacturing workloads.

Step 2: Setup AWS CLI

If you happen to’re utilizing the prescribed Deep Studying AMI listed above, it comes with AWS CLI put in. If you happen to’re utilizing a special AMI (Amazon Linux 2023, Base Ubuntu and so on.), set up the CLI instruments by following this guide.

Upon getting the CLI instruments put in, configure the CLI utilizing the command aws configure. You probably have entry keys, you may add them right here however don’t essentially want them to work together with AWS companies. We’re counting on IAM roles to try this.

Word: We have to enter at-least one worth (default area or default format) to create the default profile. For this instance, we’re going with us-east-2 because the area and json because the default output.

Clone the Github repository

The GitHub repo gives all of the scripts essential to deploy fashions utilizing FastAPI on NeuronCores on AWS Inferentia cases. This instance makes use of Docker containers to make sure we will create reusable options. Included on this instance is the next config.properties file for customers to supply inputs.

The configuration file wants user-defined identify prefixes for the Docker picture and Docker containers. The construct.sh script within the fastapi and trace-model folders use this to create Docker photos.

Compile a mannequin on AWS Inferentia

We’ll begin with tracing the mannequin and producing a PyTorch Torchscript .pt file. Begin by accessing trace-model listing and modifying the .env file. Relying upon the kind of occasion you selected, modify the CHIP_TYPE inside the .env file. For instance, we are going to select Inf2 because the information. The identical steps apply to the deployment course of for Inf1.

Subsequent set the default area in the identical file. This area might be used to create an ECR repository and Docker photos might be pushed to this repository. Additionally on this folder, we offer all of the scripts essential to hint a bert-base-uncased mannequin on AWS Inferentia. This script might be used for many fashions obtainable on Hugging Face. The Dockerfile has all of the dependencies to run fashions with Neuron and runs the trace-model.py code because the entry level.

Neuron compilation defined

The Neuron SDK’s API carefully resembles the PyTorch Python API. The torch.jit.hint() from PyTorch takes the mannequin and pattern enter tensor as arguments. The pattern inputs are fed to the mannequin and the operations which might be invoked as that enter makes its manner by means of the mannequin’s layers are recorded as TorchScript. To be taught extra about JIT Tracing in PyTorch, consult with the next documentation.

Identical to torch.jit.hint(), you may examine to see in case your mannequin could be compiled on AWS Inferentia with the next code for inf1 cases.

For inf2, the library is known as torch_neuronx. Right here’s how one can check your mannequin compilation towards inf2 cases.

After creating the hint occasion, we will cross the instance tensor enter like so:

And eventually save the ensuing TorchScript output on native disk

As proven within the previous code, you should use compiler_args and optimizations to optimize the deployment. For an in depth listing of arguments for the torch.neuron.hint API, consult with PyTorch-Neuron trace python API.

Maintain the next essential factors in thoughts:

- The Neuron SDK doesn’t help dynamic tensor shapes as of this writing. Due to this fact, a mannequin should be compiled individually for various enter shapes. For extra info on working inference on variable enter shapes with bucketing, consult with Running inference on variable input shapes with bucketing.

- If you happen to face out of reminiscence points when compiling a mannequin, strive compiling the mannequin on an AWS Inferentia occasion with extra vCPUs or reminiscence, and even a big c6i or r6i occasion as compilation solely makes use of CPUs. As soon as compiled, the traced mannequin can in all probability be run on smaller AWS Inferentia occasion sizes.

Construct course of clarification

Now we are going to construct this container by working build.sh. The construct script file merely creates the Docker picture by pulling a base Deep Studying Container Picture and putting in the HuggingFace transformers package deal. Primarily based on the CHIP_TYPE specified within the .env file, the docker.properties file decides the suitable BASE_IMAGE. This BASE_IMAGE factors to a Deep Studying Container Picture for Neuron Runtime supplied by AWS.

It’s obtainable by means of a personal ECR repository. Earlier than we will pull the picture, we have to login and get non permanent AWS credentials.

Word: we have to change the area listed within the command specified by the area flag and inside the repository URI with the area we put within the .env file.

For the aim of constructing this course of simpler, we will use the fetch-credentials.sh file. The area might be taken from the .env file robotically.

Subsequent, we’ll push the picture utilizing the script push.sh. The push script creates a repository in Amazon ECR for you and pushes the container picture.

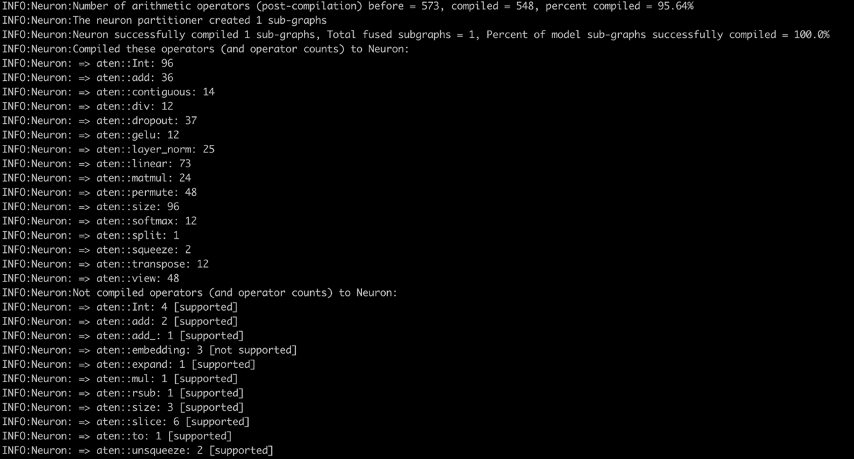

Lastly, when the picture is constructed and pushed, we will run it as a container by working run.sh and tail working logs with logs.sh. Within the compiler logs (see the next screenshot), you will notice the proportion of arithmetic operators compiled on Neuron and proportion of mannequin sub-graphs efficiently compiled on Neuron. The screenshot reveals the compiler logs for the bert-base-uncased-squad2 mannequin. The logs present that 95.64% of the arithmetic operators had been compiled, and it additionally provides a listing of operators that had been compiled on Neuron and those who aren’t supported.

Here is a list of all supported operators within the newest PyTorch Neuron package deal. Equally, here is the list of all supported operators within the newest PyTorch Neuronx package deal.

Deploy fashions with FastAPI

After the fashions are compiled, the traced mannequin might be current within the trace-model folder. On this instance, we’ve got positioned the traced mannequin for a batch measurement of 1. We take into account a batch measurement of 1 right here to account for these use circumstances the place a better batch measurement is just not possible or required. To be used circumstances the place larger batch sizes are wanted, the torch.neuron.DataParallel (for Inf1) or torch.neuronx.DataParallel (for Inf2) API might also be helpful.

The fast-api folder gives all the mandatory scripts to deploy fashions with FastAPI. To deploy the fashions with none modifications, merely run the deploy.sh script and it’ll construct a FastAPI container picture, run containers on the desired variety of cores, and deploy the desired variety of fashions per server in every FastAPI mannequin server. This folder additionally incorporates a .env file, modify it to replicate the right CHIP_TYPE and AWS_DEFAULT_REGION.

Word: FastAPI scripts depend on the identical surroundings variables used to construct, push and run the pictures as containers. FastAPI deployment scripts will use the final identified values from these variables. So, in case you traced the mannequin for Inf1 occasion kind final, that mannequin might be deployed by means of these scripts.

The fastapi-server.py file which is chargeable for internet hosting the server and sending the requests to the mannequin does the next:

- Reads the variety of fashions per server and the placement of the compiled mannequin from the properties file

- Units seen NeuronCores as surroundings variables to the Docker container and reads the surroundings variables to specify which NeuronCores to make use of

- Offers an inference API for the

bert-base-uncased-squad2mannequin - With

jit.load(), masses the variety of fashions per server as specified within the config and shops the fashions and the required tokenizers in international dictionaries

With this setup, it will be comparatively straightforward to arrange APIs that listing which fashions and what number of fashions are saved in every NeuronCore. Equally, APIs might be written to delete fashions from particular NeuronCores.

The Dockerfile for constructing FastAPI containers is constructed on the Docker picture we constructed for tracing the fashions. Because of this the docker.properties file specifies the ECR path to the Docker picture for tracing the fashions. In our setup, the Docker containers throughout all NeuronCores are related, so we will construct one picture and run a number of containers from one picture. To keep away from any entry level errors, we specify ENTRYPOINT ["/usr/bin/env"] within the Dockerfile earlier than working the startup.sh script, which seems like hypercorn fastapi-server:app -b 0.0.0.0:8080. This startup script is similar for all containers. If you happen to’re utilizing the identical base picture as for tracing fashions, you may construct this container by merely working the construct.sh script. The push.sh script stays the identical as earlier than for tracing fashions. The modified Docker picture and container identify are supplied by the docker.properties file.

The run.sh file does the next:

- Reads the Docker picture and container identify from the properties file, which in flip reads the

config.propertiesfile, which has anum_coresconsumer setting - Begins a loop from 0 to

num_coresand for every core:- Units the port quantity and gadget quantity

- Units the

NEURON_RT_VISIBLE_CORESsurroundings variable - Specifies the amount mount

- Runs a Docker container

For readability, the Docker run command for deploying in NeuronCore 0 for Inf1 would seem like the next code:

The run command for deploying in NeuronCore 5 would seem like the next code:

After the containers are deployed, we use the run_apis.py script, which calls the APIs in parallel threads. The code is ready as much as name six fashions deployed, one on every NeuronCore, however could be simply modified to a special setting. We name the APIs from the consumer facet as follows:

Monitor NeuronCore

After the mannequin servers are deployed, to watch NeuronCore utilization, we might use neuron-top to watch in actual time the utilization proportion of every NeuronCore. neuron-top is a CLI software within the Neuron SDK to supply info comparable to NeuronCore, vCPU, and reminiscence utilization. In a separate terminal, enter the next command:

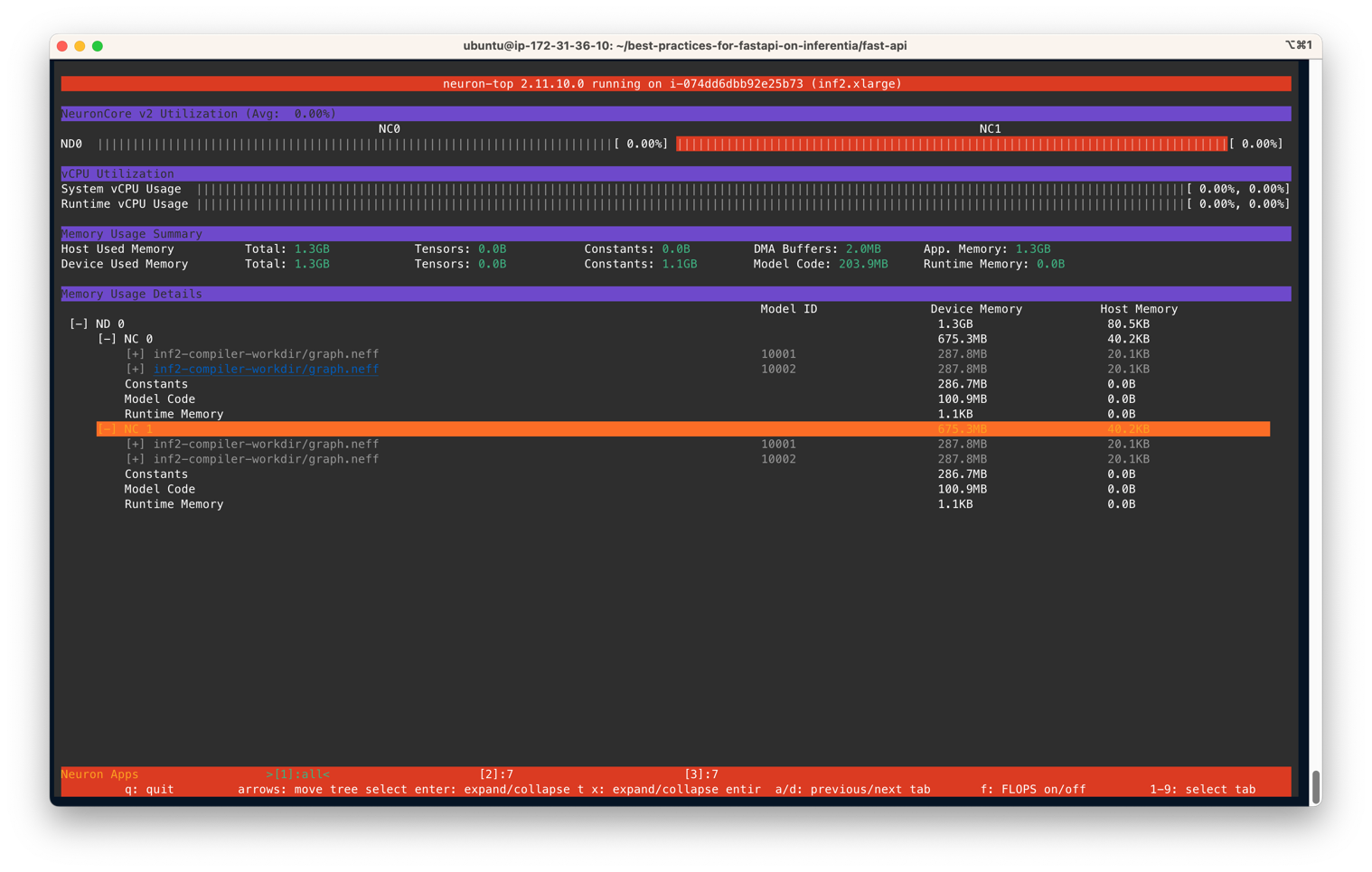

You output ought to be much like the next determine. On this state of affairs, we’ve got specified to make use of two NeuronCores and two fashions per server on an Inf2.xlarge occasion. The next screenshot reveals that two fashions of measurement 287.8MB every are loaded on two NeuronCores. With a complete of 4 fashions loaded, you may see the gadget reminiscence used is 1.3 GB. Use the arrow keys to maneuver between the NeuronCores on totally different units

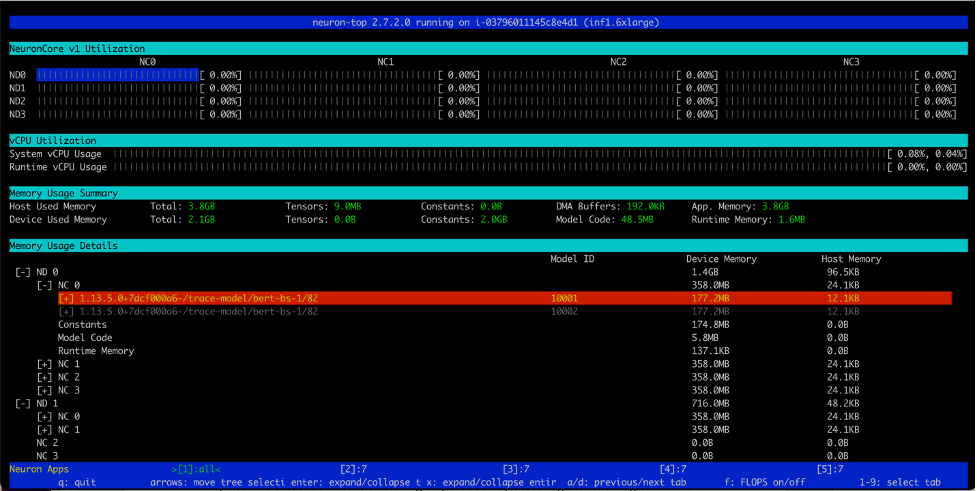

Equally, on an Inf1.16xlarge occasion kind we see a complete of 12 fashions (2 fashions per core over 6 cores) loaded. A complete reminiscence of two.1GB is consumed and each mannequin is 177.2MB in measurement.

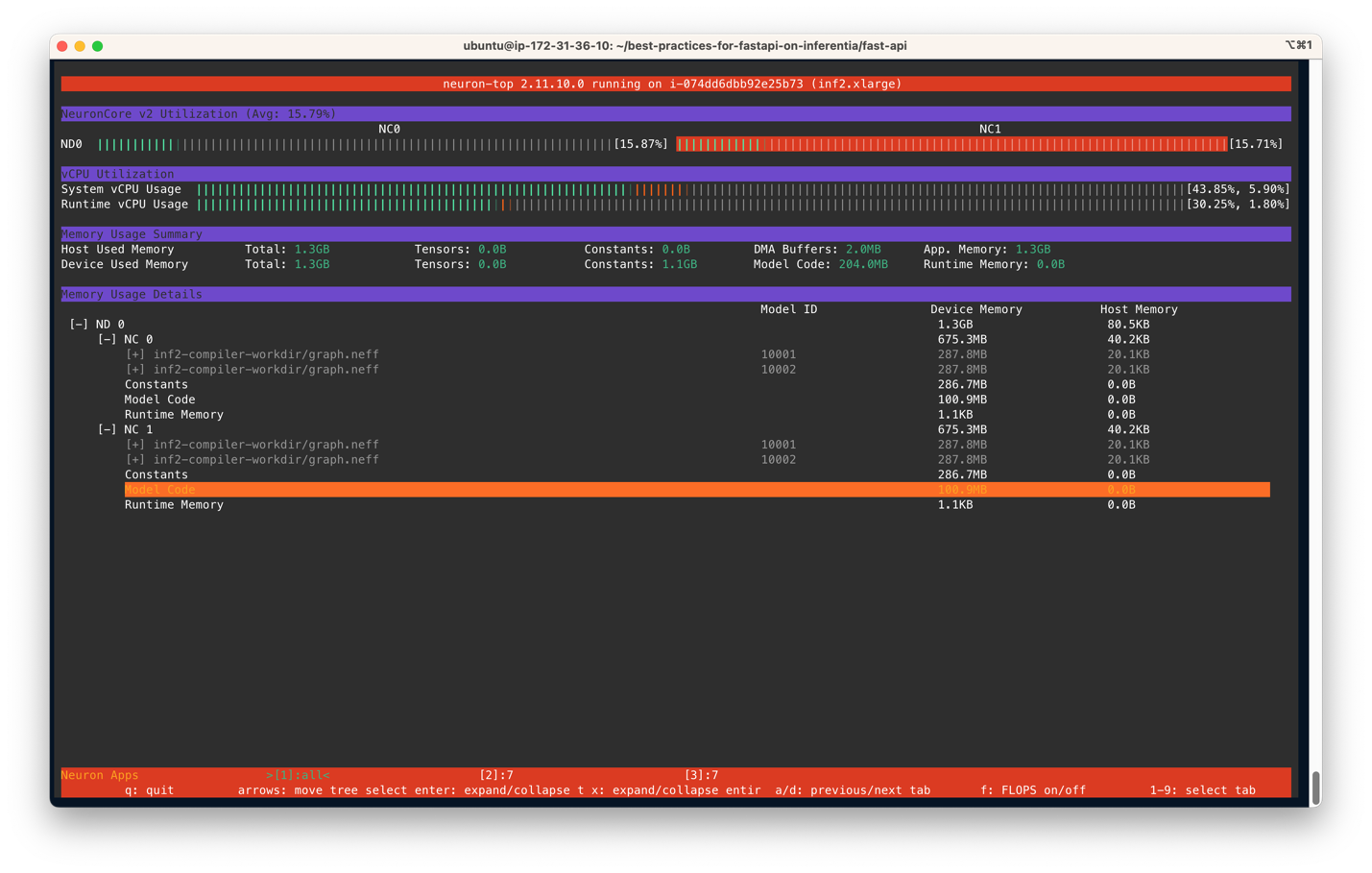

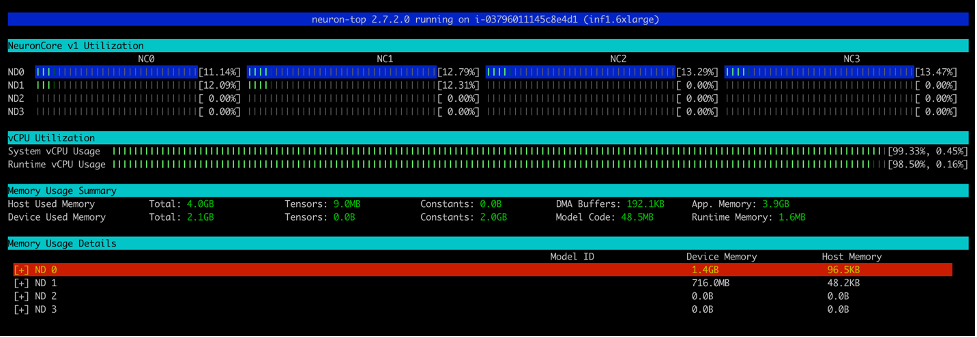

After you run the run_apis.py script, you may see the proportion of utilization of every of the six NeuronCores (see the next screenshot). You can even see the system vCPU utilization and runtime vCPU utilization.

The next screenshot reveals the Inf2 occasion core utilization proportion.

Equally, this screenshot reveals core utilization in an inf1.6xlarge occasion kind.

Clear up

To scrub up all of the Docker containers you created, we offer a cleanup.sh script that removes all working and stopped containers. This script will take away all containers, so don’t use it if you wish to preserve some containers working.

Conclusion

Manufacturing workloads typically have excessive throughput, low latency, and value necessities. Inefficient architectures that sub-optimally make the most of accelerators may result in unnecessarily excessive manufacturing prices. On this submit, we confirmed the best way to optimally make the most of NeuronCores with FastAPI to maximise throughput at minimal latency. We’ve got printed the directions on our GitHub repo. With this answer structure, you may deploy a number of fashions in every NeuronCore and function a number of fashions in parallel on totally different NeuronCores with out shedding efficiency. For extra info on the best way to deploy fashions at scale with companies like Amazon Elastic Kubernetes Service (Amazon EKS), consult with Serve 3,000 deep learning models on Amazon EKS with AWS Inferentia for under $50 an hour.

Concerning the authors

Ankur Srivastava is a Sr. Options Architect within the ML Frameworks Group. He focuses on serving to prospects with self-managed distributed coaching and inference at scale on AWS. His expertise contains industrial predictive upkeep, digital twins, probabilistic design optimization and has accomplished his doctoral research from Mechanical Engineering at Rice College and post-doctoral analysis from Massachusetts Institute of Expertise.

Ankur Srivastava is a Sr. Options Architect within the ML Frameworks Group. He focuses on serving to prospects with self-managed distributed coaching and inference at scale on AWS. His expertise contains industrial predictive upkeep, digital twins, probabilistic design optimization and has accomplished his doctoral research from Mechanical Engineering at Rice College and post-doctoral analysis from Massachusetts Institute of Expertise.

Ok.C. Tung is a Senior Resolution Architect in AWS Annapurna Labs. He makes a speciality of massive deep studying mannequin coaching and deployment at scale in cloud. He has a Ph.D. in molecular biophysics from the College of Texas Southwestern Medical Middle in Dallas. He has spoken at AWS Summits and AWS Reinvent. As we speak he helps prospects to coach and deploy massive PyTorch and TensorFlow fashions in AWS cloud. He’s the creator of two books: Learn TensorFlow Enterprise and TensorFlow 2 Pocket Reference.

Ok.C. Tung is a Senior Resolution Architect in AWS Annapurna Labs. He makes a speciality of massive deep studying mannequin coaching and deployment at scale in cloud. He has a Ph.D. in molecular biophysics from the College of Texas Southwestern Medical Middle in Dallas. He has spoken at AWS Summits and AWS Reinvent. As we speak he helps prospects to coach and deploy massive PyTorch and TensorFlow fashions in AWS cloud. He’s the creator of two books: Learn TensorFlow Enterprise and TensorFlow 2 Pocket Reference.

Pronoy Chopra is a Senior Options Architect with the Startups Generative AI group at AWS. He makes a speciality of architecting and creating IoT and Machine Studying options. He has co-founded two startups previously and enjoys being hands-on with initiatives within the IoT, AI/ML and Serverless area.

Pronoy Chopra is a Senior Options Architect with the Startups Generative AI group at AWS. He makes a speciality of architecting and creating IoT and Machine Studying options. He has co-founded two startups previously and enjoys being hands-on with initiatives within the IoT, AI/ML and Serverless area.