An open-source gymnasium for machine learning assisted computer architecture design – Google Research Blog

Computer architecture research has a long history of developing simulators and tools to evaluate and shape the design of computer systems. For example, the SimpleScalar simulator was introduced in the late 1990s and allowed researchers to explore various microarchitectural ideas. Computer architecture simulators and tools, such as gem5, DRAMSys, and many more, have played a significant role in advancing computer architecture research. Since then, these shared resources and infrastructure have benefited industry and academia and have enabled researchers to systematically build on each other’s work, leading to significant advances in the field.

However, computer architecture research is evolving, with industry and academia turning towards machine learning (ML) optimization to meet stringent domain-specific requirements, such as ML for computer architecture, ML for TinyML acceleration, DNN accelerator datapath optimization, memory controllers, power consumption, security, and privacy. Although prior work has demonstrated the benefits of ML in design optimization, the lack of strong, reproducible baselines hinders fair and objective comparison across different methods and poses several challenges to their deployment. To ensure steady progress, it is imperative to understand and tackle these challenges collectively.

To alleviate these challenges, in “ArchGym: An Open-Source Gymnasium for Machine Learning Assisted Architecture Design”, accepted at ISCA 2023, we introduced ArchGym, which includes a variety of computer architecture simulators and ML algorithms. Enabled by ArchGym, our results indicate that with a sufficiently large number of samples, any of a diverse collection of ML algorithms is capable of finding the optimal set of architecture design parameters for each target problem; no one solution is necessarily better than another. These results further indicate that selecting the optimal hyperparameters for a given ML algorithm is essential for finding the optimal architecture design, but choosing them is non-trivial. We release the code and dataset across multiple computer architecture simulations and ML algorithms.

Challenges in ML-assisted architecture research

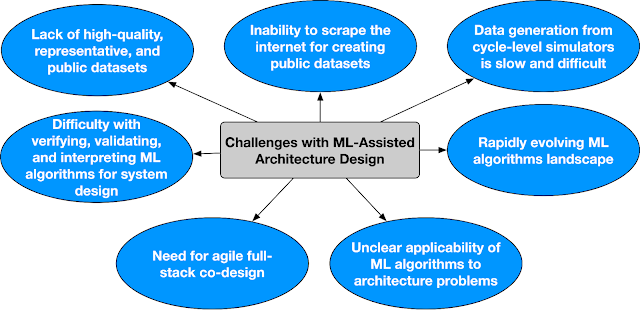

ML-assisted architecture research poses several challenges, including:

- For a specific ML-assisted computer architecture problem (e.g., finding an optimal solution for a DRAM controller) there is no systematic way to identify optimal ML algorithms or hyperparameters (e.g., learning rate, warm-up steps, etc.). There is a wide range of ML and heuristic methods, from random walk to reinforcement learning (RL), that can be employed for design space exploration (DSE). While these methods have shown noticeable performance improvement over their choice of baselines, it is not evident whether the improvements are because of the choice of optimization algorithms or hyperparameters. Thus, to ensure reproducibility and facilitate widespread adoption of ML-aided architecture DSE, it is necessary to outline a systematic benchmarking methodology.

- While computer architecture simulators have been the backbone of architectural innovations, there is an emerging need to address the trade-offs between accuracy, speed, and cost in architecture exploration. The accuracy and speed of performance estimation vary widely from one simulator to another, depending on the underlying modeling details (e.g., cycle-accurate vs. ML-based proxy models). While analytical or ML-based proxy models are nimble by virtue of discarding low-level details, they generally suffer from high prediction error. Also, due to commercial licensing, there can be strict limits on the number of runs collected from a simulator. Overall, these constraints exhibit distinct performance vs. sample efficiency trade-offs, affecting the choice of optimization algorithm for architecture exploration. It is challenging to delineate how to systematically compare the effectiveness of various ML algorithms under these constraints.

- Finally, the landscape of ML algorithms is rapidly evolving and some ML algorithms need data to be useful. Additionally, rendering the outcome of DSE into meaningful artifacts such as datasets is critical for drawing insights about the design space. In this rapidly evolving ecosystem, it is important to determine how to amortize the overhead of search algorithms for architecture exploration. It is not apparent, nor systematically studied, how to leverage exploration data while being agnostic to the underlying search algorithm.

ArchGym design

ArchGym addresses these challenges by providing a unified framework for evaluating different ML-based search algorithms fairly. It comprises two main components: 1) the ArchGym environment and 2) the ArchGym agent. The environment is an encapsulation of the architecture cost model paired with the target workload(s). The cost model includes latency, throughput, area, energy, etc., and determines the computational cost of running the workload, given a set of architectural parameters. The agent is an encapsulation of the ML algorithm used for the search and consists of hyperparameters and a guiding policy. The hyperparameters are intrinsic to the algorithm for which the model is to be optimized and can significantly influence performance. The policy, on the other hand, determines how the agent selects a parameter iteratively to optimize the target objective.

Notably, ArchGym also includes a standardized interface that connects these two components, while also saving the exploration data as the ArchGym Dataset. At its core, the interface entails three main signals: hardware state, hardware parameters, and metrics. These signals are the bare minimum to establish a meaningful communication channel between the environment and the agent. Using these signals, the agent observes the state of the hardware and suggests a set of hardware parameters to iteratively optimize a (user-defined) reward. The reward is a function of hardware performance metrics, such as performance, energy consumption, etc.
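The interaction between the two components can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not ArchGym's actual API: the class names `ToyMemoryEnv` and `RandomWalkAgent`, the parameters `queue_depth` and `page_policy`, and the analytical cost model are all hypothetical stand-ins for a real simulator and search algorithm.

```python
import random
from dataclasses import dataclass, field


@dataclass
class ToyMemoryEnv:
    """Hypothetical environment: a made-up analytical cost model, not a real simulator."""
    target_latency: float = 10.0
    log: list = field(default_factory=list)  # exploration data, one record per step

    def step(self, params: dict):
        # Made-up cost model: latency falls with queue depth, energy rises with it.
        latency = 40.0 / params["queue_depth"] + 0.5 * params["page_policy"]
        energy = 1.0 + 0.2 * params["queue_depth"]
        metrics = {"latency": latency, "energy": energy}
        # User-defined reward: the closer to the latency target, the better.
        reward = -abs(latency - self.target_latency)
        state = dict(metrics)  # hardware state observed by the agent
        self.log.append({"params": dict(params), "metrics": metrics, "reward": reward})
        return state, reward, metrics


@dataclass
class RandomWalkAgent:
    """Hypothetical agent: a hyperparameter (seed) plus a policy (uniform sampling)."""
    seed: int = 0

    def __post_init__(self):
        self.rng = random.Random(self.seed)

    def act(self, state: dict) -> dict:
        # This simple policy ignores the observed state; a learned policy would use it.
        return {"queue_depth": self.rng.randint(1, 16),
                "page_policy": self.rng.randint(0, 1)}


def explore(env, agent, num_steps):
    """Run the agent against the environment and return (best_reward, best_params)."""
    state, best = {}, None
    for _ in range(num_steps):
        params = agent.act(state)
        state, reward, _ = env.step(params)
        if best is None or reward > best[0]:
            best = (reward, params)
    return best


env = ToyMemoryEnv()
best_reward, best_params = explore(env, RandomWalkAgent(seed=0), num_steps=50)
print(best_reward, best_params, len(env.log))
```

Because every step is appended to `env.log`, the same loop that drives the search also produces a dataset, independent of which agent generated the samples.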

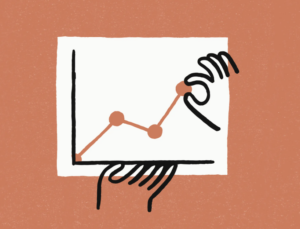

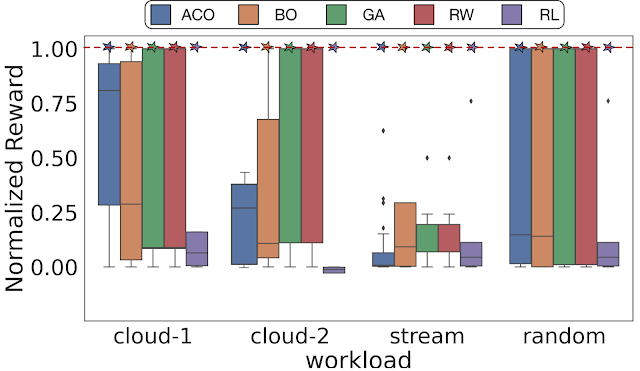

ML algorithms could be equally favorable to meet user-defined target specifications

Using ArchGym, we empirically demonstrate that across different optimization objectives and DSE problems, at least one set of hyperparameters exists that results in the same hardware performance as other ML algorithms. A poorly selected (random selection) hyperparameter for the ML algorithm or its baseline can lead to a misleading conclusion that a particular family of ML algorithms is better than another. We show that with sufficient hyperparameter tuning, different search algorithms, even random walk (RW), are able to identify the best possible reward. However, note that finding the right set of hyperparameters may require exhaustive search or even luck to make it competitive.
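To make the role of hyperparameters concrete, the sketch below sweeps a single hyperparameter of a simple search on a toy objective. Everything here is an assumption made for illustration: the objective, the `greedy_random_walk` search, and the `step_size` hyperparameter are hypothetical and are not taken from ArchGym.

```python
import random


def reward(x: int) -> float:
    # Made-up objective whose maximum (0.0) is at design point x = 50.
    return -float((x - 50) ** 2)


def greedy_random_walk(step_size: int, budget: int, seed: int) -> float:
    """Hypothetical search: propose a nearby point and keep it if the reward improves."""
    rng = random.Random(seed)
    x = 0
    best = reward(x)
    for _ in range(budget):
        candidate = min(63, max(0, x + rng.randint(-step_size, step_size)))
        if reward(candidate) > best:
            x, best = candidate, reward(candidate)
    return best


def sweep(step_sizes, budget=100, seeds=range(5)):
    # For each hyperparameter value, report the best reward found over several seeds.
    return {s: max(greedy_random_walk(s, budget, seed) for seed in seeds)
            for s in step_sizes}


results = sweep([1, 4, 16])
for step_size, best in results.items():
    print(f"step_size={step_size:2d}  best reward={best}")
```

With a small step size the walk may not reach the optimum within the sample budget. Judged on that setting alone, the algorithm could look weaker than it is, which is the kind of misleading comparison described above.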

With a sufficient number of samples, there exists at least one set of hyperparameters that results in the same performance across a range of search algorithms. Here the dashed line represents the maximum normalized reward. Cloud-1, cloud-2, stream, and random indicate four different memory traces for DRAMSys (DRAM subsystem design space exploration framework).

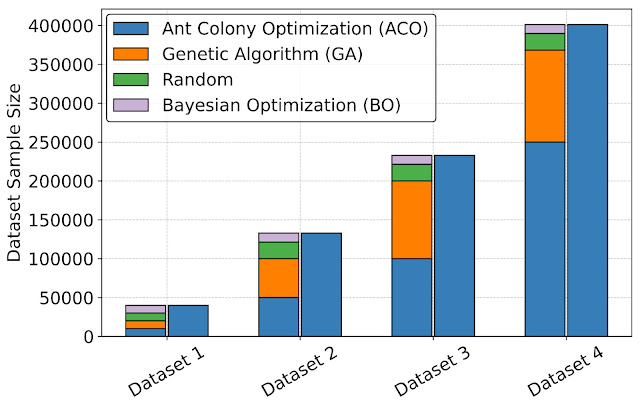

Dataset construction and high-fidelity proxy model training

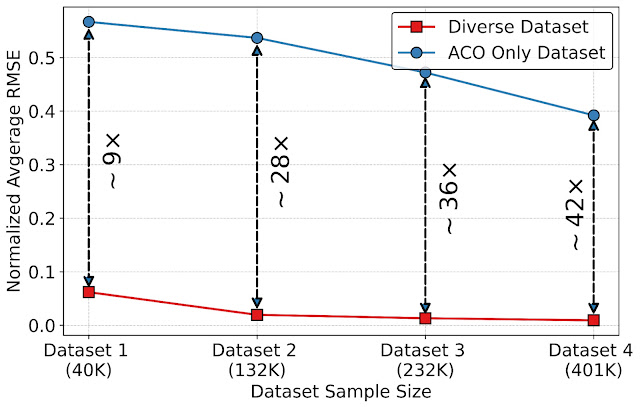

Creating a unified interface using ArchGym also enables the creation of datasets that can be used to design better data-driven ML-based proxy architecture cost models to improve the speed of architecture simulation. To evaluate the benefits of datasets in building an ML model to approximate architecture cost, we leverage ArchGym’s ability to log the data from each DRAMSys run to create four dataset variants, each with a different number of data points. For each variant, we create two categories: (a) Diverse Dataset, which represents the data collected from different agents (ACO, GA, RW, and BO), and (b) ACO only, which shows the data collected exclusively from the ACO agent, both of which are released along with ArchGym. We train a proxy model on each dataset using random forest regression with the objective of predicting the latency of designs for a DRAM simulator. Our results show that:

- As we increase the dataset size, the average normalized root mean squared error (RMSE) slightly decreases.

- However, as we introduce diversity in the dataset (e.g., collecting data from different agents), we observe 9× to 42× lower RMSE across different dataset sizes.
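For readers unfamiliar with the metric, here is a minimal sketch of a normalized RMSE computation. The post does not say how the RMSE is normalized, so dividing by the range of the true values is an assumption made here, and the latency values and the two proxy predictions are made up for illustration.

```python
import math


def normalized_rmse(y_true, y_pred):
    """RMSE divided by the range of the true values (this normalization is an assumption)."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    spread = max(y_true) - min(y_true)
    if spread == 0:
        raise ValueError("true values have zero range")
    return math.sqrt(mse) / spread


# Made-up latencies (arbitrary units) and two hypothetical proxy predictions.
true_latency = [10.0, 12.0, 15.0, 20.0]
proxy_a = [10.5, 11.5, 15.5, 19.5]  # off by 0.5 at every point
proxy_b = [12.0, 14.0, 13.0, 18.0]  # off by 2.0 at every point

print(normalized_rmse(true_latency, proxy_a))
print(normalized_rmse(true_latency, proxy_b))
```

In this toy case the second proxy's normalized RMSE is four times that of the first, which is how a ratio such as "9× lower RMSE" between two trained models would be read.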

Diverse dataset collection across different agents using the ArchGym interface.

The impact of a diverse dataset and dataset size on the normalized RMSE.

The need for a community-driven ecosystem for ML-assisted architecture research

While ArchGym is an initial effort towards creating an open-source ecosystem that (1) connects a broad range of search algorithms to computer architecture simulators in a unified and easy-to-extend manner, (2) facilitates research in ML-assisted computer architecture, and (3) forms the scaffold to develop reproducible baselines, there are many open challenges that need community-wide support. Below we outline some of the open challenges in ML-assisted architecture design. Addressing these challenges requires a well-coordinated effort and a community-driven ecosystem.

Key challenges in ML-assisted architecture design.

We call this ecosystem Architecture 2.0. We outline the key challenges and a vision for building an inclusive ecosystem of interdisciplinary researchers to tackle the long-standing open problems in applying ML for computer architecture research. If you are interested in helping shape this ecosystem, please fill out the interest survey.

Conclusion

ArchGym is an open source gymnasium for ML architecture DSE and enables a standardized interface that can be readily extended to suit different use cases. Additionally, ArchGym enables fair and reproducible comparison between different ML algorithms and helps to establish stronger baselines for computer architecture research problems.

We invite the computer architecture community as well as the ML community to actively participate in the development of ArchGym. We believe that the creation of a gymnasium-type environment for computer architecture research would be a significant step forward in the field and provide a platform for researchers to use ML to accelerate research and lead to new and innovative designs.

Acknowledgements

This blogpost is based on joint work with several co-authors at Google and Harvard University. We would like to acknowledge and highlight Srivatsan Krishnan (Harvard) who contributed several ideas to this project in collaboration with Shvetank Prakash (Harvard), Jason Jabbour (Harvard), Ikechukwu Uchendu (Harvard), Susobhan Ghosh (Harvard), Behzad Boroujerdian (Harvard), Daniel Richins (Harvard), Devashree Tripathy (Harvard), and Thierry Thambe (Harvard). In addition, we would also like to thank James Laudon, Douglas Eck, Cliff Young, and Aleksandra Faust for their support, feedback, and motivation for this work. We would also like to thank John Guilyard for the animated figure used in this post. Amir Yazdanbakhsh is now a Research Scientist at Google DeepMind and Vijay Janapa Reddi is an Associate Professor at Harvard.