A Gentle Introduction to Principal Component Analysis (PCA) in Python

Image by Author | Ideogram

Principal component analysis (PCA) is one of the most popular techniques for reducing the dimensionality of high-dimensional data. It is an important data transformation process in many real-world scenarios and industries, such as image processing, finance, genetics, and machine learning applications, where data contains many features that need to be analyzed more efficiently.

There are many reasons why dimensionality reduction techniques like PCA matter, and three of them stand out:

- Efficiency: reducing the number of features in your data reduces the computational cost of data-intensive processes like training advanced machine learning models.

- Interpretability: projecting your data into a low-dimensional space while keeping its key patterns and properties makes it easier to interpret and to visualize in 2D or 3D, which can sometimes reveal insights.

- Noise reduction: high-dimensional data may contain redundant or noisy features. PCA can help remove this redundancy by discarding low-variance components while preserving (or even improving) the effectiveness of subsequent analyses.
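Here is a toy illustration of the redundancy point. It is a minimal sketch on synthetic data, not MNIST. One feature is an exact linear combination of two others, and another is a slightly noisy copy. PCA's explained variance ratios then show that almost all the variance lives in just two directions.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)

# Two independent, informative features
informative = rng.normal(size=(500, 2))
# A third feature that is an exact linear combination of the first two (fully redundant)
redundant = informative @ np.array([[2.0], [-1.0]])
# A fourth feature that is a slightly noisy copy of the first one
noisy_copy = informative[:, [0]] + 0.05 * rng.normal(size=(500, 1))

X_demo = np.hstack([informative, redundant, noisy_copy])

pca = PCA().fit(X_demo)
# Share of the total variance captured by each principal component, largest first
print(np.round(pca.explained_variance_ratio_, 4))
```

The last ratio is essentially zero, and the third one is tiny. Keeping only the first two components loses almost nothing.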

Hopefully, by now I've convinced you of PCA's practical relevance for handling complex data. If so, keep reading, because we'll now get hands-on and learn how to use PCA in Python.

How to Apply Principal Component Analysis in Python

Libraries like Scikit-learn provide ready-made implementations of the PCA algorithm, so applying it to your data is relatively straightforward. The data must be numerical, already preprocessed, and free of missing values. The feature values should also be standardized to avoid issues like variance dominance, where features that vary on a larger scale overshadow the rest. This is particularly important because PCA is a deeply statistical method that relies on feature variances to determine the principal components: new features that are linear combinations of the original ones and orthogonal to each other.
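To make the idea of principal components concrete, here is a minimal NumPy sketch of what PCA computes. It centers the data, takes the singular value decomposition, and reads the components off the right singular vectors. The helper name `pca_from_scratch` and the toy data are hypothetical. Scikit-learn's own implementation adds solver selection and sign conventions on top of this idea, so the comparison below ignores component signs.

```python
import numpy as np
from sklearn.decomposition import PCA


def pca_from_scratch(X, k):
    """Hypothetical helper: project X onto its top-k principal components via SVD."""
    X_centered = X - X.mean(axis=0)  # PCA operates on mean-centered data
    # Rows of Vt are orthonormal directions, sorted by decreasing singular value
    _, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:k]
    # Sample variance of the data along each retained direction
    explained_variance = (S[:k] ** 2) / (X.shape[0] - 1)
    return X_centered @ components.T, components, explained_variance


rng = np.random.default_rng(42)
# Correlated toy data: 200 samples, 5 features
X_toy = rng.normal(size=(200, 5)) @ rng.normal(size=(5, 5))

Z, components, variances = pca_from_scratch(X_toy, k=2)

# Principal components are orthonormal, so components @ components.T is the identity
print(np.round(components @ components.T, 6))

# Compare with scikit-learn (each component may differ by a sign flip)
sk_pca = PCA(n_components=2).fit(X_toy)
print(np.allclose(np.abs(sk_pca.components_), np.abs(components), atol=1e-6))
print(np.allclose(sk_pca.explained_variance_, variances))
```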

We'll start our end-to-end PCA example in Python by importing the necessary libraries, loading the MNIST dataset of low-resolution images of handwritten digits, and putting it into a Pandas DataFrame:

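```python
import pandas as pd
from torchvision import datasets

# Download (if needed) the 60,000-image MNIST training split as PIL images
mnist_data = datasets.MNIST(root="./data", train=True, download=True)

data = []
for img, label in mnist_data:
    # Flatten the 28x28 image into a list of 784 gray-level values, row by row
    img_array = list(img.getdata())
    data.append([label] + img_array)

# One column for the label plus one per pixel: pixel_0 ... pixel_783
columns = ["label"] + [f"pixel_{i}" for i in range(28*28)]
mnist_data = pd.DataFrame(data, columns=columns)
```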

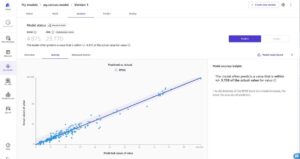

In the MNIST dataset, each instance is a 28×28 square image with a total of 784 pixels. Each pixel holds a numerical code for its gray level, ranging from 0 for black (no intensity) to 255 for white (maximum intensity). These data must first be rearranged into a one-dimensional array instead of the original two-dimensional 28×28 grid. This process, called flattening, takes place in the code above. The resulting DataFrame contains 785 variables: one for each of the 784 pixels, plus the label, an integer between 0 and 9 indicating the digit written in the image.

MNIST Dataset | Source: TensorFlow
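To see exactly what flattening does, here is a small NumPy sketch on a hypothetical random 28×28 array standing in for one MNIST image. Flattening unrolls the grid row by row. This is the same row-major order in which PIL's `Image.getdata()` returns pixels. The process is lossless, so reshaping restores the original grid.

```python
import numpy as np

# Hypothetical stand-in for one MNIST image: a 28x28 grid of gray levels in [0, 255]
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(28, 28), dtype=np.uint8)

# Row-major flattening: row 0 comes first, then row 1, and so on
flat = image.reshape(-1)
print(flat.shape)  # (784,)

# The pixel at (row r, column c) ends up at position r * 28 + c
r, c = 3, 17
print(flat[r * 28 + c] == image[r, c])  # True

# Reshaping back recovers the original 2D grid exactly
restored = flat.reshape(28, 28)
print(np.array_equal(restored, image))  # True
```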

In this example, we won't need the label, which is useful for other tasks like image classification. Still, we may want it for future analysis, so we'll store it in a separate variable, apart from the pixel features:

```python
X = mnist_data.drop('label', axis=1)
y = mnist_data.label
```

Although we won't apply a supervised learning technique after PCA, we may want to do so in future analyses. We'll therefore split the dataset into training (80%) and testing (20%) subsets. There's another reason for doing this, which I'll clarify a bit later.

```python
from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42)
```

Preprocessing the data to make it suitable for PCA is as important as applying the algorithm itself. In our example, preprocessing means standardizing the original pixel intensities so that each pixel feature has a mean of 0 and a standard deviation of 1. This way, all features contribute equally to the variance computations, and no feature dominates simply because it varies on a larger scale. To do this, we'll use the StandardScaler class from sklearn.preprocessing, which standardizes numerical features:

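```python
from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()
# Learn each pixel's mean and standard deviation from the training set, then apply them
X_train_scaled = scaler.fit_transform(X_train)
# Reuse the training statistics on the test set (no refitting)
X_test_scaled = scaler.transform(X_test)
```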

Notice that we used fit_transform on the training data but transform on the test data. This is the other reason we split the data earlier: it lets us discuss this point. In transformations like the standardization of numerical attributes, the training and test sets must be transformed consistently. The fit_transform method first computes the statistics that guide the transformation from the training set; this is the fitting step. Here, those statistics are each feature's mean and standard deviation. The method then applies the transformation. The transform method, in turn, applies the same transformation “learned” from the training data to the test set. This ensures that the model sees the test data on the same scale as the training data, which preserves consistency and avoids issues like data leakage or bias.
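The sketch below illustrates this with small hypothetical pixel-like matrices instead of MNIST. `fit_transform` stores the training mean and standard deviation in `mean_` and `scale_`, and `transform` computes z = (x - training mean) / training std. The last column is constant at 0, like an MNIST border pixel that is black in every image. StandardScaler gives such zero-variance features a scale of 1 instead of dividing by zero.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical pixel-like data; the last column is always 0 (a "dead" border pixel)
X_tr = np.column_stack([rng.integers(0, 256, size=(80, 3)), np.zeros(80)]).astype(float)
X_te = np.column_stack([rng.integers(0, 256, size=(20, 3)), np.zeros(20)]).astype(float)

scaler = StandardScaler()
X_tr_scaled = scaler.fit_transform(X_tr)  # learns mean_ and scale_ from X_tr, then applies them
X_te_scaled = scaler.transform(X_te)      # reuses the training statistics unchanged

# The learned mean comes from the training set only
print(np.allclose(scaler.mean_, X_tr.mean(axis=0)))
# Zero-variance features keep a scale of 1.0 (population std, ddof=0, elsewhere)
print(scaler.scale_)

# transform() is exactly (x - training mean) / training std
train_std = X_tr.std(axis=0)
train_std = np.where(train_std == 0, 1.0, train_std)
print(np.allclose(X_te_scaled, (X_te - X_tr.mean(axis=0)) / train_std))

# Only the training set is exactly centered; the test set's mean is close to, but not exactly, 0
print(X_tr_scaled.mean(axis=0).round(6), X_te_scaled.mean(axis=0).round(3))
```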

Now we can apply the PCA algorithm. In Scikit-learn's implementation, PCA takes an important argument: n_components. When it is set to a float between 0 and 1, as we'll do here, this hyperparameter specifies the proportion of the original data's variance that the retained principal components must explain. An integer would instead fix the exact number of components to keep. Values closer to 1 retain more components and capture more of the original variance. Values closer to 0 keep fewer components and reduce dimensionality more aggressively. For example, setting n_components to 0.95 keeps just enough components to explain more than 95% of the original data's variance. This may be a good way to reduce dimensionality while preserving most of the information. If this setting shrinks the dimensionality significantly, most of the variance is concentrated in a few directions. In other words, many of the original features are redundant (correlated with others) or carry little variance of their own.
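To see how a float n_components is turned into a number of components, the sketch below fits PCA with all components on hypothetical correlated toy data. It then finds the smallest k whose cumulative explained variance ratio exceeds 0.95 and compares it with what `PCA(n_components=0.95)` selects. It also shows that an integer value fixes the component count directly.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)
# Correlated toy data: 500 samples, 30 features
X_toy = rng.normal(size=(500, 30)) @ rng.normal(size=(30, 30))

# Fit with all components to inspect the full variance spectrum
full = PCA().fit(X_toy)
cumulative = np.cumsum(full.explained_variance_ratio_)

# Smallest k whose components explain more than 95% of the variance
k = int(np.argmax(cumulative > 0.95)) + 1

# A float n_components makes scikit-learn perform this selection itself
pca_95 = PCA(n_components=0.95).fit(X_toy)
print(k, pca_95.n_components_)
print(pca_95.explained_variance_ratio_.sum())

# An integer n_components fixes the number of components directly
pca_10 = PCA(n_components=10).fit(X_toy)
print(pca_10.n_components_)  # 10
```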

```python
from sklearn.decomposition import PCA

# Keep as many components as needed to explain more than 95% of the variance
pca = PCA(n_components = 0.95)
X_train_reduced = pca.fit_transform(X_train_scaled)

print(X_train_reduced.shape)
```

The shape attribute of the reduced dataset shows that PCA has drastically reduced the dimensionality of the data, from 784 features to just 325. It still retains 95% of the variance in the standardized training data.
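One way to see what "retaining 95% of the variance" means is to project the data back to the original feature space with `inverse_transform` and measure what was lost. The sketch below uses hypothetical toy data in place of the standardized MNIST matrix. For PCA, the fraction of the centered sum of squares lost in this round trip equals one minus the retained explained variance ratio.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(1)
# Hypothetical stand-in for the standardized training matrix
X_toy = rng.normal(size=(400, 25)) @ rng.normal(size=(25, 25))

pca = PCA(n_components=0.95)
X_reduced = pca.fit_transform(X_toy)
retained = pca.explained_variance_ratio_.sum()

# Map the reduced data back to the original 25-dimensional feature space
X_reconstructed = pca.inverse_transform(X_reduced)

# Fraction of the total (centered) sum of squares lost in the round trip
lost = np.sum((X_toy - X_reconstructed) ** 2) / np.sum((X_toy - X_toy.mean(axis=0)) ** 2)

print(f"{X_reduced.shape[1]} of {X_toy.shape[1]} components kept")
print(f"variance retained: {retained:.4f}, reconstruction loss: {lost:.4f}")
```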

Is this a good result? The answer largely depends on what you plan to do with the reduced data. For instance, if you want to classify digit images, you could build two models: one trained on the original high-dimensional dataset and one trained on the reduced dataset. To evaluate the second model, project the test set with pca.transform(X_test_scaled), for the same consistency reasons discussed above for the scaler. If the second classifier shows no significant loss of accuracy, that's good news. You get classification performance similar to that on the original data with a faster classifier, since dimensionality reduction usually makes training and inference more efficient.
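The two-model comparison could be sketched as follows. To keep it small and offline, this uses Scikit-learn's bundled 8×8 digits dataset (64 features) as a stand-in for MNIST, and logistic regression as an illustrative choice of classifier. Both are assumptions, not part of the tutorial above. Wrapping each model in a pipeline ensures the scaler and PCA are fitted on the training data only and simply applied to the test data. The timings depend on your machine; with only 64 features, the speedup may be small.

```python
import time

from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Scikit-learn's small bundled digits dataset, used here as a stand-in for MNIST
X_digits, y_digits = load_digits(return_X_y=True)
Xd_train, Xd_test, yd_train, yd_test = train_test_split(
    X_digits, y_digits, test_size=0.2, random_state=42)

models = {
    "original": make_pipeline(StandardScaler(), LogisticRegression(max_iter=2000)),
    "pca_95": make_pipeline(StandardScaler(), PCA(n_components=0.95),
                            LogisticRegression(max_iter=2000)),
}

results = {}
for name, model in models.items():
    start = time.perf_counter()
    model.fit(Xd_train, yd_train)  # every step is fitted on the training data only
    elapsed = time.perf_counter() - start
    # score() passes the test set through the already-fitted scaler (and PCA) before predicting
    results[name] = model.score(Xd_test, yd_test)
    print(f"{name}: accuracy={results[name]:.3f}, fit time={elapsed:.3f}s")

n_kept = models["pca_95"].named_steps["pca"].n_components_
print(f"PCA kept {n_kept} of {X_digits.shape[1]} features")
```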

Wrapping Up

This article walked through a step-by-step Python tutorial on applying PCA end to end, starting from a high-dimensional dataset of handwritten digit images.

Iván Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He trains and guides others in harnessing AI in the real world.