Mastering Prompt Engineering with Functional Testing: A Systematic Guide to Reliable LLM Outputs

Creating efficient prompts for large language models often starts as a simple task… but it doesn’t always stay that way. Initially, following basic best practices seems sufficient: adopt the persona of a specialist, write clear instructions, require a specific response format, and include a few relevant examples. But as requirements multiply, contradictions emerge, and even minor modifications can introduce unexpected failures. What was working perfectly in one prompt version suddenly breaks in another.

If you have ever felt trapped in an endless loop of trial and error, adjusting one rule only to see another one fail, you’re not alone! The reality is that traditional prompt optimization lacks a structured, more scientific approach that helps ensure reliability.

That’s where functional testing for prompt engineering comes in! This approach, inspired by the methodologies of experimental science, leverages automated input-output testing with multiple iterations and algorithmic scoring to turn prompt engineering into a measurable, data-driven process.

No more guesswork. No more tedious manual validation. Just precise and repeatable results that allow you to fine-tune prompts efficiently and confidently.

In this article, we’ll explore a systematic approach to mastering prompt engineering, one that helps make your LLM outputs efficient and reliable even for the most complex AI tasks.

Balancing precision and consistency in prompt optimization

Adding a large set of rules to a prompt can introduce partial contradictions between rules and lead to unexpected behaviors. This is especially true when following a pattern of starting with a general rule and following it with multiple exceptions or specific contradictory use cases. Specific rules and exceptions can conflict with the primary instruction and, potentially, with each other.

What might seem like a minor modification can unexpectedly impact other aspects of a prompt. This is true not only when adding a new rule but also when adding more detail to an existing rule, changing the order of the instructions, or even simply rewording them. These minor modifications can unintentionally change the way the model interprets and prioritizes the set of instructions.

The more details you add to a prompt, the greater the risk of unintended side effects. By trying to give too many details on every aspect of your task, you also increase the risk of getting unexpected or distorted results. It is, therefore, essential to find the right balance between clarity and a high level of specification to maximize the relevance and consistency of the response. At a certain point, fixing one requirement can break two others, creating the frustrating feeling of taking one step forward and two steps backward in the optimization process.

Testing each change manually quickly becomes overwhelming. This is especially true when one needs to optimize prompts that must follow numerous competing specifications in a complex AI task. The process cannot simply be about modifying the prompt for one requirement after the other, hoping the previous instruction remains unaffected. Nor can it be a system of selecting examples and checking them by hand. A better process with a more scientific approach should focus on ensuring repeatability and reliability in prompt optimization.

From laboratory to AI: Why testing LLM responses requires multiple iterations

Science teaches us to use replicates to ensure reproducibility and build confidence in an experiment’s results. I have been working in academic research in chemistry and biology for more than a decade. In those fields, experimental results can be influenced by a multitude of factors that can lead to significant variability. To ensure the reliability and reproducibility of experimental results, scientists commonly employ a method known as triplicates. This approach involves conducting the same experiment three times under identical conditions, so that experimental variations have only a minor influence on the result. Statistical analysis (mean and standard deviation) conducted on the results, mostly in biology, allows the author of an experiment to determine the consistency of the results and strengthens confidence in the findings.

Just like in biology and chemistry, this approach can be used with LLMs to achieve reliable responses. With LLMs, the generation of responses is non-deterministic, meaning that the same input can lead to different outputs due to the probabilistic nature of the models. This variability is challenging when evaluating the reliability and consistency of LLM outputs.

In the same way that biological/chemical experiments require triplicates to ensure reproducibility, testing LLMs requires multiple iterations to measure reproducibility. A single test per use case is, therefore, not sufficient because it does not represent the inherent variability in LLM responses. At least five iterations per use case allow for a better assessment. By analyzing the consistency of the responses across these iterations, one can better evaluate the reliability of the model and identify any potential issues or variation. This helps keep the output of the model under control.
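As a minimal sketch of what "analyzing consistency across iterations" can look like, the snippet below summarizes pass/fail outcomes for one use case with a mean and a standard deviation, using Python's standard `statistics` module. The function name and the outcome values are hypothetical and chosen only for illustration.

```python
from statistics import mean, pstdev


def summarize_iterations(outcomes: list[int]) -> tuple[float, float]:
    """Return (pass rate, population standard deviation) for 0/1 outcomes."""
    return mean(outcomes), pstdev(outcomes)


# Hypothetical outcomes of five iterations for one use case (1 = pass, 0 = fail).
outcomes = [1, 1, 0, 1, 1]
pass_rate, spread = summarize_iterations(outcomes)
print(f"pass rate: {pass_rate:.2f}, standard deviation: {spread:.2f}")
```

A pass rate close to 1 with a spread close to 0 suggests a stable response; a larger spread points to an inconsistent one.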

Multiply this across 10 to 15 different prompt requirements, and one can easily understand how, without a structured testing approach, we end up spending time in trial-and-error testing with no efficient way to assess quality.

A systematic approach: Functional testing for prompt optimization

To address these challenges, a structured evaluation methodology can be used to ease and accelerate the testing process and enhance the reliability of LLM outputs. This approach has several key components:

- Data fixtures: The core of the approach is the data fixtures, which are composed of predefined input-output pairs specifically created for prompt testing. These fixtures serve as controlled scenarios that represent the various requirements and edge cases the LLM must handle. By using a diverse set of fixtures, the performance of the prompt can be evaluated efficiently across different conditions.

- Automated test validation: This approach automates the validation of the requirements on a set of data fixtures by comparing the expected outputs defined in the fixtures with the LLM response. This automated comparison ensures consistency and reduces the potential for human error or bias in the evaluation process. It allows for quick identification of discrepancies, enabling fine and efficient prompt adjustments.

- Multiple iterations: To assess the inherent variability of the LLM responses, this method runs multiple iterations for each test case. This iterative approach mimics the triplicate method used in biological/chemical experiments, providing a more robust dataset for analysis. By observing the consistency of responses across iterations, we can better assess the stability and reliability of the prompt.

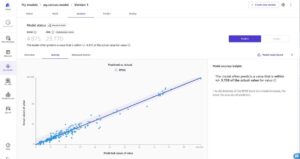

- Algorithmic scoring: The results of each test case are scored algorithmically, reducing the need for long and laborious “human” evaluation. This scoring system is designed to be objective and quantitative, providing clear metrics for assessing the performance of the prompt. By focusing on measurable outcomes, we can make data-driven decisions to optimize the prompt effectively.

Step 1: Defining test data fixtures

Selecting or creating suitable test data fixtures is the most challenging step of our systematic approach because it requires careful thought. A fixture is not just any input-output pair; it must be crafted meticulously to evaluate as accurately as possible the performance of the LLM for a specific requirement. This process requires:

1. A deep understanding of the task and the behavior of the model to make sure the selected examples effectively test the expected output while minimizing ambiguity or bias.

2. Foresight into how the evaluation will be conducted algorithmically during the test.

The quality of a fixture, therefore, depends not only on how well the example represents the requirement but also on ensuring it can be efficiently tested algorithmically.

A fixture consists of:

• Input example: This is the data that will be given to the LLM for processing. It should represent a typical or edge-case scenario that the LLM is expected to handle. The input should be designed to cover a wide range of possible variations that the LLM might have to deal with in production.

• Expected output: This is the expected result that the LLM should produce with the provided input example. It is used for comparison with the actual LLM response output during validation.
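One possible way to represent such a fixture in code is a small immutable record. This is only a sketch: the class name `Fixture`, its field names, and the extra `requirement` label are assumptions made for illustration, not something the method prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fixture:
    """One test case: the input sent to the LLM and the output we expect."""

    input_example: str
    expected_output: str
    requirement: str = ""  # hypothetical label naming the requirement under test


# Placeholder fixtures covering a typical case and an edge case.
fixtures = [
    Fixture("typical input text", "expected result", "nominal case"),
    Fixture("", "", "edge case: empty input"),
]
```

Keeping fixtures as plain data makes it easy to add new cases over time without touching the test logic.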

Step 2: Running automated tests

Once the test data fixtures are defined, the next step involves the execution of automated tests to systematically evaluate the performance of the LLM response on the selected use cases. As previously stated, this process makes sure that the prompt is thoroughly tested against various scenarios, providing a reliable evaluation of its efficiency.

Execution process

1. Multiple iterations: For each test use case, the same input is provided to the LLM multiple times. A simple for loop over nb_iter iterations with nb_iter = 5 and voilà!

2. Response comparison: After each iteration, the LLM response is compared to the expected output of the fixture. This comparison checks whether the LLM has correctly processed the input according to the specified requirements.

3. Scoring mechanism: Each comparison results in a score:

◦ Pass (1): The response matches the expected output, indicating that the LLM has correctly handled the input.

◦ Fail (0): The response does not match the expected output, signaling a discrepancy that needs to be fixed.

4. Final score calculation: The scores from all iterations are aggregated to calculate the overall final score. This score represents the proportion of successful responses out of the total number of iterations. A high score, of course, indicates high prompt performance and reliability.
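The four steps above can be sketched in a few lines. In this illustration, `llm` is any callable that takes a string and returns a string; the `make_fake_llm` helper is a hypothetical stand-in that replaces a real model call so the example runs on its own, and the exact-match comparison (after stripping whitespace) is just one assumed way to decide whether a response "matches".

```python
from typing import Callable


def score_fixture(llm: Callable[[str], str], input_example: str,
                  expected_output: str, nb_iter: int = 5) -> float:
    """Run the same input nb_iter times and return the proportion of passes."""
    scores = []
    for _ in range(nb_iter):
        response = llm(input_example)
        # Pass (1) if the response matches the expected output, else Fail (0).
        scores.append(1 if response.strip() == expected_output.strip() else 0)
    return sum(scores) / nb_iter


def make_fake_llm() -> Callable[[str], str]:
    """Hypothetical stand-in for a real LLM call: wrong on every fifth call."""
    calls = {"n": 0}

    def fake_llm(text: str) -> str:
        calls["n"] += 1
        return "wrong answer" if calls["n"] % 5 == 0 else text.upper()

    return fake_llm


final_score = score_fixture(make_fake_llm(), "hello", "HELLO")
print(f"final score: {final_score:.2f}")
```

With a real model, `llm` would wrap the provider's client call, and the comparison would be replaced by whatever validation function suits the requirement being tested.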

Example: Removing author signatures from an article

Let’s consider a simple scenario where an AI task is to remove author signatures from an article. To efficiently test this functionality, we need a set of fixtures that represent the various signature styles.

A dataset for this example could be:

| Example Input | Expected Output |
| --- | --- |
| A long article Jean Leblanc | The long article |
| A long article P. W. Hartig | The long article |
| A long article MCZ | The long article |

Validation process:

- Signature removal check: The validation function checks if the signature is absent from the rewritten text. This is easily done programmatically by searching for the signature needle in the haystack output text.

- Test failure criteria: If the signature is still in the output, the test fails. This indicates that the LLM did not correctly remove the signature and that further adjustments to the prompt are required. If it is not, the test is passed.
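The needle-in-haystack check described above can be sketched as a plain substring test. The function name is hypothetical, and the check assumed here is case-sensitive and exact; a real validation might need to normalize case or whitespace first.

```python
def signature_removed(output_text: str, signature: str) -> int:
    """Return 1 (pass) if the signature is absent from the output, else 0 (fail)."""
    return 0 if signature in output_text else 1


# Signatures taken from the example dataset.
signatures = ["Jean Leblanc", "P. W. Hartig", "MCZ"]

clean_output = "The long article"
leaky_output = "The long article MCZ"

for signature in signatures:
    print(signature, signature_removed(clean_output, signature))

print("MCZ", signature_removed(leaky_output, "MCZ"))
```

Note that this check only verifies the requirement under test (the signature is gone); it does not verify that the rest of the article was left intact, which would need its own validation.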

The test evaluation provides a final score that allows a data-driven assessment of the prompt efficiency. If it scores perfectly, there is no need for further optimization. However, in most cases, you will not get a perfect score because either the consistency of the LLM response to a case is low (for example, 3 out of 5 iterations scored positive) or there are edge cases that the model struggles with (0 out of 5 iterations).
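To make the two failure modes easier to spot in a report, each test case can be labeled from its number of passing iterations. The labels and the function name below are hypothetical, offered only as one way to read the scores.

```python
def diagnose(passes: int, nb_iter: int = 5) -> str:
    """Label a test case from the number of passing iterations."""
    if passes == nb_iter:
        return "stable"
    if passes == 0:
        return "failing edge case"
    return "inconsistent"


for passes in (5, 3, 0):
    print(f"{passes}/5 -> {diagnose(passes)}")
```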

The feedback clearly indicates that there is still room for further improvements and it guides you to reexamine your prompt for ambiguous phrasing, conflicting rules, or edge cases. By continuously monitoring your score alongside your prompt modifications, you can incrementally reduce side effects, achieve greater efficiency and consistency, and approach an optimal and reliable output.

A perfect score is, however, not always achievable with the selected model. Changing the model might just fix the situation. If it doesn’t, you know the limitations of your system and can take this fact into account in your workflow. With luck, this situation might just be solved in the near future with a simple model update.

Benefits of this method

- Reliability of the result: Running five to ten iterations provides reliable statistics on the performance of the prompt. A single test run may succeed once but not twice, whereas consistent success over multiple iterations indicates a robust and well-optimized prompt.

- Efficiency of the process: Unlike traditional scientific experiments that may take weeks or months to replicate, automated testing of LLMs can be carried out quickly. By setting a high number of iterations and waiting for a few minutes, we can obtain a high-quality, reproducible evaluation of the prompt efficiency.

- Data-driven optimization: The score obtained from these tests provides a data-driven assessment of the prompt’s ability to meet requirements, allowing targeted improvements.

- Side-by-side evaluation: Structured testing allows for an easy assessment of prompt versions. By comparing the test results, one can identify the most effective set of parameters for the instructions (phrasing, order of instructions) to achieve the desired results.

- Quick iterative improvement: The ability to quickly test and iterate prompts is a real advantage for carefully constructing the prompt while ensuring that the previously validated requirements remain satisfied as the prompt increases in complexity and length.

By adopting this automated testing approach, we can systematically evaluate and enhance prompt performance, ensuring consistent and reliable outputs with the desired requirements. This method saves time and provides a robust analytical tool for continuous prompt optimization.
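One way to check that previously validated requirements still hold after a prompt change is to compare per-requirement scores between two prompt versions. The sketch below assumes scores are stored as a mapping from requirement name to final score; the requirement names and values are invented for illustration.

```python
def find_regressions(previous: dict[str, float],
                     current: dict[str, float]) -> list[str]:
    """Return the requirements whose score dropped in the current prompt version."""
    return sorted(
        requirement
        for requirement, old_score in previous.items()
        # A requirement missing from the current run is treated as a score of 0.
        if current.get(requirement, 0.0) < old_score
    )


# Hypothetical final scores per requirement for two versions of a prompt.
previous_scores = {"removes_signature": 1.0, "keeps_title": 1.0, "plain_text_output": 0.8}
current_scores = {"removes_signature": 1.0, "keeps_title": 0.6, "plain_text_output": 1.0}

regressions = find_regressions(previous_scores, current_scores)
print(regressions)
```

Here the new version improves one requirement but degrades another, which is exactly the kind of side effect that is hard to notice by hand.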

Systematic prompt testing: Beyond prompt optimization

Implementing a systematic prompt testing approach offers more advantages than just the initial prompt optimization. This methodology is valuable for other aspects of AI tasks:

1. Model comparison:

◦ Provider evaluation: This approach allows the efficient comparison of different LLM providers, such as ChatGPT, Claude, Gemini, Mistral, etc., on the same tasks. It becomes easy to evaluate which model performs best for your specific needs.

◦ Model version: State-of-the-art model versions are not always necessary when a prompt is well-optimized, even for complex AI tasks. A lightweight, faster version can provide the same results with a faster response. This approach allows a side-by-side comparison of the different versions of a model, such as Gemini 1.5 Flash vs. 1.5 Pro vs. 2.0 Flash or ChatGPT 3.5 vs. 4o mini vs. 4o, and allows the data-driven selection of the model version.

2. Version upgrades:

◦ Compatibility verification: When a new model version is released, systematic prompt testing helps validate whether the upgrade maintains or improves the prompt performance. This is crucial for ensuring that updates do not unintentionally break the functionality.

◦ Seamless transitions: By identifying key requirements and testing them, this method can facilitate better transitions to new model versions, allowing quick adjustment when necessary in order to maintain high-quality outputs.

3. Cost optimization:

◦ Performance-to-cost ratio: Systematic prompt testing helps in choosing the most cost-effective model based on the performance-to-cost ratio. We can efficiently identify the best trade-off between performance and operational costs to get the best return on LLM costs.
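As a rough sketch of a performance-to-cost comparison, the snippet below ranks models by test score per unit of cost, after discarding models that fall below a minimum acceptable score. The model names, scores, and per-call costs are made up for illustration and do not describe any real provider; the minimum-score filter is an added assumption, since a raw ratio alone would favor very cheap models that fail the task.

```python
def rank_by_performance_to_cost(results: dict[str, tuple[float, float]],
                                min_score: float = 0.0) -> list[str]:
    """Rank models by score per unit cost, keeping only those at or above min_score."""
    eligible = {
        name: (score, cost)
        for name, (score, cost) in results.items()
        if score >= min_score
    }
    return sorted(
        eligible,
        key=lambda name: eligible[name][0] / eligible[name][1],
        reverse=True,
    )


# Made-up (final score, cost per call) pairs, for illustration only.
results = {
    "large_model": (1.00, 0.010),
    "medium_model": (0.95, 0.002),
    "small_model": (0.60, 0.001),
}

ranking = rank_by_performance_to_cost(results, min_score=0.9)
print(ranking)
```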

Overcoming the challenges

The biggest challenge of this approach is the preparation of the set of test data fixtures, but the effort invested in this process will pay off significantly as time passes. Well-prepared fixtures save considerable debugging time and enhance model efficiency and reliability by providing a robust foundation for evaluating the LLM response. The initial investment is quickly returned by improved efficiency and effectiveness in LLM development and deployment.

Quick pros and cons

Key advantages:

- Continuous improvement: The ability to add more requirements over time while ensuring existing functionality stays intact is a significant advantage. This allows for the evolution of the AI task in response to new requirements, ensuring that the system remains up-to-date and efficient.

- Better maintenance: This approach enables the easy validation of prompt performance with LLM updates. This is crucial for maintaining high standards of quality and reliability, as updates can sometimes introduce unintended changes in behavior.

- More flexibility: With a set of quality control tests, switching LLM providers becomes more straightforward. This flexibility allows us to adapt to changes in the market or technological advancements, ensuring we can always use the best tool for the job.

- Cost optimization: Data-driven evaluations enable better decisions on performance-to-cost ratio. By understanding the performance gains of different models, we can choose the most cost-effective solution that meets the needs.

- Time savings: Systematic evaluations provide quick feedback, reducing the need for manual testing. This efficiency makes it possible to iterate quickly on prompt improvement and optimization, accelerating the development process.

Challenges:

- Initial time investment: Creating test fixtures and evaluation functions can require a significant investment of time.

- Defining measurable validation criteria: Not all AI tasks have clear pass/fail conditions. Defining measurable criteria for validation can sometimes be challenging, especially for tasks that involve subjective or nuanced outputs. This requires careful consideration and may involve a difficult selection of the evaluation metrics.

- Cost associated with multiple tests: Multiple test use cases combined with five to ten iterations can generate a high number of LLM requests for a single test automation. But if the cost of a single LLM call is negligible, as it is in most cases for text input/output calls, the overall cost of a test remains minimal.
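The number of requests, and therefore the cost of one full test run, follows directly from the number of test cases and iterations. The sketch below works through that arithmetic; the per-call cost is a placeholder value, not a real price.

```python
def estimate_test_cost(nb_cases: int, nb_iter: int,
                       cost_per_call: float) -> tuple[int, float]:
    """Return (number of LLM requests, estimated total cost) for one test run."""
    nb_requests = nb_cases * nb_iter
    return nb_requests, nb_requests * cost_per_call


# 15 test cases run 10 times each, with a placeholder cost per call.
nb_requests, total_cost = estimate_test_cost(nb_cases=15, nb_iter=10, cost_per_call=0.001)
print(f"{nb_requests} requests, estimated cost: {total_cost:.2f}")
```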

Conclusion: When should you implement this approach?

Implementing this systematic testing approach is, of course, not always necessary, especially for simple tasks. However, for complex AI workflows in which precision and reliability are critical, this approach becomes highly valuable by offering a systematic way to assess and optimize prompt performance, preventing endless cycles of trial and error.

By incorporating functional testing principles into prompt engineering, we transform a traditionally subjective and fragile process into one that is measurable, scalable, and robust. Not only does it enhance the reliability of LLM outputs, it also helps achieve continuous improvement and efficient resource allocation.

The decision to implement systematic prompt testing should be based on the complexity of your project. For scenarios demanding high precision and consistency, investing the time to set up this methodology can significantly improve outcomes and speed up the development process. However, for simpler tasks, a more classical, lightweight approach may be sufficient. The key is to balance the need for rigor with practical considerations, ensuring that your testing strategy aligns with your goals and constraints.

Thanks for reading!