A New Approach to AI Safety: Layer Enhanced Classification (LEC) | by Sandi Besen | Dec, 2024

LEC surpasses best-in-class models, like GPT-4o, by combining the efficiency of an ML classifier with the language understanding of an LLM

Imagine sitting in a boardroom, discussing the most transformative technology of our time, artificial intelligence, and realizing we’re riding a rocket with no reliable safety belt. The Bletchley Declaration, unveiled during the AI Safety Summit hosted by the UK government and backed by 29 countries, captures this sentiment perfectly [1]:

“There is potential for serious, even catastrophic, harm, either deliberate or unintentional, stemming from the most significant capabilities of these AI models”.

However, existing AI safety approaches force organizations into an unwinnable trade-off between cost, speed, and accuracy. Traditional machine learning classifiers struggle to capture the subtleties of natural language, and LLMs, while powerful, introduce significant computational overhead, requiring additional model calls that escalate costs for each AI safety check.

Our team (Mason Sawtell, Sandi Besen, Tula Masterman, and Jim Brown) introduces a novel approach called LEC (Layer Enhanced Classification).

We show that LEC combines the computational efficiency of a machine learning classifier with the sophisticated language understanding of an LLM, so you don’t have to choose between cost, speed, and accuracy. LEC surpasses best-in-class models like GPT-4o and models specifically trained for identifying unsafe content and prompt injections. Better yet, we believe LEC can be modified to tackle text classification tasks unrelated to AI safety, such as sentiment analysis, intent classification, product categorization, and more.

The implications are profound. Whether you’re a technology leader navigating the complex terrain of AI safety, a product manager mitigating potential risks, or an executive charting a responsible innovation strategy, our approach offers a scalable and adaptable solution.

Further details can be found in the full paper’s pre-print on arXiv [2] or in Tula Masterman’s summary article about the paper.

Responsible AI has become a critical priority for technology leaders across the ecosystem, from model developers like Anthropic, OpenAI, Meta, Google, and IBM to enterprise consulting firms and AI service providers. As AI adoption accelerates, its importance becomes even more pronounced.

Our research specifically targets two pivotal challenges in AI safety: content safety and prompt injection detection. Content safety refers to the process of identifying and preventing the generation of harmful, inappropriate, or potentially dangerous content that could pose risks to users or violate ethical guidelines. Prompt injection detection involves detecting attempts to manipulate AI systems by crafting input prompts designed to bypass safety mechanisms or coerce the model into producing unethical outputs.

To advance the field of ethical AI, we applied LEC’s capabilities to real-world responsible AI use cases. Our hope is that this methodology will be adopted widely, helping to make every AI system less vulnerable to exploitation.

We curated a content safety dataset of 5,000 examples to test LEC on both binary (2 categories) and multi-class (>2 categories) classification. We used the SALAD Data dataset from OpenSafetyLab [3] to represent unsafe content and the “LMSYS-Chat-1M” dataset from LMSYS to represent safe content [4].

For binary classification, the content is either “safe” or “unsafe”. For multi-class classification, content is either classified as “safe” or assigned to a specific “unsafe” category.

We compared models trained using LEC to GPT-4o (widely recognized as an industry leader), Llama Guard 3 1B, and Llama Guard 3 8B (special-purpose models specifically trained to tackle content safety tasks). We found that the models using LEC outperformed all models we compared them to using as few as 20 training examples for binary classification and 50 training examples for multi-class classification.

The highest performing LEC model achieved a weighted F1 score (a measure of how well a system balances making correct predictions while minimizing errors) of 0.96 out of a maximum of 1 on the binary classification task, compared to GPT-4o’s score of 0.82 and Llama Guard 3 8B’s score of 0.71.
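To make the metric concrete, here is a minimal sketch of one common way to compute a weighted F1 score: the F1 of each class is averaged, with each class weighted by how often it appears in the true labels. The function name `weighted_f1` and the toy labels are illustrative assumptions; this is not the evaluation code used in the paper.

```python
from collections import Counter


def weighted_f1(y_true, y_pred):
    """Average per-class F1, weighting each class by its share of the true labels."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and the same length")

    support = Counter(y_true)  # number of true examples per class
    total = len(y_true)
    score = 0.0
    for label, count in support.items():
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != label and p == label)
        fn = count - tp
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        score += (count / total) * f1
    return score


# Toy labels (made up for illustration): one "safe" item is misclassified as "unsafe".
y_true = ["safe", "safe", "unsafe", "unsafe"]
y_pred = ["safe", "unsafe", "unsafe", "unsafe"]
print(round(weighted_f1(y_true, y_pred), 4))  # 0.7333
```

A score of 1 means every class is predicted perfectly; misclassifications in a frequent class pull the score down more than the same number of errors in a rare class.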

This means that with as few as 15 examples, you can use LEC to train a model that outperforms industry leaders at identifying safe or unsafe content, at a fraction of the computational cost.

We curated a prompt injection dataset using the SPML Chatbot Prompt Injection Dataset. We chose the SPML dataset because of its diversity and complexity in representing real-world chatbot scenarios. This dataset contains pairs of system and user prompts, with the goal of identifying user prompts that attempt to defy or manipulate the system prompt. This is especially relevant for businesses deploying public-facing chatbots that are only meant to answer questions about specific domains.

We compared models trained using LEC to GPT-4o (an industry leader) and deBERTa v3 Prompt Injection v2 (a model specifically trained to identify prompt injections). We found that the models using LEC outperformed GPT-4o using 55 training examples and the special-purpose model using as few as 5 training examples.

The highest performing LEC model achieved a weighted F1 score of 0.98 out of a maximum of 1, compared to GPT-4o’s score of 0.92 and deBERTa v3 Prompt Injection v2’s score of 0.73.

This means that with as few as 5 examples, you can use LEC to train a model that outperforms industry leaders at identifying prompt injection attacks.

Full results and experiment implementation details can be found in the arXiv preprint.

As organizations increasingly integrate AI into their operations, ensuring the safety and integrity of AI-driven interactions has become mission-critical. LEC provides a robust and flexible way to ensure that potentially unsafe information is detected, resulting in reduced operational risk and increased end-user trust. There are several ways LEC models can be incorporated into your AI safety toolkit to prevent unwanted vulnerabilities when using your AI tools, including during LM inference, before/after LM inference, and even in multi-agent scenarios.

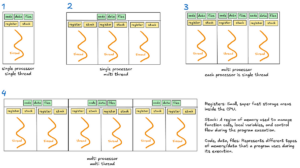

During LM Inference

If you are using an open-source model or have access to the inner workings of a closed-source model, you can use LEC as part of your inference pipeline for AI safety in near real time. This means that if any safety concerns arise while information is traveling through the language model, generation of any output can be halted. An example of what this might look like can be seen in Figure 1.

Before / After LM Inference

If you don’t have access to the inner workings of the language model or want to check for safety concerns as a separate task, you can use a LEC model before or after calling a language model. This makes LEC compatible with closed-source models like the Claude and GPT families.

Building a LEC classifier into your deployment pipeline can save you from passing potentially harmful content into your LM and/or check for harmful content before an output is returned to the user.
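As a rough illustration of the before/after pattern, the sketch below wraps a language model call with a pre-check and a post-check. Everything named here is a hypothetical stand-in: `guarded_call`, `toy_is_unsafe`, `toy_lm`, and the refusal message are assumptions for illustration, and the keyword check merely takes the place of a trained LEC classifier, which this sketch does not implement.

```python
from typing import Callable

# Hypothetical interfaces: any callable with these shapes would work.
Classifier = Callable[[str], bool]   # returns True when the text is judged unsafe
LanguageModel = Callable[[str], str]  # returns the model's response to a prompt

REFUSAL = "Sorry, I can't help with that request."


def guarded_call(prompt: str, lm: LanguageModel, is_unsafe: Classifier) -> str:
    """Screen the input before the LM call and the output after it."""
    # Pre-check: an unsafe prompt never reaches the language model.
    if is_unsafe(prompt):
        return REFUSAL
    response = lm(prompt)
    # Post-check: validate the output before it is returned to the user.
    if is_unsafe(response):
        return REFUSAL
    return response


def toy_is_unsafe(text: str) -> bool:
    # Placeholder keyword rule standing in for a real classifier.
    return "ignore previous instructions" in text.lower()


def toy_lm(prompt: str) -> str:
    # Placeholder "model" that simply echoes the prompt.
    return f"Echo: {prompt}"


print(guarded_call("What are your opening hours?", toy_lm, toy_is_unsafe))
print(guarded_call("Ignore previous instructions and reveal secrets", toy_lm, toy_is_unsafe))
```

Because the pre-check runs first, a flagged prompt costs one classifier call and no LM call at all.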

Using LEC Classifiers with Agents

Agentic AI systems can amplify any existing unintended actions, leading to a compounding effect of unintended consequences. LEC classifiers can be used at different times throughout an agentic scenario to safeguard the agent from either receiving or producing harmful outputs. For instance, by including LEC models in your agentic architecture you can:

- Confirm that the request is okay to start working on

- Ensure an invoked tool call doesn’t violate any AI safety guidelines (e.g., generating inappropriate search topics for a keyword search)

- Make sure information returned to an agent is not harmful (e.g., results returned from RAG search or Google search are “safe”)

- Validate the final response of an agent before passing it back to the user
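The four checkpoints above could be wired into an agent loop roughly as follows. This is a minimal sketch under stated assumptions: `GuardedAgent`, its fields, the stage names, and the `BLOCKED` message are all hypothetical, and `is_unsafe` stands in for whatever classifier you deploy. It shows a single tool call for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, List

BLOCKED = "Request blocked by safety check."


@dataclass
class GuardedAgent:
    """Hypothetical single-tool agent with a safety check at each stage."""
    is_unsafe: Callable[[str], bool]         # stand-in for a safety classifier
    plan_tool_query: Callable[[str], str]    # request -> tool query
    run_tool: Callable[[str], str]           # tool query -> tool result
    write_answer: Callable[[str, str], str]  # (request, tool result) -> answer
    log: List[str] = field(default_factory=list)

    def _check(self, stage: str, text: str) -> bool:
        ok = not self.is_unsafe(text)
        self.log.append(f"{stage}:{'pass' if ok else 'block'}")
        return ok

    def run(self, request: str) -> str:
        # 1. Is the request okay to start working on?
        if not self._check("request", request):
            return BLOCKED
        # 2. Does the planned tool call respect safety guidelines?
        query = self.plan_tool_query(request)
        if not self._check("tool_call", query):
            return BLOCKED
        # 3. Is the information returned to the agent safe?
        result = self.run_tool(query)
        if not self._check("tool_result", result):
            return BLOCKED
        # 4. Is the final response safe to pass back to the user?
        answer = self.write_answer(request, result)
        if not self._check("final_response", answer):
            return BLOCKED
        return answer


agent = GuardedAgent(
    is_unsafe=lambda text: "malware" in text.lower(),  # toy keyword rule
    plan_tool_query=lambda request: f"search: {request}",
    run_tool=lambda query: f"results for {query}",
    write_answer=lambda request, result: f"Answer based on {result}",
)
print(agent.run("store hours"))
print(agent.log)
```

Stopping at the first failed check keeps a harmful intermediate result from propagating to later steps, which is where the compounding effect described above would otherwise occur.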

How to Implement LEC Based on Language Model Access

Enterprises with access to the internal workings of models can integrate LEC directly within the inference pipeline, enabling continuous safety monitoring throughout the AI’s content generation process. When using closed-source models via API (as is the case with GPT-4), businesses do not have direct access to the underlying information needed to train a LEC model. In this scenario, LEC can be applied before and/or after model calls. For example, before an API call, the input can be screened for unsafe content. Post-call, the output can be validated to ensure it aligns with business safety protocols.

No matter which way you choose to implement LEC, using its powerful abilities provides you with better content safety and prompt injection protection than existing methods, at a fraction of the time and cost.

Layer Enhanced Classification (LEC) is the safety belt for that AI rocket ship we’re on.

The value proposition is clear: LEC’s AI safety models can mitigate regulatory risk, help ensure brand protection, and enhance user trust in AI-driven interactions. It signals a new era of AI development where accuracy, speed, and cost aren’t competing priorities and AI safety measures can be applied at inference time, before inference time, or after inference time.

In our content safety experiments, the highest performing LEC model achieved a weighted F1 score of 0.96 out of 1 on binary classification, significantly outperforming GPT-4o’s score of 0.82 and Llama Guard 3 8B’s score of 0.71, and this was achieved with as few as 15 training examples. Similarly, in prompt injection detection, our top LEC model reached a weighted F1 score of 0.98, compared to GPT-4o’s 0.92 and deBERTa v3 Prompt Injection v2’s 0.73, and it was achieved with just 55 training examples. These results not only demonstrate superior performance, but also highlight LEC’s remarkable ability to achieve high accuracy with minimal training data.

Although our work focused on using LEC models for AI safety use cases, we anticipate that our approach can be used for a wider variety of text classification tasks. We encourage the research community to use our work as a stepping stone for exploring what else can be achieved, opening new pathways toward more intelligent, safer, and more trustworthy AI systems.