Accelerating AI/ML development at BMW Group with Amazon SageMaker Studio

This post is co-written with Marc Neumann, Amor Steinberg, and Marinus Krommenhoek from BMW Group.

The BMW Group, headquartered in Munich, Germany, is driven by 149,000 employees worldwide and manufactures in over 30 production and assembly facilities across 15 countries. Today, the BMW Group is the world’s leading manufacturer of premium automobiles and motorcycles, and provider of premium financial and mobility services. The BMW Group sets trends in production technology and sustainability as an innovation leader with an intelligent material mix, a technological shift towards digitalization, and resource-efficient production.

In an increasingly digital and rapidly changing world, BMW Group’s business and product development strategies rely heavily on data-driven decision-making. With that, the need for data scientists and machine learning (ML) engineers has grown significantly. These skilled professionals are tasked with building and deploying models that improve the quality and efficiency of BMW’s business processes and enable informed leadership decisions.

Data scientists and ML engineers require capable tooling and sufficient compute for their work. Therefore, BMW established a centralized ML/deep learning infrastructure on premises several years ago and continuously upgraded it. To pave the way for the growth of AI, BMW Group needed to make a leap regarding scalability and elasticity while reducing operational overhead, software licensing, and hardware management.

In this post, we talk about how BMW Group, in collaboration with AWS Professional Services, built its Jupyter Managed (JuMa) service to address these challenges. JuMa is a service of BMW Group’s AI platform for its data analysts, ML engineers, and data scientists that provides a user-friendly workspace with an integrated development environment (IDE). It is powered by Amazon SageMaker Studio and provides JupyterLab for Python and Posit Workbench for R. This offering enables BMW ML engineers to perform code-centric data analytics and ML, increases developer productivity by providing self-service capability and infrastructure automation, and tightly integrates with BMW’s centralized IT tooling landscape.

JuMa is now available to all data scientists, ML engineers, and data analysts at BMW Group. The service streamlines ML development and production workflows (MLOps) across BMW by providing a cost-efficient and scalable development environment that facilitates seamless collaboration between data science and engineering teams worldwide. This results in faster experimentation and shorter idea validation cycles. Moreover, the JuMa infrastructure, which is based on AWS serverless and managed services, helps reduce operational overhead for DevOps teams and allows them to focus on enabling use cases and accelerating AI innovation at BMW Group.

Challenges of growing an on-premises AI platform

Prior to introducing the JuMa service, BMW teams worldwide were using two on-premises platforms that provided JupyterHub and RStudio environments. These platforms were too limited regarding CPU, GPU, and memory to allow the scalability of AI at BMW Group. Scaling these platforms by managing more on-premises hardware, more software licenses, and support fees would require significant up-front investments and high maintenance effort. In addition, only limited self-service capabilities were available, requiring high operational effort from the DevOps teams. More importantly, the use of these platforms was misaligned with BMW Group’s IT cloud-first strategy. For example, teams using these platforms lacked an easy path to migrate their AI/ML prototypes to an industrialized solution running on AWS. In contrast, the data science and analytics teams already using AWS directly for experimentation also had to take care of building and operating their AWS infrastructure while ensuring compliance with BMW Group’s internal policies, local laws, and regulations. This included a range of configuration and governance activities, from ordering AWS accounts, limiting internet access, and using allow-listed packages to keeping their Docker images up to date.

Overview of solution

JuMa is a fully managed, multi-tenant, security-hardened AI platform service built on AWS with SageMaker Studio at the core. By relying on AWS serverless and managed services as the main building blocks of the infrastructure, the JuMa DevOps team doesn’t need to worry about patching servers, upgrading storage, or managing any other infrastructure components. The service handles all these processes automatically, providing a powerful technical platform that is generally up to date and ready to use.

JuMa users can effortlessly order a workspace via a self-service portal to create a secure and isolated development and experimentation environment for their teams. After a JuMa workspace is provisioned, the users can launch JupyterLab or Posit Workbench environments in SageMaker Studio with just a few clicks and start development immediately, using the tools and frameworks they are most familiar with. JuMa is tightly integrated with a range of BMW Central IT services, including identity and access management, roles and rights management, BMW Cloud Data Hub (BMW’s data lake on AWS), and on-premises databases. The latter helps AI/ML teams seamlessly access required data, given they are authorized to do so, without the need to build data pipelines. Furthermore, the notebooks can be integrated into the corporate Git repositories to collaborate using version control.

The solution abstracts away all technical complexities associated with AWS account management, configuration, and customization for AI/ML teams, allowing them to fully focus on AI innovation. The platform ensures that the workspace configuration meets BMW’s security and compliance requirements out of the box.

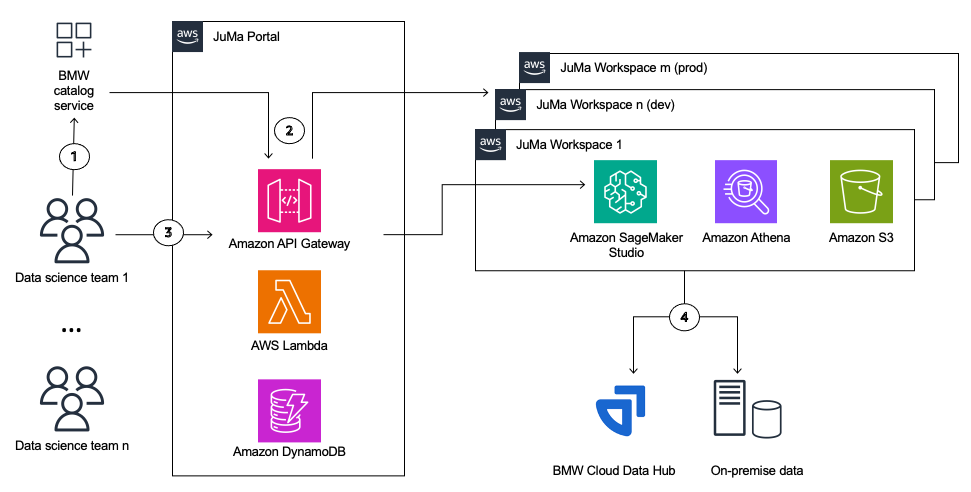

The next diagram describes the high-level context view of the structure.

User journey

BMW AI/ML team members can order their JuMa workspace using BMW’s standard catalog service. After approval by the line manager, the ordered JuMa workspace is provisioned by the platform fully automatically. The workspace provisioning workflow consists of the following steps (as numbered in the architecture diagram).

- A data scientist team orders a new JuMa workspace in BMW’s Catalog. JuMa automatically provisions a new AWS account for the workspace. This ensures full isolation between the workspaces, following the federated model account structure mentioned in SageMaker Studio Administration Best Practices.

- JuMa configures a workspace (which is a SageMaker domain) that only allows the predefined Amazon SageMaker features required for experimentation and development, specific custom kernels, and lifecycle configurations. It also sets up the required subnets and security groups that ensure the notebooks run in a secure environment.

- After the workspaces are provisioned, the authorized users log in to the JuMa portal and access the SageMaker Studio IDE within their workspace using a SageMaker pre-signed URL. Users can choose between opening a SageMaker Studio private space or a shared space. Shared spaces encourage collaboration between different members of a team, who can work in parallel on the same notebooks, whereas private spaces provide a development environment for solitary workloads.

- Using the BMW data portal, users can request access to on-premises databases or data stored in BMW’s Cloud Data Hub, making it available in their workspace for development and experimentation, from data preparation and analysis to model training and validation.
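The pre-signed URL step above can be sketched in Python. This is a minimal illustration, not JuMa’s actual portal code: the helper names, the default lifetimes, and the way the client is passed in are assumptions. The keyword names match the SageMaker `CreatePresignedDomainUrl` API as exposed by boto3 (`create_presigned_domain_url`), and the client is injected so the request-building logic can be exercised without AWS credentials.

```python
from typing import Any, Dict, Optional


def build_presigned_url_request(
    domain_id: str,
    user_profile_name: str,
    space_name: Optional[str] = None,
    url_ttl_seconds: int = 60,
    session_ttl_seconds: int = 8 * 3600,
) -> Dict[str, Any]:
    """Assemble keyword arguments for SageMaker's CreatePresignedDomainUrl.

    The default lifetimes are illustrative assumptions, not JuMa's settings.
    """
    request: Dict[str, Any] = {
        "DomainId": domain_id,
        "UserProfileName": user_profile_name,
        # How long the URL itself stays valid before it must be opened.
        "ExpiresInSeconds": url_ttl_seconds,
        # How long the Studio session lasts once the URL has been opened.
        "SessionExpirationDurationInSeconds": session_ttl_seconds,
    }
    if space_name is not None:
        # Open a specific space instead of the user's default landing page.
        request["SpaceName"] = space_name
    return request


def get_studio_url(sagemaker_client: Any, **kwargs: Any) -> str:
    """Request a pre-signed Studio URL using an injected SageMaker client.

    In practice the client would be created with boto3.client("sagemaker").
    """
    request = build_presigned_url_request(**kwargs)
    response = sagemaker_client.create_presigned_domain_url(**request)
    return response["AuthorizedUrl"]
```

A portal backend would typically call `get_studio_url` only after it has authorized the user for the workspace, and then redirect the browser to the returned URL.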

After an AI model is developed and validated in JuMa, AI teams can use the MLOps service of the BMW AI platform to deploy it to production quickly and effortlessly. This service provides users with a production-grade ML infrastructure and pipelines on AWS using SageMaker, which can be set up in minutes with just a few clicks. Users simply need to host their model on the provisioned infrastructure and customize the pipeline to meet their specific use case needs. In this way, the AI platform covers the entire AI lifecycle at BMW Group.

JuMa features

Following best practices for architecting on AWS, the JuMa service was designed and implemented according to the AWS Well-Architected Framework. The architectural decisions for each Well-Architected pillar are described in detail in the following sections.

Security and compliance

To ensure full isolation between the tenants, each workspace receives its own AWS account, where the authorized users can jointly collaborate on analytics tasks as well as on developing and experimenting with AI/ML models. The JuMa portal itself enforces isolation at runtime using policy-based isolation with AWS Identity and Access Management (IAM) and the JuMa user’s context. For more information about this strategy, refer to Run-time, policy-based isolation with IAM.

Data scientists can only access their domain through the BMW network via pre-signed URLs generated by the portal. Direct internet access is disabled within their domain. Their SageMaker domain privileges are built using Amazon SageMaker Role Manager personas to ensure least privilege access to the AWS services needed for development, such as SageMaker, Amazon Athena, Amazon Simple Storage Service (Amazon S3), and AWS Glue. This role implements ML guardrails (such as those described in Governance and control), including enforcing that ML training runs either in Amazon Virtual Private Cloud (Amazon VPC) or without internet access, and allowing only the use of JuMa’s custom vetted and up-to-date SageMaker images.
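As an illustration of the training guardrail described above, the following Python sketch builds an IAM policy document that denies `sagemaker:CreateTrainingJob` when the job has neither VPC subnets nor network isolation. It is an assumption about how such a guardrail could be written, not the policy JuMa uses; the function name and statement ID are hypothetical. It relies on the `sagemaker:VpcSubnets` and `sagemaker:NetworkIsolation` IAM condition keys and should be validated against the current SageMaker condition key documentation before use.

```python
import json
from typing import Any, Dict


def build_training_guardrail_policy() -> Dict[str, Any]:
    """Return an IAM policy that blocks training jobs with open internet access.

    Conditions inside one statement are ANDed, so the Deny applies only when
    no VPC subnets are set AND network isolation is off (or not specified).
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyTrainingWithoutVpcOrIsolation",  # hypothetical ID
                "Effect": "Deny",
                "Action": ["sagemaker:CreateTrainingJob"],
                "Resource": "*",
                "Condition": {
                    # True when the request carries no VPC subnet configuration.
                    "Null": {"sagemaker:VpcSubnets": "true"},
                    # True when isolation is disabled or the key is absent.
                    "BoolIfExists": {"sagemaker:NetworkIsolation": "false"},
                },
            }
        ],
    }


if __name__ == "__main__":
    # Print the document so it can be reviewed or attached to a role.
    print(json.dumps(build_training_guardrail_policy(), indent=2))
```

Because this is an explicit Deny, it takes precedence over any Allow granted by the persona-based role, which is why guardrails of this kind are usually expressed as deny statements.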

Because JuMa is designed for development, experimentation, and ad-hoc analysis, it implements retention policies to remove data after 30 days. To access data whenever needed and store it for the long term, JuMa seamlessly integrates with the BMW Cloud Data Hub and BMW on-premises databases.
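The post does not say where workspace data lives or how the 30-day retention is enforced. Assuming, purely for illustration, that experiment data sits in an Amazon S3 bucket, one common way to express such a policy is an S3 lifecycle rule. The sketch below builds the `LifecycleConfiguration` structure accepted by boto3’s `put_bucket_lifecycle_configuration`; the function name, rule ID, and prefix handling are hypothetical.

```python
from typing import Any, Dict


def build_retention_lifecycle(days: int = 30, prefix: str = "") -> Dict[str, Any]:
    """Build an S3 lifecycle configuration that expires objects after `days`.

    An empty prefix applies the rule to every object in the bucket.
    """
    if days < 1:
        raise ValueError("retention period must be at least one day")
    return {
        "Rules": [
            {
                "ID": f"expire-after-{days}-days",  # hypothetical rule name
                "Status": "Enabled",
                "Filter": {"Prefix": prefix},
                # S3 removes current object versions this many days after creation.
                "Expiration": {"Days": days},
            }
        ]
    }
```

With a boto3 S3 client, the result would be passed as `LifecycleConfiguration=build_retention_lifecycle()` together with the bucket name.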

Finally, JuMa supports multiple Regions to comply with specific local legal requirements which, for example, require data to be processed locally to enable BMW’s data sovereignty.

Operational excellence

Both the JuMa platform backend and the workspaces are implemented with AWS serverless and managed services. Using these services helps minimize the effort the BMW platform team spends maintaining and operating the end-to-end solution, striving to be a no-ops service. Both the workspace and the portal are monitored using Amazon CloudWatch logs, metrics, and alarms to check key performance indicators (KPIs) and proactively notify the platform team of any issues. Additionally, the AWS X-Ray distributed tracing system is used to trace requests across multiple components and annotate CloudWatch logs with workspace-relevant context.

All changes to the JuMa infrastructure are managed and implemented through automation using infrastructure as code (IaC). This helps reduce manual effort and human error, increase consistency, and ensure reproducible and version-controlled changes across both the JuMa platform backend and the workspaces. Specifically, all workspaces are provisioned and updated through an onboarding process built on top of AWS Step Functions, AWS CodeBuild, and Terraform. Therefore, no manual configuration is required to onboard new workspaces to the JuMa platform.

Cost optimization

By using AWS serverless services, JuMa ensures on-demand scalability, pre-approved instance sizes, and a pay-as-you-go model for the resources used during development and experimentation, according to the AI/ML teams’ needs. To further optimize costs, the JuMa platform monitors and identifies idle resources within SageMaker Studio and shuts them down automatically to prevent expenses for unused resources.
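The post does not describe how idle resources are detected. As a rough sketch, assuming each Studio app exposes a last-activity timestamp (the SageMaker `DescribeApp` API returns a `LastUserActivityTimestamp` field), the selection logic could look like the following. The `StudioApp` type, the one-hour threshold, and the helper names are hypothetical; the keys returned by `delete_app_request` match the parameters of boto3’s `delete_app`.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class StudioApp:
    """Minimal view of a running SageMaker Studio app (hypothetical type)."""
    domain_id: str
    user_profile_name: str
    app_type: str
    app_name: str
    last_activity: datetime


def find_idle_apps(
    apps: Iterable[StudioApp],
    now: datetime,
    idle_after: timedelta = timedelta(hours=1),  # assumed threshold
) -> List[StudioApp]:
    """Return the apps whose last activity is at least `idle_after` ago."""
    return [app for app in apps if now - app.last_activity >= idle_after]


def delete_app_request(app: StudioApp) -> Dict[str, str]:
    """Build keyword arguments for SageMaker's DeleteApp call."""
    return {
        "DomainId": app.domain_id,
        "UserProfileName": app.user_profile_name,
        "AppType": app.app_type,
        "AppName": app.app_name,
    }


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    apps = [
        StudioApp("d-1", "alice", "KernelGateway", "idle-app", now - timedelta(hours=3)),
        StudioApp("d-1", "bob", "KernelGateway", "busy-app", now - timedelta(minutes=5)),
    ]
    for app in find_idle_apps(apps, now):
        # A scheduler would pass this to sagemaker_client.delete_app(**request).
        print(delete_app_request(app))
```

Keeping the selection logic separate from the AWS calls makes the threshold easy to test and tune per workspace.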

Sustainability

JuMa replaces BMW’s two on-premises platforms for analytics and deep learning workloads, which consume a considerable amount of electricity and produce CO2 emissions even when not in use. By migrating AI/ML workloads from on premises to AWS, BMW will slash its environmental impact by decommissioning the on-premises platforms.

Furthermore, the mechanisms implemented in JuMa for automatic shutdown of idle resources, data retention policies, and workspace usage reports to workspace owners help further minimize the environmental footprint of running AI/ML workloads on AWS.

Performance efficiency

By using SageMaker Studio, BMW teams benefit from easy adoption of the latest SageMaker features that can help accelerate their experimentation. For example, they can use Amazon SageMaker JumpStart capabilities to work with the latest pre-trained, open source models. Additionally, it helps reduce the effort AI/ML teams spend moving from experimentation to solution industrialization, because the development environment provides the same AWS core services, restricted to development capabilities.

Reliability

SageMaker Studio domains are deployed in VPC-only mode to manage internet access and only allow access to the intended AWS services. The network is deployed in two Availability Zones to protect against a single point of failure, achieving greater resiliency and availability of the platform for its users.
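JuMa provisions its workspaces with Terraform, so the following Python sketch is only an illustration of the VPC-only setting, not the actual provisioning code. It builds the keyword arguments for SageMaker’s `CreateDomain` API (`create_domain` in boto3) with `AppNetworkAccessType` set to `VpcOnly`. The function name, the `IAM` auth mode, and the two-subnet check are assumptions; the check only counts subnets and does not verify that they lie in different Availability Zones.

```python
from typing import Any, Dict, Sequence


def build_domain_request(
    domain_name: str,
    vpc_id: str,
    subnet_ids: Sequence[str],
    execution_role_arn: str,
) -> Dict[str, Any]:
    """Assemble CreateDomain arguments for a Studio domain without direct internet."""
    if len(set(subnet_ids)) < 2:
        # Assumed guard: expect one subnet per Availability Zone, two zones total.
        raise ValueError("provide at least two distinct subnets")
    return {
        "DomainName": domain_name,
        "AuthMode": "IAM",  # assumption; the post does not state the auth mode
        "DefaultUserSettings": {"ExecutionRole": execution_role_arn},
        "VpcId": vpc_id,
        "SubnetIds": list(subnet_ids),
        # Route all Studio traffic through the VPC instead of the public internet.
        "AppNetworkAccessType": "VpcOnly",
    }
```

In VPC-only mode, access to AWS services such as Amazon S3 or the SageMaker API usually requires VPC endpoints, which is one way a platform can limit traffic to the intended services.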

Changes to JuMa workspaces are automatically deployed and tested in development and integration environments, using IaC and CI/CD pipelines, before customer environments are upgraded.

Finally, data stored in Amazon Elastic File System (Amazon EFS) for SageMaker Studio domains is kept after volumes are deleted for backup purposes.

Conclusion

In this post, we described how BMW Group, in collaboration with AWS ProServe, developed a fully managed AI platform service on AWS using SageMaker Studio and other AWS serverless and managed services.

With JuMa, BMW’s AI/ML teams are empowered to unlock new business value by accelerating experimentation as well as time-to-market for disruptive AI solutions. Furthermore, by migrating from its on-premises platform, BMW can reduce the overall operational effort and costs while also increasing sustainability and the overall security posture.

To learn more about running your AI/ML experimentation and development workloads on AWS, visit Amazon SageMaker Studio.

About the Authors

Marc Neumann is the head of the central AI Platform at BMW Group. He is responsible for developing and implementing strategies to use AI technology for business value creation across the BMW Group. His primary goal is to ensure that the use of AI is sustainable and scalable, meaning it can be consistently applied across the organization to drive long-term growth and innovation. Through his leadership, Neumann aims to position the BMW Group as a leader in AI-driven innovation and value creation in the automotive industry and beyond.


Amor Steinberg is a Machine Learning Engineer at BMW Group and the service lead of Jupyter Managed, a new service that aims to provide a code-centric analytics and machine learning workbench for engineers and data scientists at the BMW Group. His past experience as a DevOps Engineer at financial institutions gave him a unique understanding of the challenges that banks in the European Union face and of how to keep the balance between striving for technological innovation, complying with laws and regulations, and maximizing security for customers.


Marinus Krommenhoek is a Senior Cloud Solution Architect and a Software Developer at BMW Group. He is passionate about modernizing the IT landscape with state-of-the-art services that add high value and are easy to maintain and operate. Marinus is a big advocate of microservices, serverless architectures, and agile working. He has a record of working with distributed teams across the globe within large enterprises.


Nicolas Jacob Baer is a Principal Cloud Application Architect at AWS ProServe with a strong focus on data engineering and machine learning, based in Switzerland. He works closely with enterprise customers to design data platforms and build advanced analytics and ML use cases.


Joaquin Rinaudo is a Principal Security Architect at AWS ProServe. He is passionate about building solutions that help developers improve their software quality. Prior to AWS, he worked across multiple domains in the security industry, from mobile security to cloud and compliance-related topics. In his free time, Joaquin enjoys spending time with family and reading science-fiction novels.


Shukhrat Khodjaev is a Senior Global Engagement Manager at AWS ProServe. He specializes in delivering impactful big data and AI/ML solutions that enable AWS customers to maximize their business value through data utilization.
