Researchers at the University of Tokyo Introduce a New Technique to Protect Sensitive Artificial Intelligence (AI)-Based Applications from Attackers

In recent years, rapid progress in Artificial Intelligence (AI) has led to its widespread application in domains such as computer vision, audio recognition, and more. This surge in usage has transformed industries, with neural networks at the forefront, demonstrating remarkable success and often reaching levels of performance that rival human capabilities.

However, amid these strides in AI capabilities, a significant concern looms: the vulnerability of neural networks to adversarial inputs. This critical problem in deep learning arises from the networks' susceptibility to being misled by subtle alterations in input data. Even minute, imperceptible changes can lead a neural network to make blatantly incorrect predictions, often with unwarranted confidence. This raises serious concerns about the reliability of neural networks in safety-critical applications such as autonomous vehicles and medical diagnostics.
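
To see why tiny changes can flip a prediction, here is a toy sketch in Python. It assumes a hypothetical linear classifier with 10,000 input features and small weights, and applies a fast-gradient-sign-style step (a standard illustration swapped in here, not the method from the paper): every feature moves by only `eps = 0.02`, about 4% of its value, yet because the small per-feature effects all push in the same direction, the score shifts by `eps * sum(|w|) = 2.0`.

```python
def score(w, b, x):
    """Linear classifier score: positive means class 1, negative means class 0."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def sign(v):
    """Return 1, 0 or -1 depending on the sign of v."""
    return (v > 0) - (v < 0)

def sign_gradient_perturb(w, x, eps):
    """Move every feature by eps in the direction that raises the score.

    For a linear score the gradient with respect to x is simply w,
    so stepping along sign(w) is the fast gradient sign idea on a toy model.
    """
    return [xi + eps * sign(wi) for wi, xi in zip(w, x)]

# Hypothetical model and input, chosen only for illustration.
n = 10_000
w = [0.01 if i % 2 == 0 else -0.01 for i in range(n)]
b = -1.0
x = [0.5] * n

x_adv = sign_gradient_perturb(w, x, eps=0.02)
print(score(w, b, x))      # about -1.0, so class 0
print(score(w, b, x_adv))  # about +1.0, so class 1
print(max(abs(a - c) for a, c in zip(x_adv, x)))  # about 0.02 per feature
```

Deep networks are not linear, but the same accumulation of many small, aligned changes is one common explanation for why high-dimensional models are easy to fool.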

To counter this vulnerability, researchers have been searching for solutions. One notable strategy involves introducing controlled noise into the initial layers of neural networks. This approach aims to strengthen the network's resilience to minor variations in input data, discouraging it from fixating on inconsequential details. By compelling the network to learn more general and robust features, noise injection shows promise in reducing its susceptibility to adversarial attacks and unexpected input variations. This development holds great potential for making neural networks more reliable and trustworthy in real-world scenarios.
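
A minimal sketch of input-level noise injection in Python, assuming a toy two-feature logistic regression and synthetic clustered data (both hypothetical, not taken from the paper). During training, every input receives fresh Gaussian noise with standard deviation `sigma`, so the model cannot rely on the exact value of any single feature; accuracy is then measured on the clean inputs.

```python
import math
import random

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def make_clusters(n_per_class, seed=1):
    """Hypothetical 2-D data: label 0 near (-1, -1), label 1 near (1, 1)."""
    rng = random.Random(seed)
    data = []
    for label, center in ((0, -1.0), (1, 1.0)):
        for _ in range(n_per_class):
            point = [center + rng.gauss(0.0, 0.3), center + rng.gauss(0.0, 0.3)]
            data.append((point, label))
    return data

def train_with_input_noise(data, sigma, epochs=50, lr=0.1, seed=0):
    """SGD logistic regression that sees a freshly noised copy of each input."""
    rng = random.Random(seed)
    samples = list(data)  # copy so shuffling leaves the caller's list intact
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        rng.shuffle(samples)
        for x, y in samples:
            # Noise is injected at the input, before the model sees the data.
            x_noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
            p = sigmoid(w[0] * x_noisy[0] + w[1] * x_noisy[1] + b)
            err = p - y  # gradient of the log loss with respect to the logit
            w = [wi - lr * err * xi for wi, xi in zip(w, x_noisy)]
            b -= lr * err
    return w, b

def accuracy(data, w, b):
    """Fraction of clean (noise-free) points classified correctly."""
    correct = sum(
        int((w[0] * x[0] + w[1] * x[1] + b > 0) == (y == 1)) for x, y in data
    )
    return correct / len(data)

data = make_clusters(50)
w, b = train_with_input_noise(data, sigma=0.3)
print(accuracy(data, w, b))
```

In deep networks the same idea is applied to the input or the first layers; the toy model only shows where the noise enters the training loop.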

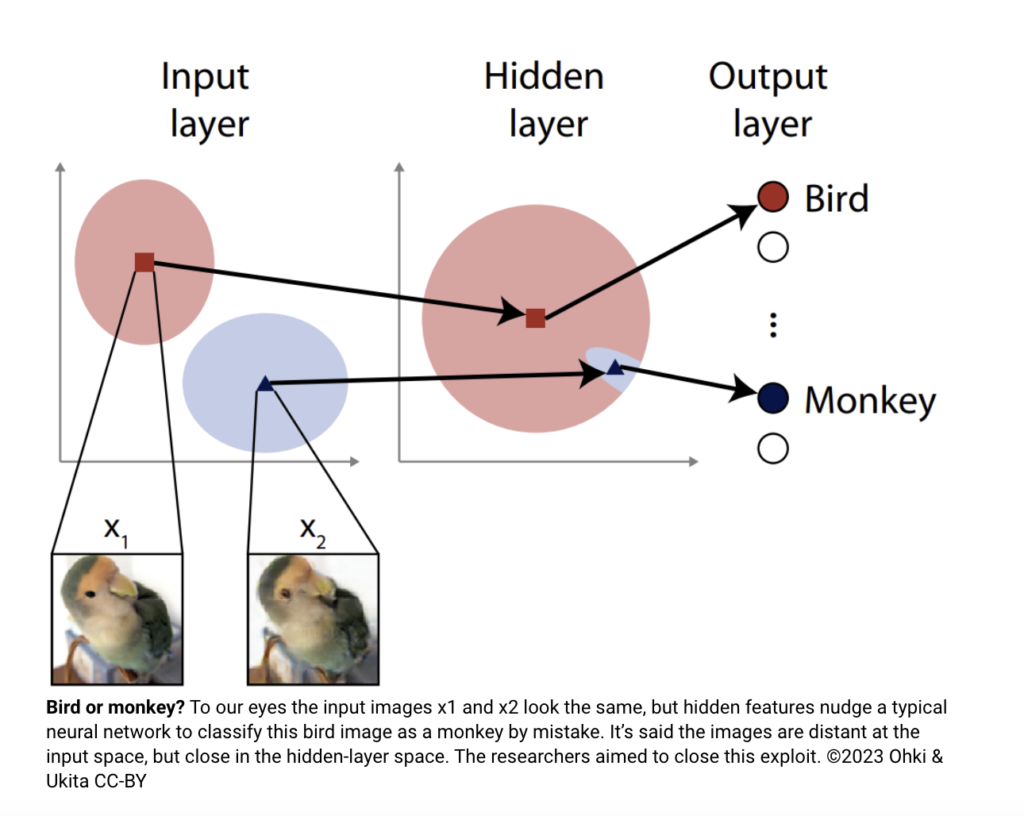

Yet a new challenge arises as attackers focus on the inner layers of neural networks. Instead of subtle alterations, these attacks exploit intimate knowledge of the network's inner workings. They supply inputs that deviate significantly from what the network expects but still yield the attacker's desired outcome through the introduction of specific artifacts.
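
To make the idea of an inner-layer attack concrete, here is a toy sketch in Python. It assumes a single hypothetical linear hidden layer `h = W x` (the paper attacks deep image classifiers) and uses gradient descent to push a source input until its hidden features match those of a target input. Because this layer maps three input values to two features, many different inputs share the same internal representation, so the attack lands on an input that differs clearly from the target yet looks identical to the layer. This illustrates feature matching in general and is not the researchers' attack.

```python
def features(W, x):
    """Hidden features of a toy linear layer: h = W x."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in W]

def feature_matching_attack(W, x_source, h_target, lr=0.1, steps=500):
    """Gradient descent on 0.5 * ||W x - h_target||^2, starting from x_source."""
    x = list(x_source)
    for _ in range(steps):
        residual = [hi - ti for hi, ti in zip(features(W, x), h_target)]
        # The gradient of the loss with respect to x is W^T times the residual.
        grad = [sum(W[i][j] * residual[i] for i in range(len(W)))
                for j in range(len(x))]
        x = [xj - lr * gj for xj, gj in zip(x, grad)]
    return x

# Hypothetical 2x3 layer: three input values, two hidden features.
W = [[1.0, 0.0, 1.0],
     [0.0, 1.0, 1.0]]
x_target = [1.0, 0.0, 0.0]         # input whose representation the attacker wants
x_source = [0.0, 0.0, 1.0]         # input the attacker starts from
h_target = features(W, x_target)   # [1.0, 0.0]

x_adv = feature_matching_attack(W, x_source, h_target)
print([round(v, 4) for v in x_adv])  # [0.3333, -0.6667, 0.6667]
print(features(W, x_adv))            # matches h_target up to rounding
```

The resulting input is far from `x_target`, yet the hidden layer cannot tell the two apart, which is the kind of weakness inner-layer attacks exploit.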

Defending against these inner-layer attacks has proven more difficult. A major hurdle was the prevailing belief that introducing random noise into the inner layers would impair the network's performance under normal conditions. However, a paper from researchers at the University of Tokyo has challenged this assumption.

The research team devised an adversarial attack targeting the inner, hidden layers, causing input images to be misclassified. This successful attack then served as a test bed for their technique: inserting random noise into the network's inner layers. Remarkably, this seemingly simple modification made the neural network resilient against the attack. The result suggests that injecting noise into inner layers could strengthen the adaptability and defensive capabilities of future neural networks.
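
The sketch below shows where feature-space noise enters a forward pass, using a hypothetical two-layer network with hand-picked weights. Gaussian noise with standard deviation `hidden_sigma` is added to the hidden activations while the input itself is left untouched; placing it after the ReLU and using a Gaussian are assumptions, since the article does not specify either. Setting `hidden_sigma` to zero recovers the ordinary deterministic network. This is not the researchers' implementation.

```python
import random

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in W]

def noisy_forward(x, W1, b1, W2, b2, hidden_sigma, rng):
    """Two-layer ReLU network with Gaussian noise on the hidden features."""
    pre = [zi + bi for zi, bi in zip(matvec(W1, x), b1)]
    h = [max(0.0, v) for v in pre]  # ReLU hidden layer
    if hidden_sigma > 0:
        # Feature-space noise: perturb the internal representation, not the input.
        h = [hi + rng.gauss(0.0, hidden_sigma) for hi in h]
    return [zi + bi for zi, bi in zip(matvec(W2, h), b2)]

def predict(logits):
    """Index of the largest logit."""
    return max(range(len(logits)), key=lambda k: logits[k])

# Hypothetical weights for a 2-input, 2-hidden-unit, 2-class network.
W1, b1 = [[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0]
W2, b2 = [[1.0, -1.0], [-1.0, 1.0]], [0.0, 0.0]
rng = random.Random(0)
x = [2.0, 0.0]

clean = noisy_forward(x, W1, b1, W2, b2, 0.0, rng)
print(clean, predict(clean))  # [2.0, -2.0] 0

noisy = [noisy_forward(x, W1, b1, W2, b2, 0.1, rng) for _ in range(100)]
print(sum(predict(z) == 0 for z in noisy))  # 100 (every noisy prediction is still class 0)
```

Each call draws new noise, so the exact hidden representation changes from run to run. One intuition is that this makes it harder for an attack to hit a precise internal pattern, while a clean input that sits far from the decision boundary keeps its prediction.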

While this approach is promising, it is important to acknowledge that it addresses a specific type of attack. The researchers caution that future attackers may devise new approaches to circumvent the feature-space noise considered in their research. The contest between attack and defense in neural networks is a never-ending arms race, requiring a constant cycle of innovation and improvement to safeguard the systems we rely on daily.

As reliance on artificial intelligence for critical applications grows, the robustness of neural networks against unexpected data and deliberate attacks becomes increasingly important. With ongoing innovation in this field, there is hope for even more robust and resilient neural networks in the months and years ahead.

Check out the Paper and Reference Article. All credit for this research goes to the researchers on this project.

Niharika is a Technical Consulting Intern at Marktechpost. She is a third-year undergraduate, currently pursuing her B.Tech at the Indian Institute of Technology (IIT), Kharagpur. She is a highly enthusiastic individual with a keen interest in machine learning, data science, and AI, and an avid reader of the latest developments in these fields.