SageMaker Distribution is now available on Amazon SageMaker Studio

SageMaker Distribution is a pre-built Docker image containing many popular packages for machine learning (ML), data science, and data visualization. This includes deep learning frameworks like PyTorch, TensorFlow, and Keras; popular Python packages like NumPy, scikit-learn, and pandas; and IDEs like JupyterLab. In addition, SageMaker Distribution supports conda, micromamba, and pip as Python package managers.

In May 2023, we launched SageMaker Distribution as an open-source project at JupyterCon. This launch let you use SageMaker Distribution to run experiments in your local environments. We are now natively providing that image in Amazon SageMaker Studio so that you gain the high performance, compute, and security benefits of running your experiments on Amazon SageMaker.

Compared to the earlier open-source launch, you have the following additional capabilities:

- The open-source image is now available as a first-party image in SageMaker Studio. You can now simply choose the open-source SageMaker Distribution from the list when choosing an image and kernel for your notebooks, without having to create a custom image.

- The SageMaker Python SDK package is now built in with the image.

In this post, we show the features and advantages of using the SageMaker Distribution image.

Use SageMaker Distribution in SageMaker Studio

If you have access to an existing Studio domain, you can launch SageMaker Studio. To create a Studio domain, follow the instructions in Onboard to Amazon SageMaker Domain.

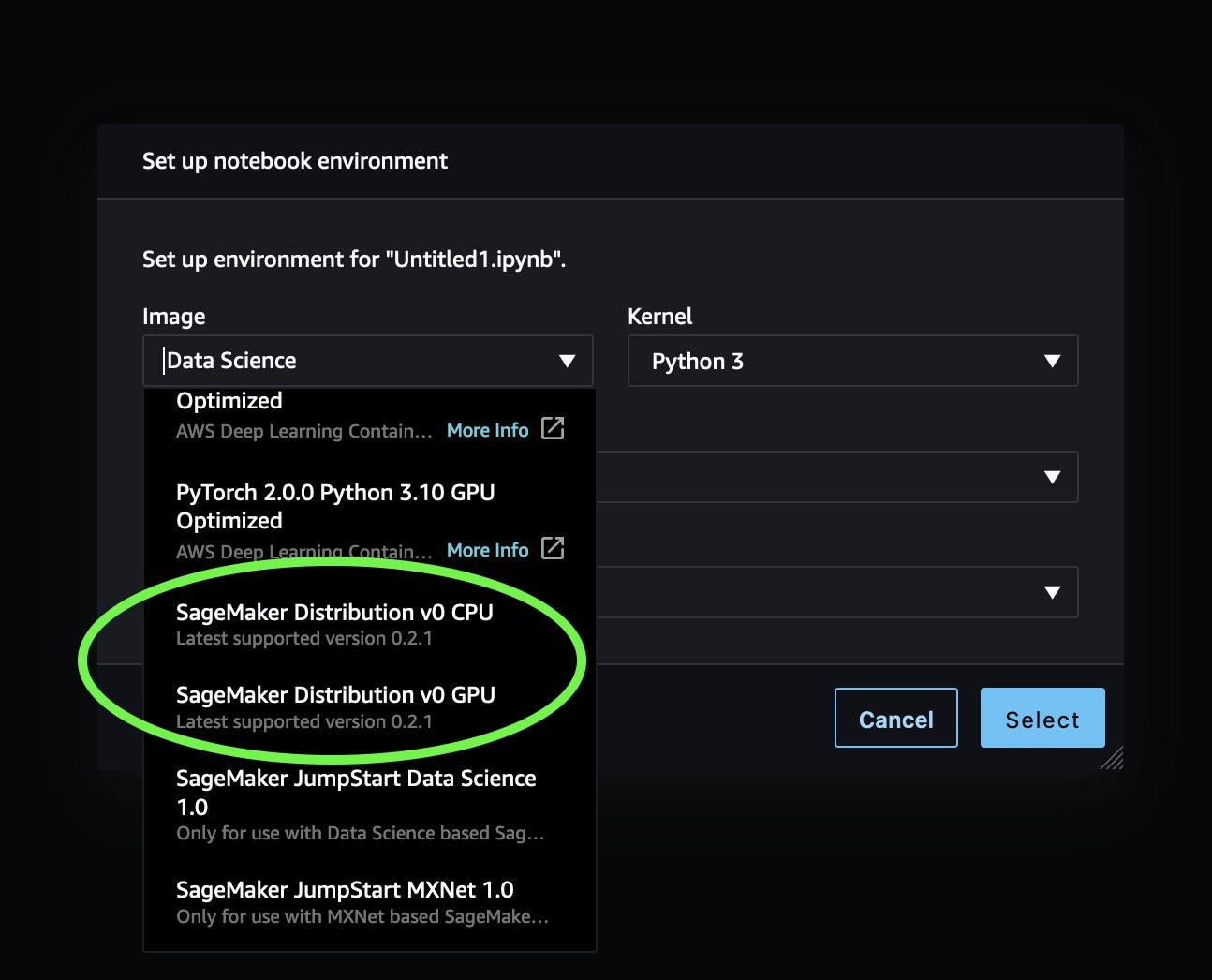

- In the SageMaker Studio UI, choose File from the menu bar, choose New, and choose Notebook.

- When prompted for the image and instance, choose the SageMaker Distribution v0 CPU or SageMaker Distribution v0 GPU image.

- Choose your kernel, then choose Select.

You can now start running your commands without needing to install common ML packages and frameworks! You can also run notebooks that use supported frameworks such as PyTorch and TensorFlow from the SageMaker examples repository, without having to switch the active kernels.
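
As a quick check that the packages are present in the kernel, the following is a minimal sketch using only the Python standard library. The helper name `installed_versions` is illustrative and not part of SageMaker Distribution, and the list of distribution names is an assumption based on the packages named at the top of this post (PyTorch is published as `torch`).

```python
from importlib import metadata


def installed_versions(packages):
    """Map each distribution name to its installed version, or None if absent."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    # Distribution names assumed from the packages listed in this post.
    names = ["torch", "tensorflow", "keras", "numpy", "scikit-learn", "pandas"]
    for name, version in installed_versions(names).items():
        print(f"{name}: {version or 'not installed'}")
```

Run in a notebook cell, this prints one line per package, which makes it easy to compare the CPU and GPU images or different image versions.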

Run code remotely using SageMaker Distribution

In the public beta announcement, we discussed graduating notebooks from local compute environments to SageMaker Studio, and also operationalizing the notebook using notebook jobs.

Additionally, you can directly run your local notebook code as a SageMaker training job by simply adding a @remote decorator to your function.

Let’s try an example. Add the following code to your Studio notebook running on the SageMaker Distribution image:
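
A minimal sketch of what such a cell might look like, assuming the `remote` function from the SageMaker Python SDK's `sagemaker.remote_function` module and its `instance_type` argument. The `divide` function and the `divide_remotely` helper are illustrative names. The SDK import is deferred so that the cell still loads where AWS credentials are not configured; applying `remote(...)` to the function this way is equivalent to decorating it with `@remote(instance_type="ml.m5.xlarge")`.

```python
def divide(x, y):
    """Ordinary Python function; called directly, it runs in the notebook kernel."""
    return x / y


def divide_remotely(x, y, instance_type="ml.m5.xlarge"):
    """Run divide() as a SageMaker training job (needs AWS credentials and a role)."""
    # Imported lazily so this cell still loads where the SDK is not configured.
    from sagemaker.remote_function import remote

    # Equivalent to decorating divide with @remote(instance_type=...).
    remote_divide = remote(instance_type=instance_type)(divide)
    return remote_divide(x, y)
```

Calling `divide_remotely(2, 3.0)` from Studio would submit the job and wait for the result, whereas `divide(2, 3.0)` runs locally in the kernel.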

When you run the cell, the function runs as a remote SageMaker training job on an ml.m5.xlarge instance, and the SDK automatically picks up the SageMaker Distribution image as the training image in Amazon Elastic Container Registry (Amazon ECR). For deep learning workloads, you can also run your script on multiple parallel instances.

Reproduce Conda environments from SageMaker Distribution elsewhere

SageMaker Distribution is available as a public Docker image. However, for data scientists more familiar with Conda environments than Docker, the GitHub repository also provides the environment files for each image build so you can build Conda environments for both CPU and GPU versions.

The build artifacts for each version are stored under the sagemaker-distribution/build_artifacts directory. To create the same environment as any of the available SageMaker Distribution versions, run the following commands, replacing the --file parameter with the right environment files:
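
As a sketch of how that command could be assembled, the following Python helper builds a `conda create --name ... --file ...` invocation. The helper name, the environment name `conda-sagemaker-distribution`, and the placeholder path are assumptions for illustration; substitute the actual environment file for the version and variant (CPU or GPU) you want from the build_artifacts directory.

```python
import shlex


def conda_create_command(env_name, env_file):
    """Build the `conda create` argument list for a given environment file."""
    return ["conda", "create", "--name", env_name, "--file", env_file]


if __name__ == "__main__":
    # Hypothetical placeholder path: point this at a real environment file
    # under sagemaker-distribution/build_artifacts before running the command.
    env_file = "sagemaker-distribution/build_artifacts/<path-to-env-file>"
    command = conda_create_command("conda-sagemaker-distribution", env_file)
    print(shlex.join(command))
    # To execute instead of printing: subprocess.run(command, check=True)
```

After the environment is created, `conda activate conda-sagemaker-distribution` switches to it.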

Customize the open-source SageMaker Distribution image

The open-source SageMaker Distribution image has the most commonly used packages for data science and ML. However, data scientists might require access to additional packages, and enterprise customers might have proprietary packages that provide additional capabilities for their users. In such cases, there are multiple options to have a runtime environment with all required packages. In order of increasing complexity, they are listed as follows:

- You can install packages directly on the notebook. We recommend Conda and micromamba, but pip also works.

- Data scientists familiar with Conda for package management can reproduce the Conda environment from SageMaker Distribution elsewhere and install and manage additional packages in that environment going forward.

- If administrators want a repeatable and controlled runtime environment for their users, they can extend SageMaker Distribution’s Docker images and maintain their own image. See Bring your own SageMaker image for detailed instructions to create and use a custom image in Studio.

Clean up

If you experimented with SageMaker Studio, shut down all Studio apps to avoid paying for unused compute usage. See Shut down and Update Studio Apps for instructions.

Conclusion

Today, we announced the launch of the open-source SageMaker Distribution image inside SageMaker Studio. We showed you how to use the image in SageMaker Studio as one of the available first-party images, how to operationalize your scripts using the SageMaker Python SDK @remote decorator, how to reproduce the Conda environments from SageMaker Distribution outside Studio, and how to customize the image. We encourage you to try out SageMaker Distribution and share your feedback through GitHub!

About the authors

Durga Sury is an ML Solutions Architect in the Amazon SageMaker Service SA team. She is passionate about making machine learning accessible to everyone. In her 4 years at AWS, she has helped set up AI/ML platforms for enterprise customers. When she isn’t working, she loves bike rides, thriller novels, and hiking with her 5-year-old husky.

Ketan Vijayvargiya is a Senior Software Development Engineer in Amazon Web Services (AWS). His focus areas are machine learning, distributed systems, and open source. Outside work, he likes to spend his time self-hosting and enjoying nature.