Mapping pictures to words for zero-shot composed image retrieval – Google Research Blog

Image retrieval plays a crucial role in search engines. Typically, users rely on either an image or text as a query to retrieve a desired target image. However, text-based retrieval has its limitations, as describing the target image accurately using words can be challenging. For instance, when searching for a fashion item, users may want an item whose specific attribute, e.g., the color of a logo or the logo itself, is different from what they find on a website. Yet searching for the item in an existing search engine is not trivial, since precisely describing the fashion item in text can be difficult. To address this, composed image retrieval (CIR) retrieves images based on a query that combines both an image and a text sample that provides instructions on how to modify the image to fit the intended retrieval target. Thus, CIR allows precise retrieval of the target image by combining image and text.

However, CIR methods require large amounts of labeled data, i.e., triplets of 1) a query image, 2) a description, and 3) a target image. Collecting such labeled data is costly, and models trained on this data are often tailored to a specific use case, limiting their ability to generalize to different datasets.

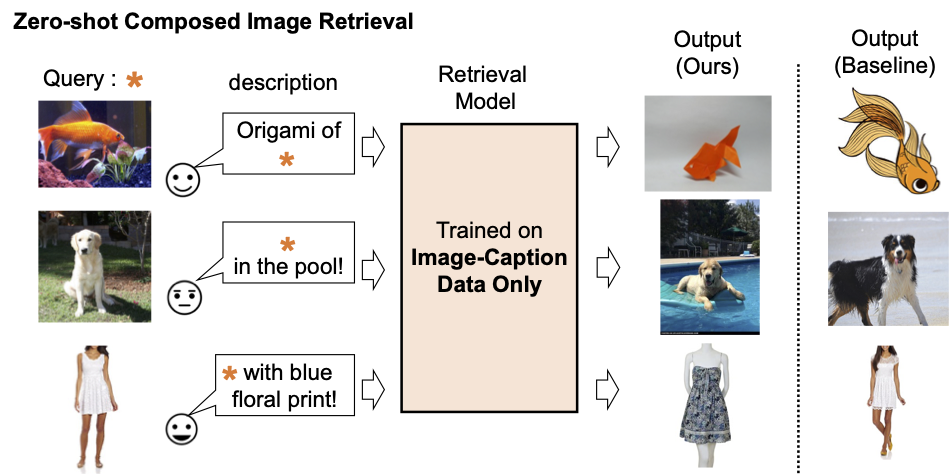

To address these challenges, in “Pic2Word: Mapping Pictures to Words for Zero-shot Composed Image Retrieval”, we propose a task called zero-shot CIR (ZS-CIR). In ZS-CIR, we aim to build a single CIR model that performs a variety of CIR tasks, such as object composition, attribute editing, or domain conversion, without requiring labeled triplet data. Instead, we propose to train a retrieval model using large-scale image-caption pairs and unlabeled images, which are considerably easier to collect at scale than supervised CIR datasets. To encourage reproducibility and further advance this area, we also release the code.

| Description of the existing composed image retrieval model. |

Method overview

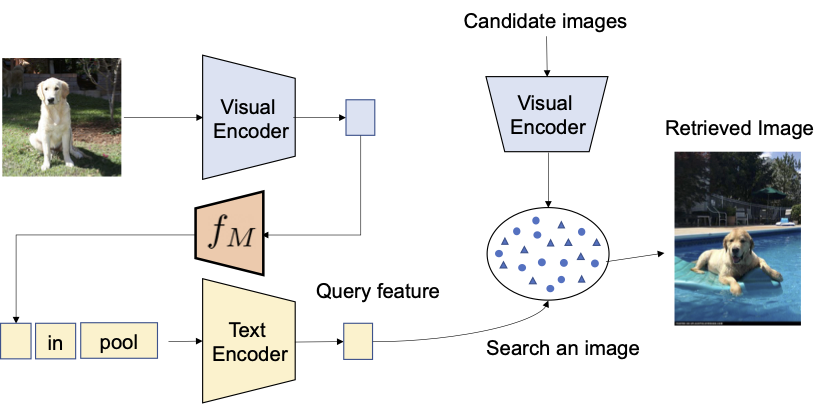

We propose to leverage the language capabilities of the language encoder in the contrastive language-image pre-trained model (CLIP), which excels at generating semantically meaningful language embeddings for a wide range of textual concepts and attributes. To that end, we use a lightweight mapping sub-module in CLIP that is designed to map an input picture (e.g., a photo of a cat) from the image embedding space to a word token (e.g., “cat”) in the textual input space. The whole network is optimized with the vision-language contrastive loss to again ensure that the visual and text embedding spaces are as close as possible given a pair of an image and its textual description. Then, the query image can be treated as if it is a word. This enables the flexible and seamless composition of query image features and text descriptions by the language encoder.

We call our method Pic2Word and provide an overview of its training process in the figure below. We want the mapped token s to represent the input image in the form of a word token. To achieve this, we train the mapping network to reconstruct the image embedding in the language embedding, p. Specifically, we optimize the contrastive loss proposed in CLIP, computed between the visual embedding v and the textual embedding p.

| Training of the mapping network (fM) using unlabeled images only. We optimize only the mapping network, with frozen visual and text encoders. |
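To make the training objective concrete, here is a minimal NumPy sketch of a symmetric contrastive loss between the visual embedding v and the language embedding p. Everything in it is an assumption for illustration: the two-layer MLP used for the mapping network, the linear stand-in for the frozen text encoder, the temperature value, and all function names are hypothetical and are not taken from the released code.

```python
import numpy as np

def l2_normalize(x, axis=-1, eps=1e-12):
    return x / (np.linalg.norm(x, axis=axis, keepdims=True) + eps)

def log_softmax(logits, axis):
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def contrastive_loss(v, p, temperature=0.07):
    """Symmetric contrastive loss between visual embeddings v and text embeddings p."""
    v, p = l2_normalize(v), l2_normalize(p)
    logits = v @ p.T / temperature           # (batch, batch) similarity matrix
    diag = np.arange(len(v))                 # matching pairs sit on the diagonal
    loss_v2p = -log_softmax(logits, axis=1)[diag, diag].mean()
    loss_p2v = -log_softmax(logits, axis=0)[diag, diag].mean()
    return 0.5 * (loss_v2p + loss_p2v)

def mapping_network(v, w1, w2):
    """Hypothetical two-layer MLP f_M: image embedding -> pseudo word token s."""
    return np.maximum(v @ w1, 0.0) @ w2

def frozen_text_encoder(s, w_text):
    """Linear stand-in for the frozen text encoder applied to a prompt containing s."""
    return s @ w_text

rng = np.random.default_rng(0)
batch, dim, hidden = 8, 16, 32
v = rng.normal(size=(batch, dim))            # stand-in for frozen image-encoder outputs
w1 = rng.normal(size=(dim, hidden)) * 0.1    # trainable parameters of f_M
w2 = rng.normal(size=(hidden, dim)) * 0.1
w_text = rng.normal(size=(dim, dim)) * 0.1   # frozen stand-in text-encoder weights

s = mapping_network(v, w1, w2)               # pseudo word tokens
p = frozen_text_encoder(s, w_text)           # language embeddings of those tokens
loss = contrastive_loss(v, p)                # minimized with respect to w1 and w2 only
```

In an actual training loop, the gradient of `loss` would be taken only with respect to the mapping-network parameters, which mirrors the frozen-encoder setup described in the caption above.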

Given the trained mapping network, we can regard an image as a word token and pair it with the text description to flexibly compose the joint image-text query, as shown in the figure below.

| With the trained mapping network, we regard the image as a word token and pair it with the text description to flexibly compose the joint image-text query. |
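The composition step can be sketched as follows. This is a toy illustration under stated assumptions: the prompt template, the `[*]` placeholder convention, the tiny vocabulary, and the mean-pooling stand-in for the language encoder are all hypothetical, chosen only to show how an image-derived token can be spliced into a sequence of word embeddings before encoding and retrieval.

```python
import numpy as np

rng = np.random.default_rng(0)
DIM = 8
# Toy vocabulary; "[*]" marks where the image-derived token is spliced in.
vocab = {"a": 0, "[*]": 1, "in": 2, "origami": 3, "style": 4}
token_table = rng.normal(size=(len(vocab), DIM))    # stand-in word embeddings

def compose_query(prompt, pseudo_token):
    """Embed the prompt, replacing the "[*]" slot with the mapped image token s."""
    rows = [pseudo_token if w == "[*]" else token_table[vocab[w]]
            for w in prompt.split()]
    return np.stack(rows)

def toy_text_encoder(token_embeddings):
    """Stand-in for the frozen language encoder: mean-pool, then L2-normalize."""
    pooled = token_embeddings.mean(axis=0)
    return pooled / np.linalg.norm(pooled)

def retrieve(query, gallery, k=3):
    """Return indices of the k gallery embeddings most similar to the query."""
    gallery = gallery / np.linalg.norm(gallery, axis=1, keepdims=True)
    return np.argsort(-(gallery @ query))[:k]

s = rng.normal(size=DIM)                    # would come from the mapping network
tokens = compose_query("a [*] in origami style", s)
query = toy_text_encoder(tokens)
gallery = rng.normal(size=(20, DIM))        # stand-in candidate image embeddings
gallery[7] = query                          # plant an exact match for the demo
top = retrieve(query, gallery)
```

Because the image enters the prompt as just another token, the same encoder handles both the image content and the text instruction, which is what allows the two to be composed without triplet supervision.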

Evaluation

We conduct a range of experiments to evaluate Pic2Word’s performance on a variety of CIR tasks.

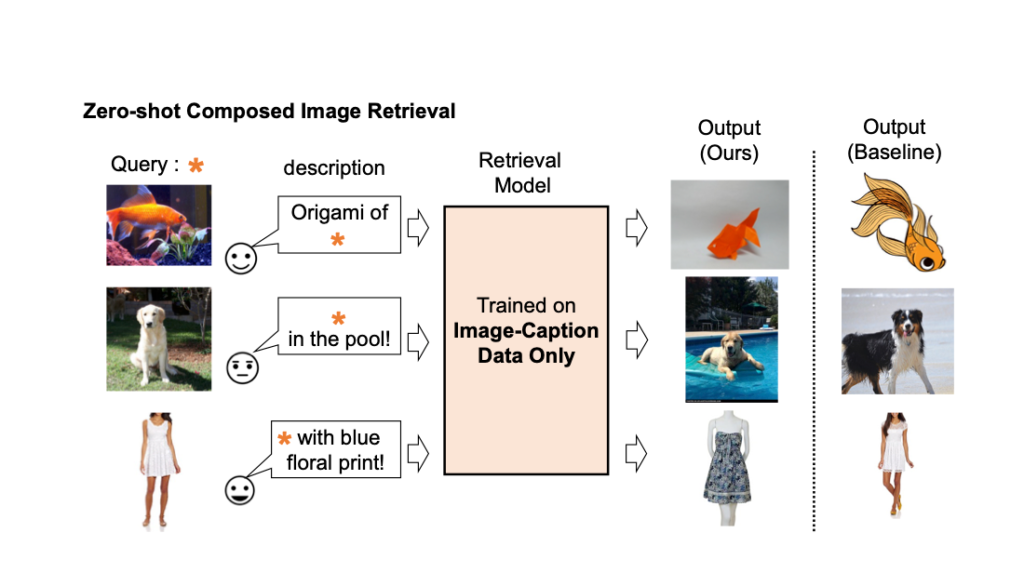

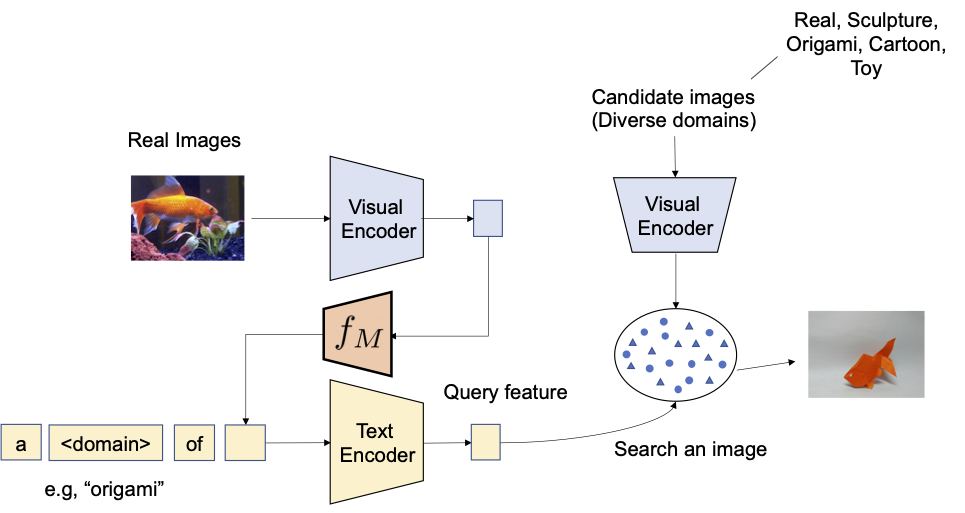

Domain conversion

We first evaluate the compositional capability of the proposed method on domain conversion: given an image and the desired new image domain (e.g., sculpture, origami, cartoon, toy), the output of the system should be an image with the same content but in the new desired image domain or style. As illustrated below, we evaluate the ability to compose the category information and domain description given as an image and text, respectively. We evaluate the conversion from real images to four domains using ImageNet and ImageNet-R.

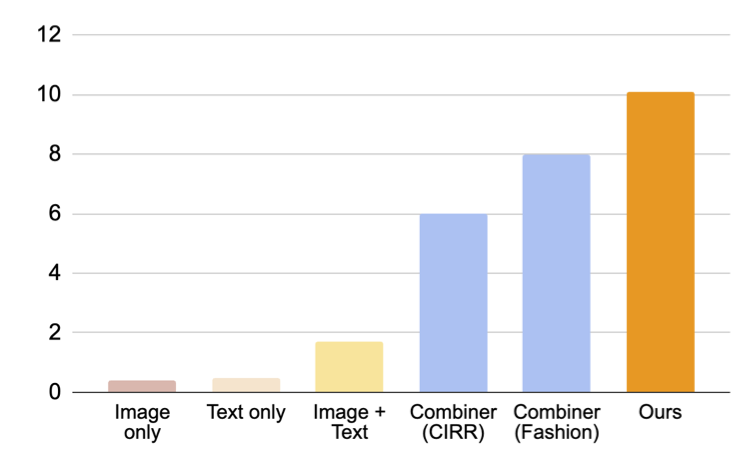

To compare with approaches that do not require supervised training data, we pick three approaches: (i) image only performs retrieval with only the visual embedding, (ii) text only employs only the text embedding, and (iii) image + text averages the visual and text embeddings to compose the query. The comparison with (iii) shows the importance of composing image and text using a language encoder. We also compare with Combiner, which trains the CIR model on Fashion-IQ or CIRR.
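The three training-free baselines above differ only in how the query embedding is built. The sketch below shows one plausible reading; the function name, the mode strings, and the choice to L2-normalize each embedding before averaging are assumptions, since the text does not specify these details.

```python
import numpy as np

def normalize(x):
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def baseline_query(image_emb, text_emb, mode):
    """Build the query embedding for the three training-free baselines."""
    image_emb, text_emb = normalize(image_emb), normalize(text_emb)
    if mode == "image_only":
        return image_emb                     # (i) visual embedding only
    if mode == "text_only":
        return text_emb                      # (ii) text embedding only
    if mode == "image_plus_text":
        # (iii) average the two embeddings, then renormalize for cosine retrieval
        return normalize((image_emb + text_emb) / 2.0)
    raise ValueError(f"unknown mode: {mode}")

img = np.array([3.0, 0.0])                   # toy embeddings for illustration
txt = np.array([0.0, 4.0])
avg_query = baseline_query(img, txt, "image_plus_text")
```

Note that the averaging baseline fuses the modalities after encoding, whereas Pic2Word fuses them inside the language encoder; the comparison with (iii) isolates that difference.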

| We aim to convert the domain of the input query image into the one described with text, e.g., origami. |

As shown in the figure below, our proposed approach outperforms the baselines by a large margin.

| Results (recall@10, i.e., the percentage of relevant instances in the first 10 images retrieved) on composed image retrieval for domain conversion. |
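For reference, recall@k can be computed as in the short sketch below. It assumes the common single-target setting, where each query has exactly one correct gallery image and a query counts as a hit when that image appears among its k highest-scoring candidates; the function name and the toy similarity matrix are illustrative only.

```python
import numpy as np

def recall_at_k(similarity, target_index, k=10):
    """Percentage of queries whose target is among the k highest-scoring candidates."""
    topk = np.argsort(-similarity, axis=1)[:, :k]            # best k candidates per query
    hits = (topk == np.asarray(target_index)[:, None]).any(axis=1)
    return 100.0 * hits.mean()

# Toy example: 3 queries scored against 4 candidates.
sim = np.array([[0.9, 0.1, 0.3, 0.2],
                [0.2, 0.8, 0.7, 0.1],
                [0.4, 0.3, 0.2, 0.1]])
targets = [0, 2, 3]
score = recall_at_k(sim, targets, k=2)       # two of the three queries are hits
```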

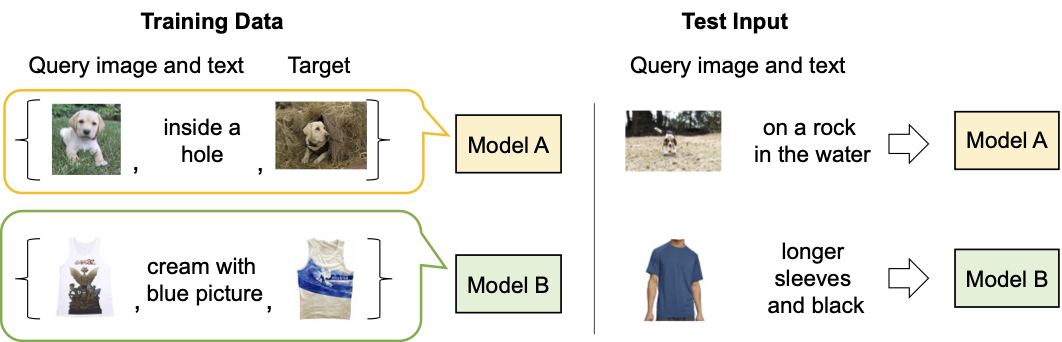

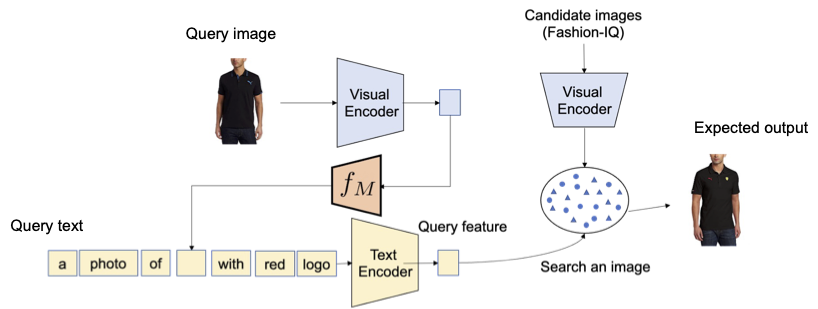

Fashion attribute composition

Next, we evaluate the composition of fashion attributes, such as the color of cloth, logo, and length of sleeve, using the Fashion-IQ dataset. The figure below illustrates the desired output given the query.

| Overview of CIR for fashion attributes. |

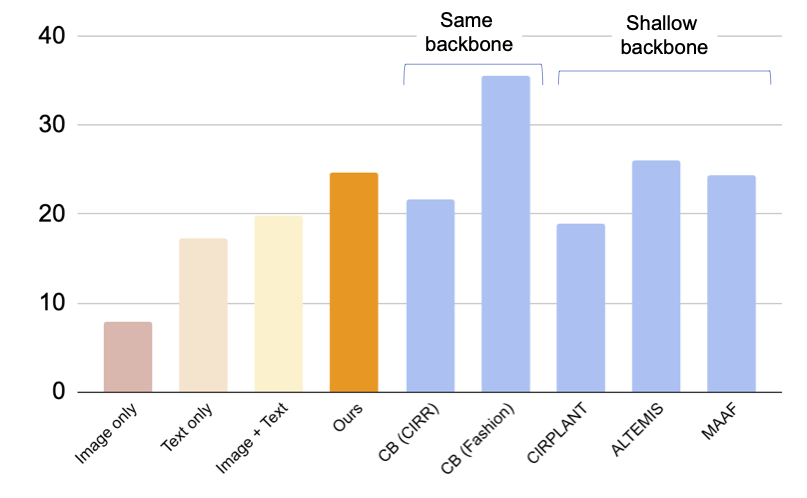

In the figure below, we present a comparison with baselines, including supervised baselines that use triplets for training the CIR model: (i) CB uses the same architecture as our approach, (ii) CIRPLANT, ALTEMIS, MAAF use a smaller backbone, such as ResNet50. Comparison with these approaches helps us understand how well our zero-shot approach performs on this task.

Although CB outperforms our approach, our method performs better than supervised baselines with smaller backbones. This result suggests that by utilizing a robust CLIP model, we can train a highly effective CIR model without requiring annotated triplets.

| Results (recall@10, i.e., the percentage of relevant instances in the first 10 images retrieved) on composed image retrieval for the Fashion-IQ dataset (higher is better). Light blue bars train the model using triplets. Note that our approach performs on par with these supervised baselines with shallow (smaller) backbones. |

Qualitative results

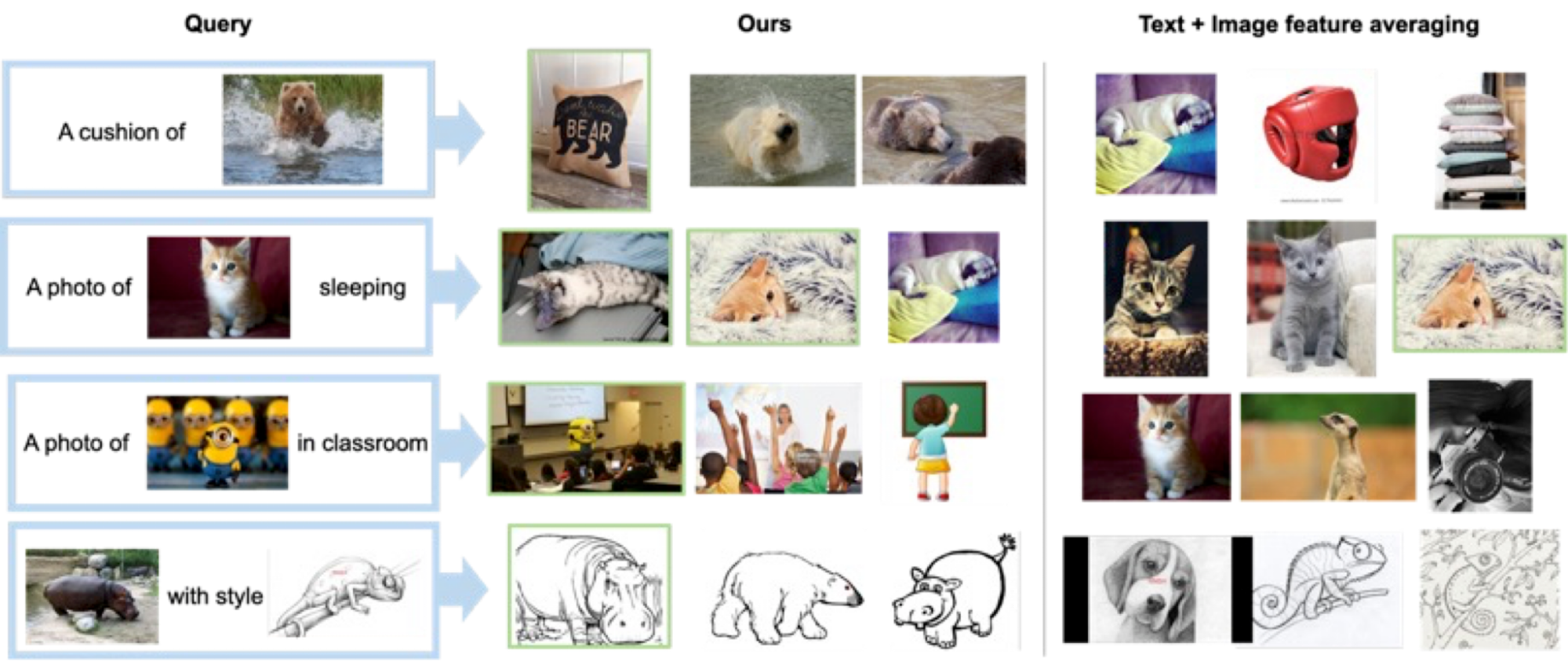

We show several examples in the figure below. Compared to a baseline method that does not require supervised training data (text + image feature averaging), our approach does a better job of correctly retrieving the target image.

| Qualitative results on diverse query images and text descriptions. |

Conclusion and future work

In this article, we introduce Pic2Word, a method for mapping pictures to words for ZS-CIR. We propose to convert the image into a word token to obtain a CIR model using only an image-caption dataset. Through a variety of experiments, we verify the effectiveness of the trained model on diverse CIR tasks, indicating that training on an image-caption dataset can build a powerful CIR model. One potential future research direction is utilizing caption data to train the mapping network, although we use only image data in the present work.

Acknowledgements

This research was conducted by Kuniaki Saito, Kihyuk Sohn, Xiang Zhang, Chun-Liang Li, Chen-Yu Lee, Kate Saenko, and Tomas Pfister. Also thanks to Zizhao Zhang and Sergey Ioffe for their valuable feedback.