Preference learning with automated feedback for cache eviction – Google AI Blog

Caching is a ubiquitous idea in computer science that significantly improves the performance of storage and retrieval systems by storing a subset of popular items closer to the client based on request patterns. An important algorithmic piece of cache management is the decision policy used for dynamically updating the set of items being stored, which has been extensively optimized over several decades, resulting in several efficient and robust heuristics. While applying machine learning to cache policies has shown promising results in recent years (e.g., LRB, LHD, storage applications), it remains a challenge to outperform robust heuristics in a way that can generalize reliably beyond benchmarks to production settings, while maintaining competitive compute and memory overheads.

In “HALP: Heuristic Aided Learned Preference Eviction Policy for YouTube Content Delivery Network”, presented at NSDI 2023, we introduce a scalable state-of-the-art cache eviction framework that is based on learned rewards and uses preference learning with automated feedback. The Heuristic Aided Learned Preference (HALP) framework is a meta-algorithm that uses randomization to merge a lightweight heuristic baseline eviction rule with a learned reward model. The reward model is a lightweight neural network that is continuously trained with ongoing automated feedback on preference comparisons designed to mimic the offline oracle. We discuss how HALP has improved infrastructure efficiency and user video playback latency for YouTube’s content delivery network.

Learned preferences for cache eviction decisions

The HALP framework computes cache eviction decisions based on two components: (1) a neural reward model trained with automated feedback via preference learning, and (2) a meta-algorithm that combines a learned reward model with a fast heuristic. As the cache observes incoming requests, HALP continuously trains a small neural network that predicts a scalar reward for each item by formulating this as a preference learning method via pairwise preference feedback. This aspect of HALP is similar to reinforcement learning from human feedback (RLHF) systems, but with two important distinctions:

- Feedback is automated and leverages well-known results about the structure of offline optimal cache eviction policies.

- The model is learned continuously using a transient buffer of training examples constructed from the automated feedback process.
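To make the idea of learning a scalar reward from pairwise feedback concrete, here is a minimal sketch. The post does not state the exact loss HALP uses, so the logistic (Bradley-Terry style) loss below is an assumption, and a linear model stands in for the small neural network. The names `reward`, `pairwise_loss`, and `sgd_step` are hypothetical.

```python
import math

def reward(weights, features):
    """Scalar reward; a linear model stands in for the small neural network."""
    return sum(w * x for w, x in zip(weights, features))

def pairwise_loss(weights, preferred, other):
    """Logistic pairwise loss (assumed): small when reward(preferred) > reward(other)."""
    margin = reward(weights, preferred) - reward(weights, other)
    return math.log1p(math.exp(-margin))

def sgd_step(weights, preferred, other, lr=0.1):
    """One gradient-descent step on the pairwise loss for a single labeled pair."""
    margin = reward(weights, preferred) - reward(weights, other)
    grad_scale = -1.0 / (1.0 + math.exp(margin))  # derivative of the loss w.r.t. the margin
    return [w - lr * grad_scale * (p - o)
            for w, p, o in zip(weights, preferred, other)]

# Usage: one update should reduce the loss on the pair it was trained on.
w0 = [0.0, 0.0]
pref, oth = [1.0, 0.0], [0.0, 1.0]
w1 = sgd_step(w0, pref, oth)
```

Only the relative order of the two rewards matters for this loss, which is why pairwise labels are enough to train a model that outputs a single score per item.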

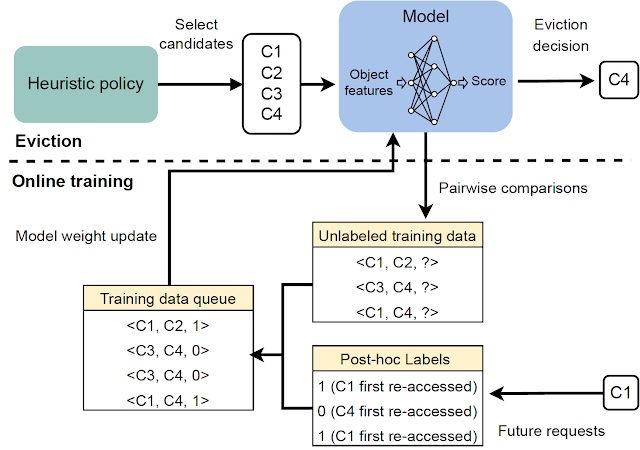

The eviction decisions rely on a filtering mechanism with two steps. First, a small subset of candidates is selected using a heuristic that is efficient, but suboptimal in terms of performance. Then, a re-ranking step optimizes from within the baseline candidates via the sparing use of a neural network scoring function to “boost” the quality of the final decision.

As a production-ready cache policy implementation, HALP not only makes eviction decisions, but also subsumes the end-to-end process of sampling pairwise preference queries used to efficiently construct relevant feedback and update the model to power eviction decisions.

A neural reward model

HALP uses a lightweight two-layer multilayer perceptron (MLP) as its reward model to selectively score individual items in the cache. The features are constructed and managed as a metadata-only “ghost cache” (similar to classical policies like ARC). After any given lookup request, in addition to regular cache operations, HALP conducts the book-keeping (e.g., tracking and updating feature metadata in a capacity-constrained key-value store) needed to update the dynamic internal representation. This includes: (1) externally tagged features provided by the user as input, along with a cache lookup request, and (2) internally constructed dynamic features (e.g., time since last access, average time between accesses) constructed from lookup times observed on each item.
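The book-keeping for dynamic features can be sketched as a small capacity-bounded metadata store. This is an illustration only: the class name `FeatureStore`, its methods, and the choice to drop the least recently updated entry when full are assumptions, not details from the post. It tracks the two example features named above, time since last access and average time between accesses.

```python
from collections import OrderedDict

class FeatureStore:
    """Capacity-bounded, metadata-only store keyed by item id (hypothetical)."""

    def __init__(self, capacity):
        self.capacity = capacity
        # key -> (last_access_time, access_count, mean_gap_between_accesses)
        self.meta = OrderedDict()

    def record_access(self, key, now):
        if key in self.meta:
            last, count, mean_gap = self.meta.pop(key)
            gap = now - last
            # Running mean over inter-access gaps; this is gap number `count`.
            mean_gap += (gap - mean_gap) / count
            self.meta[key] = (now, count + 1, mean_gap)
        else:
            self.meta[key] = (now, 1, 0.0)
        if len(self.meta) > self.capacity:
            # Assumed policy: drop the least recently updated metadata entry.
            self.meta.popitem(last=False)

    def features(self, key, now):
        """Return [time since last access, average time between accesses]."""
        last, _count, mean_gap = self.meta[key]
        return [now - last, mean_gap]

store = FeatureStore(capacity=2)
for t in (0, 10, 30):
    store.record_access("a", t)
feats = store.features("a", now=35)  # gaps were 10 and 20, so the mean gap is 15
```

Because the store holds only metadata, it can remember items that are no longer in the cache itself, which is the point of a ghost cache.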

HALP learns its reward model fully online starting from a random weight initialization. This might seem like a bad idea, especially if the decisions are made exclusively for optimizing the reward model. However, the eviction decisions rely on both the learned reward model and a suboptimal but simple and robust heuristic like LRU. This allows for optimal performance when the reward model has fully generalized, while remaining robust to a temporarily uninformative reward model that is yet to generalize, or in the process of catching up to a changing environment.

Another advantage of online training is specialization. Each cache server runs in a potentially different environment (e.g., geographic location), which influences local network conditions and what content is locally popular, among other things. Online training automatically captures this information while reducing the burden of generalization, as opposed to a single offline training solution.

Scoring samples from a randomized priority queue

It can be impractical to optimize for the quality of eviction decisions with an exclusively learned objective for two reasons.

- Compute efficiency constraints: Inference with a learned network can be significantly more expensive than the computations performed in practical cache policies operating at scale. This limits not only the expressivity of the network and features, but also how often these are invoked during each eviction decision.

- Robustness for generalizing out-of-distribution: HALP is deployed in a setup that involves continual learning, where a quickly changing workload might generate request patterns that are temporarily out-of-distribution with respect to previously seen data.

To address these issues, HALP first applies an inexpensive heuristic scoring rule that corresponds to an eviction priority to identify a small candidate sample. This process is based on efficient random sampling that approximates exact priority queues. The priority function for generating candidate samples is intended to be quick to compute using existing manually-tuned algorithms, e.g., LRU. However, this is configurable to approximate other cache replacement heuristics by editing a simple cost function. Unlike prior work, where the randomization was used to trade off approximation for efficiency, HALP also relies on the inherent randomization in the sampled candidates across time steps for providing the necessary exploratory diversity in the sampled candidates for both training and inference.
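A sampling-based approximation of an LRU priority queue can be sketched as follows. The function name `sample_candidates` and both size parameters are hypothetical, and the actual sampling scheme used in HALP may differ. The idea is to draw a random subset of cached keys and keep the least recently used among them, rather than maintaining an exact ordering over the whole cache.

```python
import random

def sample_candidates(last_access, sample_size, num_candidates, rng=random):
    """Approximate the tail of an LRU priority queue by random sampling.

    last_access: dict mapping key -> last access time (the LRU priority).
    Draws `sample_size` keys uniformly at random, then keeps the
    `num_candidates` least recently used keys among them.
    """
    keys = list(last_access)
    sampled = rng.sample(keys, min(sample_size, len(keys)))
    sampled.sort(key=lambda k: last_access[k])  # oldest access first
    return sampled[:num_candidates]

# Usage with illustrative data: key i was last accessed at time i.
last_access = {i: i for i in range(100)}
candidates = sample_candidates(last_access, 16, 4, random.Random(0))
```

Swapping the sort key for another cost function is one way such a sketch could approximate a different replacement heuristic. Because each call draws a fresh sample, the candidate set varies across eviction decisions even when the cache contents barely change.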

The final evicted item is chosen from among the supplied candidates, equivalent to the best-of-n reranked sample, corresponding to maximizing the predicted preference score according to the neural reward model. The same pool of candidates used for eviction decisions is also used to construct the pairwise preference queries for automated feedback, which helps minimize the training and inference skew between samples.
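The re-ranking step and the reuse of the candidate pool can be sketched in a few lines. The names `choose_eviction` and `sample_preference_queries` are hypothetical, and `score_fn` stands in for the neural reward model. How HALP actually picks which pairs to query is not specified here, so uniform sampling over pairs is an assumption.

```python
import itertools
import random

def choose_eviction(candidates, score_fn):
    """Best-of-n: evict the candidate with the highest predicted preference score."""
    return max(candidates, key=score_fn)

def sample_preference_queries(candidates, num_queries, rng=random):
    """Draw pairwise queries from the same candidate pool used for eviction."""
    pairs = list(itertools.combinations(candidates, 2))
    return rng.sample(pairs, min(num_queries, len(pairs)))

# Usage with made-up scores in place of the reward model's output.
scores = {"a": 0.1, "b": 0.9, "c": 0.4}
victim = choose_eviction(list(scores), scores.get)
queries = sample_preference_queries(list(scores), 2, random.Random(1))
```

Since the model is only ever evaluated on a handful of candidates per eviction, the cost of inference stays bounded regardless of cache size.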

An overview of the two-stage process invoked for each eviction decision.

Online preference learning with automated feedback

The reward model is learned using online feedback, which is based on automatically assigned preference labels that indicate, wherever feasible, the ranked preference ordering for the time taken to receive future re-accesses, starting from a given snapshot in time among each queried sample of items. This is similar to the oracle optimal policy, which, at any given time, evicts an item with the farthest future access from all the items in the cache.
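The oracle rule and the pairwise labels derived from it can be illustrated on a recorded trace. This is an offline sketch with hypothetical function names. A real cache cannot look ahead, which is why HALP resolves labels later as requests arrive.

```python
def next_access_time(trace, start, key):
    """Index of the first request for `key` at or after `start`, else infinity."""
    for t in range(start, len(trace)):
        if trace[t] == key:
            return t
    return float("inf")

def oracle_evict(trace, start, cached_keys):
    """Offline oracle: evict the cached item whose next access is farthest away."""
    return max(cached_keys, key=lambda k: next_access_time(trace, start, k))

def preference_label(trace, start, a, b):
    """Return the item of the pair the oracle would rather evict.

    Returns None when neither item is re-accessed, so no label is feasible.
    """
    ta = next_access_time(trace, start, a)
    tb = next_access_time(trace, start, b)
    if ta == tb:  # for distinct items this only happens when both are infinity
        return None
    return a if ta > tb else b

trace = ["x", "a", "y", "b", "a"]
label = preference_label(trace, 0, "a", "b")  # "a" returns at 1, "b" at 3
```

Note that the label depends on the snapshot: starting from index 2, "b" is requested before "a", so the preferred eviction flips to "a".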

Generation of the automated feedback for learning the reward model.

To make this feedback process informative, HALP constructs pairwise preference queries that are most likely to be relevant for eviction decisions. In sync with the usual cache operations, HALP issues a small number of pairwise preference queries while making each eviction decision, and appends them to a set of pending comparisons. The labels for these pending comparisons can only be resolved at a random future time. To operate online, HALP also performs some additional book-keeping after each lookup request to process any pending comparisons that can be labeled incrementally after the current request. HALP indexes the pending comparison buffer with each element involved in the comparison, and recycles the memory consumed by stale comparisons (neither of which may ever get a re-access) to ensure that the memory overhead associated with feedback generation remains bounded over time.
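A pending-comparison buffer along these lines might look like the sketch below. The class `PendingComparisons` and its methods are hypothetical, and the age threshold `max_age` is an assumed stand-in for whatever staleness criterion the production system applies. The first re-access of either item in a pair resolves it: the item that was not requested has the later (or no) future access, so it is the better eviction.

```python
from collections import defaultdict

class PendingComparisons:
    """Buffer of unlabeled pairwise queries, indexed by each item involved."""

    def __init__(self, max_age):
        self.max_age = max_age
        self.created = {}               # (a, b) -> time the query was issued
        self.by_key = defaultdict(set)  # key -> pending pairs that contain it
        self.labeled = []               # (better_to_evict, better_to_keep)

    def add(self, a, b, now):
        pair = (a, b)
        self.created[pair] = now
        self.by_key[a].add(pair)
        self.by_key[b].add(pair)

    def _remove(self, pair):
        del self.created[pair]
        for key in pair:
            self.by_key[key].discard(pair)
            if not self.by_key[key]:
                del self.by_key[key]

    def on_access(self, key):
        """Label every pending pair that contains the item just requested."""
        for pair in list(self.by_key.get(key, ())):
            other = pair[1] if pair[0] == key else pair[0]
            self.labeled.append((other, key))
            self._remove(pair)

    def expire(self, now):
        """Recycle pairs that waited longer than max_age without a label."""
        for pair, issued in list(self.created.items()):
            if now - issued > self.max_age:
                self._remove(pair)

buf = PendingComparisons(max_age=10)
buf.add("a", "b", now=0)
buf.add("c", "d", now=0)
buf.on_access("a")   # resolves ("a", "b"): "b" is the better eviction
buf.expire(now=11)   # ("c", "d") never resolved, so its memory is reclaimed
```

Indexing by item means each lookup request touches only the comparisons that involve the requested key, and expiry keeps the buffer from growing without bound.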

Overview of all main components in HALP.

Results: Impact on the YouTube CDN

Through empirical analysis, we show that HALP compares favorably to state-of-the-art cache policies on public benchmark traces in terms of cache miss rates. However, while public benchmarks are a useful tool, they are rarely sufficient to capture all the usage patterns across the world over time, not to mention the diverse hardware configurations that we have already deployed.

Until recently, YouTube servers used an optimized LRU-variant for memory cache eviction. HALP increases YouTube’s memory egress/ingress (the ratio of the total bandwidth egress served by the CDN to that consumed for retrieval, or ingress, due to cache misses) by roughly 12% and memory hit rate by 6%. This reduces latency for users, since memory reads are faster than disk reads, and also improves egressing capacity for disk-bounded machines by shielding the disks from traffic.

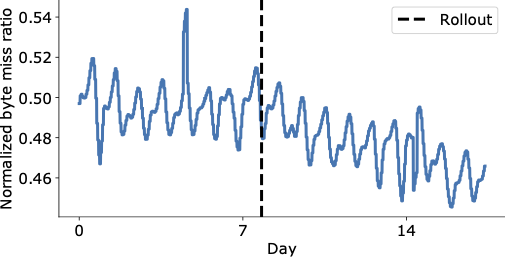

The figure below shows a visually compelling reduction in the byte miss ratio in the days following HALP’s final rollout on the YouTube CDN, which is now serving significantly more content from within the cache with lower latency to the end user, and without having to resort to more expensive retrieval that increases the operating costs.

Aggregate worldwide YouTube byte miss ratio before and after rollout (vertical dashed line).
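For readers less familiar with these metrics, the sketch below computes a byte miss ratio and an egress/ingress ratio from a request log. It assumes a simplified model in which every miss fetches exactly the requested bytes, and the function name and sample numbers are illustrative only, not measurements from the deployment.

```python
def cache_metrics(requests):
    """requests: list of (size_bytes, was_hit) pairs.

    Assumes every miss fetches exactly the requested bytes (simplified model).
    """
    egress = sum(size for size, _hit in requests)              # bytes served
    ingress = sum(size for size, hit in requests if not hit)   # bytes fetched on misses
    byte_miss_ratio = ingress / egress
    egress_ingress = egress / ingress if ingress else float("inf")
    return byte_miss_ratio, egress_ingress

# Illustrative log: 1000 bytes served, 300 of them on a miss.
bmr, ratio = cache_metrics([(100, True), (300, False), (600, True)])
```

Under this simplified model the two metrics are reciprocals, so a lower byte miss ratio corresponds directly to a higher egress/ingress ratio.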

An aggregated performance improvement could still hide important regressions. In addition to measuring overall impact, we also conduct an analysis in the paper to understand its impact on different racks using a machine-level analysis, and find it to be overwhelmingly positive.

Conclusion

We introduced a scalable state-of-the-art cache eviction framework that is based on learned rewards and uses preference learning with automated feedback. Because of its design choices, HALP can be deployed in a manner similar to any other cache policy without the operational overhead of having to separately manage the labeled examples, training procedure and the model versions as additional offline pipelines common to most machine learning systems. Therefore, it incurs only a small extra overhead compared to other classical algorithms, but has the added benefit of being able to take advantage of additional features to make its eviction decisions and continuously adapt to changing access patterns.

This is the first large-scale deployment of a learned cache policy to a widely used and heavily trafficked CDN, and has significantly improved the CDN infrastructure efficiency while also delivering a better quality of experience to users.

Acknowledgements

Ramki Gummadi is now part of Google DeepMind. We would like to thank John Guilyard for help with the illustrations and Richard Schooler for feedback on this post.