Gaussian Course of Regression with tfprobability

How do you inspire, or give you a narrative round Gaussian Course of Regression on a weblog primarily devoted to deep studying?

Straightforward. As demonstrated by seemingly unavoidable, reliably recurring Twitter “wars” surrounding AI, nothing attracts consideration like controversy and antagonism. So, let’s return twenty years and discover citations of individuals saying, “right here come Gaussian Processes, we don’t have to trouble with these finicky, arduous to tune neural networks anymore!” And at this time, right here we’re; everybody is aware of one thing about deep studying however who’s heard of Gaussian Processes?

Whereas related tales inform loads about historical past of science and growth of opinions, we choose a distinct angle right here. Within the preface to their 2006 e book on Gaussian Processes for Machine Studying (Rasmussen and Williams 2005), Rasmussen and Williams say, referring to the “two cultures” – the disciplines of statistics and machine studying, respectively:

Gaussian course of fashions in some sense deliver collectively work within the two communities.

On this publish, that “in some sense” will get very concrete. We’ll see a Keras community, outlined and skilled the standard approach, that has a Gaussian Course of layer for its important constituent.

The duty shall be “easy” multivariate regression.

As an apart, this “bringing collectively communities” – or methods of pondering, or answer methods – makes for a very good total characterization of TensorFlow Chance as nicely.

Gaussian Processes

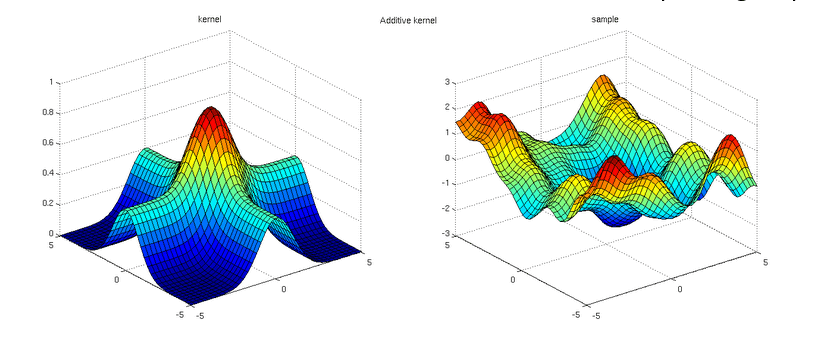

A Gaussian Course of is a distribution over functions, where the function values you sample are jointly Gaussian – roughly talking, a generalization to infinity of the multivariate Gaussian. In addition to the reference e book we already talked about (Rasmussen and Williams 2005), there are a variety of good introductions on the web: see e.g. https://distill.pub/2019/visual-exploration-gaussian-processes/ or https://peterroelants.github.io/posts/gaussian-process-tutorial/. And like on every thing cool, there’s a chapter on Gaussian Processes within the late David MacKay’s (MacKay 2002) book.

On this publish, we’ll use TensorFlow Chance’s Variational Gaussian Course of (VGP) layer, designed to effectively work with “massive knowledge.” As Gaussian Course of Regression (GPR, any more) includes the inversion of a – probably massive – covariance matrix, makes an attempt have been made to design approximate variations, usually primarily based on variational ideas. The TFP implementation is predicated on papers by Titsias (2009) (Titsias 2009) and Hensman et al. (2013) (Hensman, Fusi, and Lawrence 2013). As an alternative of (p(mathbf{y}|mathbf{X})), the precise likelihood of the goal knowledge given the precise enter, we work with a variational distribution (q(mathbf{u})) that acts as a decrease certain.

Right here (mathbf{u}) are the perform values at a set of so-called inducing index factors specified by the person, chosen to nicely cowl the vary of the particular knowledge. This algorithm is loads quicker than “regular” GPR, as solely the covariance matrix of (mathbf{u}) needs to be inverted. As we’ll see under, no less than on this instance (in addition to in others not described right here) it appears to be fairly sturdy as to the variety of inducing factors handed.

Let’s begin.

The dataset

The Concrete Compressive Strength Data Set is a part of the UCI Machine Studying Repository. Its internet web page says:

Concrete is crucial materials in civil engineering. The concrete compressive energy is a extremely nonlinear perform of age and elements.

Extremely nonlinear perform – doesn’t that sound intriguing? In any case, it ought to represent an attention-grabbing check case for GPR.

Here’s a first look.

library(tidyverse)

library(GGally)

library(visreg)

library(readxl)

library(rsample)

library(reticulate)

library(tfdatasets)

library(keras)

library(tfprobability)

concrete <- read_xls(

"Concrete_Data.xls",

col_names = c(

"cement",

"blast_furnace_slag",

"fly_ash",

"water",

"superplasticizer",

"coarse_aggregate",

"fine_aggregate",

"age",

"energy"

),

skip = 1

)

concrete %>% glimpse()Observations: 1,030

Variables: 9

$ cement <dbl> 540.0, 540.0, 332.5, 332.5, 198.6, 266.0, 380.0, 380.0, …

$ blast_furnace_slag <dbl> 0.0, 0.0, 142.5, 142.5, 132.4, 114.0, 95.0, 95.0, 114.0,…

$ fly_ash <dbl> 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,…

$ water <dbl> 162, 162, 228, 228, 192, 228, 228, 228, 228, 228, 192, 1…

$ superplasticizer <dbl> 2.5, 2.5, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0…

$ coarse_aggregate <dbl> 1040.0, 1055.0, 932.0, 932.0, 978.4, 932.0, 932.0, 932.0…

$ fine_aggregate <dbl> 676.0, 676.0, 594.0, 594.0, 825.5, 670.0, 594.0, 594.0, …

$ age <dbl> 28, 28, 270, 365, 360, 90, 365, 28, 28, 28, 90, 28, 270,…

$ energy <dbl> 79.986111, 61.887366, 40.269535, 41.052780, 44.296075, 4…It isn’t that massive – just a bit greater than 1000 rows –, however nonetheless, we can have room to experiment with totally different numbers of inducing factors.

We now have eight predictors, all numeric. Apart from age (in days), these characterize plenty (in kg) in a single cubic metre of concrete. The goal variable, energy, is measured in megapascals.

Let’s get a fast overview of mutual relationships.

Checking for a potential interplay (one {that a} layperson might simply consider), does cement focus act in another way on concrete energy relying on how a lot water there may be within the combination?

To anchor our future notion of how nicely VGP does for this instance, we match a easy linear mannequin, in addition to one involving two-way interactions.

# scale predictors right here already, so knowledge are the identical for all fashions

concrete[, 1:8] <- scale(concrete[, 1:8])

# train-test cut up

set.seed(777)

cut up <- initial_split(concrete, prop = 0.8)

practice <- coaching(cut up)

check <- testing(cut up)

# easy linear mannequin with no interactions

fit1 <- lm(energy ~ ., knowledge = practice)

fit1 %>% summary()Name:

lm(system = energy ~ ., knowledge = practice)

Residuals:

Min 1Q Median 3Q Max

-30.594 -6.075 0.612 6.694 33.032

Coefficients:

Estimate Std. Error t worth Pr(>|t|)

(Intercept) 35.6773 0.3596 99.204 < 2e-16 ***

cement 13.0352 0.9702 13.435 < 2e-16 ***

blast_furnace_slag 9.1532 0.9582 9.552 < 2e-16 ***

fly_ash 5.9592 0.8878 6.712 3.58e-11 ***

water -2.5681 0.9503 -2.702 0.00703 **

superplasticizer 1.9660 0.6138 3.203 0.00141 **

coarse_aggregate 1.4780 0.8126 1.819 0.06929 .

fine_aggregate 2.2213 0.9470 2.346 0.01923 *

age 7.7032 0.3901 19.748 < 2e-16 ***

---

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

Residual commonplace error: 10.32 on 816 levels of freedom

A number of R-squared: 0.627, Adjusted R-squared: 0.6234

F-statistic: 171.5 on 8 and 816 DF, p-value: < 2.2e-16Name:

lm(system = energy ~ (.)^2, knowledge = practice)

Residuals:

Min 1Q Median 3Q Max

-24.4000 -5.6093 -0.0233 5.7754 27.8489

Coefficients:

Estimate Std. Error t worth Pr(>|t|)

(Intercept) 40.7908 0.8385 48.647 < 2e-16 ***

cement 13.2352 1.0036 13.188 < 2e-16 ***

blast_furnace_slag 9.5418 1.0591 9.009 < 2e-16 ***

fly_ash 6.0550 0.9557 6.336 3.98e-10 ***

water -2.0091 0.9771 -2.056 0.040090 *

superplasticizer 3.8336 0.8190 4.681 3.37e-06 ***

coarse_aggregate 0.3019 0.8068 0.374 0.708333

fine_aggregate 1.9617 0.9872 1.987 0.047256 *

age 14.3906 0.5557 25.896 < 2e-16 ***

cement:blast_furnace_slag 0.9863 0.5818 1.695 0.090402 .

cement:fly_ash 1.6434 0.6088 2.700 0.007093 **

cement:water -4.2152 0.9532 -4.422 1.11e-05 ***

cement:superplasticizer -2.1874 1.3094 -1.670 0.095218 .

cement:coarse_aggregate 0.2472 0.5967 0.414 0.678788

cement:fine_aggregate 0.7944 0.5588 1.422 0.155560

cement:age 4.6034 1.3811 3.333 0.000899 ***

blast_furnace_slag:fly_ash 2.1216 0.7229 2.935 0.003434 **

blast_furnace_slag:water -2.6362 1.0611 -2.484 0.013184 *

blast_furnace_slag:superplasticizer -0.6838 1.2812 -0.534 0.593676

blast_furnace_slag:coarse_aggregate -1.0592 0.6416 -1.651 0.099154 .

blast_furnace_slag:fine_aggregate 2.0579 0.5538 3.716 0.000217 ***

blast_furnace_slag:age 4.7563 1.1148 4.266 2.23e-05 ***

fly_ash:water -2.7131 0.9858 -2.752 0.006054 **

fly_ash:superplasticizer -2.6528 1.2553 -2.113 0.034891 *

fly_ash:coarse_aggregate 0.3323 0.7004 0.474 0.635305

fly_ash:fine_aggregate 2.6764 0.7817 3.424 0.000649 ***

fly_ash:age 7.5851 1.3570 5.589 3.14e-08 ***

water:superplasticizer 1.3686 0.8704 1.572 0.116289

water:coarse_aggregate -1.3399 0.5203 -2.575 0.010194 *

water:fine_aggregate -0.7061 0.5184 -1.362 0.173533

water:age 0.3207 1.2991 0.247 0.805068

superplasticizer:coarse_aggregate 1.4526 0.9310 1.560 0.119125

superplasticizer:fine_aggregate 0.1022 1.1342 0.090 0.928239

superplasticizer:age 1.9107 0.9491 2.013 0.044444 *

coarse_aggregate:fine_aggregate 1.3014 0.4750 2.740 0.006286 **

coarse_aggregate:age 0.7557 0.9342 0.809 0.418815

fine_aggregate:age 3.4524 1.2165 2.838 0.004657 **

---

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

Residual commonplace error: 8.327 on 788 levels of freedom

A number of R-squared: 0.7656, Adjusted R-squared: 0.7549

F-statistic: 71.48 on 36 and 788 DF, p-value: < 2.2e-16We additionally retailer the predictions on the check set, for later comparability.

linreg_preds1 <- fit1 %>% predict(check[, 1:8])

linreg_preds2 <- fit2 %>% predict(check[, 1:8])

evaluate <-

data.frame(

y_true = check$energy,

linreg_preds1 = linreg_preds1,

linreg_preds2 = linreg_preds2

)With no additional preprocessing required, the tfdatasets enter pipeline finally ends up good and brief:

create_dataset <- perform(df, batch_size, shuffle = TRUE) {

df <- as.matrix(df)

ds <-

tensor_slices_dataset(list(df[, 1:8], df[, 9, drop = FALSE]))

if (shuffle)

ds <- ds %>% dataset_shuffle(buffer_size = nrow(df))

ds %>%

dataset_batch(batch_size = batch_size)

}

# only one potential alternative for batch measurement ...

batch_size <- 64

train_ds <- create_dataset(practice, batch_size = batch_size)

test_ds <- create_dataset(check, batch_size = nrow(check), shuffle = FALSE)And on to mannequin creation.

The mannequin

Mannequin definition is brief as nicely, though there are some things to develop on. Don’t execute this but:

mannequin <- keras_model_sequential() %>%

layer_dense(items = 8,

input_shape = 8,

use_bias = FALSE) %>%

layer_variational_gaussian_process(

# variety of inducing factors

num_inducing_points = num_inducing_points,

# kernel for use by the wrapped Gaussian Course of distribution

kernel_provider = RBFKernelFn(),

# output form

event_shape = 1,

# preliminary values for the inducing factors

inducing_index_points_initializer = initializer_constant(as.matrix(sampled_points)),

unconstrained_observation_noise_variance_initializer =

initializer_constant(array(0.1))

)Two arguments to layer_variational_gaussian_process() want some preparation earlier than we will really run this. First, because the documentation tells us, kernel_provider ought to be

a layer occasion outfitted with an @property, which yields a

PositiveSemidefiniteKerneloccasion”.

In different phrases, the VGP layer wraps one other Keras layer that, itself, wraps or bundles collectively the TensorFlow Variables containing the kernel parameters.

We will make use of reticulate’s new PyClass constructor to meet the above necessities.

Utilizing PyClass, we will straight inherit from a Python object, including and/or overriding strategies or fields as we like – and sure, even create a Python property.

bt <- import("builtins")

RBFKernelFn <- reticulate::PyClass(

"KernelFn",

inherit = tensorflow::tf$keras$layers$Layer,

list(

`__init__` = perform(self, ...) {

kwargs <- list(...)

tremendous()$`__init__`(kwargs)

dtype <- kwargs[["dtype"]]

self$`_amplitude` = self$add_variable(initializer = initializer_zeros(),

dtype = dtype,

title = 'amplitude')

self$`_length_scale` = self$add_variable(initializer = initializer_zeros(),

dtype = dtype,

title = 'length_scale')

NULL

},

name = perform(self, x, ...) {

x

},

kernel = bt$property(

reticulate::py_func(

perform(self)

tfp$math$psd_kernels$ExponentiatedQuadratic(

amplitude = tf$nn$softplus(array(0.1) * self$`_amplitude`),

length_scale = tf$nn$softplus(array(2) * self$`_length_scale`)

)

)

)

)

)The Gaussian Course of kernel used is certainly one of a number of obtainable in tfp.math.psd_kernels (psd standing for constructive semidefinite), and possibly the one which involves thoughts first when pondering of GPR: the squared exponential, or exponentiated quadratic. The model utilized in TFP, with hyperparameters amplitude (a) and size scale (lambda), is

[k(x,x’) = 2 a exp (frac{- 0.5 (x−x’)^2}{lambda^2}) ]

Right here the attention-grabbing parameter is the size scale (lambda). When we’ve got a number of options, their size scales – as induced by the training algorithm – replicate their significance: If, for some function, (lambda) is massive, the respective squared deviations from the imply don’t matter that a lot. The inverse size scale can thus be used for computerized relevance dedication (Neal 1996).

The second factor to maintain is selecting the preliminary index factors. From experiments, the precise decisions don’t matter that a lot, so long as the information are sensibly lined. As an example, an alternate approach we tried was to assemble an empirical distribution (tfd_empirical) from the information, after which pattern from it. Right here as an alternative, we simply use an – pointless, admittedly, given the provision of pattern in R – fancy option to choose random observations from the coaching knowledge:

num_inducing_points <- 50

sample_dist <- tfd_uniform(low = 1, excessive = nrow(practice) + 1)

sample_ids <- sample_dist %>%

tfd_sample(num_inducing_points) %>%

tf$forged(tf$int32) %>%

as.numeric()

sampled_points <- practice[sample_ids, 1:8]One attention-grabbing level to notice earlier than we begin coaching: Computation of the posterior predictive parameters includes a Cholesky decomposition, which might fail if, resulting from numerical points, the covariance matrix is not constructive particular. A enough motion to absorb our case is to do all computations utilizing tf$float64:

Now we outline (for actual, this time) and run the mannequin.

mannequin <- keras_model_sequential() %>%

layer_dense(items = 8,

input_shape = 8,

use_bias = FALSE) %>%

layer_variational_gaussian_process(

num_inducing_points = num_inducing_points,

kernel_provider = RBFKernelFn(),

event_shape = 1,

inducing_index_points_initializer = initializer_constant(as.matrix(sampled_points)),

unconstrained_observation_noise_variance_initializer =

initializer_constant(array(0.1))

)

# KL weight sums to at least one for one epoch

kl_weight <- batch_size / nrow(practice)

# loss that implements the VGP algorithm

loss <- perform(y, rv_y)

rv_y$variational_loss(y, kl_weight = kl_weight)

mannequin %>% compile(optimizer = optimizer_adam(lr = 0.008),

loss = loss,

metrics = "mse")

historical past <- mannequin %>% match(train_ds,

epochs = 100,

validation_data = test_ds)

plot(historical past)

Curiously, increased numbers of inducing factors (we tried 100 and 200) didn’t have a lot affect on regression efficiency. Nor does the precise alternative of multiplication constants (0.1 and 2) utilized to the skilled kernel Variables (_amplitude and _length_scale)

make a lot of a distinction to the top end result.

Predictions

We generate predictions on the check set and add them to the knowledge.body containing the linear fashions’ predictions.

As with different probabilistic output layers, “the predictions” are in reality distributions; to acquire precise tensors we pattern from them. Right here, we common over 10 samples:

We plot the common VGP predictions in opposition to the bottom reality, along with the predictions from the easy linear mannequin (cyan) and the mannequin together with two-way interactions (violet):

ggplot(evaluate, aes(x = y_true)) +

geom_abline(slope = 1, intercept = 0) +

geom_point(aes(y = vgp_preds, colour = "VGP")) +

geom_point(aes(y = linreg_preds1, colour = "easy lm"), alpha = 0.4) +

geom_point(aes(y = linreg_preds2, colour = "lm w/ interactions"), alpha = 0.4) +

scale_colour_manual("",

values = c("VGP" = "black", "easy lm" = "cyan", "lm w/ interactions" = "violet")) +

coord_cartesian(xlim = c(min(evaluate$y_true), max(evaluate$y_true)), ylim = c(min(evaluate$y_true), max(evaluate$y_true))) +

ylab("predictions") +

theme(facet.ratio = 1)

Determine 1: Predictions vs. floor reality for linear regression (no interactions; cyan), linear regression with 2-way interactions (violet), and VGP (black).

Moreover, evaluating MSEs for the three units of predictions, we see

So, the VGP does in reality outperform each baselines. One thing else we may be concerned about: How do its predictions range? Not as a lot as we’d need, had been we to assemble uncertainty estimates from them alone. Right here we plot the ten samples we drew earlier than:

samples_df <-

data.frame(cbind(evaluate$y_true, as.matrix(yhat_samples))) %>%

collect(key = run, worth = prediction, -X1) %>%

rename(y_true = "X1")

ggplot(samples_df, aes(y_true, prediction)) +

geom_point(aes(colour = run),

alpha = 0.2,

measurement = 2) +

geom_abline(slope = 1, intercept = 0) +

theme(legend.place = "none") +

ylab("repeated predictions") +

theme(facet.ratio = 1)

Determine 2: Predictions from 10 consecutive samples from the VGP distribution.

Dialogue: Function Relevance

As talked about above, the inverse size scale can be utilized as an indicator of function significance. When utilizing the ExponentiatedQuadratic kernel alone, there’ll solely be a single size scale; in our instance, the preliminary dense layer takes of scaling (and moreover, recombining) the options.

Alternatively, we might wrap the ExponentiatedQuadratic in a FeatureScaled kernel.

FeatureScaled has a further scale_diag parameter associated to precisely that: function scaling. Experiments with FeatureScaled (and preliminary dense layer eliminated, to be “honest”) confirmed barely worse efficiency, and the realized scale_diag values different fairly a bit from run to run. For that cause, we selected to current the opposite method; nevertheless, we embrace the code for a wrapping FeatureScaled in case readers wish to experiment with this:

ScaledRBFKernelFn <- reticulate::PyClass(

"KernelFn",

inherit = tensorflow::tf$keras$layers$Layer,

list(

`__init__` = perform(self, ...) {

kwargs <- list(...)

tremendous()$`__init__`(kwargs)

dtype <- kwargs[["dtype"]]

self$`_amplitude` = self$add_variable(initializer = initializer_zeros(),

dtype = dtype,

title = 'amplitude')

self$`_length_scale` = self$add_variable(initializer = initializer_zeros(),

dtype = dtype,

title = 'length_scale')

self$`_scale_diag` = self$add_variable(

initializer = initializer_ones(),

dtype = dtype,

form = 8L,

title = 'scale_diag'

)

NULL

},

name = perform(self, x, ...) {

x

},

kernel = bt$property(

reticulate::py_func(

perform(self)

tfp$math$psd_kernels$FeatureScaled(

kernel = tfp$math$psd_kernels$ExponentiatedQuadratic(

amplitude = tf$nn$softplus(array(1) * self$`_amplitude`),

length_scale = tf$nn$softplus(array(2) * self$`_length_scale`)

),

scale_diag = tf$nn$softplus(array(1) * self$`_scale_diag`)

)

)

)

)

)Lastly, if all you cared about was prediction efficiency, you can use FeatureScaled and hold the preliminary dense layer all the identical. However in that case, you’d in all probability use a neural community – not a Gaussian Course of – anyway …

Thanks for studying!

MacKay, David J. C. 2002. Info Principle, Inference & Studying Algorithms. New York, NY, USA: Cambridge College Press.

Neal, Radford M. 1996. Bayesian Studying for Neural Networks. Berlin, Heidelberg: Springer-Verlag.

Rasmussen, Carl Edward, and Christopher Okay. I. Williams. 2005. Gaussian Processes for Machine Studying (Adaptive Computation and Machine Studying). The MIT Press.