Accelerate your learning towards AWS Certification exams with automated quiz generation using Amazon SageMaker foundation models

Getting AWS Certified can help you propel your career, whether you’re looking to find a new role, showcase your skills to take on a new project, or become your team’s go-to expert. And because AWS Certification exams are created by experts in the relevant role or technical area, preparing for one of these exams helps you build the required skills identified by skilled practitioners in the field.

Reading the FAQ pages of the AWS services relevant to your certification exam is important for acquiring a deeper understanding of each service. However, this can take quite some time. Reading and understanding the FAQs of even one service can take half a day. For example, the Amazon SageMaker FAQ contains about 33 pages (printed) of content on SageMaker alone.

Wouldn’t it be an easier and more fun learning experience if you could use a system to test yourself on the AWS service FAQ pages? Actually, you can develop such a system using state-of-the-art language models and a few lines of Python.

In this post, we present a comprehensive guide to deploying a multiple-choice quiz solution for the FAQ pages of any AWS service, based on the AI21 Jurassic-2 Jumbo Instruct foundation model on Amazon SageMaker JumpStart.

Large language models

In recent years, language models have seen a huge surge in size and popularity. In 2018, BERT-large made its debut with its 340 million parameters and innovative transformer architecture, setting the benchmark for performance on NLP tasks. In a few short years, the state of the art in terms of model size has ballooned by over 500 times; OpenAI’s GPT-3 with 175 billion parameters, BLOOM with 176 billion parameters, and AI21 Jurassic-2 Jumbo Instruct with 178 billion parameters are just three examples of large language models (LLMs) raising the bar on natural language processing (NLP) accuracy.

SageMaker foundation models

SageMaker provides a range of models from popular model hubs including Hugging Face, PyTorch Hub, and TensorFlow Hub, and proprietary ones from AI21, Cohere, and LightOn, which you can access within your machine learning (ML) development workflow in SageMaker. Recent advances in ML have given rise to a new class of models known as foundation models, which have billions of parameters and are trained on massive amounts of data. These foundation models can be adapted to a wide range of use cases, such as text summarization, generating digital art, and language translation. Because these models can be expensive to train, customers want to use existing pre-trained foundation models and fine-tune them as needed, rather than train these models themselves. SageMaker provides a curated list of models that you can choose from on the SageMaker console.

With JumpStart, you can find foundation models from different providers, enabling you to get started with foundation models quickly. You can review model characteristics and usage terms, and try out these models using a test UI widget. When you’re ready to use a foundation model at scale, you can do so easily without leaving SageMaker by using pre-built notebooks from model providers. Your data, whether used for evaluating or using the model at scale, is never shared with third parties because the models are hosted and deployed on AWS.

AI21 Jurassic-2 Jumbo Instruct

Jurassic-2 Jumbo Instruct is an LLM by AI21 Labs that can be applied to any language comprehension or generation task. It’s optimized to follow natural language instructions and context, so there is no need to provide it with any examples. The endpoint comes pre-loaded with the model and ready to serve queries via an easy-to-use API and Python SDK, so you can hit the ground running. Jurassic-2 Jumbo Instruct is a top performer on HELM, particularly in tasks related to reading and writing.

Solution overview

In the following sections, we go through the steps to test the Jurassic-2 Jumbo Instruct model in SageMaker:

- Choose the Jurassic-2 Jumbo Instruct model on the SageMaker console.

- Evaluate the model using the playground.

- Use a notebook associated with the foundation model to deploy it in your environment.

Access Jurassic-2 Jumbo Instruct through the SageMaker console

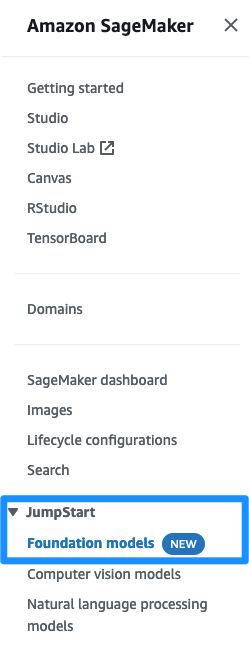

The first step is to log in to the SageMaker console. Under JumpStart in the navigation pane, choose Foundation models to request access to the model list.

After your account is allow listed, you can see a list of models on this page and search for the Jurassic-2 Jumbo Instruct model.

Evaluate the Jurassic-2 Jumbo Instruct model in the model playground

On the AI21 Jurassic-2 Jumbo Instruct listing, choose View Model. You will see a description of the model and the tasks that you can perform. Read through the EULA for the model before proceeding.

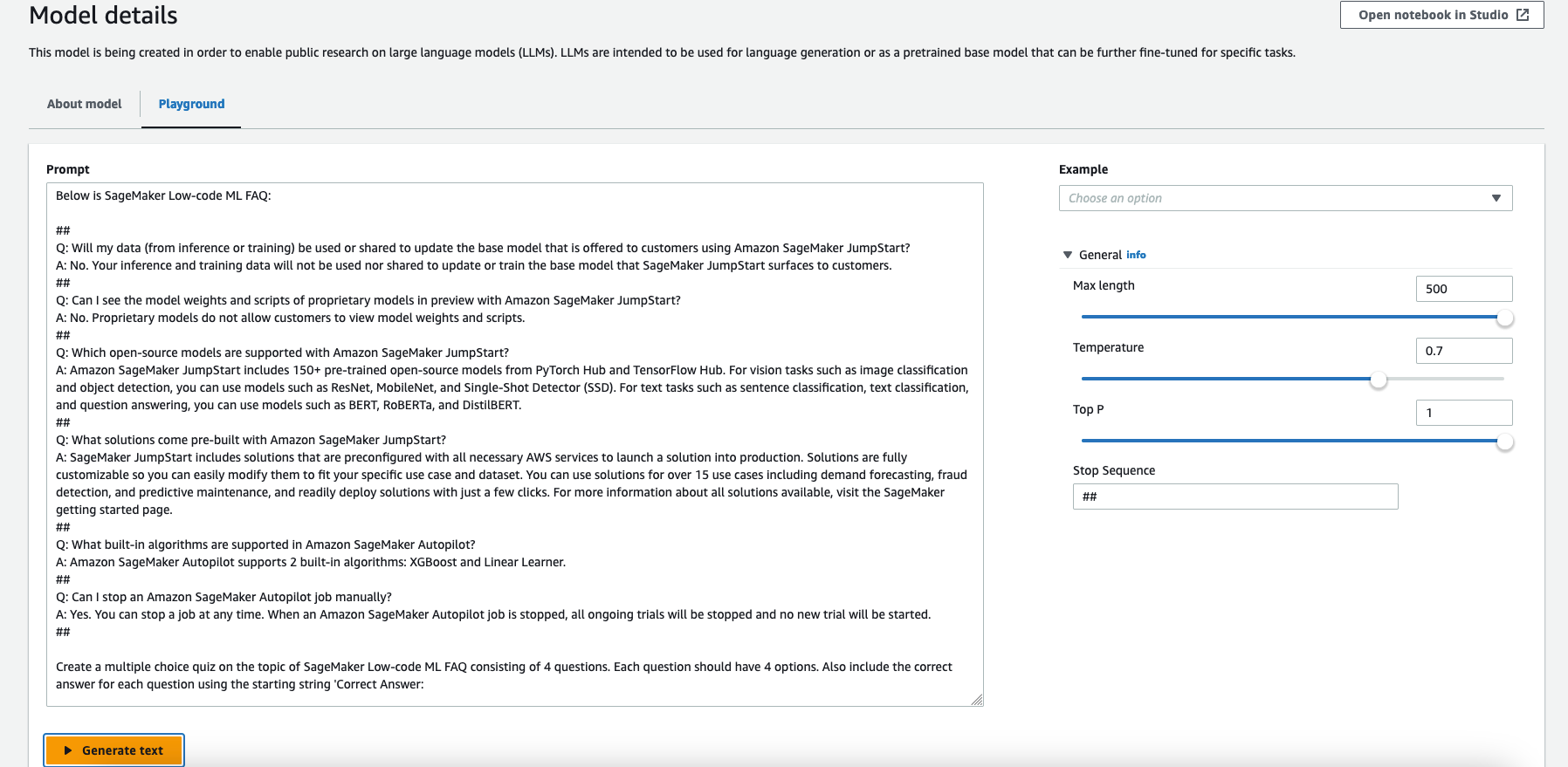

Let’s first try out the model to generate a test based on the SageMaker FAQ page. Navigate to the Playground tab.

On the Playground tab, you can provide sample prompts to the Jurassic-2 Jumbo Instruct model and view the output.

Note that you can use a maximum of 500 tokens. We set the Max length to 500, which is the maximum number of tokens to generate. This model has an 8,192-token context window (the length of the prompt plus the completion should be at most 8,192 tokens).
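The context-window constraint is simple arithmetic: the prompt tokens plus the requested completion tokens must not exceed the window. The following is a minimal sketch, assuming you already have a token count for your prompt. The function names are illustrative and are not part of any AI21 or SageMaker API.

```python
CONTEXT_WINDOW = 8192  # tokens, as stated above for this model


def fits_context(prompt_tokens: int, max_completion_tokens: int,
                 window: int = CONTEXT_WINDOW) -> bool:
    """Return True if the prompt plus the completion fits in the window."""
    return prompt_tokens + max_completion_tokens <= window


def max_prompt_tokens(max_completion_tokens: int,
                      window: int = CONTEXT_WINDOW) -> int:
    """Largest prompt (in tokens) that still leaves room for the completion."""
    return max(window - max_completion_tokens, 0)
```

For example, with Max length set to 500, `max_prompt_tokens(500)` leaves 7,692 tokens of the window for the prompt.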

To make it easier to see the prompt, you can enlarge the Prompt box.

Because we can use a maximum of 500 tokens, we take a small portion of the Amazon SageMaker FAQs page, the Low-code ML section, for our test prompt.

We use the following prompt:

Prompt engineering is an iterative process. You should be clear and specific, and give the model time to think.

Here we specified the context with ## as a stop sequence, which signals the model to stop generating after this character or string is generated. It’s useful when using a few-shot prompt.

Next, we’re clear and very specific in our prompt, asking for a multiple-choice quiz consisting of four questions with four options each. We ask the model to include the correct answer for each question using the starting string 'Correct Answer:' so we can parse it later using Python:
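A prompt with this structure could be assembled in Python roughly as follows. This is a sketch under stated assumptions: the instruction wording, the placement of `##` between the context and the instructions, and the helper name `build_quiz_prompt` are all illustrative and are not the exact prompt used in this post.

```python
def build_quiz_prompt(context: str, num_questions: int = 4,
                      num_options: int = 4) -> str:
    """Assemble a quiz prompt: FAQ context, a '##' separator, then instructions.

    The wording below is a hypothetical example, not the original prompt.
    """
    instructions = (
        f"Create a multiple-choice quiz on the topic above, consisting of "
        f"{num_questions} questions. Each question should have {num_options} "
        f"options. Also include the correct answer for each question using "
        f"the starting string 'Correct Answer:'."
    )
    return f"{context.strip()}\n##\n{instructions}\n"
```

Keeping the question and option counts as parameters makes it easy to ask for a longer quiz when a larger prompt budget is available.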

A well-designed prompt can make the model more creative and generalized so that it can easily adapt to new tasks. Prompts can also help incorporate domain knowledge on specific tasks and improve interpretability. Prompt engineering can greatly improve the performance of zero-shot and few-shot learning models. Creating high-quality prompts requires careful consideration of the task at hand, as well as a deep understanding of the model’s strengths and limitations.

We don’t cover this wide area further in this post.

Copy the prompt and enter it in the Prompt box, then choose Generate text.

This sends the prompt to the Jurassic-2 Jumbo Instruct model for inference. Note that experimenting in the playground is free.

Also keep in mind that despite the cutting-edge nature of LLMs, they are still prone to biases, errors, and hallucinations.

After reading the model output thoroughly and carefully, we can see that the model generated quite a good quiz!

After you’ve played with the model, it’s time to use the notebook and deploy it as an endpoint in your environment. We use a small Python function to parse the output and simulate an interactive test.

Deploy the Jurassic-2 Jumbo Instruct foundation model from a notebook

You can use the following sample notebook to deploy Jurassic-2 Jumbo Instruct using SageMaker. Note that this example uses an ml.p4d.24xlarge instance. If the default limit for this instance type in your AWS account is 0, you need to request a limit increase for this GPU instance.

Let’s create the endpoint using SageMaker inference. First, we set the required variables, then we deploy the model from the model package:
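A minimal sketch of this step with the SageMaker Python SDK is shown below. It is not the sample notebook’s code: the function name is hypothetical, the model package ARN must come from your own subscription to the model, and `get_execution_role()` is assumed to run inside a SageMaker notebook or another environment with a SageMaker execution role.

```python
def deploy_from_model_package(model_package_arn: str, endpoint_name: str,
                              instance_type: str = "ml.p4d.24xlarge",
                              instance_count: int = 1):
    """Deploy a model package to a real-time SageMaker endpoint."""
    # Imported lazily so the function can be defined without the SDK installed.
    import sagemaker
    from sagemaker import ModelPackage, get_execution_role

    session = sagemaker.Session()
    role = get_execution_role()  # assumes a SageMaker execution role exists

    model = ModelPackage(
        role=role,
        model_package_arn=model_package_arn,  # from your model subscription
        sagemaker_session=session,
    )
    # Creates the endpoint; this call can take several minutes to return.
    model.deploy(
        initial_instance_count=instance_count,
        instance_type=instance_type,
        endpoint_name=endpoint_name,
    )
    return model
```

Returning the `ModelPackage` object keeps a handle that can be reused later when cleaning up.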

After the endpoint is deployed, you can run inference queries against the model.

After the model is deployed, you can interact with the deployed endpoint using the following code snippet:
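One way to query the endpoint is through the `sagemaker-runtime` client in boto3, sketched below. The request fields (`prompt`, `maxTokens`, `temperature`, `numResults`, `stopSequences`) and the response path (`completions[0].data.text`) are assumptions based on AI21’s completion API conventions, so verify them against the model’s documentation. The helper names are hypothetical.

```python
import json


def build_payload(prompt: str, max_tokens: int = 500) -> dict:
    """Request body in the shape assumed for Jurassic-2 endpoints."""
    return {
        "prompt": prompt,
        "maxTokens": max_tokens,
        "temperature": 0,
        "numResults": 1,
        "stopSequences": ["##"],
    }


def extract_text(response_body: dict) -> str:
    """Pull the generated text out of the assumed response shape."""
    return response_body["completions"][0]["data"]["text"]


def generate(endpoint_name: str, prompt: str, max_tokens: int = 500) -> str:
    """Send a prompt to the endpoint and return the completion text."""
    import boto3  # lazy import: only needed when actually calling AWS

    runtime = boto3.client("sagemaker-runtime")
    response = runtime.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=json.dumps(build_payload(prompt, max_tokens)),
    )
    return extract_text(json.loads(response["Body"].read()))
```

Separating payload construction and response parsing from the network call makes both easy to check without a live endpoint.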

With the Jurassic-2 Jumbo Instruct foundation model deployed on an ml.p4d.24xlarge SageMaker endpoint, you can use a prompt with 4,096 tokens. You can take the same prompt we used in the playground and add many more questions. In this example, we added the FAQ’s entire Low-code ML section as context to the prompt.

We can see the output of the model, which generated a multiple-choice quiz with four questions and four options for each question.

Now you can develop a Python function to parse the output and create an interactive multiple-choice quiz.

It’s quite straightforward to develop such a function with a few lines of code. You can parse the answer easily because the model created a line with “Correct Answer: ” for each question, exactly as we requested in the prompt. We don’t provide the Python code for the quiz generation in the scope of this post.
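For illustration only, a minimal sketch of such a parser could look like the following. It is not the function used in this post. It assumes each question in the model output is laid out as one question line, followed by option lines, followed by a line starting with `Correct Answer:`; real model output may need more tolerant handling.

```python
ANSWER_PREFIX = "Correct Answer:"


def parse_quiz(text: str) -> list:
    """Split model output into questions, options, and correct answers.

    Assumed layout per question: a question line, option lines, then a
    line starting with 'Correct Answer:'.
    """
    questions, block = [], []
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue  # ignore blank lines between questions
        if line.startswith(ANSWER_PREFIX):
            if block:
                questions.append({
                    "question": block[0],
                    "options": block[1:],
                    "answer": line[len(ANSWER_PREFIX):].strip(),
                })
            block = []  # start collecting the next question
        else:
            block.append(line)
    return questions
```

Each parsed item is a plain dictionary with `question`, `options`, and `answer` keys, which is enough to drive a simple interactive loop.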

Run the quiz in the notebook

Using the Python function we created earlier and the output from the Jurassic-2 Jumbo Instruct foundation model, we run the interactive quiz in the notebook.

You can see I answered three out of four questions correctly and got a 75% grade. Perhaps I need to read the SageMaker FAQ a few more times!
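An interactive loop with grading might be sketched as follows. The quiz structure (a list of dictionaries with `question`, `options`, and `answer` keys) and the function name are assumptions for illustration; the `ask` parameter defaults to `input()` and can be swapped out for testing.

```python
def run_quiz(quiz, ask=input) -> float:
    """Ask each question in turn and return the percentage answered correctly.

    `quiz` is a list of dicts with 'question', 'options', and 'answer' keys
    (an assumed structure).
    """
    if not quiz:
        return 0.0
    correct = 0
    for item in quiz:
        print(item["question"])
        for option in item["options"]:
            print("  " + option)
        reply = ask("Your answer: ").strip().lower()
        expected = item["answer"].strip().lower()
        # Accept the full answer text or any leading part of it,
        # such as the option letter.
        if reply and expected.startswith(reply):
            correct += 1
    return 100.0 * correct / len(quiz)
```

Three correct answers out of four yields `75.0`, matching the grade described above.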

Clean up

After you’ve tried out the endpoint, make sure to remove the SageMaker inference endpoint and the model to prevent any charges:
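A minimal cleanup sketch using the boto3 SageMaker client is shown below. The function name is hypothetical, and the endpoint, endpoint configuration, and model names are whatever you used at deployment time. The client is passed in as a parameter so the function can be exercised without AWS access.

```python
def delete_endpoint_resources(sm_client, endpoint_name: str,
                              endpoint_config_name: str,
                              model_name: str) -> None:
    """Delete the endpoint, its configuration, and the model, in that order."""
    sm_client.delete_endpoint(EndpointName=endpoint_name)
    sm_client.delete_endpoint_config(EndpointConfigName=endpoint_config_name)
    sm_client.delete_model(ModelName=model_name)
```

In a notebook, you would typically call it as `delete_endpoint_resources(boto3.client("sagemaker"), ...)` with your own resource names.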

Conclusion

In this post, we showed you how you can test and use AI21’s Jurassic-2 Jumbo Instruct model using SageMaker to build an automated quiz generation system. This was achieved using a rather simple prompt with the text of a publicly available SageMaker FAQ page embedded, plus a few lines of Python code.

Similar to the example in this post, you can customize a foundation model for your business with just a few labeled examples. Because all the data is encrypted and doesn’t leave your AWS account, you can trust that your data will remain private and confidential.

Request access to try out the foundation model in SageMaker today, and let us know your feedback!

About the Author

Eitan Sela is a Machine Learning Specialist Solutions Architect with Amazon Web Services. He works with AWS customers to provide guidance and technical assistance, helping them build and operate machine learning solutions on AWS. In his spare time, Eitan enjoys jogging and reading the latest machine learning articles.
