Announcing DataPerf’s 2023 challenges – Google AI Blog

Machine learning (ML) offers tremendous potential, from diagnosing cancer to engineering safe self-driving cars to amplifying human productivity. To realize this potential, however, organizations need ML solutions to be reliable, with ML solution development that is predictable and tractable. The key to both is a deeper understanding of ML data: how to engineer training datasets that produce high quality models, and test datasets that deliver accurate indicators of how close we are to solving the target problem.

The process of creating high quality datasets is complicated and error-prone, from the initial selection and cleaning of raw data, to labeling the data and splitting it into training and test sets. Some experts believe that the majority of the effort in designing an ML system is actually the sourcing and preparing of data. Each step can introduce issues and biases. Even many of the standard datasets we use today have been shown to have mislabeled data that can destabilize established ML benchmarks. Despite the fundamental importance of data to ML, it is only now beginning to receive the same level of attention that models and learning algorithms have enjoyed for the past decade.

Towards this goal, we are introducing DataPerf, a set of new data-centric ML challenges to advance the state of the art in data selection, preparation, and acquisition technologies, designed and built through a broad collaboration across industry and academia. The initial version of DataPerf consists of four challenges focused on three common data-centric tasks across three application domains: vision, speech, and natural language processing (NLP). In this blog post, we outline the dataset development bottlenecks confronting researchers and discuss the role of benchmarks and leaderboards in incentivizing researchers to address these challenges. We invite innovators in academia and industry who seek to measure and validate breakthroughs in data-centric ML to demonstrate the power of their algorithms and techniques to create and improve datasets through these benchmarks.

Data is the new bottleneck for ML

Data is the new code: it is the training data that determines the maximum possible quality of an ML solution. The model only determines the degree to which that maximum quality is realized; in a sense, the model is a lossy compiler for the data. Though high-quality training datasets are vital to continued advancement in the field of ML, much of the data on which the field relies today is nearly a decade old (e.g., ImageNet or LibriSpeech) or scraped from the web with very limited filtering of content (e.g., LAION or The Pile).

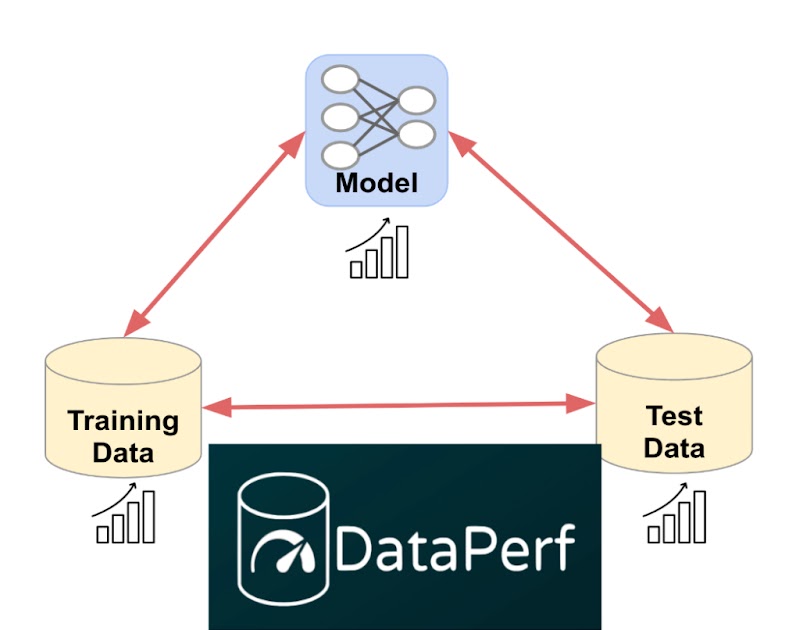

Despite the importance of data, ML research to date has been dominated by a focus on models. Before modern deep neural networks (DNNs), there were no ML models sufficient to match human behavior on many simple tasks. This starting condition led to a model-centric paradigm in which (1) the training dataset and test dataset were “frozen” artifacts and the goal was to develop a better model, and (2) the test dataset was selected randomly from the same pool of data as the training set for statistical reasons. Unfortunately, freezing the datasets ignored the ability to improve training accuracy and efficiency with better data, and using test sets drawn from the same pool as the training data conflated fitting that data well with actually solving the underlying problem.

Because we are now developing and deploying ML solutions for increasingly sophisticated tasks, we need to engineer test sets that fully capture real-world problems, and training sets that, in combination with advanced models, deliver effective solutions. We need to shift from today’s model-centric paradigm to a data-centric paradigm in which we recognize that, for the majority of ML developers, creating high quality training and test data will be a bottleneck.

Shifting from today’s model-centric paradigm to a data-centric paradigm enabled by quality datasets and data-centric algorithms like those measured in DataPerf.

Enabling ML developers to create better training and test datasets will require a deeper understanding of ML data quality and the development of algorithms, tools, and methodologies for optimizing it. We can begin by recognizing common challenges in dataset creation and developing performance metrics for algorithms that address those challenges. For instance:

- Data selection: Often, we have a larger pool of available data than we can label or train on effectively. How do we choose the most important data for training our models?

- Data cleaning: Human labelers sometimes make mistakes, and ML developers can’t afford to have experts check and correct all labels. How can we select the data most likely to be mislabeled for correction?
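One simple heuristic for the data cleaning question can be sketched as follows, assuming a trained model’s predicted class probabilities are available for every training example (ideally from a model that did not see that example during training, e.g. via cross-validation). Examples whose given label the model finds least plausible are flagged first. This is a common baseline, not DataPerf’s prescribed method; the function `rank_for_relabeling`, its inputs, and the toy numbers are hypothetical.

```python
from typing import List, Sequence


def rank_for_relabeling(
    probs: Sequence[Sequence[float]],
    given_labels: Sequence[int],
    budget: int,
) -> List[int]:
    """Hypothetical helper: return indices of up to `budget` examples whose
    given label has the lowest predicted probability under the model."""
    # Suspicion score: 1 - p(given label). Higher means more likely mislabeled.
    scores = [1.0 - p[y] for p, y in zip(probs, given_labels)]
    # Most suspicious first; ties are broken by index so the order is stable.
    order = sorted(range(len(scores)), key=lambda i: (-scores[i], i))
    return order[:budget]


# Toy example with made-up model outputs for a two-class problem.
probs = [[0.9, 0.1], [0.2, 0.8], [0.6, 0.4], [0.05, 0.95]]
labels = [0, 0, 1, 1]
print(rank_for_relabeling(probs, labels, budget=2))  # → [1, 2]
```

Here examples 1 and 2 are sent for relabeling because the model assigns only 0.2 and 0.4 probability to their given labels. The budget parameter reflects the constraint in the text: experts can only check a limited number of labels.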

We can also create incentives that reward good dataset engineering. We anticipate that high quality training data, which has been carefully selected and labeled, will become a valuable product in many industries, but we presently lack a way to assess the relative value of different datasets without actually training on the datasets in question. How do we solve this problem and enable quality-driven “data acquisition”?

DataPerf: The first leaderboard for data

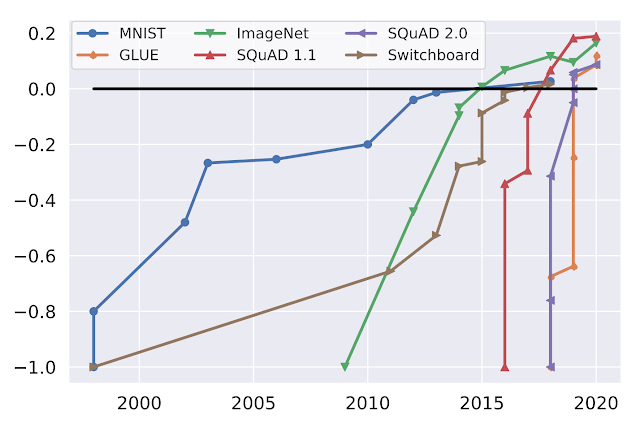

We believe good benchmarks and leaderboards can drive rapid progress in data-centric technology. ML benchmarks in academia have been essential to stimulating progress in the field. Consider the following graph, which shows progress on popular ML benchmarks (MNIST, ImageNet, SQuAD, GLUE, Switchboard) over time:

Performance over time for popular benchmarks, normalized with initial performance at minus one and human performance at zero. (Source: Douwe, et al. 2021; used with permission.)
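The normalization in the caption is a linear rescaling that puts very different metrics on one axis. A minimal sketch, assuming the mapping sends a benchmark’s first reported score to -1 and human performance to 0 (the caption does not spell out the exact formula), is (score - human) / (human - initial). The function name `normalize_score` and the numbers below are hypothetical.

```python
def normalize_score(score: float, initial: float, human: float) -> float:
    """Hypothetical helper: rescale a raw benchmark score so that the
    initial score maps to -1 and human performance maps to 0."""
    if human == initial:
        raise ValueError("initial and human performance must differ")
    # Distance from human performance, measured in units of the original
    # gap between the initial score and human performance.
    return (score - human) / (human - initial)


# Made-up accuracy numbers (higher is better).
print(normalize_score(75.0, initial=60.0, human=90.0))  # → -0.5
# Made-up error-rate numbers (lower is better): the same formula still works.
print(normalize_score(17.5, initial=30.0, human=5.0))  # → -0.5
```

Because the denominator carries the sign of the gap, the same formula handles metrics where higher is better (accuracy) and where lower is better (word error rate), and a value above 0 means the benchmark score exceeds the human reference.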

Online leaderboards provide official validation of benchmark results and catalyze communities intent on optimizing those benchmarks. For instance, Kaggle has over 10 million registered users. The MLPerf official benchmark results have helped drive an over 16x improvement in training performance on key benchmarks.

DataPerf is the first community and platform to build leaderboards for data benchmarks, and we hope to have an analogous impact on research and development for data-centric ML. The initial version of DataPerf consists of leaderboards for four challenges focused on three data-centric tasks (data selection, cleaning, and acquisition) across three application domains (vision, speech, and NLP):

- Training data selection (Vision): Design a data selection strategy that chooses the best training set from a large candidate pool of weakly labeled training images.

- Training data selection (Speech): Design a data selection strategy that chooses the best training set from a large candidate pool of automatically extracted clips of spoken words.

- Training data cleaning (Vision): Design a data cleaning strategy that chooses samples to relabel from a “noisy” training set where some of the labels are incorrect.

- Training dataset evaluation (NLP): Quality datasets can be expensive to construct and are becoming valuable commodities. Design a data acquisition strategy that chooses which training dataset to “buy” based on limited information about the data.
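For the two training data selection challenges, a submission is a strategy that picks a subset of a candidate pool. As a minimal illustrative baseline, assuming each candidate comes with a weak label and a confidence score for that label, one could keep the most confident candidates per class so the selected set stays class-balanced. This is not the DataPerf reference approach (the test models and rules are defined in each challenge’s design documents); `select_training_set` and its inputs are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def select_training_set(
    weak_labels: Sequence[int],
    confidence: Sequence[float],
    per_class: int,
) -> List[int]:
    """Hypothetical baseline: keep up to `per_class` candidates for each
    weak label, preferring candidates with the highest confidence."""
    # Group candidate indices by their weak label.
    by_class: Dict[int, List[int]] = defaultdict(list)
    for idx, label in enumerate(weak_labels):
        by_class[label].append(idx)

    selected: List[int] = []
    for label in sorted(by_class):
        # Most confident first; ties are broken by index for determinism.
        ranked = sorted(by_class[label], key=lambda i: (-confidence[i], i))
        selected.extend(ranked[:per_class])
    return sorted(selected)


# Toy pool of five candidates with made-up weak labels and confidences.
print(select_training_set([0, 1, 0, 1, 0], [0.2, 0.9, 0.8, 0.4, 0.5], per_class=2))
# → [1, 2, 3, 4]
```

Capping the count per class is one design choice among many: it guards against a pool where one class dominates, at the cost of possibly keeping low-confidence examples from rare classes.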

For each challenge, the DataPerf website provides design documents that define the problem, test model(s), quality target, and rules and guidelines on how to run the code and submit. The live leaderboards are hosted on the Dynabench platform, which also provides an online evaluation framework and submission tracker. Dynabench is an open-source project, hosted by the MLCommons Association, focused on enabling data-centric leaderboards for both training and test data and for data-centric algorithms.

How to get involved

We are part of a community of ML researchers, data scientists, and engineers who strive to improve data quality. We invite innovators in academia and industry to measure and validate data-centric algorithms and techniques to create and improve datasets through the DataPerf benchmarks. The deadline for the first round of challenges is May 26th, 2023.

Acknowledgements

The DataPerf benchmarks were created over the last year by engineers and scientists from: Coactive.ai, Eidgenössische Technische Hochschule (ETH) Zurich, Google, Harvard University, Meta, ML Commons, and Stanford University. In addition, this would not have been possible without the support of DataPerf working group members from Carnegie Mellon University, Digital Prism Advisors, Factored, Hugging Face, Institute for Human and Machine Cognition, Landing.ai, San Diego Supercomputing Center, Thomson Reuters Lab, and TU Eindhoven.