a pretrained visible language mannequin for describing multi-event movies – Google AI Weblog

Movies have develop into an more and more necessary a part of our day by day lives, spanning fields reminiscent of leisure, schooling, and communication. Understanding the content material of movies, nevertheless, is a difficult job as movies typically include a number of occasions occurring at totally different time scales. For instance, a video of a musher hitching up canine to a canine sled earlier than all of them race away entails an extended occasion (the canine pulling the sled) and a brief occasion (the canine being hitched to the sled). One method to spur analysis in video understanding is through the duty of dense video captioning, which consists of temporally localizing and describing all occasions in a minutes-long video. This differs from single image captioning and standard video captioning, which consists of describing brief movies with a single sentence.

Dense video captioning programs have large purposes, reminiscent of making movies accessible to individuals with visible or auditory impairments, routinely producing chapters for videos, or bettering the search of video moments in massive databases. Present dense video captioning approaches, nevertheless, have a number of limitations — for instance, they typically include extremely specialised task-specific parts, which make it difficult to combine them into powerful foundation models. Moreover, they’re typically educated completely on manually annotated datasets, that are very troublesome to acquire and therefore aren’t a scalable resolution.

On this submit, we introduce “Vid2Seq: Large-Scale Pretraining of a Visual Language Model for Dense Video Captioning”, to look at CVPR 2023. The Vid2Seq structure augments a language model with particular time tokens, permitting it to seamlessly predict occasion boundaries and textual descriptions in the identical output sequence. To be able to pre-train this unified mannequin, we leverage unlabeled narrated videos by reformulating sentence boundaries of transcribed speech as pseudo-event boundaries, and utilizing the transcribed speech sentences as pseudo-event captions. The ensuing Vid2Seq mannequin pre-trained on thousands and thousands of narrated movies improves the state-of-the-art on a wide range of dense video captioning benchmarks together with YouCook2, ViTT and ActivityNet Captions. Vid2Seq additionally generalizes nicely to the few-shot dense video captioning setting, the video paragraph captioning job, and the usual video captioning job. Lastly, we’ve got additionally launched the code for Vid2Seq here.

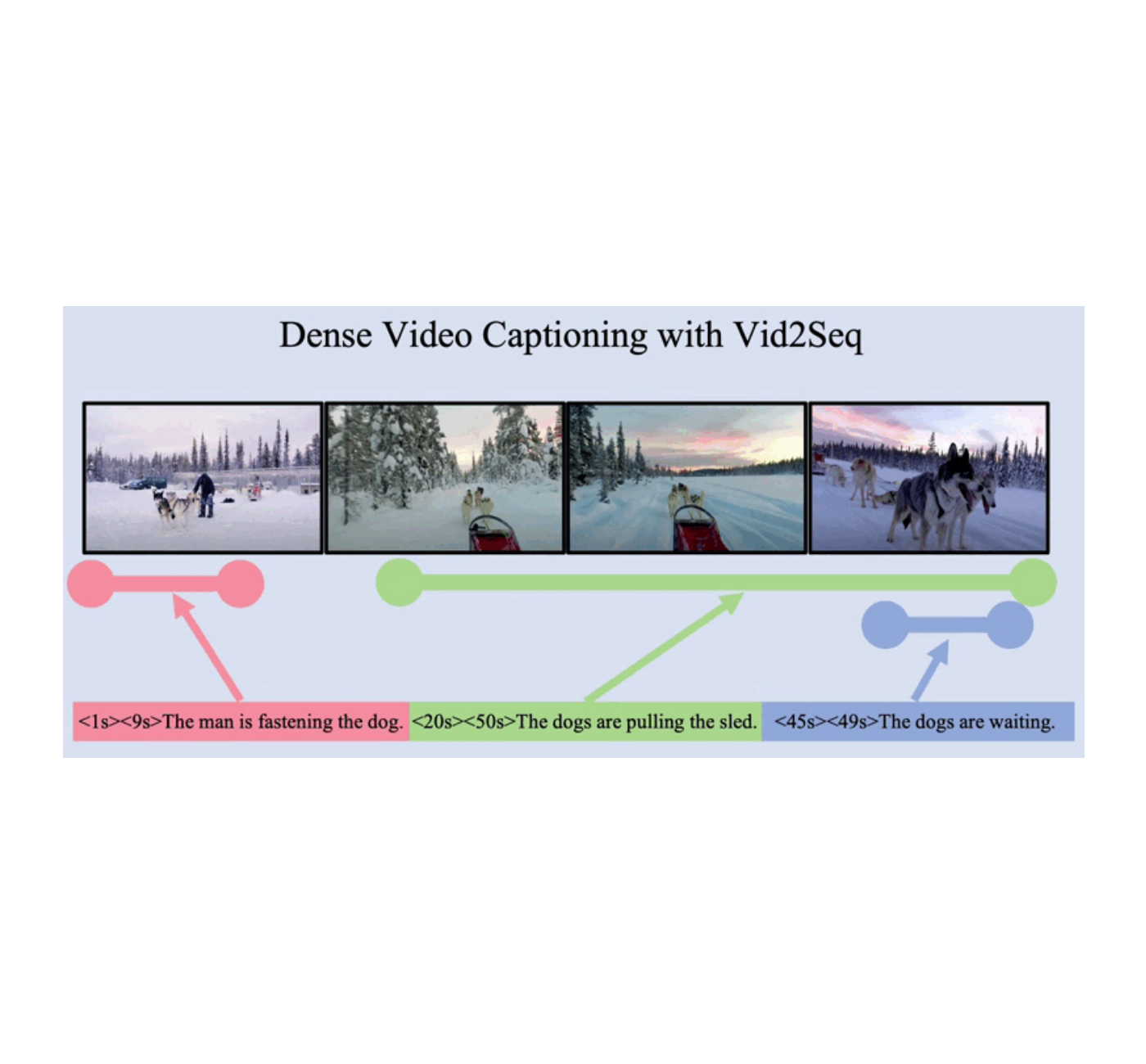

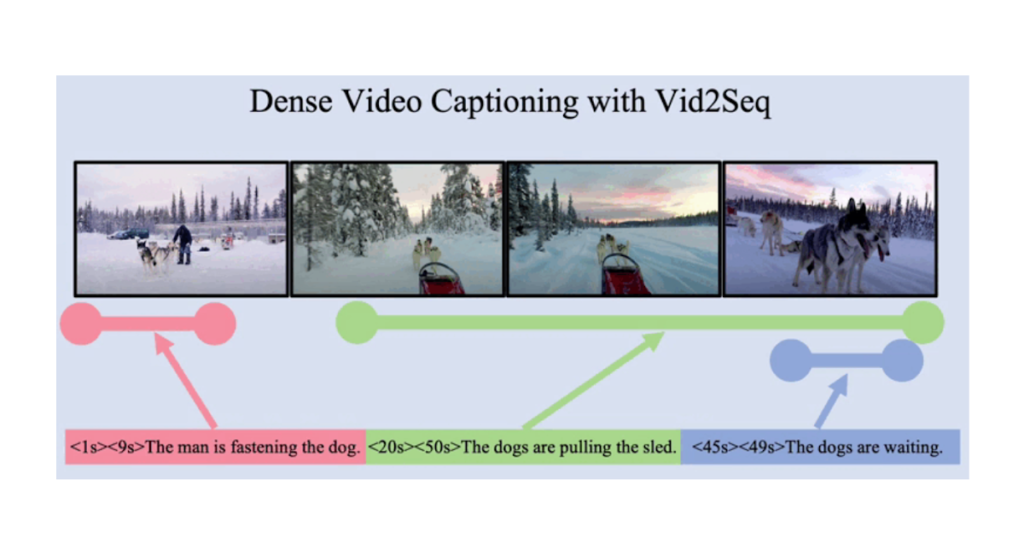

|

| Vid2Seq is a visible language mannequin that predicts dense occasion captions along with their temporal grounding in a video by producing a single sequence of tokens. |

A visible language mannequin for dense video captioning

Multimodal transformer architectures have improved the state-of-the-art on a variety of video duties, reminiscent of action recognition. Nonetheless it isn’t simple to adapt such an structure to the complicated job of collectively localizing and captioning occasions in minutes-long movies.

For a normal overview of how we obtain this, we increase a visible language mannequin with particular time tokens (like text tokens) that signify discretized timestamps within the video, much like Pix2Seq within the spatial area. Given visible inputs, the ensuing Vid2Seq mannequin can each take as enter and generate sequences of textual content and time tokens. First, this permits the Vid2Seq mannequin to know the temporal data of the transcribed speech enter, which is solid as a single sequence of tokens. Second, this permits Vid2Seq to collectively predict dense occasion captions and temporally floor them within the video whereas producing a single sequence of tokens.

The Vid2Seq structure features a visible encoder and a textual content encoder, which encode the video frames and the transcribed speech enter, respectively. The ensuing encodings are then forwarded to a textual content decoder, which autoregressively predicts the output sequence of dense occasion captions along with their temporal localization within the video. The structure is initialized with a powerful visual backbone and a strong language model.

Giant-scale pre-training on untrimmed narrated movies

Because of the dense nature of the duty, the guide assortment of annotations for dense video captioning is especially costly. Therefore we pre-train the Vid2Seq mannequin utilizing unlabeled narrated videos, that are simply out there at scale. Particularly, we use the YT-Temporal-1B dataset, which incorporates 18 million narrated movies masking a variety of domains.

We use transcribed speech sentences and their corresponding timestamps as supervision, that are solid as a single sequence of tokens. We pre-train Vid2Seq with a generative goal that teaches the decoder to foretell the transcribed speech sequence given visible inputs solely, and a denoising goal that encourages multimodal studying by requiring the mannequin to foretell masked tokens given a loud transcribed speech sequence and visible inputs. Particularly, noise is added to the speech sequence by randomly masking out spans of tokens.

|

| Vid2Seq is pre-trained on unlabeled narrated movies with a generative goal (high) and a denoising goal (backside). |

Outcomes on downstream dense video captioning benchmarks

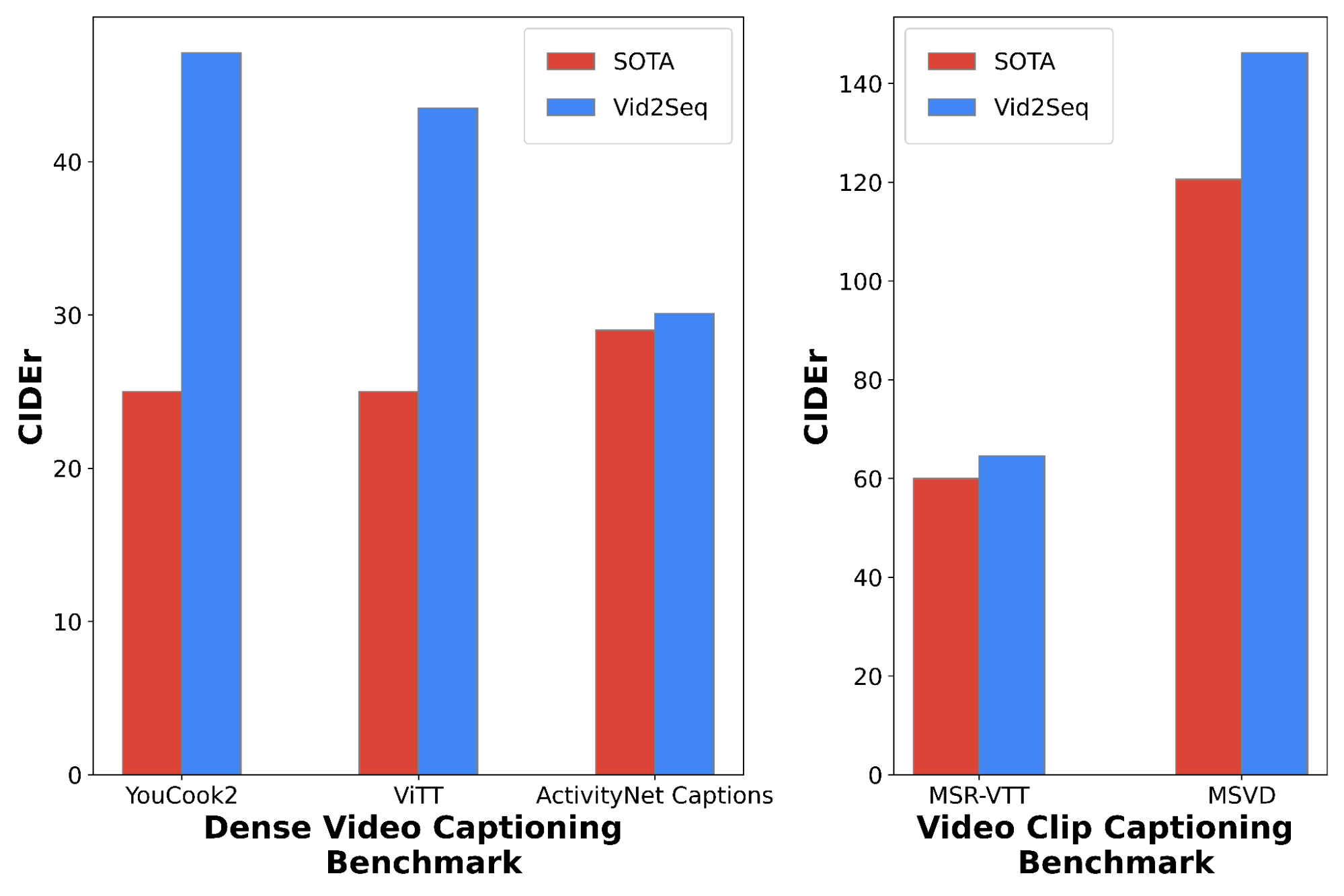

The ensuing pre-trained Vid2Seq mannequin may be fine-tuned on downstream duties with a easy most probability goal utilizing teacher forcing (i.e., predicting the subsequent token given earlier ground-truth tokens). After fine-tuning, Vid2Seq notably improves the state-of-the-art on three commonplace downstream dense video captioning benchmarks (ActivityNet Captions, YouCook2 and ViTT) and two video clip captioning benchmarks (MSR-VTT, MSVD). In our paper we offer extra ablation research, qualitative outcomes, in addition to leads to the few-shot settings and within the video paragraph captioning job.

|

| Comparability to state-of-the-art strategies for dense video captioning (left) and for video clip captioning (proper), on the CIDEr metric (larger is best). |

Conclusion

We introduce Vid2Seq, a novel visible language mannequin for dense video captioning that merely predicts all occasion boundaries and captions as a single sequence of tokens. Vid2Seq may be successfully pretrained on unlabeled narrated movies at scale, and achieves state-of-the-art outcomes on varied downstream dense video captioning benchmarks. Study extra from the paper and seize the code here.

Acknowledgements

This analysis was performed by Antoine Yang, Arsha Nagrani, Paul Hongsuck Website positioning, Antoine Miech, Jordi Pont-Tuset, Ivan Laptev, Josef Sivic and Cordelia Schmid.