Designing Societally Beneficial Reinforcement Learning Systems – The Berkeley Artificial Intelligence Research Blog

Deep reinforcement learning (DRL) is transitioning from a research field focused on game playing to a technology with real-world applications. Notable examples include DeepMind’s work on controlling a nuclear reactor or on improving YouTube video compression, or Tesla attempting to use a method inspired by MuZero for autonomous vehicle behavior planning. But the exciting potential for real-world applications of RL should also come with a healthy dose of caution. For example, RL policies are well known to be vulnerable to exploitation, and methods for safe and robust policy development are an active area of research.

At the same time as the emergence of powerful RL systems in the real world, the public and researchers are expressing an increased appetite for fair, aligned, and safe machine learning systems. The focus of these research efforts to date has been to account for shortcomings of datasets or supervised learning practices that can harm individuals. However, the unique ability of RL systems to leverage temporal feedback in learning complicates the types of risks and safety concerns that can arise.

This post expands on our recent whitepaper and research paper, where we aim to illustrate the different modalities harms can take when augmented with the temporal axis of RL. To combat these novel societal risks, we also propose a new kind of documentation for dynamic machine learning systems, which aims to assess and monitor these risks both before and after deployment.

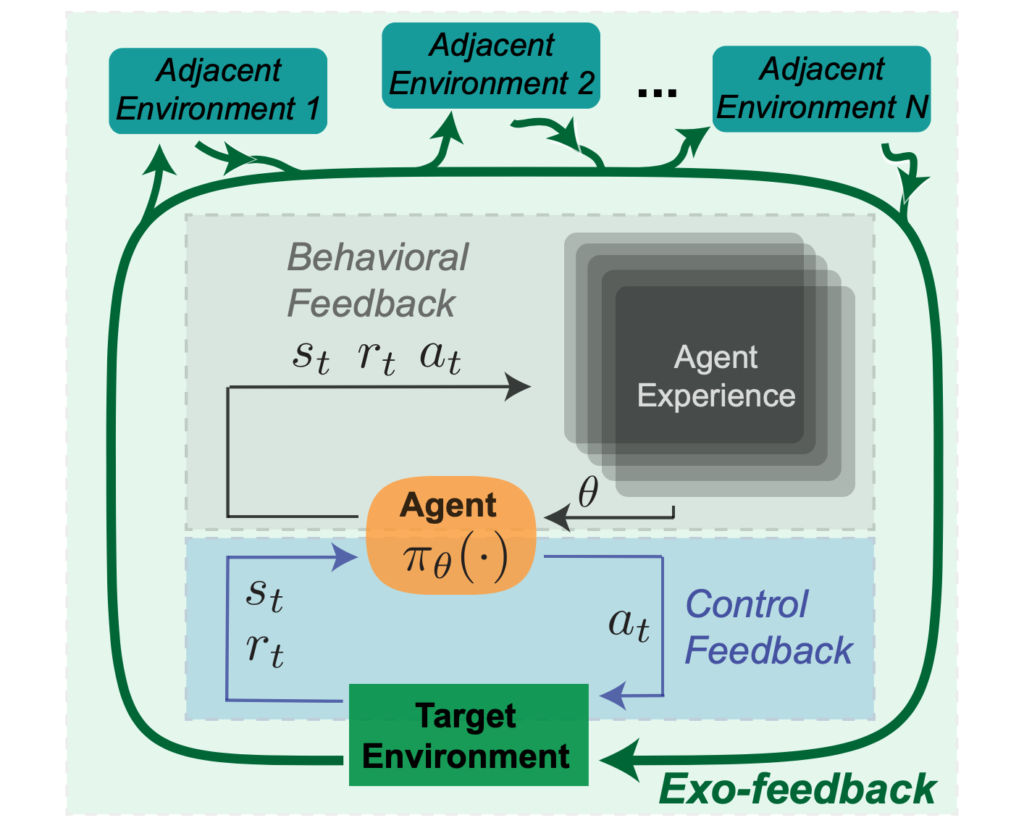

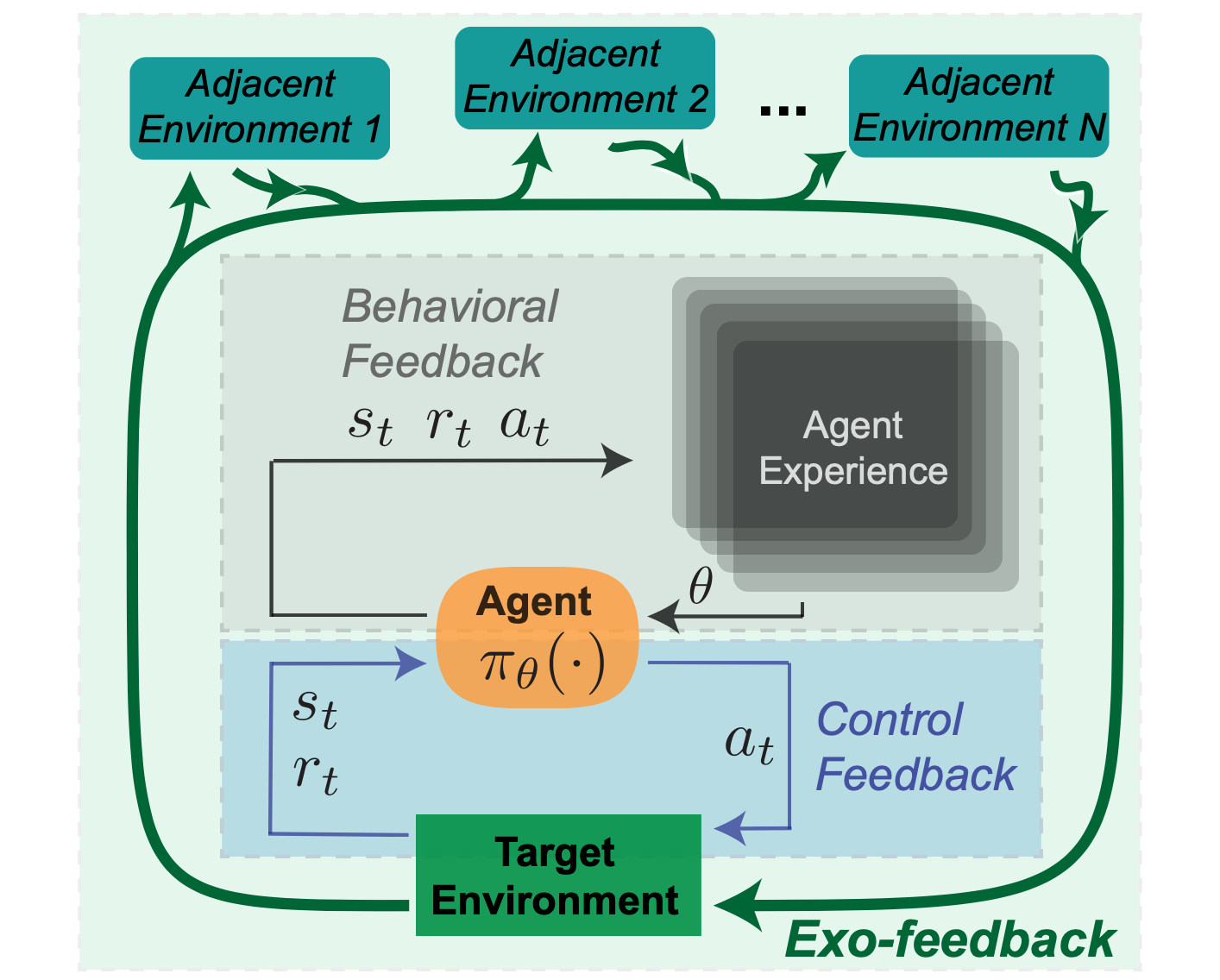

Reinforcement learning systems are often spotlighted for their ability to act in an environment, rather than passively make predictions. Other supervised machine learning systems, such as computer vision, consume data and return a prediction that can be used by some decision-making rule. In contrast, the appeal of RL is in its ability not only to (a) directly model the impact of actions, but also to (b) improve policy performance automatically. These key properties of acting upon an environment and learning within that environment can be understood by considering the different types of feedback that come into play when an RL agent acts within an environment. We classify these feedback forms in a taxonomy of (1) Control, (2) Behavioral, and (3) Exogenous feedback. The first two notions of feedback, Control and Behavioral, are directly within the formal mathematical definition of an RL agent, while Exogenous feedback is induced as the agent interacts with the broader world.

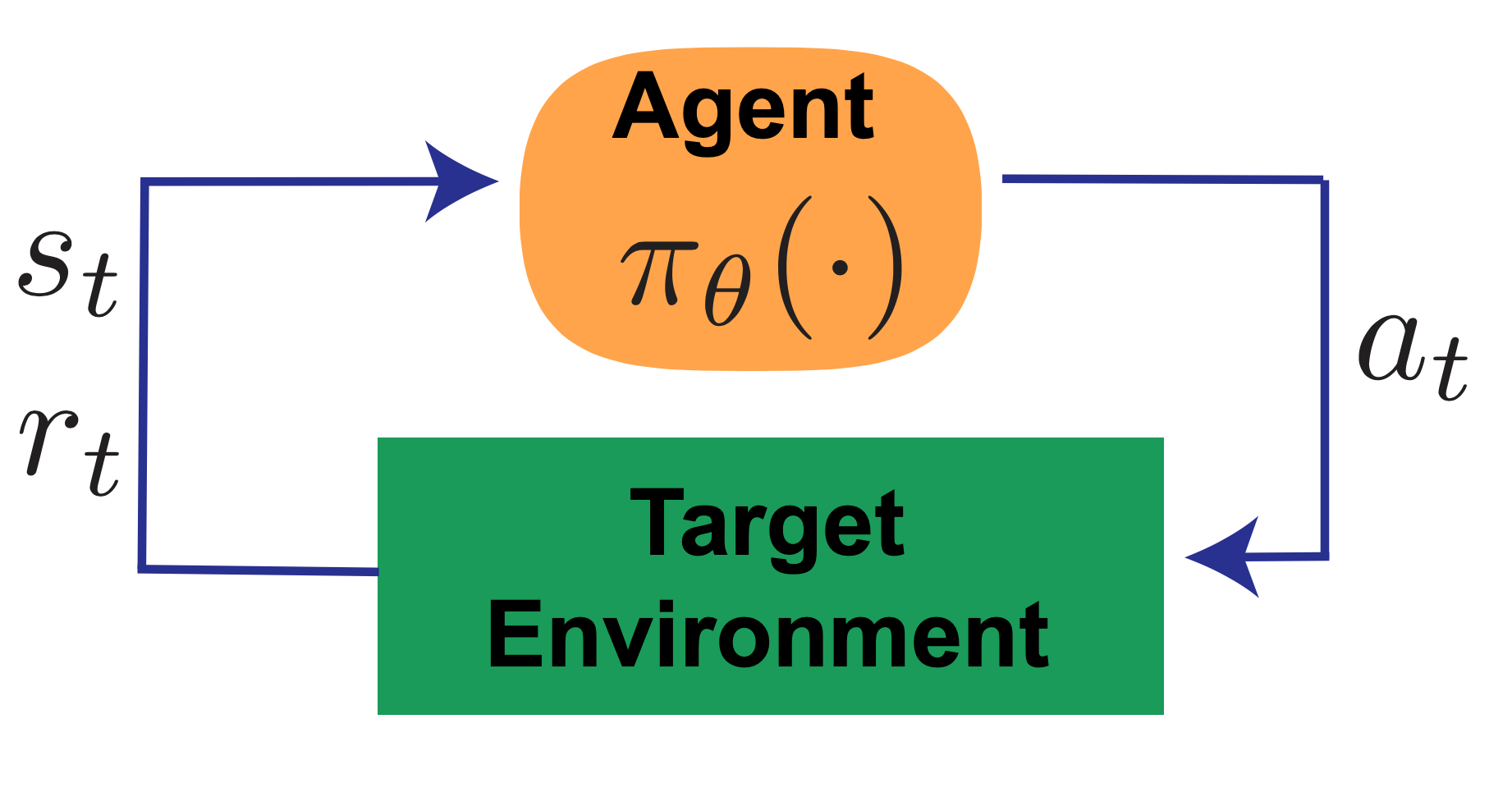

1. Control Feedback

First is control feedback, in the control systems engineering sense, where the action taken depends on the current measurements of the state of the system. RL agents choose actions based on an observed state according to a policy, which generates environmental feedback. For example, a thermostat turns on a furnace according to the current temperature measurement. Control feedback gives an agent the ability to react to unforeseen events (e.g. a sudden snap of cold weather) autonomously.

Figure 1: Control Feedback.
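As a minimal sketch of control feedback, the thermostat example could be written as a policy that maps the current temperature measurement to a furnace action. The setpoint, the deadband, and the toy room dynamics below are illustrative assumptions, not something specified in this post.

```python
def thermostat_policy(temperature_c, furnace_on=False, setpoint_c=20.0, deadband_c=0.5):
    """Choose the furnace action from the current temperature measurement."""
    if temperature_c < setpoint_c - deadband_c:
        return True   # too cold: turn the furnace on
    if temperature_c > setpoint_c + deadband_c:
        return False  # too warm: turn the furnace off
    return furnace_on  # inside the deadband: keep the previous action


def simulate(outdoor_temps_c, start_temp_c=20.0):
    """Toy room model (assumed): heat leaks toward outdoors, the furnace adds heat."""
    temp, furnace_on, history = start_temp_c, False, []
    for outdoor in outdoor_temps_c:
        # Control feedback: the action depends only on the current measurement.
        furnace_on = thermostat_policy(temp, furnace_on=furnace_on)
        temp += 0.1 * (outdoor - temp) + (3.0 if furnace_on else 0.0)
        history.append((temp, furnace_on))
    return history
```

Calling `simulate([-10.0] * 10)` mimics a sudden cold snap: nothing in the policy anticipates the drop, but the furnace switches on once the measured temperature falls below the deadband.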

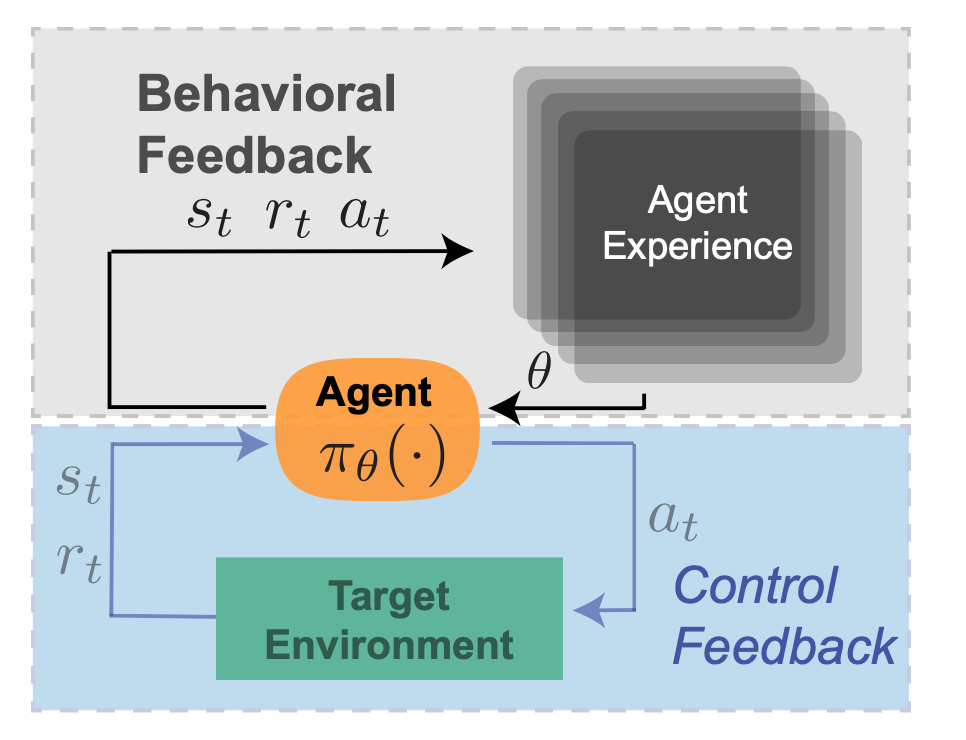

2. Behavioral Feedback

Next in our taxonomy of RL feedback is ‘behavioral feedback’: the trial-and-error learning that enables an agent to improve its policy through interaction with the environment. This could be considered the defining feature of RL, as compared to e.g. ‘classical’ control theory. Policies in RL can be defined by a set of parameters that determine the actions the agent takes in the future. Because these parameters are updated through behavioral feedback, they are actually a reflection of the data collected from executions of past policy versions. RL agents are not fully ‘memoryless’ in this respect: the current policy depends on stored experience and impacts newly collected data, which in turn impacts future versions of the agent. To continue the thermostat example, a ‘smart home’ thermostat might analyze historical temperature measurements and adapt its control parameters in accordance with seasonal shifts in temperature, for instance to have a more aggressive control scheme during winter months.

Figure 2: Behavioral Feedback.
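The ‘smart home’ thermostat can be sketched in the same spirit. The class below stores the errors it observes and periodically adjusts its own control gain from them. The update rule is a hand-written heuristic standing in for a learning algorithm, and the class name, gain, and step size are all hypothetical.

```python
from statistics import mean


class AdaptiveThermostat:
    """Proportional heater controller whose gain is adapted from stored data."""

    def __init__(self, setpoint_c=20.0, gain=0.5):
        self.setpoint_c = setpoint_c
        self.gain = gain      # the policy parameter
        self.errors = []      # stored experience from past executions

    def act(self, temperature_c):
        """Control feedback: heater power in [0, 1] from the current error."""
        error = self.setpoint_c - temperature_c
        self.errors.append(error)
        return max(0.0, min(1.0, self.gain * error))

    def update(self, step_size=0.1):
        """Behavioral feedback: revise the gain using the data collected so far."""
        if not self.errors:
            return
        # If the room was persistently too cold, become more aggressive.
        self.gain = max(0.0, self.gain + step_size * mean(self.errors))
        self.errors.clear()
```

The point of the sketch is the dependency chain: the data in `errors` was produced under the old gain, and the new gain shapes the data collected next.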

3. Exogenous Feedback

Finally, we can consider a third form of feedback external to the specified RL environment, which we call Exogenous (or ‘exo’) feedback. While RL benchmarking tasks may be static environments, every action in the real world impacts the dynamics of both the target deployment environment and adjacent environments. For example, a news recommendation system that is optimized for clickthrough may change the way editors write headlines, pushing them towards attention-grabbing clickbait. In this RL formulation, the set of articles to be recommended would be considered part of the environment and expected to remain static, but exposure incentives cause a shift over time.

To continue the thermostat example, as a ‘smart thermostat’ continues to adapt its behavior over time, the behavior of other adjacent systems in a household might change in response. For instance, other appliances might consume more electricity due to increased heat levels, which could impact electricity costs. Household occupants might also change their clothing and behavior patterns due to different temperature profiles during the day. In turn, these secondary effects could also influence the temperature which the thermostat monitors, leading to a longer-timescale feedback loop.

The negative costs of these external effects will not be specified in the agent-centric reward function, leaving these external environments to be manipulated or exploited. Exo-feedback is by definition difficult for a designer to predict. Instead, we propose that it should be addressed by documenting the evolution of the agent, the targeted environment, and adjacent environments.

Figure 3: Exogenous (exo) Feedback.

Let’s consider how two key properties can lead to failure modes specific to RL systems: direct action selection (via control feedback) and autonomous data collection (via behavioral feedback).

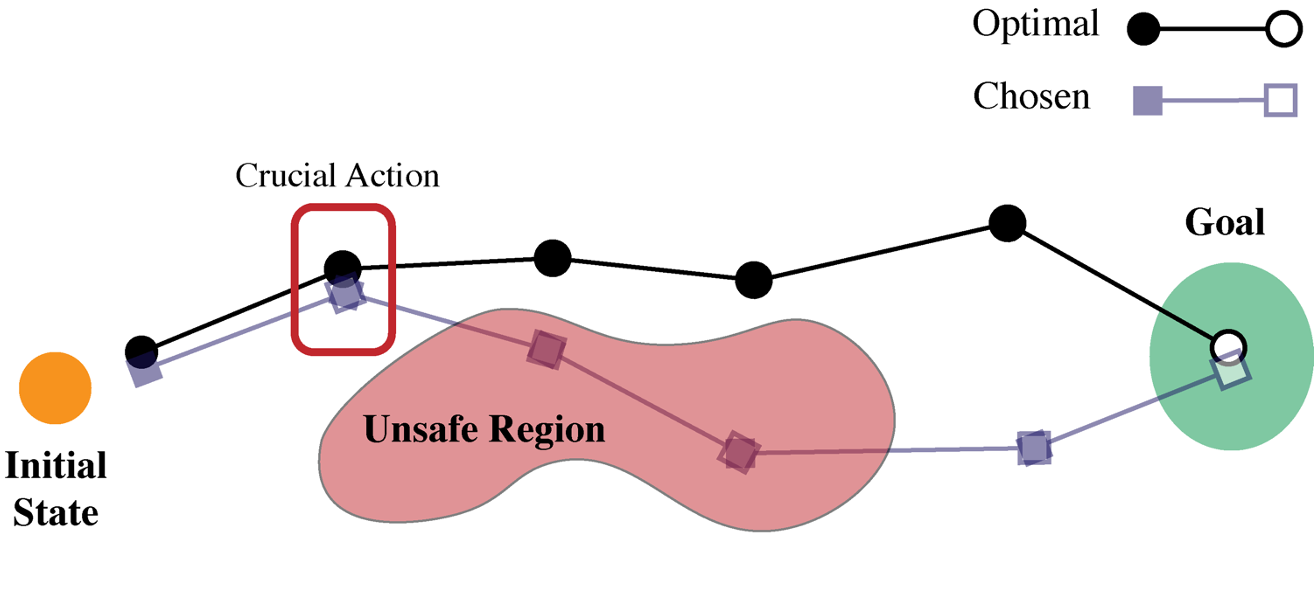

First is decision-time safety. One current practice in RL research to create safe decisions is to augment the agent’s reward function with a penalty term for certain harmful or undesirable states and actions. For example, in a robotics domain we might penalize certain actions (such as extremely large torques) or state-action tuples (such as carrying a glass of water over sensitive equipment). However, it is difficult to anticipate where on a pathway an agent may encounter a crucial action, such that failure would result in an unsafe event. This aspect of how reward functions interact with optimizers is especially problematic for deep learning systems, where numerical guarantees are challenging.

Figure 4: Decision time failure illustration.
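The penalty-term practice described above can be sketched as a small wrapper around the task reward. The torque threshold and penalty weight are arbitrary illustrative values, and the function name is hypothetical.

```python
def shaped_reward(task_reward, torque, max_safe_torque=2.0, penalty_weight=10.0):
    """Task reward minus a penalty for torque beyond an assumed safe limit."""
    excess = max(0.0, abs(torque) - max_safe_torque)
    return task_reward - penalty_weight * excess
```

For instance, `shaped_reward(1.0, 1.5)` leaves the reward at `1.0`, while `shaped_reward(1.0, 3.0)` drops it to `-9.0`. Note that the penalty only makes large torques less attractive to the optimizer; it does not guarantee they never occur at a critical moment, which is the difficulty the paragraph above points to.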

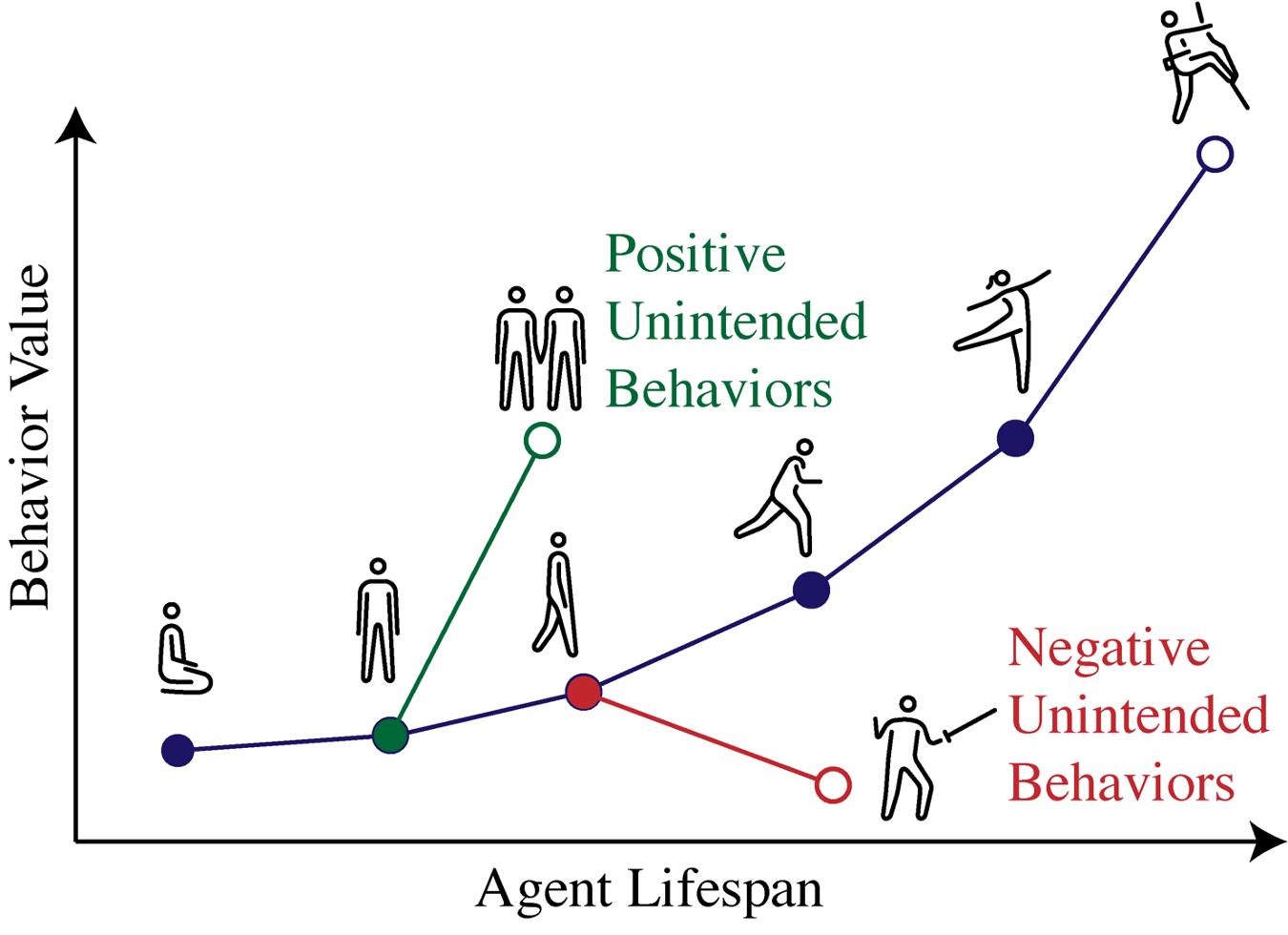

As an RL agent collects new data and the policy adapts, there is a complex interplay between current parameters, stored data, and the environment that governs the evolution of the system. Changing any one of these three sources of information will change the future behavior of the agent, and moreover these three components are deeply intertwined. This uncertainty makes it difficult to back out the cause of failures or successes.

In domains where many behaviors can possibly be expressed, the RL specification leaves a lot of factors constraining behavior unsaid. For a robot learning locomotion over an uneven environment, it would be useful to know what signals in the system indicate it will learn to find an easier route rather than a more complex gait. In complex situations with less well-defined reward functions, these intended or unintended behaviors will encompass a much broader range of capabilities, which may or may not have been accounted for by the designer.

Figure 5: Behavior estimation failure illustration.

While these failure modes are closely related to control and behavioral feedback, exo-feedback does not map as clearly to one type of error and introduces risks that do not fit into simple categories. Understanding exo-feedback requires that stakeholders in the broader communities (machine learning, application domains, sociology, etc.) work together on real-world RL deployments.

Here, we discuss four types of design choices an RL designer must make, and how these choices can affect the socio-technical failures that an agent might exhibit once deployed.

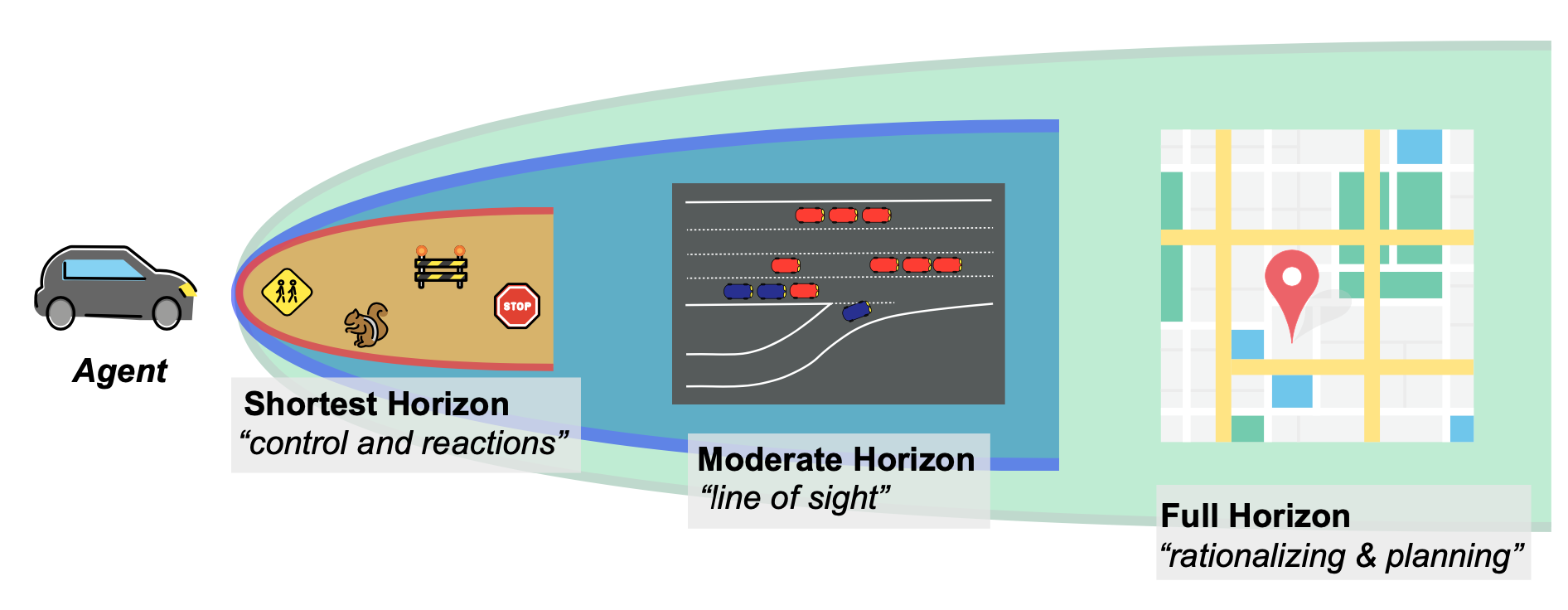

Scoping the Horizon

Determining the timescale on which an RL agent can plan impacts the possible and actual behavior of that agent. In the lab, it may be common to tune the horizon length until the desired behavior is achieved. But in real-world systems, optimizations will externalize costs depending on the defined horizon. For example, an RL agent controlling an autonomous vehicle will have very different goals and behaviors if the task is to stay in a lane, navigate a contested intersection, or route across a city to a destination. This is true even if the objective (e.g. “minimize travel time”) remains the same.

Figure 6: Scoping the horizon example with an autonomous vehicle.
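One way to see why the horizon matters even when the objective is fixed is to score the same candidate plans under different horizons. In this sketch the per-step rewards and plan names are invented for illustration, and the return is a plain truncated discounted sum.

```python
def truncated_return(rewards, horizon, discount=1.0):
    """Discounted sum of the first `horizon` rewards of a plan."""
    return sum(discount ** t * r for t, r in enumerate(rewards[:horizon]))


def preferred_plan(plans, horizon, discount=1.0):
    """Name of the plan with the highest return under the given horizon."""
    return max(plans, key=lambda name: truncated_return(plans[name], horizon, discount))


# Hypothetical per-step rewards: the shortcut pays off early and costs later.
plans = {
    "shortcut": [2.0, 2.0, -3.0, -3.0],
    "steady": [1.0, 1.0, 1.0, 1.0],
}
```

With `horizon=2` the agent prefers `"shortcut"`, because the later costs fall outside what it is asked to optimize; with `horizon=4` it prefers `"steady"`. The rewards have not changed, only how far ahead the agent is scoped to look.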

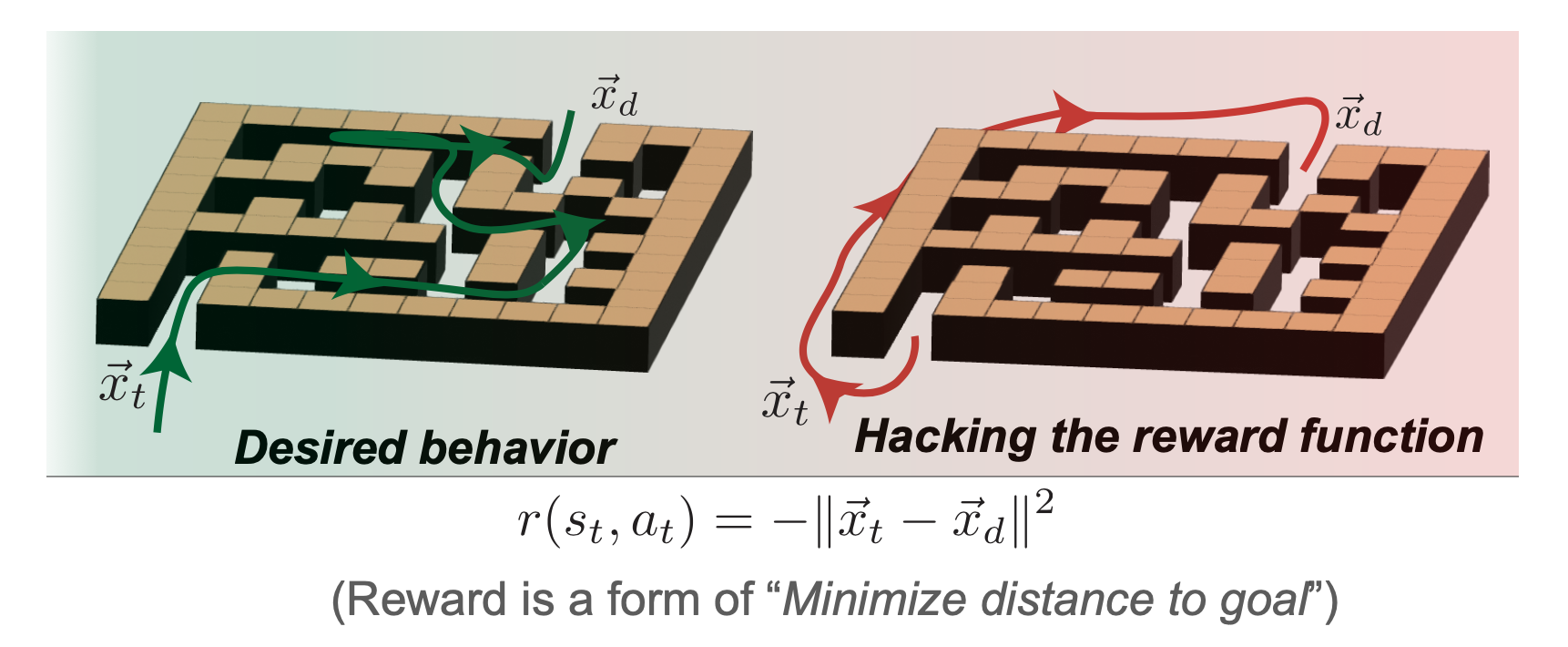

Defining Rewards

A second design choice is that of actually specifying the reward function to be maximized. This immediately raises the well-known risk of RL systems, reward hacking, where the designer and agent negotiate behaviors based on specified reward functions. In a deployed RL system, this often results in unexpected exploitative behavior, from bizarre video game agents to causing errors in robotics simulators. For example, if an agent is presented with the problem of navigating a maze to reach the far side, a mis-specified reward might result in the agent avoiding the task entirely to minimize the time taken.

Figure 7: Defining rewards example with maze navigation.
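The maze example can be made concrete with a toy return calculation. The sketch assumes the agent is allowed to end the episode at any time, that time is penalized per step, and that reaching the far side may or may not earn a bonus; all names and numbers are hypothetical.

```python
def episode_return(steps_taken, reached_goal, step_penalty=1.0, goal_bonus=0.0):
    """Return for one maze episode: a time penalty plus an optional goal bonus."""
    bonus = goal_bonus if reached_goal else 0.0
    return bonus - step_penalty * steps_taken


# Mis-specified reward: only time is penalized, so quitting at once looks best.
quit_now = episode_return(steps_taken=0, reached_goal=False)
solve_maze = episode_return(steps_taken=20, reached_goal=True)

# Adding an assumed goal bonus makes solving the maze the better outcome.
solve_with_bonus = episode_return(steps_taken=20, reached_goal=True, goal_bonus=100.0)
```

Under the time-only reward, `quit_now` (0) beats `solve_maze` (-20), so an optimizer is pushed toward avoiding the task. With the bonus, `solve_with_bonus` (80) is higher than quitting.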

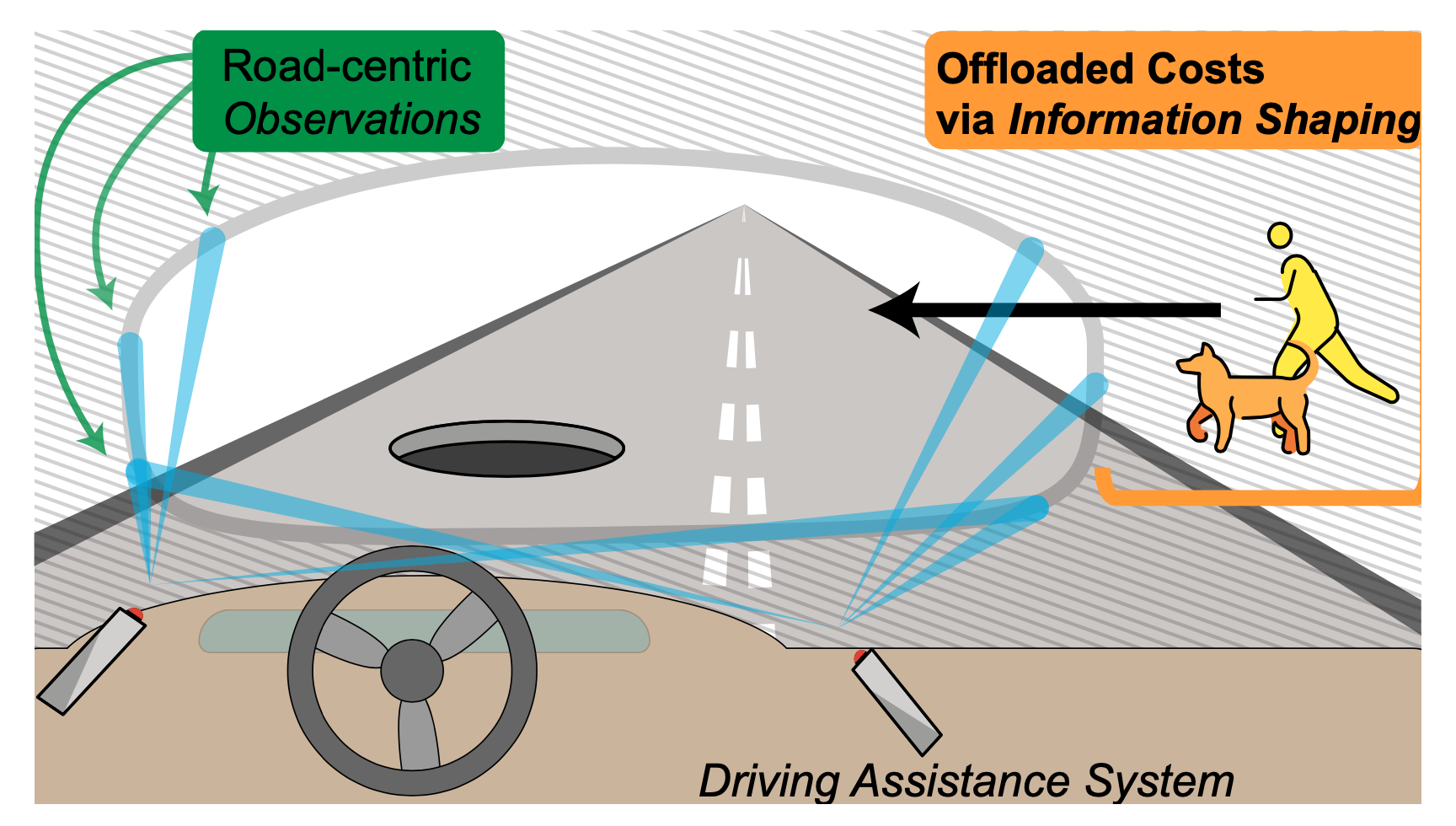

Pruning Information

A common practice in RL research is to redefine the environment to fit one’s needs: RL designers make numerous explicit and implicit assumptions to model tasks in a way that makes them amenable to virtual RL agents. In highly structured domains, such as video games, this can be rather benign. However, in the real world, redefining the environment amounts to changing the ways information can flow between the world and the RL agent. This can dramatically change the meaning of the reward function and offload risk to external systems. For example, an autonomous vehicle with sensors focused only on the road surface shifts the burden from AV designers to pedestrians. In this case, the designer is pruning out information about the surrounding environment that is actually crucial to robustly safe integration within society.

Figure 8: Information shaping example with an autonomous vehicle.

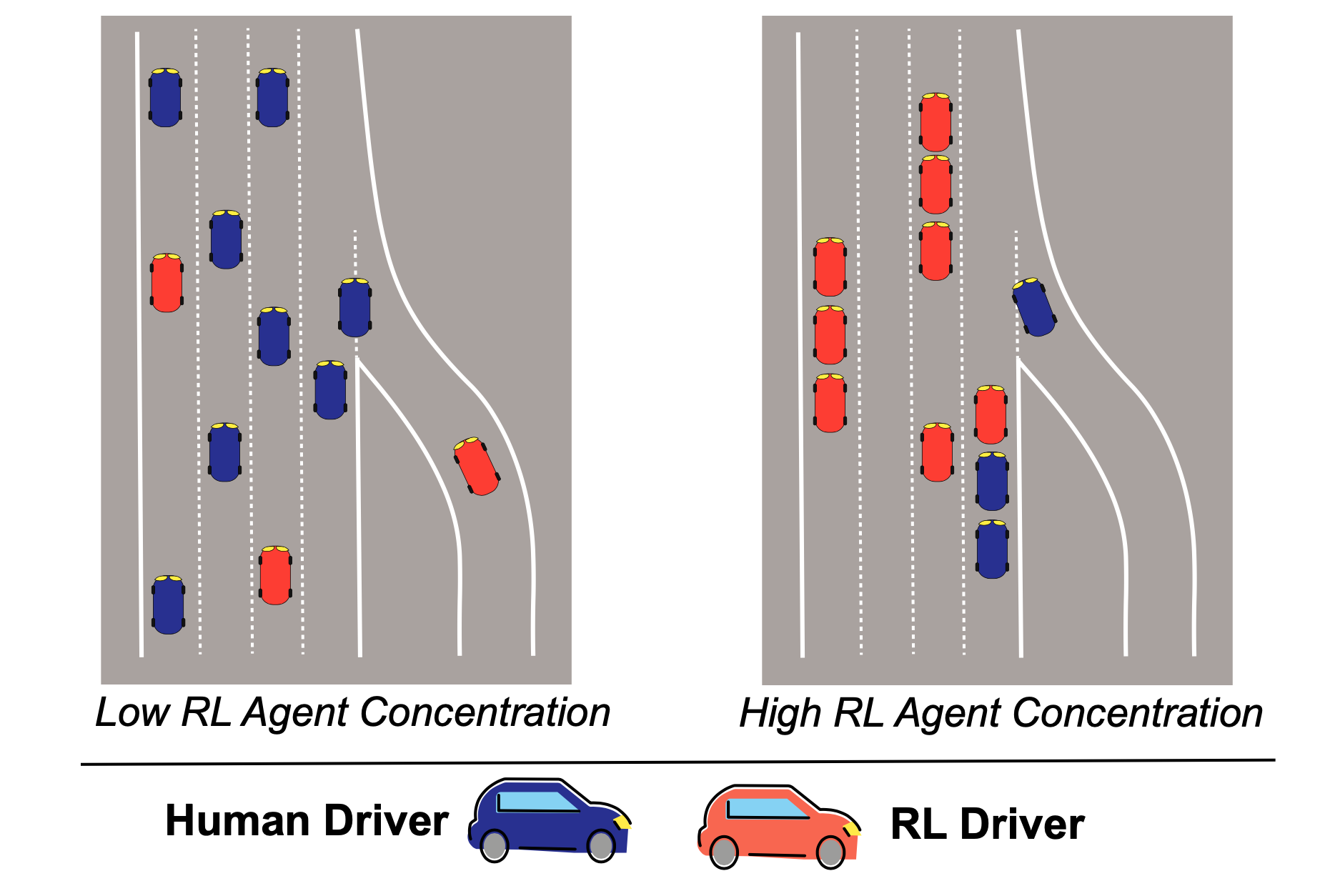

Training Multiple Agents

There is growing interest in the problem of multi-agent RL, but as an emerging research area, little is known about how learning systems interact within dynamic environments. When the relative concentration of autonomous agents increases within an environment, the terms these agents optimize for can actually re-wire norms and values encoded in that specific application domain. An example would be the changes in behavior that will come if the majority of vehicles are autonomous and communicating (or not) with each other. In this case, if the agents have autonomy to optimize toward a goal of minimizing transit time (for example), they could crowd out the remaining human drivers and heavily disrupt accepted societal norms of transit.

Figure 9: The risks of multi-agency example on autonomous vehicles.

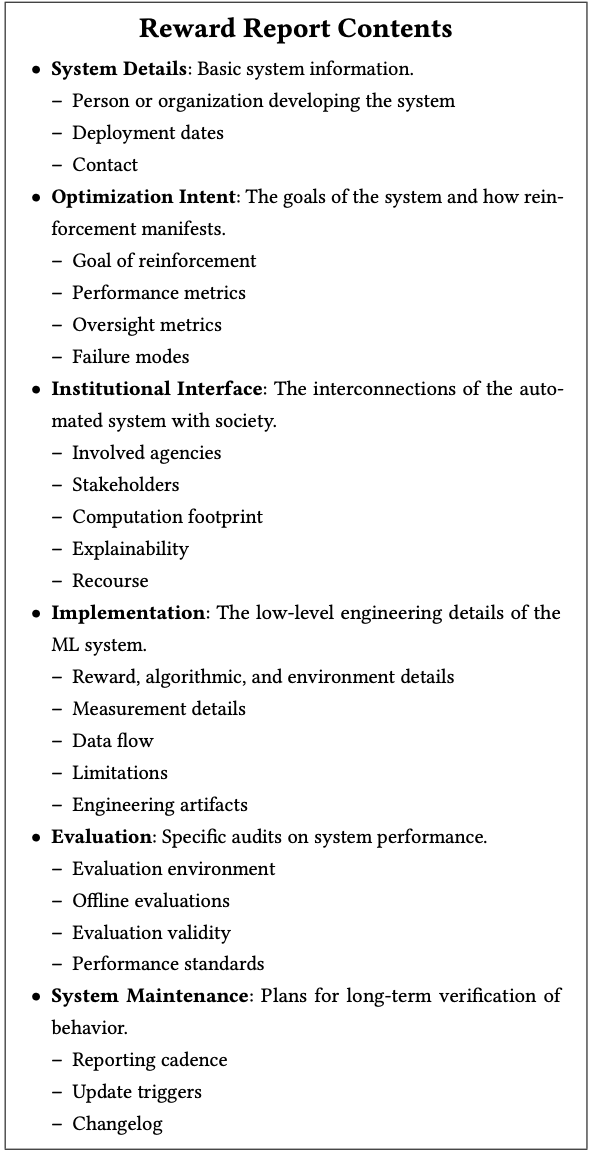

In our recent whitepaper and research paper, we proposed Reward Reports, a new form of ML documentation that foregrounds the societal risks posed by sequential data-driven optimization systems, whether explicitly constructed as an RL agent or implicitly construed via data-driven optimization and feedback. Building on proposals to document datasets and models, we focus on reward functions: the objective that guides optimization decisions in feedback-laden systems. Reward Reports comprise questions that highlight the promises and risks entailed in defining what is being optimized in an AI system, and are intended as living documents that dissolve the distinction between ex-ante (design) specification and ex-post (after the fact) harm. As a result, Reward Reports provide a framework for ongoing deliberation and accountability before and after a system is deployed.

Our proposed template for a Reward Report consists of several sections, arranged to help the reporter themselves understand and document the system. A Reward Report begins with (1) system details that contain the information context for deploying the model. From there, the report documents (2) the optimization intent, which questions the goals of the system and why RL or ML may be a useful tool. The designer then documents (3) how the system may affect different stakeholders in the institutional interface. The next two sections contain technical details on (4) the system implementation and (5) evaluation. Reward Reports conclude with (6) plans for system maintenance as additional system dynamics are uncovered.

The most important feature of a Reward Report is that it allows documentation to evolve over time, in step with the temporal evolution of an online, deployed RL system! This is most evident in the change-log, which we locate at the end of our Reward Report template:

Figure 10: Reward Reports contents.
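To make the structure tangible, here is one way the six sections and the change-log could be represented as a data structure. This is only a sketch: the actual template is a document rather than code, and every class, field, and method name below is a hypothetical choice made for illustration.

```python
from dataclasses import dataclass, field, fields
from datetime import date


@dataclass
class ChangeLogEntry:
    when: date
    section: str
    summary: str


@dataclass
class RewardReport:
    # One free-text field per section named in the template description.
    system_details: str = ""
    optimization_intent: str = ""
    institutional_interface: str = ""
    implementation: str = ""
    evaluation: str = ""
    system_maintenance: str = ""
    change_log: list = field(default_factory=list)

    def revise(self, section, new_text, summary, when=None):
        """Update one section and record the change, so the report evolves over time."""
        editable = {f.name for f in fields(self)} - {"change_log"}
        if section not in editable:
            raise ValueError(f"unknown section: {section}")
        setattr(self, section, new_text)
        self.change_log.append(ChangeLogEntry(when or date.today(), section, summary))
```

Routing every edit through `revise` keeps the change-log in step with the report itself, which mirrors the idea of documentation that is revisited after deployment rather than written once.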

What would this look like in practice?

As part of our research, we have developed a Reward Report LaTeX template, as well as several example Reward Reports that aim to illustrate the kinds of issues that could be managed by this form of documentation. These examples include the temporal evolution of the MovieLens recommender system, the DeepMind MuZero game playing system, and a hypothetical deployment of an RL autonomous vehicle policy for managing merging traffic, based on the Project Flow simulator.

However, these are just examples that we hope will serve to inspire the RL community. As more RL systems are deployed in real-world applications, we hope the research community will build on our ideas for Reward Reports and refine the specific content that should be included. To this end, we hope that you will join us at our (un)-workshop.

Work with us on Reward Reports: An (Un)Workshop!

We are hosting an “un-workshop” at the upcoming conference on Reinforcement Learning and Decision Making (RLDM) on June 11th from 1:00-5:00pm EST at Brown University, Providence, RI. We call this an un-workshop because we are looking for the attendees to help create the content! We will provide templates, ideas, and discussion as our attendees build out example reports. We are excited to develop the ideas behind Reward Reports with real-world practitioners and cutting-edge researchers.

For more information on the workshop, visit the website or contact the organizers at geese-org@lists.berkeley.edu.

This post is based on the following papers: