Announcing the ICDAR 2023 Competition on Hierarchical Text Detection and Recognition – Google AI Blog

The last few decades have witnessed the rapid development of Optical Character Recognition (OCR) technology, which has evolved from an academic benchmark task used in early breakthroughs of deep learning research to tangible products available in consumer devices and to third party developers for daily use. These OCR products digitize and democratize the valuable information that is stored in paper or image-based sources (e.g., books, magazines, newspapers, forms, street signs, restaurant menus) so that it can be indexed, searched, translated, and further processed by state-of-the-art natural language processing techniques.

Research in scene text detection and recognition (or scene text spotting) has been the major driver of this rapid development by adapting OCR to natural images, which have more complex backgrounds than document images. These research efforts, however, focus on the detection and recognition of each individual word in images, without understanding how these words compose sentences and articles.

Layout analysis is another relevant line of research that takes a document image and extracts its structure, i.e., title, paragraphs, headings, figures, tables and captions. These layout analysis efforts are parallel to OCR and have been largely developed as independent techniques that are typically evaluated only on document images. As such, the synergy between OCR and layout analysis remains largely under-explored. We believe that OCR and layout analysis are mutually complementary tasks that enable machine learning to interpret text in images and, when combined, could improve the accuracy and efficiency of both tasks.

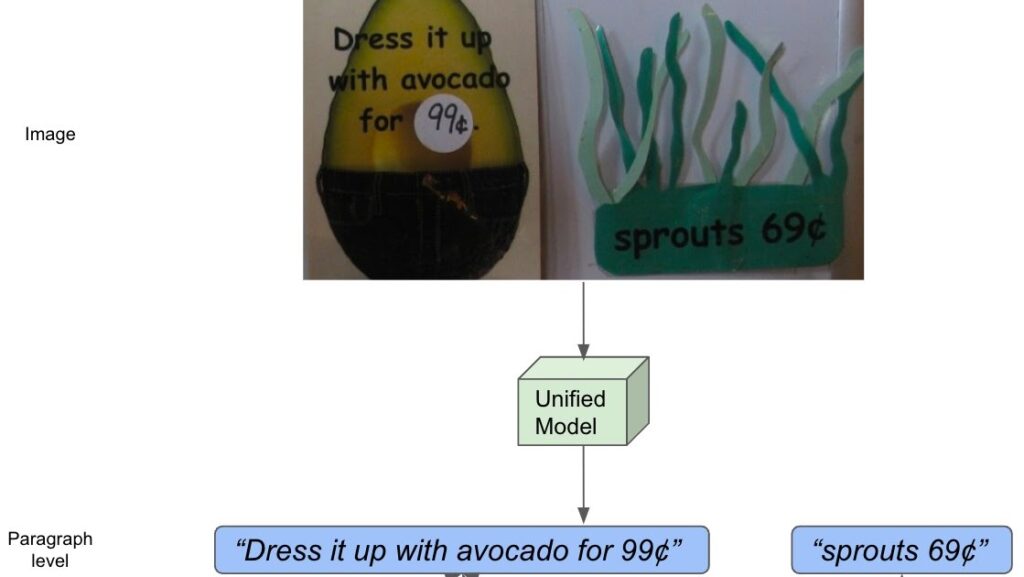

With this in mind, we announce the Competition on Hierarchical Text Detection and Recognition (the HierText Challenge), hosted as part of the 17th annual International Conference on Document Analysis and Recognition (ICDAR 2023). The competition is hosted on the Robust Reading Competition website, and represents the first major effort to unify OCR and layout analysis. In this competition, we invite researchers from around the world to build systems that can produce hierarchical annotations of text in images using words clustered into lines and paragraphs. We hope this competition can have a significant and long-term impact on image-based text understanding, with the goal of consolidating the research efforts across OCR and layout analysis and creating new signals for downstream information processing tasks.

The concept of hierarchical text representation.

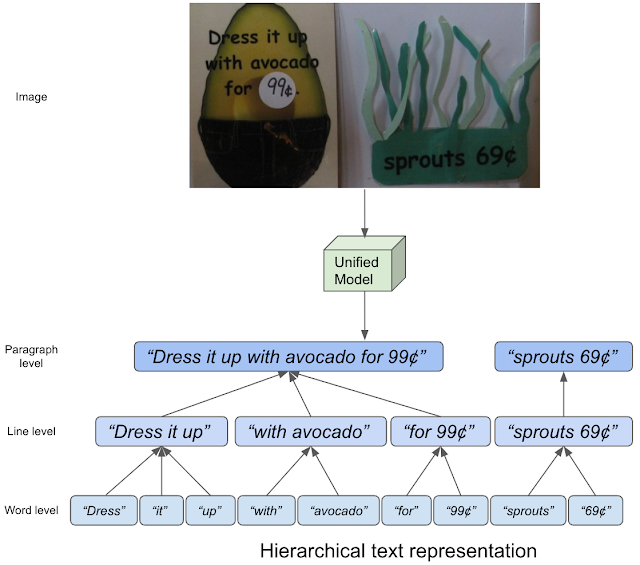

Constructing a hierarchical text dataset

In this competition, we use the HierText dataset that we published at CVPR 2022 with our paper “Towards End-to-End Unified Scene Text Detection and Layout Analysis”. It is the first real-image dataset that provides hierarchical annotations of text, containing word, line, and paragraph level annotations. Here, “words” are defined as sequences of textual characters not interrupted by spaces. “Lines” are then interpreted as “space”-separated clusters of “words” that are logically connected in one direction, and aligned in spatial proximity. Finally, “paragraphs” are composed of “lines” that share the same semantic topic and are geometrically coherent.
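The three levels defined above nest naturally as a tree. The following is a minimal sketch of that nesting, not the actual HierText annotation format: the class names, the `polygon` field, and the `make_word` helper are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Point = Tuple[float, float]


@dataclass
class Word:
    text: str              # characters not interrupted by spaces
    polygon: List[Point]   # hypothetical outline in image coordinates


@dataclass
class Line:
    words: List[Word] = field(default_factory=list)

    @property
    def text(self) -> str:
        # A line is a space-separated cluster of words.
        return " ".join(w.text for w in self.words)


@dataclass
class Paragraph:
    lines: List[Line] = field(default_factory=list)

    @property
    def text(self) -> str:
        # A paragraph groups lines that belong together.
        return "\n".join(line.text for line in self.lines)


def make_word(text: str, x0: float, y0: float, x1: float, y1: float) -> Word:
    """Hypothetical helper: build a word with an axis-aligned box outline."""
    if " " in text:
        raise ValueError("a word must not contain spaces")
    return Word(text, [(x0, y0), (x1, y0), (x1, y1), (x0, y1)])


paragraph = Paragraph(lines=[
    Line(words=[make_word("Hierarchical", 0, 0, 90, 12),
                make_word("text", 95, 0, 125, 12)]),
    Line(words=[make_word("detection", 0, 16, 70, 28)]),
])
word_count = sum(len(line.words) for line in paragraph.lines)
print(paragraph.text)
```

Storing the hierarchy as nested containers keeps the word-level output usable on its own while letting downstream code read line or paragraph text directly.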

To build this dataset, we first annotated images from the Open Images dataset using the Google Cloud Platform (GCP) Text Detection API. We filtered through these annotated images, keeping only images rich in text content and layout structure. Then, we worked with our third-party partners to manually correct all transcriptions and to label words, lines and paragraph composition. As a result, we obtained 11,639 transcribed images, split into three subsets: (1) a train set with 8,281 images, (2) a validation set with 1,724 images, and (3) a test set with 1,634 images. As detailed in the paper, we also checked the overlap between our dataset, TextOCR, and Intel OCR (both of which also extracted annotated images from Open Images), making sure that the test images in the HierText dataset were not also included in the TextOCR or Intel OCR training and validation splits and vice versa. Below, we visualize examples from the HierText dataset and demonstrate the concept of hierarchical text by shading each text entity with different colors. We can see that HierText has a diversity of image domains and text layouts, as well as high text density.

Samples from the HierText dataset. Left: Illustration of each word entity. Middle: Illustration of line clustering. Right: Illustration of paragraph clustering.
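The overlap check described above amounts to making sure that no test image ID also appears in another dataset's training or validation split. Here is a minimal sketch of such a check, assuming images can be compared by a shared ID. The function name `find_leaks` and all image IDs are made up for illustration, and this is not the procedure from the paper.

```python
# Split sizes as stated in the text; they should add up to the full dataset.
SPLIT_SIZES = {"train": 8281, "validation": 1724, "test": 1634}
total_images = sum(SPLIT_SIZES.values())


def find_leaks(test_ids, other_splits):
    """Return, per split name, the sorted image IDs it shares with test_ids."""
    test_set = set(test_ids)
    leaks = {}
    for name, ids in other_splits.items():
        shared = test_set & set(ids)
        if shared:
            leaks[name] = sorted(shared)
    return leaks


# Made-up IDs, for illustration only.
hiertext_test = ["img_001", "img_002", "img_003"]
other_splits = {
    "textocr_train": ["img_010", "img_011"],
    "textocr_val": ["img_002", "img_012"],
    "intel_ocr_train": ["img_020"],
}

leaks = find_leaks(hiertext_test, other_splits)
leaked_ids = {image_id for ids in leaks.values() for image_id in ids}
clean_test = [image_id for image_id in hiertext_test if image_id not in leaked_ids]
print(total_images, leaks, clean_test)
```

The same function can be run in the other direction (another dataset's test IDs against HierText's train and validation IDs) to cover the "vice versa" case.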

Dataset with highest density of text

In addition to the novel hierarchical representation, HierText represents a new domain of text images. We note that HierText is currently the densest publicly available OCR dataset. Below we summarize the characteristics of HierText in comparison with other OCR datasets. HierText contains 103.8 words per image on average, which is more than 3x the density of TextOCR and roughly 25x that of ICDAR-2015. This high density poses unique challenges for detection and recognition, and as a consequence HierText is used as one of the primary datasets for OCR research at Google.

| Dataset | Training split | Validation split | Testing split | Words per image |
|---|---|---|---|---|
| ICDAR-2015 | 1,000 | 0 | 500 | 4.4 |
| TextOCR | 21,778 | 3,124 | 3,232 | 32.1 |
| Intel OCR | 191,059 | 16,731 | 0 | 10.0 |
| HierText | 8,281 | 1,724 | 1,634 | 103.8 |

Comparing several OCR datasets to the HierText dataset.
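The density comparison can be reproduced directly from the words-per-image column. The short sketch below uses only the values in the table; the dictionary and function names are illustrative.

```python
# Average words per image, copied from the table.
WORDS_PER_IMAGE = {
    "ICDAR-2015": 4.4,
    "TextOCR": 32.1,
    "Intel OCR": 10.0,
    "HierText": 103.8,
}


def density_ratio(dataset: str, baseline: str) -> float:
    """How many times denser `dataset` is than `baseline`, in words per image."""
    return WORDS_PER_IMAGE[dataset] / WORDS_PER_IMAGE[baseline]


for name in ("TextOCR", "Intel OCR", "ICDAR-2015"):
    print(f"HierText vs {name}: {density_ratio('HierText', name):.1f}x")
```

With the table's averages this gives about 3.2x for TextOCR, 10.4x for Intel OCR, and 23.6x for ICDAR-2015, so the last ratio comes out slightly below the round figure quoted in the text.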

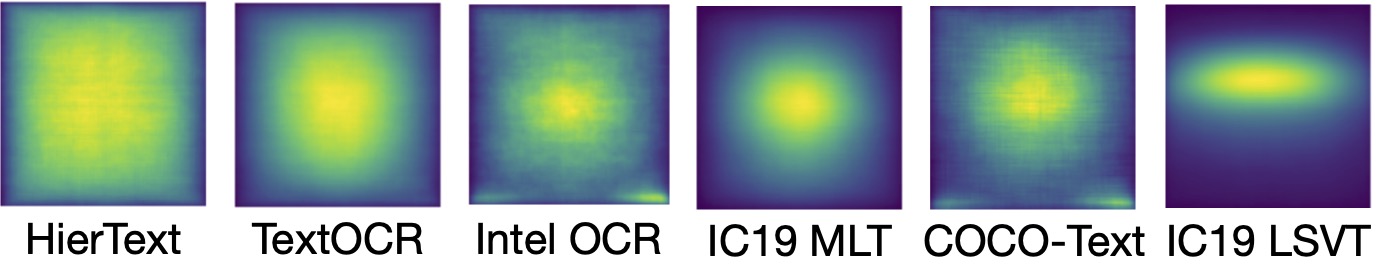

Spatial distribution

We also find that text in the HierText dataset has a much more even spatial distribution than in other OCR datasets, including TextOCR, Intel OCR, IC19 MLT, COCO-Text and IC19 LSVT. These previous datasets tend to have well-composed images, where text is placed in the middle of the image and is thus easier to identify. In contrast, text entities in HierText are broadly distributed across the images. This is evidence that our images come from more diverse domains, and it makes HierText uniquely challenging among public OCR datasets.

Spatial distribution of text instances in different datasets.
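One simple way to quantify how center-heavy a dataset is: bin the normalized center of every text instance into a coarse grid and measure the share that lands in the central cells. The sketch below is an assumption-laden illustration, not the method used to produce the figure. The function names, the 4x4 grid, and the sample coordinates are all made up.

```python
def spatial_histogram(centers, grid=4):
    """Bin (x, y) centers, normalized to [0, 1], into a grid of fractions."""
    counts = [[0] * grid for _ in range(grid)]
    total = 0
    for x, y in centers:
        # Clamp so that coordinates on the border fall into the edge cells.
        col = max(0, min(int(x * grid), grid - 1))
        row = max(0, min(int(y * grid), grid - 1))
        counts[row][col] += 1
        total += 1
    if total == 0:
        return [[0.0] * grid for _ in range(grid)]
    return [[c / total for c in row] for row in counts]


def center_mass(hist):
    """Fraction of instances in the central half of the grid along both axes."""
    grid = len(hist)
    lo, hi = grid // 4, grid - grid // 4
    return sum(hist[r][c] for r in range(lo, hi) for c in range(lo, hi))


# Made-up centers: one center-heavy set and one spread-out set.
centered = [(0.5, 0.5), (0.45, 0.55), (0.6, 0.4), (0.1, 0.9)]
spread = [(0.1, 0.1), (0.9, 0.1), (0.5, 0.5), (0.1, 0.9)]

centered_share = center_mass(spatial_histogram(centered))
spread_share = center_mass(spatial_histogram(spread))
print(centered_share, spread_share)
```

A lower central share indicates text that is spread more evenly across the image, which is the property the comparison above attributes to HierText.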

The HierText challenge

The HierText Challenge represents a novel task with unique challenges for OCR models. We invite researchers to participate in this challenge and join us at ICDAR 2023 this year in San Jose, CA. We hope this competition will spark research community interest in OCR models with rich information representations that are useful for novel downstream tasks.

Acknowledgements

The core contributors to this project are Shangbang Long, Siyang Qin, Dmitry Panteleev, Alessandro Bissacco, Yasuhisa Fujii and Michalis Raptis. Ashok Popat and Jake Walker provided valuable advice. We also thank Dimosthenis Karatzas and Sergi Robles from Autonomous University of Barcelona for helping us set up the competition website.