Researchers from UC Berkeley and Meta Present AST-T5: A Novel Pretraining Paradigm that Harnesses the Power of Abstract Syntax Trees (ASTs) to Boost the Performance of Code-Centric Language Models

LLMs have had a significant impact on code generation and comprehension. These models, trained on extensive code datasets such as GitHub, excel at tasks like text-to-code conversion, code-to-code transpilation, and code understanding. However, many current models treat code merely as a sequence of subword tokens, overlooking its structure. Research suggests that incorporating the Abstract Syntax Tree (AST) of code can notably improve performance on code-related tasks. Some studies use code obfuscation during pretraining to teach models about abstract code structures, but these methods often involve computationally expensive processes, which limits scalability and imposes stringent conditions.
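
The difference between the two views of code can be illustrated with a small sketch. It assumes Python's standard `tokenize` and `ast` modules as stand-ins: lexical tokens are not the subword tokens an LLM actually sees, but they show the same flat, structure-free sequence, while the AST exposes the nesting that a flat sequence hides.

```python
import ast
import io
import tokenize

source = "def add(a, b):\n    return a + b\n"

# Flat view: a sequence of lexical tokens with no nesting information.
# Whitespace-only tokens (NEWLINE, INDENT, DEDENT, ENDMARKER) are dropped.
flat_tokens = [
    tok.string
    for tok in tokenize.generate_tokens(io.StringIO(source).readline)
    if tok.string.strip()
]

# Structured view: the AST exposes the function, its arguments, and its body.
tree = ast.parse(source)
func = tree.body[0]
node_types = [type(node).__name__ for node in ast.walk(tree)]

print(flat_tokens)
print(func.name, [arg.arg for arg in func.args.args])
print(node_types)
```

In the flat list nothing marks `a + b` as a single expression that belongs to the `return` statement inside `add`; in the tree that relationship is explicit.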

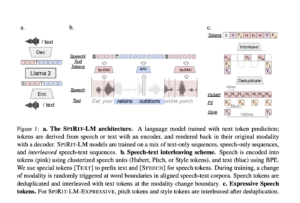

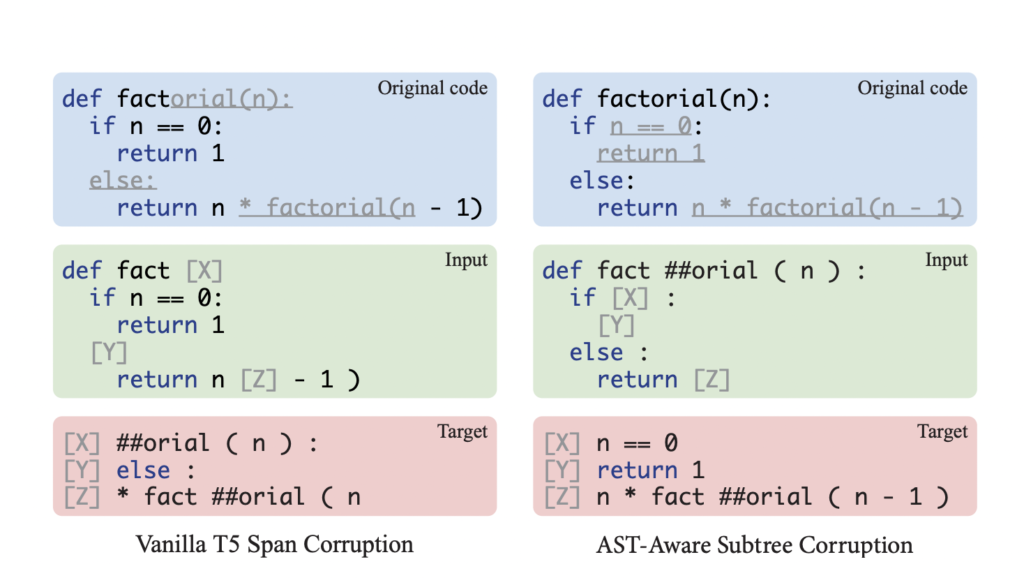

Researchers from UC Berkeley and Meta AI have developed AST-T5, a pretraining approach that capitalizes on the AST to enhance code generation, transpilation, and comprehension. The method preserves code structure through AST-Aware Segmentation, which uses dynamic programming, and equips the model to reconstruct diverse code structures through AST-Aware Span Corruption. Unlike other models, AST-T5 doesn’t require intricate program analyses or architectural changes, ensuring seamless integration with any encoder-decoder Transformer.
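
To make the dynamic-programming idea concrete, here is a minimal sketch; it is not the authors' implementation. It assumes Python's built-in `ast` module as the parser, source lines (rather than subword tokens) as the unit of length, and a hypothetical cost that counts how many AST nodes a cut would split. The names `break_costs` and `segment` are illustrative only.

```python
import ast

def break_costs(source: str) -> list[int]:
    """cost[k] = number of AST nodes split by a cut placed after line k (1-based)."""
    n_lines = len(source.splitlines())
    cost = [0] * (n_lines + 1)
    for node in ast.walk(ast.parse(source)):
        start = getattr(node, "lineno", None)
        end = getattr(node, "end_lineno", None)
        if start is None or end is None:
            continue  # nodes such as Module carry no position information
        for k in range(start, end):  # a cut after lines start..end-1 falls inside the node
            cost[k] += 1
    return cost

def segment(source: str, max_lines: int) -> list[tuple[int, int]]:
    """Split the source into (first_line, last_line) segments of at most
    max_lines lines each, minimizing the total number of split AST nodes."""
    n = len(source.splitlines())
    cost = break_costs(source)
    best = [float("inf")] * (n + 1)  # best[i]: minimal cost of segmenting lines 1..i
    prev = [0] * (n + 1)             # prev[i]: where the last segment before i starts
    best[0] = 0
    for i in range(1, n + 1):
        for j in range(max(0, i - max_lines), i):
            candidate = best[j] + cost[i]
            if candidate < best[i]:
                best[i], prev[i] = candidate, j
    segments = []
    i = n
    while i > 0:  # backtrack from the end of the file
        segments.append((prev[i] + 1, i))
        i = prev[i]
    return segments[::-1]

SOURCE = (
    "def f(x):\n"
    "    y = x + 1\n"
    "    return y\n"
    "def g(x):\n"
    "    z = x * 2\n"
    "    return z\n"
)

print(segment(SOURCE, max_lines=4))
```

With a limit of four lines, a fixed-size split would put the first line of `g` into the first segment and cut the function in two. The sketch instead places the cut between the two functions, where no AST node is split.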

Language models (LMs) have been extended from NLP to code understanding and generation tasks. Encoder-only models excel at code understanding when fine-tuned with classifiers, while decoder-only models are optimized for code generation through their autoregressive nature. Encoder-decoder models, such as PLBART and CodeT5, have been developed to perform well across diverse code-related tasks. Earlier research has leveraged syntactic elements, such as ASTs, in neural network models for code understanding and generation.

AST-T5 is a pretraining framework that leverages ASTs for code-based language models. AST-T5 uses AST-Aware Segmentation, an algorithm designed to handle Transformer token limits while retaining the semantic coherence of the code. AST-T5 also employs AST-Aware Span Corruption, a masking technique that pretrains the model to reconstruct code structures ranging from individual tokens to entire function bodies, enhancing its flexibility and structure-awareness. The efficacy of the proposed methods is evaluated through controlled experiments that compare AST-T5 against T5 baselines with identical Transformer architectures, pretraining data, and computational settings.
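
The masking idea can be sketched as follows. This is an illustration under stated assumptions rather than the authors' procedure: it uses Python's `ast` module, masks every outermost node of one chosen type instead of sampling subtrees, assumes ASCII source, and writes sentinels as `<extra_id_N>` in the style of T5 tokenizers. The helper `mask_subtrees` is a hypothetical name.

```python
import ast

def mask_subtrees(source: str, node_type: type) -> tuple[str, str]:
    """Replace every outermost AST node of node_type with a sentinel.

    Returns (corrupted_input, target). node_type must be a statement or
    expression class that carries position info (e.g. ast.BinOp).
    """
    # Character offset at which each line starts. ASCII source is assumed,
    # because ast column offsets are counted in UTF-8 bytes.
    line_starts = [0]
    for line in source.splitlines(keepends=True):
        line_starts.append(line_starts[-1] + len(line))

    spans = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, node_type):
            start = line_starts[node.lineno - 1] + node.col_offset
            end = line_starts[node.end_lineno - 1] + node.end_col_offset
            spans.append((start, end))
    # Earliest start first; for equal starts the longest (outermost) span wins.
    spans.sort(key=lambda span: (span[0], -span[1]))

    corrupted, target = [], []
    cursor = 0
    sentinel_id = 0
    for start, end in spans:
        if start < cursor:
            continue  # nested inside a subtree that is already masked
        sentinel = f"<extra_id_{sentinel_id}>"
        corrupted.append(source[cursor:start] + sentinel)
        target.append(sentinel + source[start:end])
        cursor = end
        sentinel_id += 1
    corrupted.append(source[cursor:])
    return "".join(corrupted), "".join(target)

SOURCE = "def area(w, h):\n    return w * h\n"

# Small span: a single expression.
print(mask_subtrees(SOURCE, ast.BinOp))
# Large span: the whole function definition.
print(mask_subtrees(SOURCE, ast.FunctionDef))
```

Because each masked span coincides with a complete subtree, the model is never asked to reconstruct half an expression, and varying the node type varies the span size from a single expression up to a full function.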

AST-T5 consistently outperforms similar-sized LMs across various code-related tasks, particularly code-to-code tasks, surpassing CodeT5 by 2 points in exact match score on the Bugs2Fix task and by 3 points in exact match score on Java-C# transpilation in CodeXGLUE. The contributions of each component within the AST-aware pretraining framework are analyzed through controlled experiments, which show the impact of the proposed methods. AST-T5’s structure-awareness, achieved by leveraging the AST of code, enhances code generation, transpilation, and understanding. AST-T5 integrates seamlessly with any encoder-decoder Transformer without requiring intricate program analyses or architectural changes.
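
For readers unfamiliar with the metric, an exact match score is typically the percentage of predictions that are identical to their references. The sketch below is a generic version, not the official CodeXGLUE evaluator; the whitespace normalization and the function name `exact_match` are assumptions made for illustration.

```python
def exact_match(predictions: list[str], references: list[str]) -> float:
    """Percentage of predictions equal to their reference after collapsing whitespace."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not predictions:
        return 0.0

    def normalize(text: str) -> str:
        # Assumed normalization: collapse all runs of whitespace to one space.
        return " ".join(text.split())

    hits = sum(
        normalize(pred) == normalize(ref)
        for pred, ref in zip(predictions, references)
    )
    return 100.0 * hits / len(predictions)

score = exact_match(
    ["int x = 1;", "return y;"],
    ["int  x = 1;", "return z;"],
)
print(score)
```

Under this definition, a gain of 2 points means that 2 more outputs per 100 examples match the reference exactly.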

In conclusion, AST-T5 is a pretraining paradigm that harnesses the power of ASTs to boost the performance of code-centric language models. AST-T5 consistently outperforms similar-sized language models across various code-related tasks, particularly code-to-code tasks, surpassing CodeT5 in exact match scores on the Bugs2Fix task and Java-C# transpilation in CodeXGLUE. The simplicity and adaptability of AST-T5 make it a potential drop-in replacement for any encoder-decoder language model, highlighting its potential for real-world deployments. Future work may explore the scalability of AST-T5 by training larger models on more expansive datasets and evaluating the model on the entire sanitized subset without few-shot prompts.

Check out the Paper and GitHub. All credit for this research goes to the researchers of this project.

Sana Hassan, a consulting intern at Marktechpost and dual-degree student at IIT Madras, is passionate about applying technology and AI to address real-world challenges. With a keen interest in solving practical problems, he brings a fresh perspective to the intersection of AI and real-life solutions.