Introduction to Deep Learning Libraries: PyTorch and Lightning AI

Photograph by Google DeepMind

Deep learning is a branch of machine learning based on neural networks. In other machine learning models, the data processing needed to find meaningful features is often done manually or relies on domain expertise; deep learning models, loosely inspired by the human brain, can discover the important features on their own, which often improves model performance.

There are many applications for deep learning models, including facial recognition, fraud detection, speech-to-text, text generation, and many more. Deep learning has become a standard approach in many advanced machine learning applications, and we have nothing to lose by learning about it.

To develop a deep learning model, there are various library frameworks we can rely on rather than working from scratch. In this article, we will discuss two libraries we can use to develop deep learning models: PyTorch and Lightning AI. Let's get into it.

PyTorch is an open-source framework for training deep learning neural networks. It was developed by the Meta team in 2016 and has grown in popularity since. The rise in popularity is largely due to PyTorch combining the GPU backend library from Torch with the Python language. This combination makes the package easy for users to follow while remaining powerful for developing deep learning models.

There are several standout PyTorch features, including a friendly front-end, distributed training, and a fast and flexible experimentation process. Because PyTorch has many users, community development and investment are also substantial. That is why learning PyTorch is likely to be beneficial in the long run.

PyTorch's building block is the tensor, a multi-dimensional array used to encode all the inputs, outputs, and model parameters. You can think of a tensor as being like a NumPy array but with the ability to run on a GPU.
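To make the NumPy comparison concrete, here is a minimal sketch of creating tensors and moving them to a device. The variable names are illustrative, and the device check simply falls back to the CPU when no GPU is available.

```python
import numpy as np
import torch

# Build a tensor from a nested Python list and from a NumPy array.
from_list = torch.tensor([[1.0, 2.0], [3.0, 4.0]])
array = np.arange(6, dtype=np.float32).reshape(2, 3)
from_numpy = torch.from_numpy(array)  # on the CPU this shares memory with `array`

# Tensors support NumPy-style element-wise arithmetic.
doubled = from_numpy * 2

# Use the GPU when one is available, otherwise stay on the CPU.
device = "cuda" if torch.cuda.is_available() else "cpu"
on_device = doubled.to(device)

print(from_list.shape, from_list.dtype)
print(on_device.device.type)
print(on_device.cpu().numpy())  # convert back to NumPy for inspection
```

The same code runs unchanged on a CPU-only machine, which is why the rest of the tutorial can keep a single device variable.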

Let's try out the PyTorch library. It's recommended to follow the tutorial in the cloud, such as on Google Colab, if you don't have access to a GPU system (although it still works on a CPU). If you want to start locally, you need to install the library via the installation page on the PyTorch website. Select the system and specification that match your setup.

For example, the command below is the pip install for a CUDA-capable system.

pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118

After the installation finishes, let's try some PyTorch capabilities to develop a deep learning model. In this tutorial, we will build a simple image classification model with PyTorch, based on the PyTorch web tutorial. We will walk through the code and explain what happens in it.

First, we download the dataset with PyTorch. For this example, we use the MNIST dataset, which is a handwritten digit classification dataset.

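from torchvision import datasets

# Download the training split into the "image_data" folder
train = datasets.MNIST(
    root="image_data",
    train=True,
    download=True
)

# Download the test split into the same folder
test = datasets.MNIST(
    root="image_data",
    train=False,
    download=True,
)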

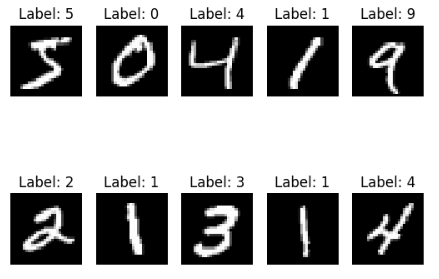

We download both the MNIST train and test datasets to our root folder. Let's see what our dataset looks like.

import matplotlib.pyplot as plt

# Plot the first ten images in a 2 x 5 grid with their labels
for i, (img, label) in enumerate(list(train)[:10]):
    plt.subplot(2, 5, i+1)
    plt.imshow(img, cmap="gray")
    plt.title(f'Label: {label}')
    plt.axis('off')

plt.show()

Each image is a single digit between zero and nine, meaning we have ten labels. Next, let's develop an image classifier based on this dataset.

We need to transform the image dataset into tensors to develop a deep learning model with PyTorch. As each image is a PIL object, we can use the torchvision ToTensor transform to perform the conversion. Additionally, we can apply the transform automatically through the datasets function.

from torchvision.transforms import ToTensor

# Reload the training split, converting each PIL image to a tensor
train = datasets.MNIST(
    root="data",
    train=True,
    download=True,
    transform=ToTensor()
)

# Reload the test split with the same transform
test = datasets.MNIST(
    root="data",
    train=False,
    download=True,
    transform=ToTensor()
)

By passing the transformation function to the transform parameter, we control what the data looks like. Next, we wrap the data in a DataLoader object so the PyTorch model can access our image data.

from torch.utils.data import DataLoader

size = 64

train_dl = DataLoader(train, batch_size=size)
test_dl = DataLoader(test, batch_size=size)

# Inspect the first batch only
for X, y in test_dl:
    print(f"Shape of X [N, C, H, W]: {X.shape}")
    print(f"Shape of y: {y.shape} {y.dtype}")
    break

Shape of X [N, C, H, W]: torch.Size([64, 1, 28, 28])
Shape of y: torch.Size([64]) torch.int64

In the code above, we create DataLoader objects for the train and test data. Each batch iteration returns 64 features and labels. Additionally, the shape of each image is 28 * 28 (height * width).
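The batching behaviour can be checked without MNIST. The following sketch wraps 150 random fake images in a TensorDataset (an assumption made purely for illustration) and shows that the last batch is smaller when the dataset size is not a multiple of the batch size.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# 150 fake grayscale images with random integer labels in [0, 10).
images = torch.rand(150, 1, 28, 28)
labels = torch.randint(0, 10, (150,))
dataset = TensorDataset(images, labels)

loader = DataLoader(dataset, batch_size=64)

# Collect the shape of every feature batch the loader yields.
batch_shapes = [tuple(X.shape) for X, y in loader]

print(len(loader))   # number of batches per pass over the data
print(batch_shapes)  # two full batches of 64, then the remaining 22 samples
```

DataLoader also accepts shuffle=True to reorder samples on every pass and drop_last=True to discard the incomplete final batch; the tutorial keeps the defaults.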

Next, we develop the neural network model object.

from torch import nn

# Change to 'cuda' if you have access to a GPU
device = "cpu"

class NNModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()
        self.lr_stack = nn.Sequential(
            nn.Linear(28*28, 128),
            nn.ReLU(),
            nn.Linear(128, 128),
            nn.ReLU(),
            nn.Linear(128, 10)
        )

    def forward(self, x):
        x = self.flatten(x)
        logits = self.lr_stack(x)
        return logits

model = NNModel().to(device)
print(model)

NNModel(
  (flatten): Flatten(start_dim=1, end_dim=-1)
  (lr_stack): Sequential(
    (0): Linear(in_features=784, out_features=128, bias=True)
    (1): ReLU()
    (2): Linear(in_features=128, out_features=128, bias=True)
    (3): ReLU()
    (4): Linear(in_features=128, out_features=10, bias=True)
  )
)

In the object above, we create a neural network model with a few layers. To develop the model object, we subclass nn.Module and create the neural network layers inside __init__.

We first flatten each 2D image into a one-dimensional vector of pixel values with the flatten layer. Then, we use nn.Sequential to wrap our layers into a sequence. Inside the sequential container, we have our model layers:

nn.Linear(28*28, 128),
nn.ReLU(),
nn.Linear(128, 128),
nn.ReLU(),
nn.Linear(128, 10)

In sequence, what happens above is:

- First, the input data, which has 28*28 features, is transformed by a linear function in the linear layer, producing 128 features as the output.

- ReLU is a non-linear activation function placed between the model input and output to introduce non-linearity.

- The 128 features are fed into the next linear layer, which outputs 128 features.

- Another ReLU activation function is applied.

- The 128 features are fed into the final linear layer, which outputs 10 features (our dataset has only 10 labels).
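The sequence above can be traced directly by pushing a batch through each layer and recording the shapes. This is a small sketch on a random batch, with the layer stack rebuilt outside the class so it is self-contained.

```python
import torch
from torch import nn

flatten = nn.Flatten()
stack = nn.Sequential(
    nn.Linear(28 * 28, 128),
    nn.ReLU(),
    nn.Linear(128, 128),
    nn.ReLU(),
    nn.Linear(128, 10),
)

x = torch.rand(64, 1, 28, 28)  # a synthetic batch of 64 images
x = flatten(x)                 # [64, 1, 28, 28] -> [64, 784]
shapes = [tuple(x.shape)]
for layer in stack:
    x = layer(x)
    shapes.append(tuple(x.shape))

# Each linear layer has in_features * out_features weights plus out_features biases.
n_params = sum(p.numel() for p in stack.parameters())

print(shapes)
print(n_params)  # 100480 + 16512 + 1290 = 118282
```

The ReLU layers leave the shape unchanged and have no parameters, so all 118,282 trainable values come from the three linear layers.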

Finally, the forward function defines how the input actually passes through the model. Next, the model needs a loss function and an optimization function.

from torch.optim import SGD

loss_fn = nn.CrossEntropyLoss()
optimizer = SGD(model.parameters(), lr=1e-3)
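For intuition about these two objects, the sketch below reproduces the cross-entropy value by hand and performs a single SGD update on a toy parameter. The logits, targets, and the squared-sum loss are made-up examples, not part of the tutorial model.

```python
import torch
from torch import nn

torch.manual_seed(0)
logits = torch.randn(4, 10)            # raw scores for 4 samples and 10 classes
targets = torch.tensor([3, 0, 9, 1])   # true class index per sample

# Built-in loss: expects raw logits, not probabilities.
builtin = nn.CrossEntropyLoss()(logits, targets)

# Manual version: mean negative log-probability of the true class.
log_probs = torch.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(4), targets].mean()
print(torch.allclose(builtin, manual))

# One SGD step on a toy parameter: w <- w - lr * grad.
w = torch.tensor([1.0, -2.0], requires_grad=True)
optimizer = torch.optim.SGD([w], lr=0.1)
loss = (w ** 2).sum()   # the gradient of this loss is 2 * w
loss.backward()
optimizer.step()
print(w.detach())       # 1.0 - 0.1 * 2.0 and -2.0 - 0.1 * (-4.0)
```

Because CrossEntropyLoss applies the log-softmax internally, the model's final layer can output raw logits, as it does in this tutorial.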

In the next code, we prepare the training and test functions before we run the modeling activity.

import torch

def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)
    model.train()  # switch to training mode
    for batch, (X, y) in enumerate(dataloader):
        X, y = X.to(device), y.to(device)

        # Forward pass and loss
        pred = model(X)
        loss = loss_fn(pred, y)

        # Backpropagation, parameter update, then reset the gradients
        loss.backward()
        optimizer.step()
        optimizer.zero_grad()

        # Report progress every 100 batches
        if batch % 100 == 0:
            loss, current = loss.item(), (batch + 1) * len(X)
            print(f"loss: {loss:>2f} [{current:>5d}/{size:>5d}]")

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)
    num_batches = len(dataloader)
    model.eval()  # switch to evaluation mode
    test_loss, correct = 0, 0
    with torch.no_grad():  # no gradients are needed for evaluation
        for X, y in dataloader:
            X, y = X.to(device), y.to(device)
            pred = model(X)
            test_loss += loss_fn(pred, y).item()
            correct += (pred.argmax(1) == y).type(torch.float).sum().item()
    test_loss /= num_batches
    correct /= size
    print(f"Test Error: \n Accuracy: {(100*correct):>0.1f}%, Avg loss: {test_loss:>2f} \n")

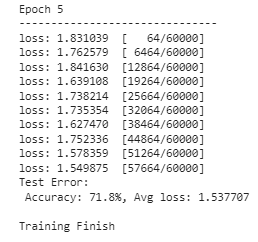
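Two details of these functions are easy to verify on toy data: gradients accumulate across backward calls unless they are reset, and accuracy is just the fraction of rows whose largest logit matches the label. The tiny linear model and hand-written logits below are assumptions for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)
model = nn.Linear(4, 3)
loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=1e-3)
X = torch.randn(8, 4)
y = torch.randint(0, 3, (8,))

# First backward pass stores the gradient.
loss_fn(model(X), y).backward()
first = model.weight.grad.clone()

# A second backward pass without a reset adds to the stored gradient.
loss_fn(model(X), y).backward()
accumulated = model.weight.grad.clone()

# After zero_grad, a backward pass gives the single-pass gradient again.
optimizer.zero_grad()
loss_fn(model(X), y).backward()
after_reset = model.weight.grad.clone()

print(torch.allclose(accumulated, 2 * first))
print(torch.allclose(after_reset, first))

# Accuracy from logits: compare the arg-max class with the label.
pred = torch.tensor([[2.0, 0.1, 0.3],
                     [0.2, 0.1, 3.0],
                     [0.5, 1.5, 0.1],
                     [1.0, 0.2, 0.1]])
target = torch.tensor([0, 2, 0, 0])
correct = (pred.argmax(1) == target).type(torch.float).sum().item()
print(correct / len(target))  # three of the four rows match
```

This is why the training loop calls optimizer.zero_grad() once per batch: without it, each update would mix in gradients from earlier batches.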

Now we're ready to run our model training. We decide how many epochs (full passes over the training data) we want to perform with our model. For this example, let's say we want it to run five times.

epoch = 5

for i in range(epoch):
    print(f"Epoch {i+1}\n-------------------------------")
    train(train_dl, model, loss_fn, optimizer)
    test(test_dl, model, loss_fn)

print("Completed!")
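As a quick sanity check on how much work this loop does, the arithmetic below counts batches and parameter updates. It assumes the standard MNIST training split of 60,000 images together with the batch size and epoch count used in this tutorial.

```python
import math

n_samples = 60_000   # size of the MNIST training split
batch_size = 64
epochs = 5

# The last batch is partial, so round up.
batches_per_epoch = math.ceil(n_samples / batch_size)
total_updates = batches_per_epoch * epochs

# The train function prints whenever batch % 100 == 0.
logs_per_epoch = len(range(0, batches_per_epoch, 100))

# Number of samples left over for the final batch of each epoch.
last_batch = n_samples - (batches_per_epoch - 1) * batch_size

print(batches_per_epoch)  # 938
print(total_updates)      # 4690
print(logs_per_epoch)     # 10
print(last_batch)         # 32
```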

The model has now finished training and can be used for any image prediction activity. The results may vary, so expect different results from the image above.
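A prediction step might look like the sketch below. It uses an untrained stand-in network and random images so that it runs on its own; the build_model helper and the in-memory buffer are illustrative choices, and with the trained model from above you would pass real image tensors instead.

```python
import io

import torch
from torch import nn

def build_model():
    # Hypothetical stand-in with the same input and output sizes as NNModel.
    return nn.Sequential(
        nn.Flatten(),
        nn.Linear(28 * 28, 128),
        nn.ReLU(),
        nn.Linear(128, 10),
    )

model = build_model()
images = torch.rand(5, 1, 28, 28)  # random stand-ins for five digit images

model.eval()                # evaluation mode
with torch.no_grad():       # no gradient tracking during inference
    logits = model(images)
    probs = logits.softmax(dim=1)    # convert logits to class probabilities
    predicted = probs.argmax(dim=1)  # most likely digit per image

print(predicted)

# Save the weights and load them into a fresh copy of the architecture.
buffer = io.BytesIO()       # in-memory file; a path on disk works the same way
torch.save(model.state_dict(), buffer)
buffer.seek(0)
restored = build_model()
restored.load_state_dict(torch.load(buffer))
restored.eval()
with torch.no_grad():
    same = torch.equal(restored(images), logits)
print(same)
```

Saving the state dict rather than the whole model object keeps the file independent of how the class is defined, at the cost of having to rebuild the architecture before loading.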

These are just a few of the things PyTorch can do, but you can see that building a model with PyTorch is easy. If you are interested in pre-trained models, PyTorch has a hub you can access.

Lightning AI is a company that provides various products to minimize the time needed to train PyTorch deep learning models and to simplify the process. One of its open-source products is PyTorch Lightning, a library that provides a framework for training and deploying PyTorch models.

Lightning offers several features, including code flexibility, no boilerplate, a minimal API, and improved team collaboration. Lightning also offers features such as multi-GPU utilization and swift, low-precision training. This makes Lightning a good alternative for developing our PyTorch model.

Let's try out model development with Lightning. To start, we need to install the package.

With Lightning installed, we also install another Lightning AI product called TorchMetrics to simplify metric selection.

With all the libraries installed, we develop the same model from our previous example using a Lightning wrapper. Below is the whole code for developing the model.

import torch
import torchmetrics
import pytorch_lightning as pl
from torch import nn
from torch.optim import SGD

class NNModel(pl.LightningModule):
    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()
        self.lr_stack = nn.Sequential(
            nn.Linear(28 * 28, 128),
            nn.ReLU(),
            nn.Linear(128, 128),
            nn.ReLU(),
            nn.Linear(128, 10)
        )
        self.train_acc = torchmetrics.Accuracy(task="multiclass", num_classes=10)
        self.valid_acc = torchmetrics.Accuracy(task="multiclass", num_classes=10)

    def forward(self, x):
        x = self.flatten(x)
        logits = self.lr_stack(x)
        return logits

    def training_step(self, batch, batch_idx):
        # Lightning moves each batch to the right device, so no manual .to(device) is needed
        x, y = batch
        pred = self(x)
        loss = nn.CrossEntropyLoss()(pred, y)
        self.log('train_loss', loss)

        # Compute training accuracy
        acc = self.train_acc(pred.softmax(dim=-1), y)
        self.log('train_acc', acc, on_step=True, on_epoch=True, prog_bar=True)
        return loss

    def configure_optimizers(self):
        return SGD(self.parameters(), lr=1e-3)

    def test_step(self, batch, batch_idx):
        x, y = batch
        pred = self(x)
        loss = nn.CrossEntropyLoss()(pred, y)
        self.log('test_loss', loss)

        # Compute test accuracy
        acc = self.valid_acc(pred.softmax(dim=-1), y)
        self.log('test_acc', acc, on_step=True, on_epoch=True, prog_bar=True)
        return loss

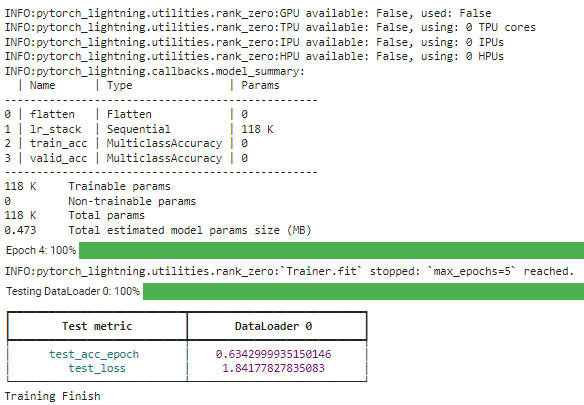

Let's break down what happens in the code above. The difference from the PyTorch model we developed previously is that the NNModel class now subclasses LightningModule. Additionally, we assign accuracy metrics for evaluation using TorchMetrics. Then, we add the training and testing steps inside the class and set up the optimization function.
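To illustrate what an accuracy metric object tracks across batches, here is a plain-PyTorch sketch with an update and compute interface. RunningAccuracy is a hypothetical helper written for this explanation; it is not the TorchMetrics implementation, only the general idea of accumulating counts over batches.

```python
import torch

class RunningAccuracy:
    """Hypothetical helper: accumulates correct predictions across batches."""

    def __init__(self):
        self.correct = 0
        self.total = 0

    def update(self, scores, target):
        # The predicted class is the index of the largest score in each row.
        self.correct += (scores.argmax(dim=1) == target).sum().item()
        self.total += target.numel()

    def compute(self):
        return self.correct / self.total if self.total else 0.0

metric = RunningAccuracy()

# Batch 1: both predictions are correct.
metric.update(torch.tensor([[2.0, 1.0], [0.0, 3.0]]), torch.tensor([0, 1]))

# Batch 2: predictions are 0, 1, 0 against labels 1, 1, 0, so two of three match.
batch2 = torch.tensor([[0.5, 0.1], [0.2, 0.9], [1.0, 0.0]])
metric.update(batch2, torch.tensor([1, 1, 0]))

print(metric.compute())  # 4 correct out of 5 samples

# Softmax keeps the ordering of the scores, so the arg-max class is unchanged.
same_argmax = torch.equal(batch2.argmax(dim=1), batch2.softmax(dim=-1).argmax(dim=1))
print(same_argmax)
```

Since softmax preserves the ordering of scores within a row, the arg-max prediction is the same whether the metric is computed from raw logits or from probabilities.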

With the model set, we run the model training using the transformed DataLoader objects.

# Create a PyTorch Lightning trainer
trainer = pl.Trainer(max_epochs=5)

# Create the model
model = NNModel()

# Fit the model
trainer.fit(model, train_dl)

# Test the model
trainer.test(model, test_dl)

print("Training Finish")

With the Lightning library, we can easily tweak the structure as needed. For further reading, you can read their documentation.

PyTorch is a library for developing deep learning models, and it provides an easy framework for accessing many advanced APIs. Lightning AI also supports the library, providing a framework that simplifies model development and improves development flexibility. This article introduced both libraries' features and simple code implementations.

Cornellius Yudha Wijaya is a data science assistant manager and data writer. While working full-time at Allianz Indonesia, he loves to share Python and data tips via social media and writing media.