Scalable training platform with Amazon SageMaker HyperPod for innovation: a video generation case study

Video generation has become the latest frontier in AI research, following the success of text-to-image models. Luma AI’s recently launched Dream Machine represents a significant advancement in this field. This text-to-video API generates high-quality, realistic videos quickly from text and images. Trained on Amazon SageMaker HyperPod, Dream Machine excels at creating consistent characters, smooth motion, and dynamic camera movements.

To accelerate iteration and innovation in this field, sufficient computing resources and a scalable platform are essential. During the iterative research and development phase, data scientists and researchers need to run multiple experiments with different versions of algorithms and scale to larger models. Model parallel training becomes necessary when the total model footprint (model weights, gradients, and optimizer states) exceeds the memory of a single GPU. However, building large distributed training clusters is a complex and time-intensive process that requires in-depth expertise. Furthermore, as clusters scale to larger sizes (for example, more than 32 nodes), they require built-in resiliency mechanisms such as automated faulty node detection and replacement to improve cluster goodput and maintain efficient operations. These challenges underscore the importance of robust infrastructure and management systems in supporting advanced AI research and development.
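To make the single-GPU memory limit concrete, the following is a minimal sketch of a footprint estimate. It assumes mixed-precision training with the Adam optimizer, which is commonly approximated as about 16 bytes per parameter (2 for half-precision weights, 2 for half-precision gradients, and 12 for full-precision optimizer states). The function names and the 24 GiB GPU size are illustrative assumptions, and activations are ignored, so real usage is higher.

```python
# Rough estimate of the memory needed for model states during training.
# Assumption: mixed-precision training with Adam, about 16 bytes per parameter
# (2 for fp16 weights, 2 for fp16 gradients, 12 for fp32 optimizer states).
# Activations and framework overhead are ignored, so real usage is higher.

BYTES_PER_PARAM = 16


def model_state_gib(num_params: float, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Return the approximate size of weights, gradients, and optimizer states in GiB."""
    return num_params * bytes_per_param / 2**30


def exceeds_single_gpu(num_params: float, gpu_memory_gib: float) -> bool:
    """Return True when the model states alone do not fit in one GPU's memory."""
    return model_state_gib(num_params) > gpu_memory_gib


if __name__ == "__main__":
    gpu_memory_gib = 24  # hypothetical GPU size, used only for illustration
    for label, params in [("1B", 1e9), ("3B", 3e9)]:
        print(
            f"{label} parameters: {model_state_gib(params):.1f} GiB of model states, "
            f"exceeds one GPU: {exceeds_single_gpu(params, gpu_memory_gib)}"
        )
```

Under these assumptions, a model with a few billion parameters already needs its states split across devices, which is the situation where model parallel training or state partitioning becomes necessary.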

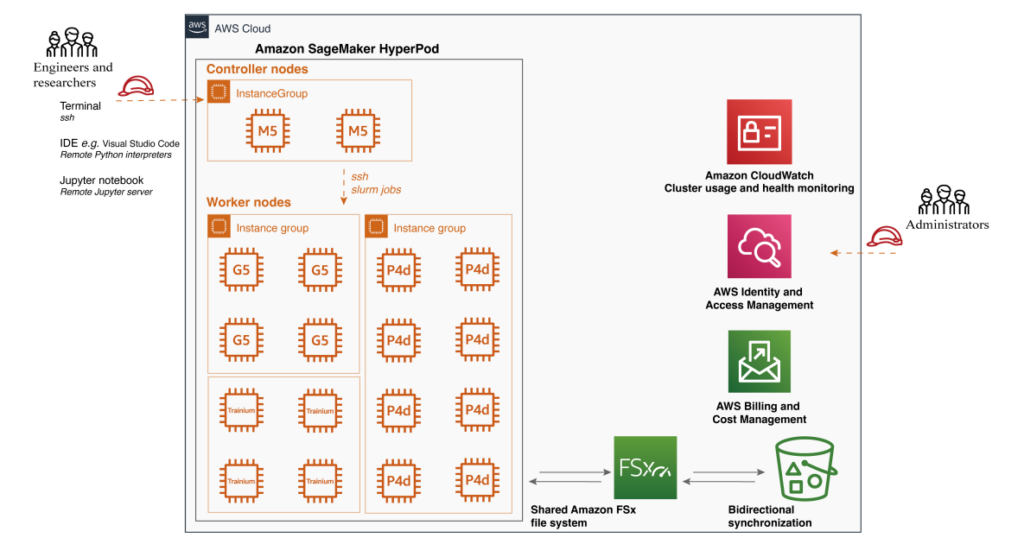

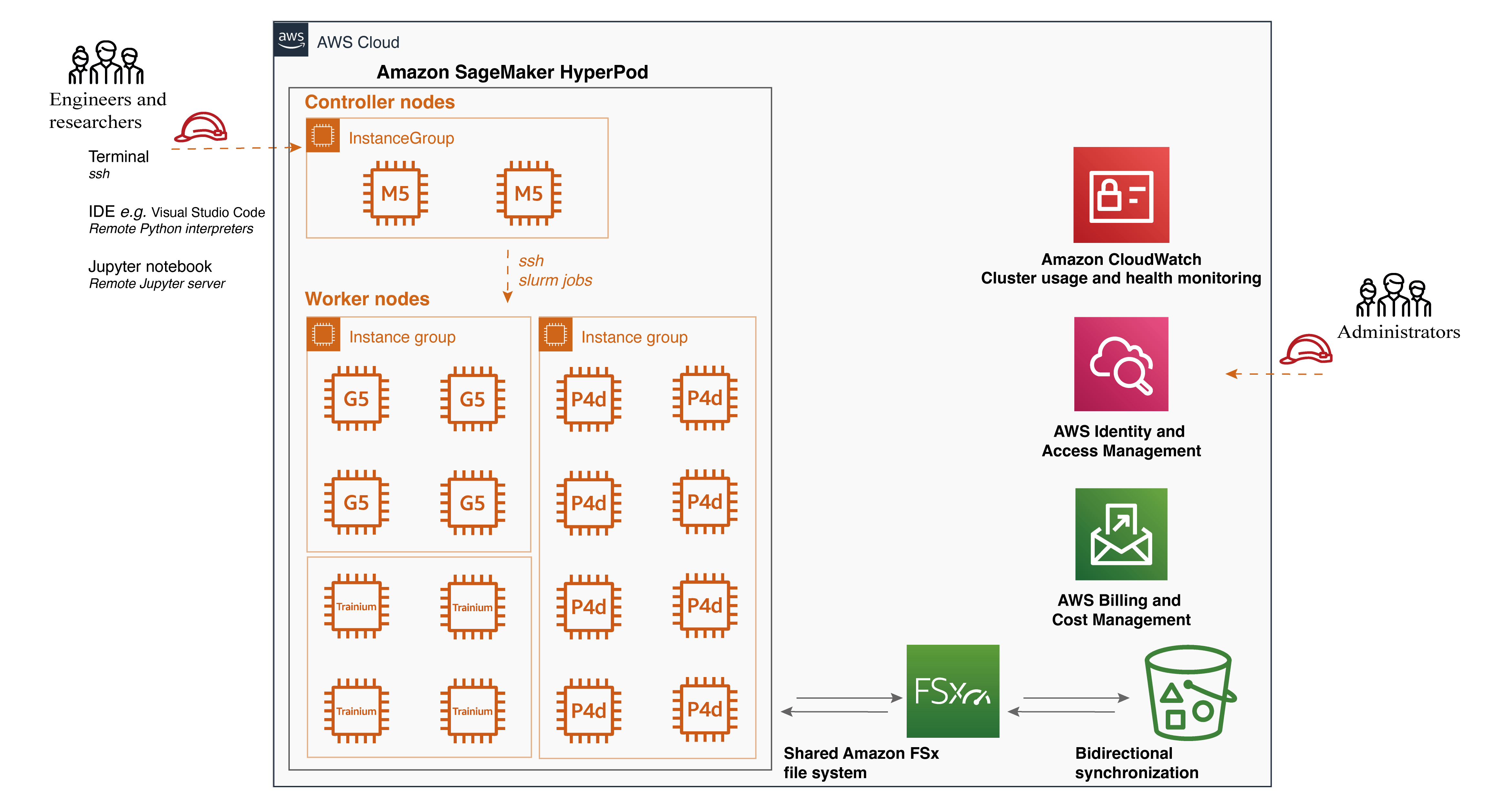

Amazon SageMaker HyperPod, launched during re:Invent 2023, is purpose-built infrastructure designed to address the challenges of large-scale training. It removes the undifferentiated heavy lifting involved in building and optimizing machine learning (ML) infrastructure for training foundation models (FMs). SageMaker HyperPod offers a highly customizable user interface using Slurm, allowing users to select and install any required frameworks or tools. Clusters are provisioned with the instance type and count of your choice and can be retained across workloads. With these capabilities, customers are adopting SageMaker HyperPod as their innovation platform for more resilient and performant model training, enabling them to build state-of-the-art models faster.

In this post, we share an ML infrastructure architecture that uses SageMaker HyperPod to support research team innovation in video generation. We discuss the advantages and pain points addressed by SageMaker HyperPod, provide a step-by-step setup guide, and demonstrate how to run a video generation algorithm on the cluster.

Training video generation algorithms on Amazon SageMaker HyperPod: background and architecture

Video generation is an exciting and rapidly evolving field that has seen significant advancements in recent years. While generative modeling has made tremendous progress in the domain of image generation, video generation still faces several challenges that require further improvement.

Algorithm architecture complexity with the diffusion model family

Diffusion models have recently made significant strides in generating high-quality images, prompting researchers to explore their potential in video generation. By leveraging the architecture and pre-trained generative capabilities of diffusion models, scientists aim to create visually impressive videos. The process extends image generation techniques to the temporal domain. Starting with noisy frames, the model iteratively refines them, removing random elements while adding meaningful details guided by text or image prompts. This approach progressively transforms abstract patterns into coherent video sequences, effectively translating diffusion models’ success in static image creation to dynamic video synthesis.

However, the compute requirements for video generation using diffusion models increase significantly compared to image generation for several reasons:

- Temporal dimension – Unlike image generation, video generation requires processing multiple frames simultaneously. This adds a temporal dimension to the original 2D UNet, significantly increasing the amount of data that needs to be processed in parallel.

- Iterative denoising process – The diffusion process involves multiple iterations of denoising for each frame. When extended to videos, this iterative process must be applied to multiple frames, multiplying the computational load.

- Increased parameter count – To handle the additional complexity of video data, models often require more parameters, leading to larger memory footprints and increased computational demands.

- Higher resolution and longer sequences – Video generation often aims for higher resolution outputs and longer sequences compared to single image generation, further amplifying the computational requirements.

As a result of these factors, diffusion models for video generation are less operationally efficient and significantly more compute-intensive than those for image generation. This increased computational demand underscores the need for advanced hardware solutions and optimized model architectures to make video generation more practical and accessible.
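The effect of the temporal dimension and the iterative denoising process can be illustrated with simple arithmetic. The sketch below counts latent tensor elements for one image compared with a short clip. It assumes a latent diffusion setup with 4 latent channels and 8x spatial downsampling, as in the Stable Diffusion family; the function names, frame count, and step count are illustrative assumptions rather than values from this post.

```python
# Illustrative arithmetic: how latent tensor size grows from an image to a video.
# Assumption: a latent diffusion model with 4 latent channels and 8x spatial
# downsampling (as in the Stable Diffusion family). Frame and step counts are
# hypothetical values chosen for the example.


def latent_elements(height: int, width: int, frames: int = 1,
                    channels: int = 4, downsample: int = 8) -> int:
    """Number of latent values the denoising network processes per step."""
    return channels * frames * (height // downsample) * (width // downsample)


def denoising_workload(height: int, width: int, frames: int, steps: int) -> int:
    """Latent values processed over all denoising steps (a proxy for compute)."""
    return latent_elements(height, width, frames) * steps


if __name__ == "__main__":
    image = latent_elements(768, 768)
    video = latent_elements(768, 768, frames=24)
    print("image latent elements:", image)
    print("24-frame video latent elements:", video)
    print("ratio:", video // image)
    print("values processed over 30 steps:", denoising_workload(768, 768, 24, 30))
```

The count scales linearly with frames and steps, before accounting for the extra temporal layers and parameters that video models add on top.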

Handling the increased computational requirements

Improving video generation quality requires a significant increase in the size of the models and training data. Researchers have concluded that scaling up the base model size leads to substantial improvements in video generation performance. However, this growth comes with considerable challenges in terms of computing power and memory resources. Training larger models requires more computational power and memory space, which can limit the accessibility and practical use of these models. As the model size increases, the computational requirements grow steeply, making it difficult to train these models on a single GPU, or even in a single-node multi-GPU setting. Moreover, storing and manipulating the large datasets required for training also pose significant challenges in terms of infrastructure and costs. High-quality video datasets are usually massive, requiring substantial storage capacity and efficient data management techniques. Transferring and processing these datasets can be time-consuming and resource-intensive, adding to the overall computational burden.

Maintaining temporal consistency and continuity

Maintaining temporal consistency and continuity becomes increasingly challenging as the length of the generated video increases. Temporal consistency refers to the continuity of visual elements, such as objects, characters, and scenes, across subsequent frames. Inconsistencies in appearance, movement, or lighting can lead to jarring visual artifacts and disrupt the overall viewing experience. To address this challenge, researchers have explored the use of multiframe inputs, which provide the model with information from multiple consecutive frames to better understand and model the relationships and dependencies across time. These techniques preserve high-resolution details in visual quality while simulating a continuous and smooth temporal motion process. However, they require more sophisticated modeling techniques and increased computational resources.

Algorithm overview

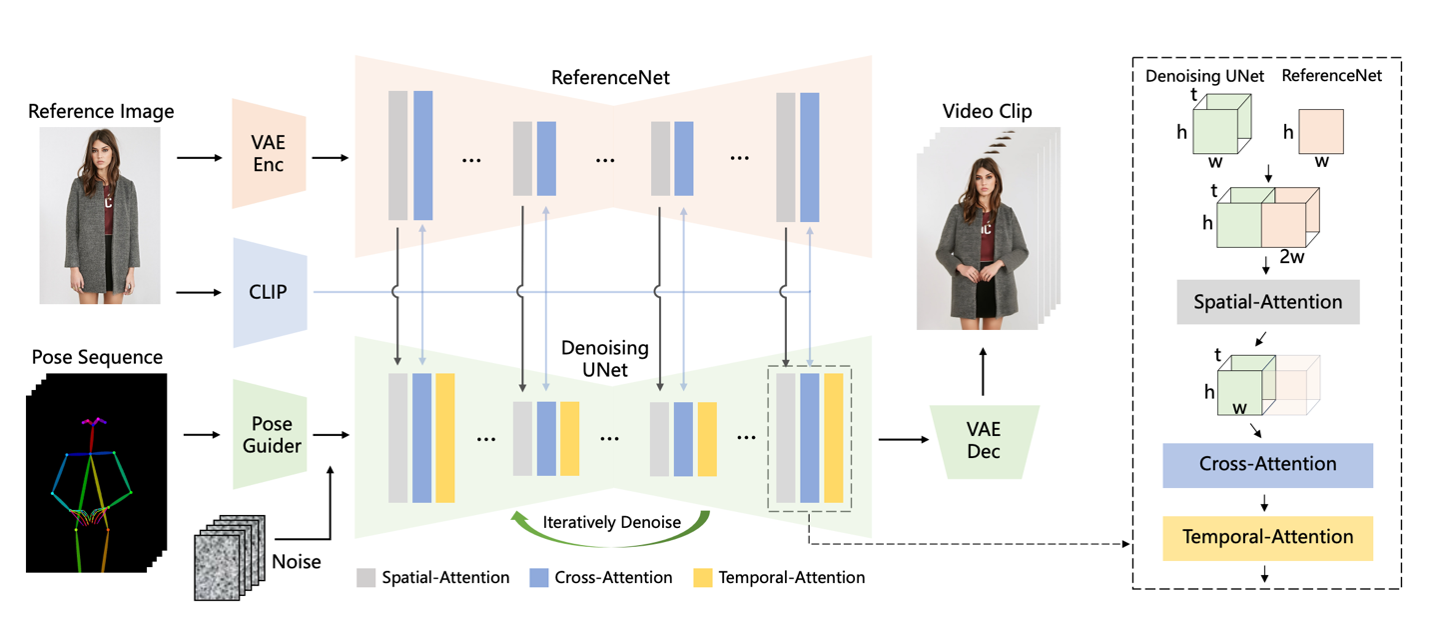

In the following sections, we illustrate how to run the Animate Anyone: Consistent and Controllable Image-to-Video Synthesis for Character Animation algorithm on Amazon SageMaker HyperPod for video generation. Animate Anyone is one of the methods for transforming character images into animated videos controlled by desired pose sequences. The key components of the architecture include:

- ReferenceNet – A symmetrical UNet structure that captures spatial details of the reference image and integrates them into the denoising UNet using spatial attention to preserve appearance consistency

- Pose guider – A lightweight module that efficiently integrates pose control signals into the denoising process to ensure pose controllability

- Temporal layer – Added to the denoising UNet to model relationships across multiple frames, preserving high-resolution details and ensuring temporal stability and continuity of the character’s motion

The model architecture is illustrated in the following image from its original research paper. The method is trained on a dataset of video clips and achieves state-of-the-art results on fashion video and human dance synthesis benchmarks, demonstrating its ability to animate arbitrary characters while maintaining appearance consistency and temporal stability. The implementation of AnimateAnyone can be found in this repository.

To address the challenges of the large-scale training infrastructure required for video generation, we can use the power of Amazon SageMaker HyperPod. While many customers have adopted SageMaker HyperPod for large-scale training, such as Luma’s launch of Dream Machine and Stability AI’s work on FMs for image or video generation, we believe that the capabilities of SageMaker HyperPod can also benefit lighter ML workloads, including full fine-tuning.

Amazon SageMaker HyperPod concept and advantage

SageMaker HyperPod offers a comprehensive set of features that significantly enhance the efficiency and effectiveness of ML workflows. From purpose-built infrastructure for distributed training to customizable environments and seamless integration with tools like Slurm, SageMaker HyperPod empowers ML practitioners to focus on their core tasks while taking advantage of the power of distributed computing. With SageMaker HyperPod, you can accelerate your ML projects, handle larger datasets and models, and drive innovation in your organization. SageMaker HyperPod provides several key features and advantages in the scalable training architecture.

Purpose-built infrastructure – One of the primary advantages of SageMaker HyperPod is its purpose-built infrastructure for distributed training. It simplifies the setup and management of clusters, allowing you to easily configure the desired instance types and counts, which can be retained across workloads. Thanks to this flexibility, you can adapt to various scenarios. For example, when working with a smaller backbone model like Stable Diffusion 1.5, you can run multiple experiments simultaneously, each on a single GPU, to accelerate the iterative development process. As your dataset grows, you can seamlessly switch to data parallelism and distribute the workload across multiple GPUs, such as eight GPUs, to reduce compute time. Furthermore, when dealing with larger backbone models like Stable Diffusion XL, SageMaker HyperPod offers the flexibility to scale and use model parallelism.

Shared file system – SageMaker HyperPod supports the attachment of a shared file system, such as Amazon FSx for Lustre. This integration brings several benefits to your ML workflow. FSx for Lustre enables full bidirectional synchronization with Amazon Simple Storage Service (Amazon S3), including the synchronization of deleted files and objects. It also allows you to synchronize file systems with multiple S3 buckets or prefixes, providing a unified view across multiple datasets. In our case, this means that the installed libraries within the conda virtual environment are synchronized across different worker nodes, even if the cluster is torn down and recreated. Additionally, input video data for training and inference results can be seamlessly synchronized with S3 buckets, which makes validating inference results easier.

Customizable environment – SageMaker HyperPod offers the flexibility to customize your cluster environment using lifecycle scripts. These scripts allow you to install additional frameworks, debugging tools, and optimization libraries tailored to your specific needs. You can also split your training data and model across all nodes for parallel processing, fully using the cluster’s compute and network infrastructure. Moreover, you have full control over the execution environment, including the ability to easily install and customize virtual Python environments for each project. In our case, all the required libraries for running the training script are installed within a conda virtual environment, which is shared across all worker nodes, simplifying the process of distributed training on multi-node setups. We also installed MLflow Tracking on the controller node to monitor the training progress.

Job distribution with Slurm integration – SageMaker HyperPod seamlessly integrates with Slurm, a popular open source cluster management and job scheduling system. Slurm can be installed and set up through lifecycle scripts as part of the cluster creation process, providing a highly customizable user interface. With Slurm, you can efficiently schedule jobs across different GPU resources, so you can run multiple experiments in parallel or use distributed training to train large models for improved performance. You can also customize the job queues, prioritization algorithms, and job preemption policies, ensuring optimal resource use and streamlining your ML workflows. If you are looking for a Kubernetes-based administrator experience, Amazon SageMaker HyperPod recently introduced Amazon EKS support, which lets you manage clusters using a Kubernetes-based interface.

Enhanced productivity – To further enhance productivity, SageMaker HyperPod supports connecting to the cluster using Visual Studio Code (VS Code) through a Secure Shell (SSH) connection. You can easily browse and modify code within an integrated development environment (IDE), execute Python scripts seamlessly as if in a local environment, and launch Jupyter notebooks for quick development and debugging. The Jupyter notebook experience within VS Code provides a familiar and intuitive interface for iterative experimentation and analysis.

Set up SageMaker HyperPod and run video generation algorithms

In this walkthrough, we use the AnimateAnyone algorithm as an illustration for video generation. AnimateAnyone is a state-of-the-art algorithm that generates high-quality videos from input images or videos. Our walkthrough guidance code is available on GitHub.

Set up the cluster

To create the SageMaker HyperPod infrastructure, follow the detailed step-by-step guidance for cluster setup from the Amazon SageMaker HyperPod workshop studio.

The two things you need to prepare are a provisioning_parameters.json file, required by HyperPod for setting up Slurm, and a cluster-config.json file, the configuration file for creating the HyperPod cluster. Within these configuration files, you need to specify the InstanceGroupName, InstanceType, and InstanceCount for the controller group and worker group, as well as the execution role attached to the group.
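As a rough sketch of how these two files relate, the following script builds both as Python dictionaries and prints them as JSON. The InstanceGroupName, InstanceType, InstanceCount, and execution role fields come from the description above; everything else is an assumption for illustration, including the group names, the controller instance type, the lifecycle script name, the partition name, and the layout of provisioning_parameters.json, which is modeled on the workshop examples as we understand them. Check the workshop for the authoritative format before using it.

```python
import json

# Hypothetical names and values, used only for illustration.
CONTROLLER_GROUP = "controller-machine"
WORKER_GROUP = "worker-group-1"


def instance_group(name, instance_type, count, role_arn, lifecycle_s3_uri):
    """One entry of the InstanceGroups list in cluster-config.json."""
    return {
        "InstanceGroupName": name,
        "InstanceType": instance_type,
        "InstanceCount": count,
        # Assumption: lifecycle scripts are stored in S3 with this entry point.
        "LifeCycleConfig": {"SourceS3Uri": lifecycle_s3_uri, "OnCreate": "on_create.sh"},
        "ExecutionRole": role_arn,
    }


def build_cluster_config(role_arn, lifecycle_s3_uri):
    """Cluster definition with one controller group and one GPU worker group."""
    return {
        "ClusterName": "video-gen-cluster",  # hypothetical cluster name
        "InstanceGroups": [
            instance_group(CONTROLLER_GROUP, "ml.m5.12xlarge", 1, role_arn, lifecycle_s3_uri),
            instance_group(WORKER_GROUP, "ml.g5.24xlarge", 2, role_arn, lifecycle_s3_uri),
        ],
    }


def build_provisioning_parameters():
    """Slurm setup parameters; the group names must match cluster-config.json."""
    return {
        "version": "1.0.0",
        "workload_manager": "slurm",
        "controller_group": CONTROLLER_GROUP,
        "worker_groups": [{"instance_group_name": WORKER_GROUP, "partition_name": "dev"}],
    }


if __name__ == "__main__":
    # Placeholder role ARN and bucket; replace with your own values.
    cluster = build_cluster_config(
        role_arn="arn:aws:iam::111122223333:role/ExampleHyperPodRole",
        lifecycle_s3_uri="s3://example-bucket/lifecycle-scripts/",
    )
    print(json.dumps(cluster, indent=2))
    print(json.dumps(build_provisioning_parameters(), indent=2))
```

Generating both files from the same constants is one way to keep the instance group names consistent, because a mismatch between the two files is an easy mistake to make when editing them by hand.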

One practical setup is bidirectional synchronization between Amazon FSx and Amazon S3. This can be done with the Amazon S3 integration for Amazon FSx for Lustre. It helps to establish a full bidirectional synchronization of your file systems with Amazon S3. In addition, it can synchronize your file systems with multiple S3 buckets or prefixes.

If you prefer a local IDE such as VS Code, you can also set up an SSH connection to the controller node within your IDE. In this way, the worker nodes can be used for running scripts within a conda environment and a Jupyter notebook server.

Run the AnimateAnyone algorithm

When the cluster is in service, you can connect to the controller node using SSH, then go into the worker nodes, where the GPU compute resources are available. You can follow the SSH Access to compute guide. We suggest installing the libraries on the worker nodes directly.

To create the conda environment, follow the instructions at Miniconda’s Quick command line install. You can then use the conda environment to install all required libraries.

To run AnimateAnyone, clone the GitHub repo and follow the instructions.

To train AnimateAnyone, launch stage 1 for training the denoising UNet and ReferenceNet, which enables the model to generate high-quality animated images under the condition of a given reference image and target pose. The denoising UNet and ReferenceNet are initialized from the pre-trained weights of Stable Diffusion.

In stage 2, the objective is to train the temporal layer to capture the temporal dependencies among video frames.

Once the training script runs as expected, use a Slurm scheduled job to run it on a single node. We provide a batch file to simulate the single-node training job. It can use a single GPU or a single node with multiple GPUs. To learn more, the documentation provides detailed instructions on running jobs on SageMaker HyperPod clusters.

    #!/bin/bash
    #SBATCH --job-name=video-gen
    #SBATCH -N 1
    #SBATCH --exclusive
    #SBATCH -o video-gen-stage-1.out

    export OMP_NUM_THREADS=1

    # Activate the conda environment
    source ~/miniconda3/bin/activate
    conda activate videogen

    # Launch stage 1 training on the allocated node
    srun accelerate launch train_stage_1.py --config configs/train/stage1.yaml

After submitting the job, check its status, for example with the Slurm squeue command.

By using a small batch size and setting use_8bit_adam=True, you can achieve efficient training on a single GPU. When each experiment fits on a single GPU, you can use a multi-GPU cluster to run multiple experiments in parallel.

The following code block is one example of running four jobs in parallel to test different hyperparameters. We provide the batch file here as well.

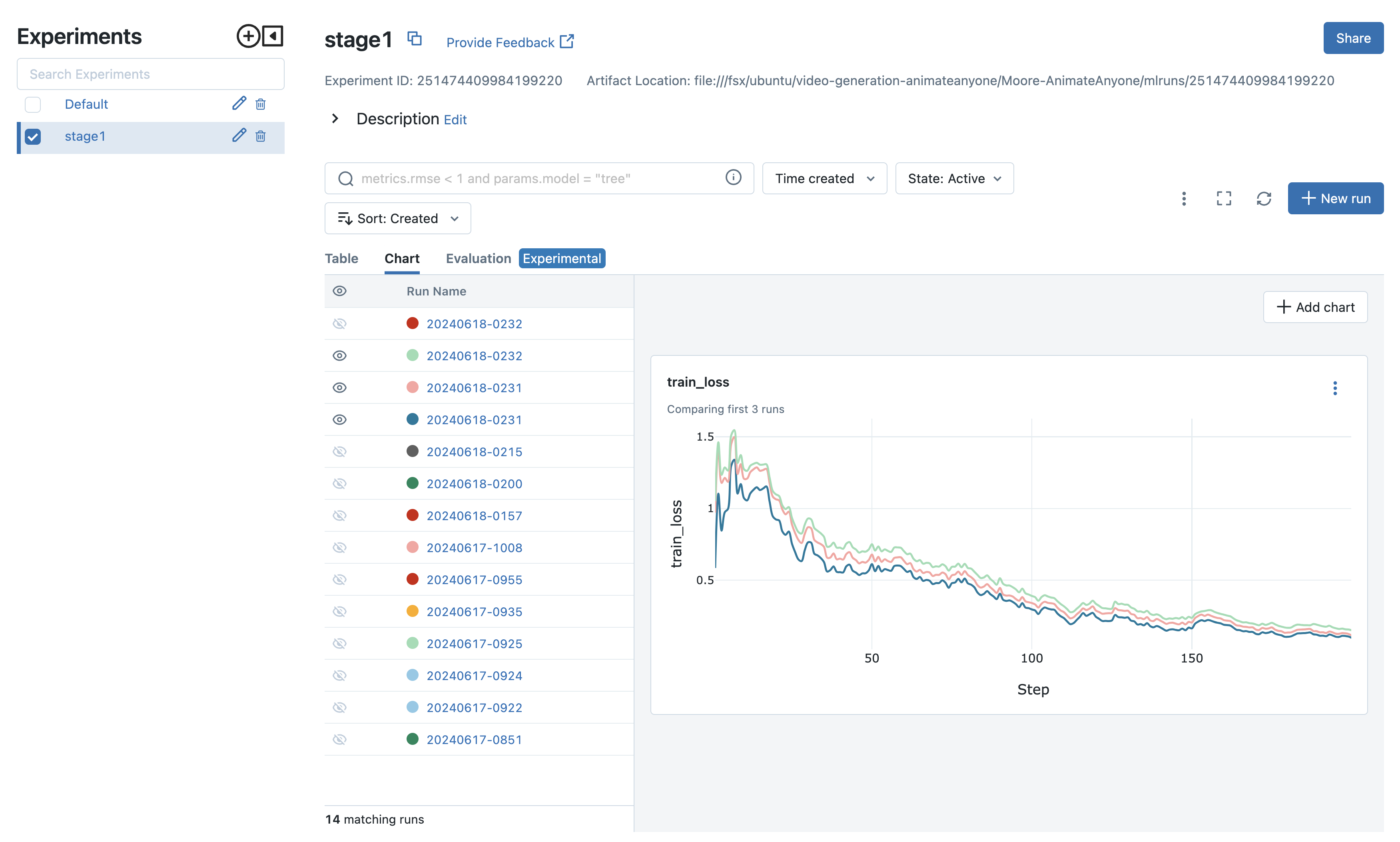
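As a rough illustration of the idea (not the authors’ batch file), the sketch below generates a Slurm batch script that starts four single-GPU runs on one node, pinning each run to its own GPU with CUDA_VISIBLE_DEVICES and waiting for all of them to finish. The per-experiment configuration file names, the job name, and the log file names are hypothetical; the conda environment name and the training entry point are taken from the batch file shown earlier.

```python
# Sketch: generate a Slurm batch script that runs one experiment per GPU.
# Hypothetical: the per-experiment config paths, job name, and log file names.


def build_sweep_script(config_paths, job_name="video-gen-sweep"):
    """Return the text of a batch script launching one training run per GPU."""
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        "#SBATCH -N 1",
        "#SBATCH --exclusive",
        "",
        "export OMP_NUM_THREADS=1",
        "source ~/miniconda3/bin/activate",
        "conda activate videogen",
        "",
    ]
    for gpu_index, config_path in enumerate(config_paths):
        # Each run sees exactly one GPU and goes to the background.
        lines.append(
            f"CUDA_VISIBLE_DEVICES={gpu_index} accelerate launch --num_processes 1 "
            f"train_stage_1.py --config {config_path} > sweep-{gpu_index}.out 2>&1 &"
        )
    # Keep the Slurm job alive until every background run has finished.
    lines.append("wait")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    configs = [f"configs/train/stage1_exp{i}.yaml" for i in range(4)]
    print(build_sweep_script(configs))
```

The generated text can be saved to a file and submitted with sbatch. Pinning GPUs through an environment variable avoids relying on how generic resources are configured in a particular Slurm installation, at the cost of managing the GPU assignment yourself.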

The experiments can then be compared, and you can move forward with the best configuration. In our scenario, shown in the following screenshot, we use different datasets and video preprocessing techniques to validate the stage 1 training. Then, we quickly draw conclusions about the impact on video quality with respect to stage 1 training results. For experiment tracking, besides installing MLflow on the controller node to monitor the training progress, you can also use the fully managed MLflow capability on Amazon SageMaker. This makes it easy for data scientists to use MLflow on SageMaker for model training, registration, and deployment.

Scale to multi-node GPU setup

As model sizes grow, single GPU memory quickly becomes a bottleneck. Large models easily exhaust memory with pure data parallelism, and implementing model parallelism can be challenging. DeepSpeed addresses these issues, accelerating model development and training.

ZeRO

DeepSpeed is a deep learning optimization library that aims to make distributed training easy, efficient, and effective. DeepSpeed’s ZeRO removes memory redundancies across data-parallel processes by partitioning three model states (optimizer states, gradients, and parameters) across data-parallel processes instead of replicating them. This approach significantly boosts memory efficiency compared to classic data parallelism while maintaining computational granularity and communication efficiency.

ZeRO offers three stages of optimization:

- ZeRO Stage 1 – Partitions optimizer states across processes, with each process updating only its partition

- ZeRO Stage 2 – Additionally partitions gradients, with each process retaining only the gradients corresponding to its optimizer state portion

- ZeRO Stage 3 – Additionally partitions model parameters across processes, automatically collecting and partitioning them during forward and backward passes

Each stage offers progressively higher memory efficiency at the cost of increased communication overhead. These techniques enable training of extremely large models that would otherwise be impossible, which is particularly useful when GPU memory is limited.
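The memory effect of the three stages can be sketched with the per-GPU model-state formulas from the ZeRO paper. The sketch assumes mixed-precision Adam training, where each parameter needs 2 bytes for weights, 2 bytes for gradients, and 12 bytes for optimizer states. The function name is illustrative, and activations and communication buffers are ignored.

```python
# Per-GPU memory for model states under each ZeRO stage, following the
# formulas in the ZeRO paper. Assumption: mixed-precision Adam, i.e.
# 2 bytes (weights) + 2 bytes (gradients) + 12 bytes (optimizer states)
# per parameter. Activations and buffers are not included.

OPTIMIZER_BYTES = 12  # fp32 master weights, momentum, and variance


def zero_model_state_gb(num_params: float, num_gpus: int, stage: int) -> float:
    """Approximate model-state memory per GPU in GB for a given ZeRO stage."""
    if stage == 0:    # plain data parallelism: everything replicated
        total = (2 + 2 + OPTIMIZER_BYTES) * num_params
    elif stage == 1:  # optimizer states partitioned
        total = (2 + 2) * num_params + OPTIMIZER_BYTES * num_params / num_gpus
    elif stage == 2:  # optimizer states and gradients partitioned
        total = 2 * num_params + (2 + OPTIMIZER_BYTES) * num_params / num_gpus
    elif stage == 3:  # optimizer states, gradients, and parameters partitioned
        total = (2 + 2 + OPTIMIZER_BYTES) * num_params / num_gpus
    else:
        raise ValueError("stage must be 0, 1, 2, or 3")
    return total / 1e9


if __name__ == "__main__":
    # Example from the ZeRO paper: 7.5B parameters on 64 GPUs.
    for stage in range(4):
        print(f"stage {stage}: {zero_model_state_gb(7.5e9, 64, stage):.1f} GB per GPU")
```

For a 7.5-billion-parameter model on 64 GPUs, this gives roughly 120 GB per GPU without ZeRO and about 31.4 GB, 16.6 GB, and 1.9 GB for stages 1, 2, and 3, which matches the figures reported in the ZeRO paper.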

Accelerate

Accelerate is a library that enables running the same PyTorch code across any distributed configuration with minimal code changes. It handles the complexities of distributed setups, allowing developers to focus on their models rather than infrastructure. In short, Accelerate makes training and inference at scale simple, efficient, and adaptable.

Accelerate allows easy integration of DeepSpeed features through a configuration file. Users can supply a custom configuration file or use provided templates. The following is an example of how to use DeepSpeed with Accelerate.
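As a minimal sketch of what a custom DeepSpeed configuration might contain, the script below builds a ZeRO Stage 2 configuration and prints it as JSON. The keys shown are standard DeepSpeed configuration options, but the specific values, the function name, and the choice of Stage 2 with fp16 are assumptions for illustration, not settings from this walkthrough. With Accelerate, such a file is typically referenced from the Accelerate configuration (for example, through its DeepSpeed config file option); check the Accelerate documentation for the exact setup.

```python
import json

# Sketch of a DeepSpeed configuration for ZeRO Stage 2.
# Assumption: values are illustrative and should be tuned for the real workload.


def build_deepspeed_config(zero_stage=2, micro_batch_size=1, grad_accum_steps=1):
    """Return a DeepSpeed config dictionary for the requested ZeRO stage."""
    if zero_stage not in (0, 1, 2, 3):
        raise ValueError("zero_stage must be 0, 1, 2, or 3")
    return {
        "train_micro_batch_size_per_gpu": micro_batch_size,
        "gradient_accumulation_steps": grad_accum_steps,
        "gradient_clipping": 1.0,
        "fp16": {"enabled": True},
        "zero_optimization": {
            "stage": zero_stage,
            "overlap_comm": True,           # overlap gradient reduction with backward pass
            "contiguous_gradients": True,   # reduce memory fragmentation
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_deepspeed_config(), indent=2))
```

Stage 2 is often a reasonable starting point because it reduces optimizer and gradient memory without the extra parameter gathering that Stage 3 introduces; moving to Stage 3 is an option when model states still do not fit.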

Single node with multiple GPUs job

We have tested running a job on a single node with multiple GPUs using four-GPU instances (for example, g5.24xlarge). For these instances, adjust train_width: 768 and train_height: 768, and set use_8bit_adam: False in your configuration file. You will likely find that the model can handle much larger images for generation with these settings.

This Slurm job will:

- Allocate a single node

- Activate the training environment

- Run accelerate launch train_stage_1.py --config configs/train/stage1.yaml

Multi-node with multiple GPUs job

We have tested running a job across multiple nodes, each with multiple GPUs, using two ml.g5.24xlarge instances.

This Slurm job will:

- Allocate the specified number of nodes

- Activate the training environment on each node

- Run accelerate launch --multi_gpu --num_processes <num_processes> --num_machines <num_machines> train_stage_1.py --config configs/train/stage1.yaml

When running a multi-node job, make sure that the num_processes and num_machines arguments are set correctly based on your cluster configuration.
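The relationship between these arguments can be sketched as follows. In Accelerate, num_processes is the total number of processes across all machines, so it is usually the number of nodes multiplied by the GPUs per node. The helper below builds the launch command for one node; the function name, the IP address, and the port are hypothetical, and the extra rendezvous flags (machine rank, main process IP, and port) are included on the assumption that each node launches its own copy of the command.

```python
import shlex

# Sketch: build the accelerate launch command for one node of a multi-node job.
# Hypothetical: the helper name, IP address, and port used in the example.


def build_launch_command(num_machines, gpus_per_node, machine_rank,
                         main_process_ip, main_process_port=29500):
    """Return the command line for the node with the given rank."""
    # num_processes is the total across all machines, not per node.
    num_processes = num_machines * gpus_per_node
    args = [
        "accelerate", "launch", "--multi_gpu",
        "--num_processes", str(num_processes),
        "--num_machines", str(num_machines),
        "--machine_rank", str(machine_rank),
        "--main_process_ip", main_process_ip,
        "--main_process_port", str(main_process_port),
        "train_stage_1.py", "--config", "configs/train/stage1.yaml",
    ]
    return shlex.join(args)


if __name__ == "__main__":
    # Two ml.g5.24xlarge nodes with four GPUs each give eight processes in total.
    for rank in range(2):
        print(build_launch_command(2, 4, rank, main_process_ip="10.0.0.1"))
```

In a Slurm job, the machine rank and the main process address are typically derived from the allocation (for example, from the node list and the node ID that Slurm exposes to each task) rather than hard-coded.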

For optimal performance, adjust the batch size and learning rate according to the number of GPUs and nodes being used. Consider using a learning rate scheduler to adapt the learning rate during training.
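One commonly used heuristic for this adjustment is the linear scaling rule, which scales the learning rate in proportion to the global batch size. The sketch below shows that rule as a starting point only; it is not the method used in this walkthrough, the function name and numbers are illustrative, and the result should still be validated experimentally.

```python
# Sketch of the linear scaling rule: scale the learning rate in proportion to
# the global batch size. This is a common heuristic, not a guarantee; the
# values below are hypothetical.


def scaled_learning_rate(base_lr, base_global_batch, per_gpu_batch, num_gpus,
                         grad_accum_steps=1):
    """Return the learning rate scaled to the new global batch size."""
    global_batch = per_gpu_batch * num_gpus * grad_accum_steps
    return base_lr * global_batch / base_global_batch


if __name__ == "__main__":
    # Tuned on one GPU with batch size 4, then moved to 2 nodes x 4 GPUs.
    lr = scaled_learning_rate(base_lr=1e-5, base_global_batch=4,
                              per_gpu_batch=4, num_gpus=8)
    print(f"scaled learning rate: {lr:.2e}")
```

A warmup phase in the learning rate scheduler is often combined with this rule, because large learning rates at the very start of training can be unstable.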

Additionally, monitor the GPU memory usage and adjust the model’s architecture or batch size if necessary to prevent out-of-memory issues.

By following these steps and configurations, you can efficiently train your models on single-node and multi-node setups with multiple GPUs, taking advantage of the power of distributed training.

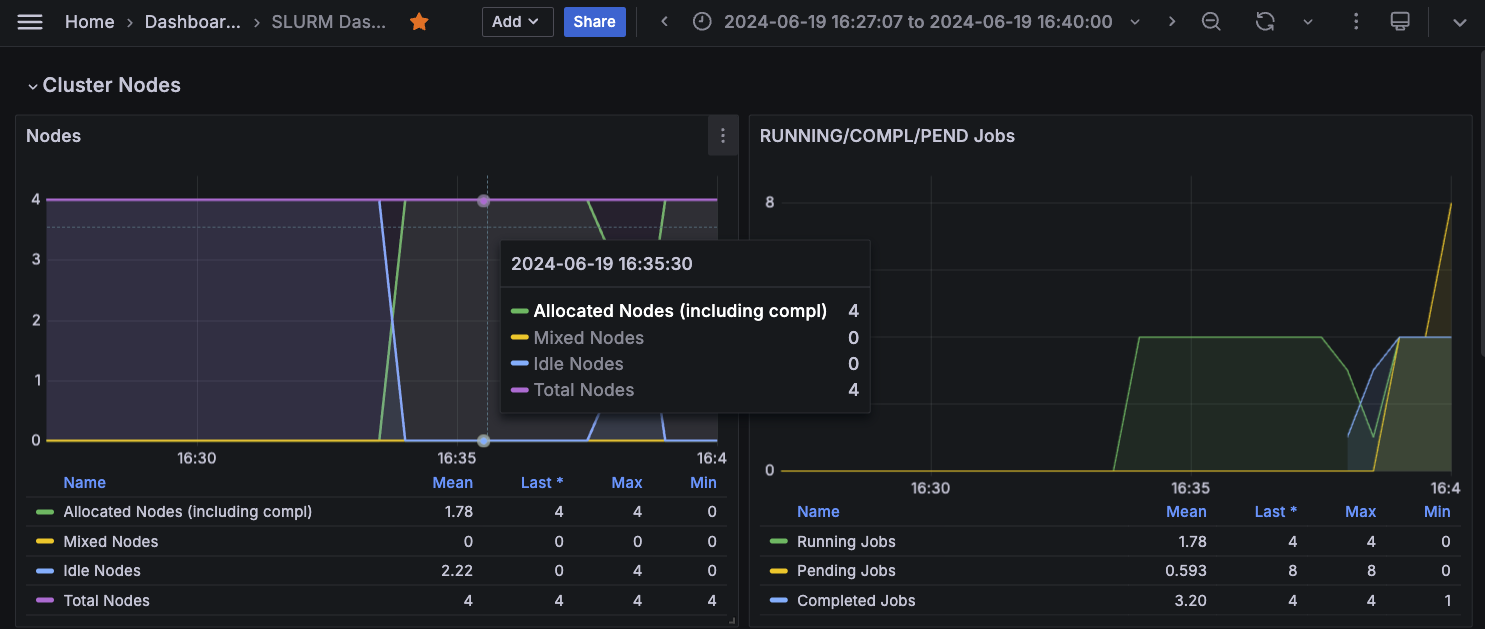

Monitor cluster utilization

To achieve comprehensive observability into your SageMaker HyperPod cluster resources and software components, integrate the cluster with Amazon Managed Service for Prometheus and Amazon Managed Grafana. The integration with Amazon Managed Service for Prometheus makes it possible to export metrics related to your HyperPod cluster resources, providing insights into their performance, utilization, and health. The integration with Amazon Managed Grafana makes it possible to visualize these metrics through various Grafana dashboards that offer intuitive interfaces for monitoring and analyzing the cluster’s behavior. You can follow the SageMaker documentation on Monitor SageMaker HyperPod cluster resources and the Workshop Studio Observability section to bootstrap your cluster monitoring with the metric exporter services. The following screenshot shows a Grafana dashboard.

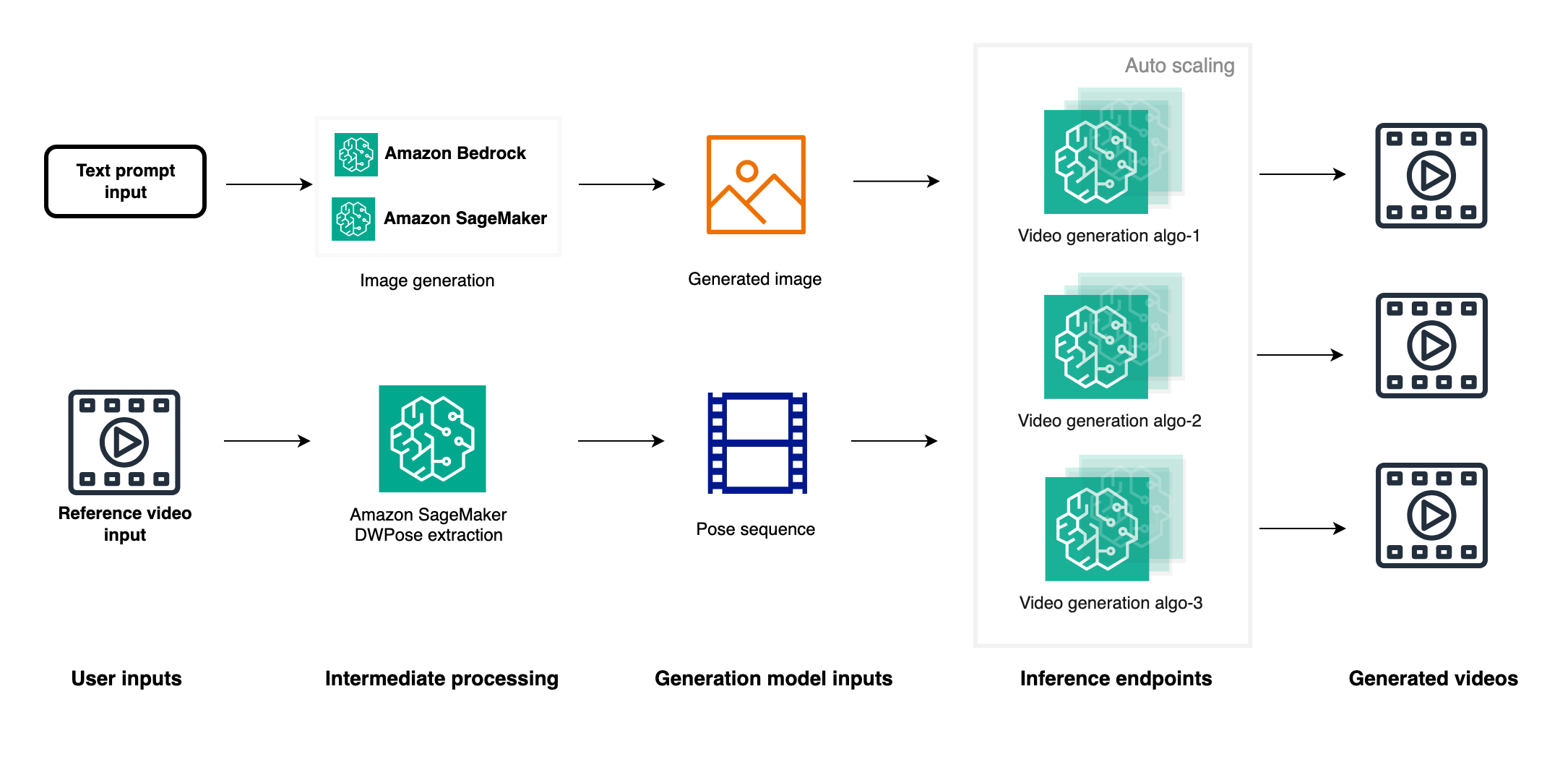

Inference and results discussion

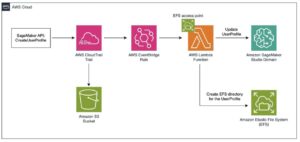

When the fine-tuned model is ready, you have two primary deployment options: using popular image and video generation GUIs like ComfyUI or deploying an inference endpoint with Amazon SageMaker. The SageMaker option offers several advantages, including easy integration of image generation APIs with video generation endpoints to create end-to-end pipelines. As a managed service with auto scaling, SageMaker makes parallel generation of multiple videos possible using either the same reference image with different reference videos or the reverse. Furthermore, you can deploy various video generation model endpoints such as MimicMotion and UniAnimate, allowing for quality comparisons by generating videos in parallel with the same reference image and video. This approach provides flexibility and scalability and accelerates production by making it possible to generate a large number of videos quickly, streamlining the process of obtaining content that meets business requirements. The following diagram shows a basic version of a video generation pipeline. You can modify it based on your own specific business requirements.

Recent advancements in video generation have rapidly overcome limitations of earlier models like AnimateAnyone. Two notable research papers showcase significant progress in this domain.

Champ: Controllable and Consistent Human Image Animation with 3D Parametric Guidance enhances shape alignment and motion guidance. It demonstrates superior ability in generating high-quality human animations that accurately capture both pose and shape variations, with improved generalization on in-the-wild datasets.

UniAnimate: Taming Unified Video Diffusion Models for Consistent Human Image Animation makes it possible to generate longer videos, up to one minute, compared to earlier models’ limited frame outputs. It introduces a unified noise input supporting both random noise input and first-frame-conditioned input, enhancing long-term video generation capabilities.

Cleanup

To avoid incurring future charges, delete the resources created as part of this post:

- Delete the SageMaker HyperPod cluster using either the CLI or the console.

- Once the SageMaker HyperPod cluster deletion is complete, delete the CloudFormation stack. For more details on cleanup, refer to the cleanup section in the Amazon SageMaker HyperPod workshop.

- To delete the endpoints created during deployment, refer to the endpoint deletion section we provided in the Jupyter notebook. Then manually delete the SageMaker notebook.

Conclusion

In this post, we explored the exciting field of video generation and showcased how SageMaker HyperPod can be used to efficiently train video generation algorithms at scale. Using the AnimateAnyone algorithm as an example, we demonstrated the step-by-step process of setting up a SageMaker HyperPod cluster, running the algorithm, scaling it to multiple GPU nodes, and monitoring GPU usage during the training process.

SageMaker HyperPod offers several key advantages that make it an ideal platform for training large-scale ML models, particularly in the domain of video generation. Its purpose-built infrastructure allows for distributed training at scale, so you can manage clusters with the desired instance types and counts. The ability to attach a shared file system such as Amazon FSx for Lustre provides efficient data storage and retrieval, with full bidirectional synchronization with Amazon S3. Moreover, the SageMaker HyperPod customizable environment, integration with Slurm, and seamless connectivity with Visual Studio Code enhance productivity and simplify the management of distributed training jobs.

We encourage you to use SageMaker HyperPod for your ML training workloads, especially those involving video generation or other computationally intensive tasks. By harnessing the power of SageMaker HyperPod, you can accelerate your research and development efforts, iterate faster, and build state-of-the-art models more efficiently. Start your journey today and experience the benefits of distributed training at scale.

About the authors

Yanwei Cui, PhD, is a Senior Machine Learning Specialist Solutions Architect at AWS. He started machine learning research at IRISA (Research Institute of Computer Science and Random Systems), and has several years of experience building AI-powered industrial applications in computer vision, natural language processing, and online user behavior prediction. At AWS, he shares his domain expertise and helps customers unlock business potential and drive actionable outcomes with machine learning at scale. Outside of work, he enjoys reading and traveling.


Gordon Wang is a Senior Data Scientist at AWS. He helps customers imagine and scope the use cases that will create the greatest value for their businesses, and define paths to navigate technical or business challenges. He is passionate about computer vision, NLP, generative AI, and MLOps. In his spare time, he loves running and hiking.


Gary LO is a Solutions Architect at AWS based in Hong Kong. He is a highly passionate IT professional with over 10 years of experience in designing and implementing critical and complex solutions for distributed systems, web applications, and mobile platforms for startups and enterprise companies. Outside of the office, he enjoys cooking and sharing the latest technology trends and insights on his social media platforms with thousands of followers.
