How to Interpret GPT2-Small. Mechanistic Interpretability on… | by Shuyang Xiang | Mar, 2024

The development of large-scale language models, especially ChatGPT, has left those who have experimented with it, myself included, astonished by its remarkable linguistic prowess and its ability to perform a wide variety of tasks. However, many researchers, including myself, while marveling at its capabilities, also find themselves perplexed. Despite knowing the model’s architecture and the specific values of its weights, we still struggle to understand why a particular sequence of inputs leads to a specific sequence of outputs.

In this blog post, I will attempt to demystify GPT2-small using mechanistic interpretability on a simple case: the prediction of repeated tokens.

Traditional mathematical tools for explaining machine learning models aren’t entirely suitable for language models.

Consider SHAP, a helpful tool for explaining machine learning models. It is proficient at determining which feature significantly influenced a prediction, for example the predicted quality of a wine. However, it’s important to remember that language models make predictions at the token level, while SHAP values are mostly computed at the feature level, making them potentially unfit for tokens.

Moreover, large language models (LLMs) have numerous parameters and inputs, creating a high-dimensional space. Computing SHAP values is costly even in low-dimensional spaces, and even more so in the high-dimensional space of LLMs.

Even if we tolerate the high computational cost, the explanations provided by SHAP can be superficial. For instance, knowing that the term “potter” most affected the output prediction because of the earlier mention of “Harry” doesn’t provide much insight. It leaves us uncertain about which part of the model, or which specific mechanism, is responsible for such a prediction.
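The cost argument above can be made concrete with a minimal sketch of exact Shapley values, the quantity SHAP approximates. The `exact_shapley` helper and the toy value function are illustrative assumptions, not part of the SHAP library; the point is only that an exact computation touches every one of the 2^n feature coalitions.

```python
from itertools import combinations
from math import factorial

def exact_shapley(value_fn, n_features):
    """Exact Shapley values for a set function over n_features players.

    value_fn takes a frozenset of feature indices and returns a number.
    Returns (shapley_values, number_of_distinct_coalitions_evaluated).
    """
    cache = {}

    def v(coalition):
        # Memoise so each distinct coalition is evaluated exactly once.
        if coalition not in cache:
            cache[coalition] = value_fn(coalition)
        return cache[coalition]

    players = range(n_features)
    values = []
    for i in players:
        others = [p for p in players if p != i]
        phi = 0.0
        for size in range(len(others) + 1):
            weight = factorial(size) * factorial(n_features - size - 1) / factorial(n_features)
            for subset in combinations(others, size):
                s = frozenset(subset)
                phi += weight * (v(s | {i}) - v(s))
        values.append(phi)
    return values, len(cache)

# Hypothetical toy "model": an additive score plus one interaction term.
def toy_value(coalition):
    base = sum({0: 1.0, 1: 2.0, 2: 0.5}.get(j, 0.0) for j in coalition)
    bonus = 3.0 if {0, 1} <= coalition else 0.0
    return base + bonus

shapley_values, n_evaluated = exact_shapley(toy_value, 3)
```

With three features the function is evaluated on 2^3 coalitions; the count doubles with every extra feature, which is why practical SHAP implementations rely on sampling or model-specific shortcuts.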

Mechanistic Interpretability offers a different approach. It doesn’t just identify important features or inputs for a model’s predictions. Instead, it sheds light on the underlying mechanisms or reasoning processes, helping us understand how a model makes its predictions or decisions.

We will be using GPT2-small for a simple task: predicting a sequence of repeated tokens. The library we will use is TransformerLens, which is designed for mechanistic interpretability of GPT-2 style language models.

    from transformer_lens import HookedTransformer

    gpt2_small: HookedTransformer = HookedTransformer.from_pretrained("gpt2-small")

We use the code above to load the GPT2-Small model and predict tokens on a sequence generated by a dedicated function. This sequence consists of the bos_token followed by two identical token sequences. An example would be bos_token + “ABCABC” when seq_len is 3. For clarity, we refer to the first seq_len tokens after the bos_token as the first half, and to the remaining tokens as the second half.

    import torch as t
    from torch import Tensor
    from jaxtyping import Int

    def generate_repeated_tokens(
        model: HookedTransformer, seq_len: int, batch: int = 1
    ) -> Int[Tensor, "batch full_seq_len"]:
        '''
        Generates a sequence of repeated random tokens.

        Outputs are:
            rep_tokens: [batch, 1+2*seq_len]
        '''
        # One bos token per batch row
        bos_token = (t.ones(batch, 1) * model.tokenizer.bos_token_id).long()
        # Random first half, repeated once to form the second half
        rep_tokens_half = t.randint(0, model.cfg.d_vocab, (batch, seq_len), dtype=t.int64)
        # `device` is defined elsewhere in the notebook
        rep_tokens = t.cat([bos_token, rep_tokens_half, rep_tokens_half], dim=-1).to(device)
        return rep_tokens
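To make the layout of the sequence explicit, here is a minimal plain-Python analogue of the function above. The helper names `make_repeated_sequence` and `split_halves` are hypothetical and exist only for this illustration; the vocabulary size 50257 and bos id 50256 are the GPT-2 tokenizer values.

```python
import random

def make_repeated_sequence(seq_len, vocab_size, bos_id, seed=0):
    """Plain-Python analogue of the layout: [bos] + half + half."""
    rng = random.Random(seed)
    half = [rng.randrange(vocab_size) for _ in range(seq_len)]
    return [bos_id] + half + half

def split_halves(tokens, seq_len):
    """Return (first_half, second_half), both excluding the leading bos token."""
    return tokens[1:1 + seq_len], tokens[1 + seq_len:1 + 2 * seq_len]

tokens = make_repeated_sequence(seq_len=3, vocab_size=50257, bos_id=50256)
first_half, second_half = split_halves(tokens, seq_len=3)
```

The total length is 1 + 2 * seq_len, matching the shape documented in the docstring of `generate_repeated_tokens`.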

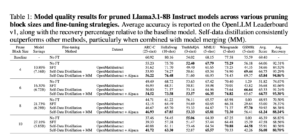

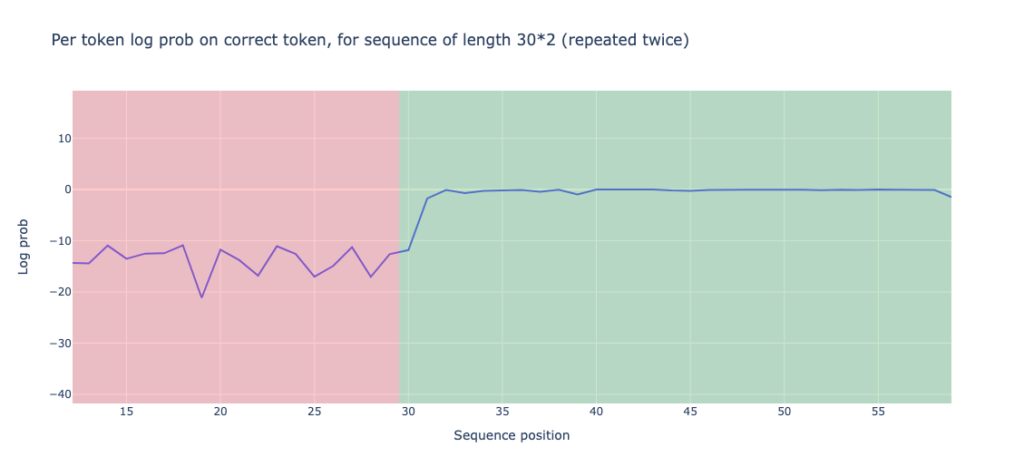

When we let the model run on the generated tokens, we notice something interesting: the model performs significantly better on the second half of the sequence than on the first half. This is measured by the log probabilities assigned to the correct tokens. To be precise, the performance on the first half is -13.898, while the performance on the second half is -0.644.

We can also calculate prediction accuracy, defined as the ratio of correctly predicted tokens (those identical to the generated tokens) to the total number of tokens. The accuracy for the first half of the sequence is 0.0, which is unsurprising since we are working with random tokens that carry no actual meaning. Meanwhile, the accuracy for the second half is 0.93, significantly outperforming the first half.
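The two metrics above can be sketched in plain Python. This is an illustration under stated assumptions rather than the notebook’s code: `per_half_metrics` is a hypothetical helper, the predictions are hand-written toy distributions, and the log probability is averaged over each half.

```python
import math

def per_half_metrics(tokens, next_token_probs, seq_len):
    """tokens: [bos] + half + half.
    next_token_probs[i]: dict token -> probability, the prediction made at
    position i for tokens[i + 1].
    Returns {"first": (mean_log_prob, accuracy), "second": (...)}.
    """
    def summarise(positions):
        log_probs, hits = [], 0
        for i in positions:
            target = tokens[i + 1]
            probs = next_token_probs[i]
            # Tiny floor avoids log(0) when the target got no probability mass.
            log_probs.append(math.log(probs.get(target, 1e-12)))
            hits += max(probs, key=probs.get) == target
        return sum(log_probs) / len(log_probs), hits / len(log_probs)

    return {
        "first": summarise(range(0, seq_len)),             # targets: first half
        "second": summarise(range(seq_len, 2 * seq_len)),  # targets: second half
    }

# Toy data: bos = 0, half = [1, 2, 3]; token 9 is a distractor.
toy_tokens = [0, 1, 2, 3, 1, 2, 3]
unsure = {9: 0.4, 1: 0.2, 2: 0.2, 3: 0.2}
toy_probs = [unsure, unsure, unsure,
             {1: 0.9, 9: 0.1}, {2: 0.9, 9: 0.1}, {3: 0.9, 9: 0.1}]
metrics = per_half_metrics(toy_tokens, toy_probs, seq_len=3)
```

Note the one-position shift: the prediction made at position i is scored against the token at position i + 1, so the last token of the first half is where the first second-half prediction is made.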

Finding the induction head

The observation above might be explained by the existence of an induction circuit. This is a circuit that scans the sequence for prior instances of the current token, identifies the token that followed it previously, and predicts that the same sequence will repeat. For instance, if it encounters an ‘A’, it scans for the previous ‘A’ or a token very similar to ‘A’ in the embedding space, identifies the subsequent token ‘B’, and then predicts the next token after ‘A’ to be ‘B’ or a token very similar to ‘B’ in the embedding space.

This prediction process can be broken down into two steps:

- Identify the previous same (or similar) token. Every token in the second half of the sequence should locate its earlier copy, ‘seq_len’ positions before it. For instance, the ‘A’ at position 4 should match the ‘A’ at position 1 if ‘seq_len’ is 3. In practice the attention lands on the token right after that copy (the ‘B’ at position 2), because that is where the information about ‘A’ has been written. We can call the attention head performing this task the “induction head.”

- Identify the following token ‘B’. This is the process of copying information from the previous token (e.g., ‘A’) into the next token (e.g., ‘B’). This information will be used to “reproduce” ‘B’ when ‘A’ appears again. We can call the attention head performing this task the “previous token head.”

These two heads constitute a complete induction circuit. Note that the term “induction head” is sometimes also used to describe the entire “induction circuit.” For a more thorough introduction to induction circuits, I highly recommend the article In-context Learning and Induction Heads, which is a masterpiece!
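The behaviour the circuit is believed to implement can be written as a simple rule. The sketch below is an idealised, exact-match version: `induction_predict` is a hypothetical helper, and a real attention head matches tokens softly through learned weights rather than by equality.

```python
def induction_predict(tokens):
    """For each position, predict the next token by copying what followed the
    most recent earlier occurrence of the current token (None if there is none)."""
    predictions = []
    for i, current in enumerate(tokens):
        guess = None
        for j in range(i - 1, -1, -1):  # scan backwards for an earlier match
            if tokens[j] == current:
                guess = tokens[j + 1]   # the token that followed last time
                break
        predictions.append(guess)
    return predictions

tokens = ["<bos>", "A", "B", "C", "A", "B", "C"]
preds = induction_predict(tokens)
```

On this toy sequence the rule has nothing to copy during the first half and predicts ‘B’ after the second ‘A’ and ‘C’ after the second ‘B’, which mirrors the gap in accuracy between the two halves.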

Now, let’s identify the induction head and the previous token head in GPT2-small.

The following code is used to find the induction head. First, we run the model on a batch of 30 sequences. Then, we calculate the mean value of the diagonal at an offset of 1 - seq_len in the attention pattern matrix. This lets us measure how much attention each token pays to the token seq_len - 1 positions before it, that is, the token that followed the previous occurrence of the current token.

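    import einops
    from jaxtyping import Float
    from transformer_lens.hook_points import HookPoint

    def induction_score_hook(
        pattern: Float[Tensor, "batch head_index dest_pos source_pos"],
        hook: HookPoint,
    ):
        '''
        Calculates the induction score, and stores it in the [layer, head] position of the `induction_score_store` tensor.
        '''
        # Stripe of (dest_pos, source_pos) pairs with source_pos = dest_pos - (seq_len - 1),
        # i.e. one position to the right of the previous occurrence
        induction_stripe = pattern.diagonal(dim1=-2, dim2=-1, offset=1-seq_len)
        induction_score = einops.reduce(induction_stripe, "batch head_index position -> head_index", "mean")
        induction_score_store[hook.layer(), :] = induction_score

    seq_len = 50
    batch = 30
    rep_tokens_30 = generate_repeated_tokens(gpt2_small, seq_len, batch)
    induction_score_store = t.zeros((gpt2_small.cfg.n_layers, gpt2_small.cfg.n_heads), device=gpt2_small.cfg.device)

    # Assumed definition (not shown in the post): select every attention-pattern hook point
    pattern_hook_names_filter = lambda name: name.endswith("pattern")

    gpt2_small.run_with_hooks(
        rep_tokens_30,
        return_type=None,  # the logits are not needed here
        fwd_hooks=[(
            pattern_hook_names_filter,
            induction_score_hook
        )]
    )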
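To see what the diagonal stripe measures, here is a minimal plain-Python sketch on a hand-built attention matrix. Both helpers are hypothetical, and the “ideal” pattern is an assumption made for illustration: second-half tokens put all their attention on the token after their earlier copy, and every other token attends to itself.

```python
def stripe_mean(pattern, offset):
    """Mean of pattern[dest][dest + offset] over all valid dest (offset <= 0)."""
    n = len(pattern)
    entries = [pattern[dest][dest + offset] for dest in range(-offset, n)]
    return sum(entries) / len(entries)

def ideal_induction_pattern(seq_len):
    """Attention matrix for [bos] + half + half with idealised induction behaviour."""
    n = 1 + 2 * seq_len
    pattern = [[0.0] * n for _ in range(n)]
    for dest in range(n):
        # Second-half positions look seq_len - 1 steps back; the rest self-attend.
        src = dest - (seq_len - 1) if dest > seq_len else dest
        pattern[dest][src] = 1.0
    return pattern

seq_len = 3
pattern = ideal_induction_pattern(seq_len)
induction_score = stripe_mean(pattern, offset=1 - seq_len)
```

The stripe at offset 1 - seq_len has seq_len + 2 entries, and two of them belong to positions with no earlier copy, so under this assumption even an idealised head scores seq_len / (seq_len + 2) rather than 1.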

Now, let’s examine the induction scores. We find that some heads, such as head 5 in layer 5, have a high induction score of 0.91.

We can also display the attention pattern of this head. You will notice a clear diagonal stripe at an offset of seq_len - 1 from the main diagonal.

Similarly, we can identify the previous token head. For instance, layer 4, head 11 shows a strong pattern of attending to the previous token.

How much do the MLP layers contribute?

Let’s consider this question: do the MLP layers matter? We know that GPT2-Small contains both attention and MLP layers. To investigate this, I propose using an ablation technique.

Ablation, as the name implies, systematically removes certain model components and observes how performance changes as a result.

We will replace the output of the MLP layers in the second half of the sequence with those from the first half, and observe how this affects the final loss. We will compute the difference between the loss after replacing the MLP layer outputs and the original loss on the second half of the sequence using the following code.

    import functools
    from tqdm import tqdm
    from transformer_lens import utils

    # `rep_tokens`, `rep_cache`, `max_len` and `cross_entropy_loss` are defined in the notebook

    def patch_residual_component(
        residual_component,
        hook,
        pos,
        cache,
    ):
        # Overwrite the activation at `pos` with the cached one from seq_len positions earlier
        residual_component[0, pos, :] = cache[hook.name][pos-seq_len, :]
        return residual_component

    ablation_scores = t.zeros((gpt2_small.cfg.n_layers, seq_len), device=gpt2_small.cfg.device)

    # Baseline loss on the second half, without any patching
    gpt2_small.reset_hooks()
    logits = gpt2_small(rep_tokens, return_type="logits")
    loss_no_ablation = cross_entropy_loss(logits[:, seq_len: max_len], rep_tokens[:, seq_len: max_len])

    # Patch one (layer, position) pair at a time and record the change in loss
    for layer in tqdm(range(gpt2_small.cfg.n_layers)):
        for position in range(seq_len, max_len):
            hook_fn = functools.partial(patch_residual_component, pos=position, cache=rep_cache)
            ablated_logits = gpt2_small.run_with_hooks(rep_tokens, fwd_hooks=[
                (utils.get_act_name("mlp_out", layer), hook_fn)
            ])
            loss = cross_entropy_loss(ablated_logits[:, seq_len: max_len], rep_tokens[:, seq_len: max_len])
            ablation_scores[layer, position-seq_len] = loss - loss_no_ablation
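The patching logic can be illustrated on a deliberately tiny stand-in model. Everything in this sketch is an assumption made for illustration: the “model” output is just the sum of an attention term and an MLP term per position, the loss is a squared error instead of cross-entropy, and the numbers are invented.

```python
def second_half_loss(attn_out, mlp_out, targets, seq_len):
    """Mean squared error of (attn_out + mlp_out) against targets on the second half."""
    positions = range(seq_len, 2 * seq_len)
    return sum((attn_out[p] + mlp_out[p] - targets[p]) ** 2 for p in positions) / seq_len

def ablation_scores(attn_out, mlp_out, targets, seq_len):
    """Loss increase when mlp_out at each second-half position is replaced by
    the value from seq_len positions earlier."""
    clean = second_half_loss(attn_out, mlp_out, targets, seq_len)
    scores = []
    for pos in range(seq_len, 2 * seq_len):
        patched = list(mlp_out)  # copy so the clean run is untouched
        patched[pos] = mlp_out[pos - seq_len]
        scores.append(second_half_loss(attn_out, patched, targets, seq_len) - clean)
    return scores

# Invented numbers: the MLP term differs between halves only at the first
# second-half position.
attn_out = [0.0, 0.0, 0.0, 1.0, 2.0, 3.0]
mlp_out = [0.1, 0.1, 0.1, 0.5, 0.1, 0.1]
targets = [0.0, 0.0, 0.0, 1.5, 2.1, 3.1]
scores = ablation_scores(attn_out, mlp_out, targets, seq_len=3)
```

A score near zero means the patched component carried no information specific to the second half at that position; a large score means the prediction depended on it.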

We arrive at a surprising result: apart from the first token, the ablation does not produce a significant difference in loss. This suggests that the MLP layers may not contribute significantly in the case of repeated tokens.

Given that the MLP layers don’t contribute significantly to the final prediction, we can manually construct an induction circuit from layer 5, head 5 and layer 4, head 11. Recall that these are the induction head and the previous token head. We do so with the following code:

    from transformer_lens import FactoredMatrix

    def K_comp_full_circuit(
        model: HookedTransformer,
        prev_token_layer_index: int,
        ind_layer_index: int,
        prev_token_head_index: int,
        ind_head_index: int
    ) -> FactoredMatrix:
        '''
        Returns a (vocab, vocab)-size FactoredMatrix,
        with the first dimension being the query side
        and the second dimension being the key side (going via the previous token head)
        '''
        W_E = model.W_E
        # Query and key weights of the induction head
        W_Q = model.W_Q[ind_layer_index, ind_head_index]
        W_K = model.W_K[ind_layer_index, ind_head_index]
        # Output and value weights of the previous token head
        W_O = model.W_O[prev_token_layer_index, prev_token_head_index]
        W_V = model.W_V[prev_token_layer_index, prev_token_head_index]

        Q = W_E @ W_Q
        K = W_E @ W_V @ W_O @ W_K
        return FactoredMatrix(Q, K.T)

Computing the top-1 accuracy of this circuit yields a value of 0.2283. This is quite good for a circuit built from only two heads!
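As a rough illustration of this metric, the sketch below assumes that top-1 accuracy means the fraction of query tokens whose highest-scoring key token is the same token, i.e. the fraction of rows of the (vocab, vocab) score matrix whose maximum lies on the diagonal. The helpers and the tiny matrices are hypothetical stand-ins for the Q and K factors built above.

```python
def matmul(a, b):
    """Product of two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def top1_accuracy(scores):
    """Fraction of rows whose largest entry lies on the diagonal."""
    hits = sum(
        max(range(len(row)), key=row.__getitem__) == i
        for i, row in enumerate(scores)
    )
    return hits / len(scores)

# Toy factors with a vocabulary of 3 tokens and a hidden size of 2.
Q = [[1, 0], [0, 1], [1, 1]]
K = [[1, 0], [0, 1], [-1, -1]]
scores = matmul(Q, transpose(K))  # shape (vocab, vocab), like Q @ K.T
accuracy = top1_accuracy(scores)
```

For the real model the full score matrix has 50257 × 50257 entries, so it is usually evaluated in row batches rather than materialised at once; keeping the product in factored form is what makes that practical.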

For the detailed implementation, please check my notebook.