Learning the importance of training data under concept drift – Google Research Blog

The constantly changing nature of the world around us poses a significant challenge for the development of AI models. Often, models are trained on longitudinal data with the hope that the training data will accurately represent the inputs the model may receive in the future. More generally, the default assumption that all training data are equally relevant often breaks in practice. For example, the figure below shows images from the CLEAR nonstationary learning benchmark, and it illustrates how visual features of objects evolve significantly over a 10-year span (a phenomenon we refer to as gradual concept drift), posing a challenge for object categorization models.

| Sample images from the CLEAR benchmark. (Adapted from Lin et al.) |

Alternative approaches, such as online and continual learning, repeatedly update a model with small amounts of recent data in order to keep it current. This implicitly prioritizes recent data, as the learnings from past data are gradually erased by subsequent updates. However, in the real world, different kinds of information lose relevance at different rates, so these approaches have two key issues: 1) by design they focus exclusively on the most recent data and lose any signal from older data that has been erased; 2) contributions from data instances decay uniformly over time, irrespective of the contents of the data.
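The uniform decay described above can be illustrated with a deliberately simplified stand-in. The sketch below assumes that a continual learner behaves like an exponential moving average (an analogy chosen for illustration, not the training procedure used in the work); the function names and the update rate are hypothetical. It shows that the effective weight of each past batch depends only on how many updates ago it arrived, never on what it contained.

```python
def run_ema(values, rate):
    """Sequentially update a scalar estimate, one value (batch) at a time."""
    estimate = 0.0
    for v in values:
        # Each update shrinks everything learned so far by (1 - rate).
        estimate = (1.0 - rate) * estimate + rate * v
    return estimate


def ema_effective_weights(num_updates, rate):
    """Weight that each past value carries in the final estimate.

    The value seen k updates ago has weight rate * (1 - rate) ** k,
    regardless of its contents.
    """
    return [rate * (1.0 - rate) ** (num_updates - 1 - i)
            for i in range(num_updates)]


values = [3.0, -1.0, 4.0, 1.0, 5.0]   # hypothetical per-batch signals, oldest first
weights = ema_effective_weights(len(values), rate=0.3)
reconstructed = sum(w * v for w, v in zip(weights, values))
```

Here `reconstructed` matches `run_ema(values, 0.3)`, and every batch is discounted by the same factor per update, which is the content-agnostic decay that the second issue refers to.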

In our recent work, “Instance-Conditional Timescales of Decay for Non-Stationary Learning”, we propose to assign each instance an importance score during training in order to maximize model performance on future data. To accomplish this, we employ an auxiliary model that produces these scores using the training instance as well as its age. This model is learned jointly with the primary model. We address both of the above challenges and achieve significant gains over other robust learning methods on a range of benchmark datasets for nonstationary learning. For instance, on a recent large-scale benchmark for nonstationary learning (~39M photos over a 10-year period), we show up to 15% relative accuracy gains through learned reweighting of training data.

The challenge of concept drift for supervised learning

To gain quantitative insight into gradual concept drift, we built classifiers on a recent photo categorization task, comprising roughly 39M photographs sourced from social media websites over a 10-year period. We compared offline training, which iterated over all the training data multiple times in random order, and continual training, which iterated multiple times over each month of data in sequential (temporal) order. We measured model accuracy both during the training period and during a subsequent period where both models were frozen, i.e., not updated further on new data (shown below). At the end of the training period (left panel, x-axis = 0), both approaches have seen the same amount of data, but show a large performance gap. This is due to catastrophic forgetting, a problem in continual learning where a model’s knowledge of data from early in the training sequence is diminished in an uncontrolled manner. On the other hand, forgetting has its advantages: over the test period (shown on the right), the continually trained model degrades much less rapidly than the offline model because it is less dependent on older data. The decay of both models’ accuracy in the test period confirms that the data is indeed evolving over time, and that both models become increasingly less relevant.

| Comparing offline and continually trained models on the photo classification task. |
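The difference between the two training regimes compared above comes down to the order in which examples are visited. The following is a minimal sketch of that ordering only, assuming the data is already grouped into monthly buckets; the function names, the `(month, id)` example tuples, and the seed handling are hypothetical and not taken from the work.

```python
import random


def offline_schedule(months, epochs, seed=0):
    """Visit all examples several times, in random order across all months."""
    rng = random.Random(seed)
    pool = [example for month in months for example in month]
    order = []
    for _ in range(epochs):
        rng.shuffle(pool)          # a fresh random order for every pass
        order.extend(pool)
    return order


def continual_schedule(months, epochs, seed=0):
    """Visit each month several times, then move on and never return to it."""
    rng = random.Random(seed)
    order = []
    for month in months:           # months are assumed to be in temporal order
        bucket = list(month)
        for _ in range(epochs):
            rng.shuffle(bucket)    # shuffle only within the current month
            order.extend(bucket)
    return order


# Four hypothetical months with three examples each, tagged as (month, id).
months = [[(m, i) for i in range(3)] for m in range(4)]
offline = offline_schedule(months, epochs=2)
continual = continual_schedule(months, epochs=2)
```

Both schedules visit exactly the same examples the same number of times; only the continual schedule guarantees that the most recent month is what the model saw last, which is the source of both its forgetting and its slower decay.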

Time-sensitive reweighting of training data

We design a method combining the benefits of offline learning (the flexibility of effectively reusing all available data) and continual learning (the ability to downplay older data) to address gradual concept drift. We build upon offline learning, then add careful control over the influence of past data and an optimization objective, both designed to reduce model decay in the future.

Suppose we wish to train a model, M, given some training data collected over time. We propose to also train a helper model that assigns a weight to each point based on its contents and age. This weight scales the contribution of that data point to the training objective for M. The objective of the weights is to improve the performance of M on future data.
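One way to write down the weighted objective described above is sketched here. The notation is an assumption made for illustration and does not come from the paper: θ denotes the parameters of M, φ the parameters of the helper model, (x_i, y_i) a training instance with age a_i, and ℓ a per-instance loss.

```latex
\mathcal{L}(\theta; \phi) \;=\; \sum_{i=1}^{N} w_{\phi}(x_i, a_i)\,\ell\big(M_{\theta}(x_i),\, y_i\big)
```

Setting every weight w_φ(x_i, a_i) to 1 recovers ordinary offline training, in which all instances count equally.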

In our work, we describe how the helper model can be meta-learned, i.e., learned alongside M in a manner that helps the learning of the model M itself. A key design choice of the helper model is that we separate instance- and age-related contributions in a factored manner. Specifically, we set the weight by combining contributions from multiple different fixed timescales of decay, and learn an approximate “assignment” of a given instance to its most suited timescales. We find in our experiments that this form of the helper model outperforms many other alternatives we considered, ranging from unconstrained joint functions to a single timescale of decay (exponential or linear), due to its combination of simplicity and expressivity. Full details may be found in the paper.
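The factored form described above can be sketched as follows. This is a minimal illustration under stated assumptions rather than the paper's implementation: the exponential shape of each decay, the softmax used for the soft assignment, the three timescale values, and all names are hypothetical. In the actual method the assignment would be produced by a learned helper model from the instance contents; here it is passed in directly as logits.

```python
import math

# Hypothetical fixed timescales of decay, expressed in months.
TIMESCALES = (1.0, 12.0, 120.0)


def softmax(logits):
    """Turn arbitrary scores into a soft assignment that sums to 1."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def instance_weight(assignment_logits, age, timescales=TIMESCALES):
    """Weight of one instance: a mixture of fixed-rate decays in its age.

    assignment_logits depends only on the instance contents, and the decay
    terms depend only on age, which keeps the two contributions factored.
    """
    assignment = softmax(assignment_logits)
    return sum(p * math.exp(-age / tau)
               for p, tau in zip(assignment, timescales))


# Two hypothetical two-year-old instances with different assignments.
fast_fading = instance_weight([5.0, 0.0, -5.0], age=24.0)   # mostly short timescale
long_lived = instance_weight([-5.0, 0.0, 5.0], age=24.0)    # mostly long timescale
```

With these assumed values, `long_lived` is much larger than `fast_fading` even though both instances have the same age, which is the behavior a single shared timescale cannot express.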

Instance weight scoring

The top figure below shows that our learned helper model indeed up-weights more modern-looking objects in the CLEAR object recognition challenge; older-looking objects are correspondingly down-weighted. On closer examination (bottom figure below, gradient-based feature importance assessment), we see that the helper model focuses on the primary object within the image, as opposed to, e.g., background features that may be spuriously correlated with instance age.

| Sample images from the CLEAR benchmark (camera & computer categories) assigned the highest and lowest weights respectively by our helper model. |

| Feature importance analysis of our helper model on sample images from the CLEAR benchmark. |
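The idea behind a gradient-based importance assessment can be illustrated on a toy example. The sketch below is an assumption-laden stand-in, not the analysis used for the figure: it estimates gradients with finite differences instead of automatic differentiation, it works on a four-element feature vector instead of image pixels, and `toy_helper_score` is a hypothetical scoring function that depends only on the first two ("object") features.

```python
def toy_helper_score(features):
    """Hypothetical helper score that ignores the last two ('background') features."""
    object_a, object_b = features[0], features[1]
    return object_a * object_a + 2.0 * object_b


def gradient_importance(score_fn, features, eps=1e-5):
    """Absolute partial derivative of the score with respect to each feature.

    Uses central finite differences; a real analysis on images would
    normally obtain the same quantity through automatic differentiation.
    """
    importance = []
    for i in range(len(features)):
        up = list(features)
        down = list(features)
        up[i] += eps
        down[i] -= eps
        grad = (score_fn(up) - score_fn(down)) / (2.0 * eps)
        importance.append(abs(grad))
    return importance


importance = gradient_importance(toy_helper_score, [1.5, 0.2, 0.9, -0.4])
```

Features with near-zero importance do not influence the score locally; in the image setting, this is how one would check that background pixels are not driving the assigned weight.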

Results

Gains on large-scale data

We first study the large-scale photo categorization task (PCAT) on the YFCC100M dataset discussed earlier, using the first five years of data for training and the next five years as test data. Our method (shown in purple below) improves substantially over the no-reweighting baseline (black) as well as many other robust learning techniques. Interestingly, our method deliberately trades off accuracy on the distant past (training data unlikely to reoccur in the future) in exchange for marked improvements in the test period. Also, as desired, our method degrades less than other baselines in the test period.

| Comparison of our method and relevant baselines on the PCAT dataset. |

Broad applicability

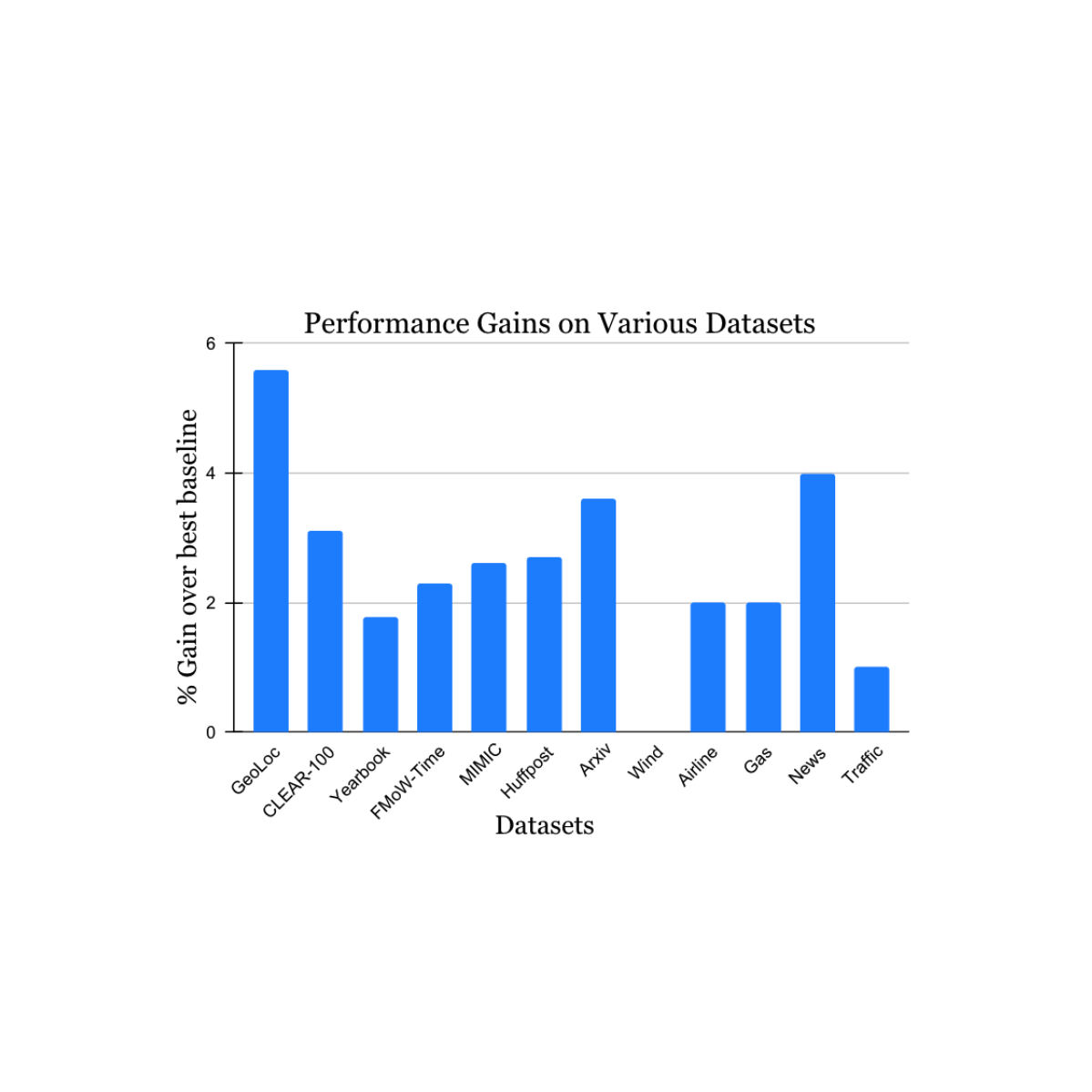

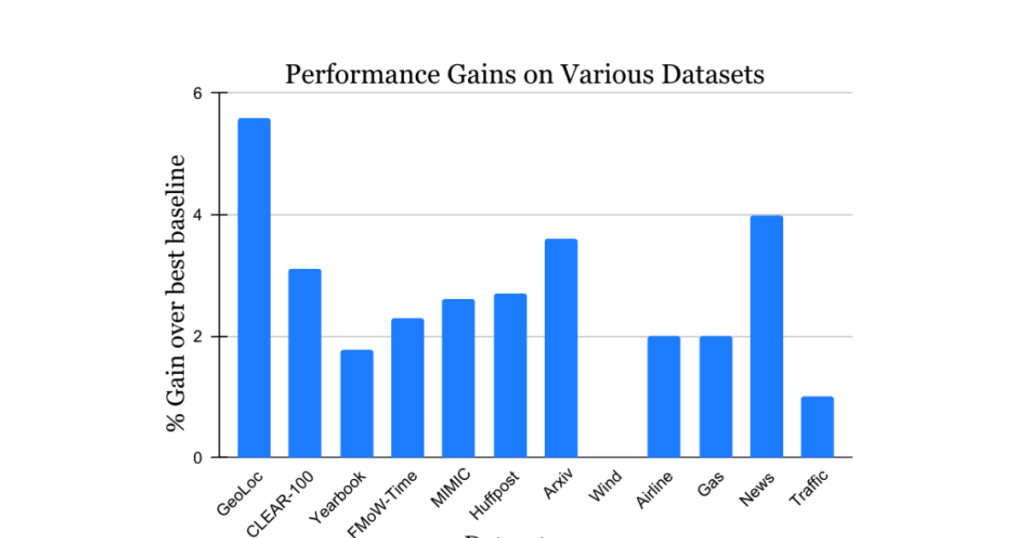

We validated our findings on a wide range of nonstationary learning challenge datasets sourced from the academic literature (see 1, 2, 3, 4 for details) that span data sources and modalities (photos, satellite images, social media text, medical records, sensor readings, tabular data) and sizes (ranging from 10k to 39M instances). We report significant gains in the test period when compared to the nearest published benchmark method for each dataset (shown below). Note that the previous best-known method may be different for each dataset. These results showcase the broad applicability of our approach.

| Performance gain of our method on a variety of tasks studying natural concept drift. Our reported gains are over the previous best-known method for each dataset. |

Extensions to continual learning

Finally, we consider an interesting extension of our work. The work above described how offline learning can be extended to handle concept drift using ideas inspired by continual learning. However, sometimes offline learning is infeasible, for example if the amount of training data available is too large to maintain or process. We adapted our approach to continual learning in a straightforward manner by applying temporal reweighting within the context of each bucket of data being used to sequentially update the model. This proposal still retains some limitations of continual learning, e.g., model updates are performed only on the most recent data, and all optimization decisions (including our reweighting) are made only over that data. Nevertheless, our approach consistently beats regular continual learning as well as a wide range of other continual learning algorithms on the photo categorization benchmark (see below). Since our approach is complementary to the ideas in many of the baselines compared here, we anticipate even larger gains when combined with them.

| Results of our method adapted to continual learning, compared to the latest baselines. |
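The per-bucket reweighting described in this section can be sketched on a toy problem. Everything below is a simplifying assumption for illustration: the "model" is a single scalar estimate, the weights come from one fixed exponential decay rather than a learned helper model, and the names, timescale, learning rate, and step count are hypothetical.

```python
import math


def decay_weight(age, timescale):
    """Fixed exponential decay, standing in for a learned helper model."""
    return math.exp(-age / timescale)


def continual_fit(buckets, timescale=2.0, lr=0.5, steps=50):
    """Sequentially fit a scalar estimate to buckets of (timestamp, value) pairs.

    Ages are measured relative to the newest point in the current bucket,
    so reweighting only ever sees the data of that bucket.
    """
    estimate = 0.0
    for bucket in buckets:                      # buckets arrive in temporal order
        newest = max(t for t, _ in bucket)
        weights = [decay_weight(newest - t, timescale) for t, _ in bucket]
        total = sum(weights)
        for _ in range(steps):
            # Gradient of the weighted squared error, normalized by total weight.
            grad = sum(w * (estimate - v)
                       for w, (_, v) in zip(weights, bucket)) / total
            estimate -= lr * grad
    return estimate


# One hypothetical bucket whose values drift upward over time.
drifting_bucket = [(0, 0.0), (1, 1.0), (2, 2.0), (3, 3.0)]
estimate = continual_fit([drifting_bucket])
```

With these assumed settings the estimate ends up above the unweighted bucket mean of 1.5, i.e., closer to the most recent values. The sketch also makes the stated limitation visible: nothing outside the current bucket can influence the update.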

Conclusion

We addressed the challenge of data drift in learning by combining the strengths of previous approaches: offline learning with its effective reuse of data, and continual learning with its emphasis on more recent data. We hope that our work helps improve model robustness to concept drift in practice, and generates increased interest and new ideas in addressing the ubiquitous problem of gradual concept drift.

Acknowledgements

We thank Mike Mozer for many interesting discussions in the early phase of this work, as well as very helpful advice and feedback during its development.