JPMorgan AI Research Introduces DocLLM: A Lightweight Extension to Traditional Large Language Models Tailored for Generative Reasoning Over Documents with Rich Layouts

Enterprise documents like contracts, reports, invoices, and receipts have intricate layouts. Interpreting and analyzing these documents automatically is valuable and can lead to AI-driven solutions. However, there are a number of challenges, as these documents can have rich semantics that lie at the intersection of textual and spatial modalities. The complex layouts of the documents provide crucial visual cues that are necessary for interpreting them efficiently.

While Document AI (DocAI) has made significant strides in areas such as question answering, categorization, and extraction, real-world applications continue to face persistent hurdles related to accuracy, reliability, contextual understanding, and generalization to new domains.

To address these issues, a team of researchers from JPMorgan AI Research has introduced DocLLM, a lightweight extension to conventional Large Language Models (LLMs) that takes into account both textual semantics and spatial layout and has been created specifically for reasoning over visual documents.

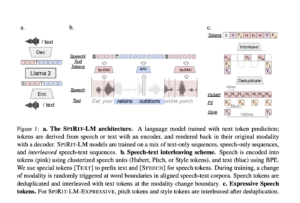

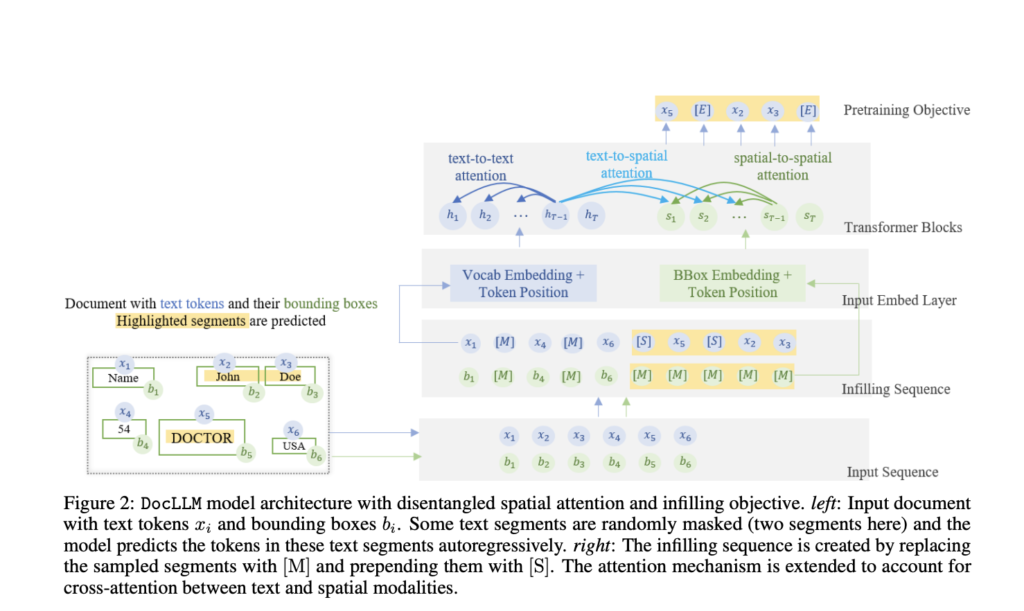

DocLLM is inherently multi-modal because it represents both text semantics and spatial layouts. In contrast to traditional methods, it uses bounding box coordinates obtained through optical character recognition (OCR) to add spatial layout information, removing the need for a sophisticated visual encoder. This design decision decreases processing times, increases model size only slightly, and maintains the causal decoder architecture.
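
As a rough illustration of what "bounding boxes from OCR as the spatial input" can look like in practice, the sketch below pairs each OCR token with a page-normalized box. The `OcrToken` type, the helper names, and the 0 to 1000 integer grid are assumptions made for this example; the article does not say how DocLLM scales or encodes its coordinates.

```python
from dataclasses import dataclass
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x0, y0, x1, y1) in pixels


@dataclass(frozen=True)
class OcrToken:
    """Hypothetical container for one OCR result: a word and its pixel box."""
    text: str
    box: Box


def normalize_box(box: Box, page_width: float, page_height: float,
                  scale: int = 1000) -> Tuple[int, int, int, int]:
    """Map a pixel-space box onto an integer grid in [0, scale].

    The grid size is an assumption for this sketch, chosen so that pages of
    different resolutions share one coordinate system.
    """
    x0, y0, x1, y1 = box
    return (
        round(x0 * scale / page_width),
        round(y0 * scale / page_height),
        round(x1 * scale / page_width),
        round(y1 * scale / page_height),
    )


def to_model_inputs(tokens: List[OcrToken], page_width: float,
                    page_height: float):
    """Split OCR output into parallel text and layout sequences."""
    texts = [t.text for t in tokens]
    boxes = [normalize_box(t.box, page_width, page_height) for t in tokens]
    return texts, boxes


tokens = [
    OcrToken("Invoice", (50, 40, 200, 80)),
    OcrToken("Total:", (50, 900, 150, 940)),
]
texts, boxes = to_model_inputs(tokens, page_width=1000, page_height=2000)
print(texts)
print(boxes)
```

The point of the sketch is that the layout signal is just four numbers per token, which is why no image encoder is needed to supply it.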

The team has shared that for several document intelligence tasks, including form comprehension, table alignment, and visual question answering, a spatial layout structure alone is sufficient. By separating spatial information from textual information, the method extends the standard transformer self-attention mechanism to capture cross-modal interactions.
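
The article does not give the attention formula, so the following is only one plausible way to sketch "separate text and spatial streams with cross-modal interactions": the attention score is a weighted sum of text-to-text, text-to-spatial, spatial-to-text, and spatial-to-spatial dot products, under a causal mask. The function names and the weights `lam_ts`, `lam_st`, and `lam_ss` are assumptions for illustration, not the authors' implementation.

```python
import math


def matmul_t(a, b):
    """Return a @ b^T for two lists of equal-width rows."""
    return [[sum(x * y for x, y in zip(ra, rb)) for rb in b] for ra in a]


def softmax(row):
    """Numerically stable softmax over one list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def disentangled_attention(q_t, k_t, q_s, k_s, values,
                           lam_ts=1.0, lam_st=1.0, lam_ss=1.0):
    """Causal attention whose scores mix text (t) and spatial (s) streams.

    q_t/k_t are text queries/keys, q_s/k_s are spatial queries/keys, and
    values come from the text stream. All are lists of n rows of width d.
    """
    n, d = len(q_t), len(q_t[0])
    tt = matmul_t(q_t, k_t)  # text query vs. text key
    ts = matmul_t(q_t, k_s)  # text query vs. spatial key
    st = matmul_t(q_s, k_t)  # spatial query vs. text key
    ss = matmul_t(q_s, k_s)  # spatial query vs. spatial key
    outputs, weights = [], []
    for i in range(n):
        # Causal mask: position i only sees positions 0..i.
        scores = [
            (tt[i][j] + lam_ts * ts[i][j] + lam_st * st[i][j]
             + lam_ss * ss[i][j]) / math.sqrt(d)
            for j in range(i + 1)
        ]
        w = softmax(scores)
        weights.append(w + [0.0] * (n - i - 1))
        outputs.append([
            sum(w[j] * values[j][c] for j in range(i + 1))
            for c in range(len(values[0]))
        ])
    return outputs, weights


# Tiny example: 3 tokens, width-2 projections, all-zero queries.
zeros = [[0.0, 0.0] for _ in range(3)]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
vals = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, attn = disentangled_attention(zeros, keys, zeros, keys, vals)
print(attn)
```

Setting the three weights to zero recovers ordinary text-only causal attention, which makes the spatial terms easy to ablate in a sketch like this.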

Visual documents often have fragmented text sections, irregular layouts, and varied content. To address this, the study proposes changing the pre-training objective during the self-supervised pre-training phase. It recommends infilling to accommodate varied text arrangements and cohesive text blocks. With this adjustment, the model can more effectively handle mixed data types, complex layouts, contextual completions, and misaligned text.
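
To make the infilling idea concrete, here is a minimal sketch of how a block-level infilling example could be built: selected text blocks are replaced by placeholder tokens in the input, and the model's target is the removed content, emitted after the rest of the document. The helper name `make_infilling_example` and the `<mask_N>` and `<eos>` token strings are hypothetical; the article does not describe the exact tokens or masking policy DocLLM uses.

```python
from typing import Iterable, List, Tuple


def make_infilling_example(
    blocks: List[str],
    masked_indices: Iterable[int],
    mask_fmt: str = "<mask_{}>",   # hypothetical placeholder token format
    end_token: str = "<eos>",      # hypothetical end-of-sequence token
) -> Tuple[List[str], List[str]]:
    """Build (input, target) sequences for block-level infilling.

    Masked blocks are swapped for numbered placeholders in the input. The
    target lists each placeholder followed by the block it replaced, so the
    model can condition on text both before and after the gap.
    """
    masked = sorted(set(masked_indices))
    for idx in masked:
        if not 0 <= idx < len(blocks):
            raise IndexError(f"masked index {idx} is out of range")
    slots = {idx: mask_fmt.format(n) for n, idx in enumerate(masked)}
    prefix = [slots.get(i, block) for i, block in enumerate(blocks)]
    target: List[str] = []
    for idx in masked:
        target.extend([slots[idx], blocks[idx]])
    target.append(end_token)
    return prefix, target


blocks = ["Invoice No: 1042", "Date: 2024-01-05", "Total due: $310.00"]
prefix, target = make_infilling_example(blocks, masked_indices=[1])
print(prefix)
print(target)
```

Compared with plain next-token prediction, this arrangement lets a left-to-right decoder see the blocks that follow a gap before it has to fill the gap, which suits documents whose reading order is not strictly linear.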

DocLLM's pre-trained knowledge has been fine-tuned on instruction data from many datasets to suit different document intelligence tasks. These tasks include document classification, visual question answering, natural language inference, and key information extraction.

The instruction-tuning data covers both single- and multi-page documents, and layout cues like field separators, titles, and captions can be included to make the documents' logical structure easier to follow. For the Llama2-7B model, the changes made by DocLLM have yielded notable performance gains, ranging from 15% to 61%, on four of the five previously unseen datasets.

The team has summarized their primary contributions as follows.

- A standard LLM with a lightweight extension designed specifically for visual document understanding has been introduced.

- The study provides a novel attention mechanism that can distinguish between textual and spatial information, enabling the efficient capture of cross-modal alignment between layout and text.

- A pre-training objective has been defined to address the difficulties caused by irregular layouts in visual documents.

- A specialized instruction-tuning dataset has been curated for visual document intelligence tasks to fine-tune the model effectively.

- In-depth experiments have been carried out, which yielded important insights into how the proposed model behaves and functions when handling visual documents.

Check out the Paper. All credit for this research goes to the researchers of this project.


Tanya Malhotra is a final-year undergraduate at the University of Petroleum & Energy Studies, Dehradun, pursuing a BTech in Computer Science Engineering with a specialization in Artificial Intelligence and Machine Learning.

She is a Data Science enthusiast with good analytical and critical thinking, along with an ardent interest in acquiring new skills, leading groups, and managing work in an organized manner.