LLMs & Knowledge Graphs – MarkTechPost

Large Language Models (LLMs) are AI tools that can understand and generate human language. They are powerful neural networks with billions of parameters trained on massive amounts of text data. This extensive training gives them a deep understanding of the structure and meaning of human language.

LLMs can perform various language tasks such as translation, sentiment analysis, and chatbot conversation. They can comprehend intricate textual information, recognize entities and their connections, and produce text that is coherent and grammatically correct.

A knowledge graph is a database that represents and connects data and information about different entities. It comprises nodes representing any object, person, or place, and edges defining the relationships between the nodes. This allows machines to understand how the entities relate to each other, share attributes, and draw connections between different things in the world around us.
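The node-and-edge structure described above can be sketched as a small in-memory store of (head, relation, tail) triples. This is only an illustration: the `KnowledgeGraph` class, the relation names, and the entities (`Alice`, `Acme`, `Lisbon`) are hypothetical and are not taken from any particular graph database.

```python
from collections import defaultdict


class KnowledgeGraph:
    """A tiny in-memory graph of (head, relation, tail) triples."""

    def __init__(self):
        # Maps each head node to a list of (relation, tail) edges.
        self._edges = defaultdict(list)

    def add(self, head, relation, tail):
        """Add a directed, labeled edge from head to tail."""
        self._edges[head].append((relation, tail))

    def neighbors(self, head, relation=None):
        """Return tails linked from `head`, optionally filtered by relation."""
        return [
            tail
            for rel, tail in self._edges.get(head, [])
            if relation is None or rel == relation
        ]


kg = KnowledgeGraph()
kg.add("Alice", "WORKS_AT", "Acme")      # hypothetical person and company
kg.add("Alice", "LIVES_IN", "Lisbon")
kg.add("Acme", "LOCATED_IN", "Lisbon")

print(kg.neighbors("Alice"))
print(kg.neighbors("Alice", "WORKS_AT"))
```

A production system would more likely use a dedicated graph database, but the same idea applies: entities are nodes, and each relationship is a labeled edge that can be followed.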

Knowledge graphs can be used in various applications, such as video recommendations on YouTube, insurance fraud detection, product recommendations in retail, and predictive modeling.

One of the main limitations of LLMs is that they are “black boxes,” i.e., it is hard to understand how they arrive at a conclusion. Moreover, they frequently struggle to grasp and retrieve factual information, which can result in errors and inaccuracies known as hallucinations.

This is where knowledge graphs can help LLMs by providing them with external knowledge for inference. However, knowledge graphs are difficult to construct and are evolving by nature. So, it is a good idea to use LLMs and knowledge graphs together to make the most of their strengths.

LLMs can be combined with Knowledge Graphs (KGs) using three approaches:

- KG-enhanced LLMs: These integrate KGs into LLMs during training and use them for better comprehension.

- LLM-augmented KGs: LLMs can improve various KG tasks like embedding, completion, and question answering.

- Synergized LLMs + KGs: LLMs and KGs work together, enhancing each other for two-way reasoning driven by data and knowledge.

KG-Enhanced LLMs

LLMs are well known for their ability to excel in various language tasks by learning from vast text data. However, they face criticism for generating incorrect information (hallucination) and lacking interpretability. Researchers propose enhancing LLMs with knowledge graphs (KGs) to address these issues.

KGs store structured knowledge, which can be used to improve LLMs’ understanding. Some methods integrate KGs during LLM pre-training, aiding knowledge acquisition, while others use KGs during inference to improve access to domain-specific knowledge. KGs are also used to interpret LLMs’ reasoning and facts for improved transparency.
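As a rough illustration of using a KG at inference time, the sketch below looks up facts about entities mentioned in a question and places them in the prompt. The triples, the function names `retrieve_facts` and `build_prompt`, and the naive substring matching are all assumptions made for this example. No real LLM is called, and a real system would use proper entity linking.

```python
# Hypothetical facts; in practice these would come from a real knowledge graph.
TRIPLES = [
    ("Acme", "HEADQUARTERED_IN", "Lisbon"),
    ("Acme", "FOUNDED_BY", "Alice"),
    ("Globex", "FOUNDED_BY", "Bob"),
]


def retrieve_facts(question, triples):
    """Return triples whose head or tail is mentioned in the question.

    Uses naive case-insensitive substring matching (an assumption for brevity).
    """
    q = question.lower()
    return [t for t in triples if t[0].lower() in q or t[2].lower() in q]


def build_prompt(question, triples):
    """Prepend the retrieved facts to the question as grounding context."""
    facts = retrieve_facts(question, triples)
    fact_lines = "\n".join(f"- {h} {r} {t}" for h, r, t in facts)
    return (
        "Answer using only the facts below.\n"
        f"Facts:\n{fact_lines}\n"
        f"Question: {question}\n"
    )


prompt = build_prompt("Who founded Acme?", TRIPLES)
print(prompt)
```

Because the facts are listed explicitly in the prompt, it is also easier to trace which pieces of knowledge an answer relied on.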

LLM-augmented KGs

Knowledge graphs (KGs) store structured information crucial for real-world applications. However, current KG methods face challenges with incomplete data and with processing text for KG construction. Researchers are exploring how to leverage the versatility of LLMs to address KG-related tasks.

One common approach involves using LLMs as text processors for KGs. LLMs analyze textual data within KGs and enhance KG representations. Some studies also employ LLMs to process original text data, extracting relations and entities to build KGs. Recent efforts aim to create KG prompts that make structural KGs understandable to LLMs. This enables direct application of LLMs to tasks like KG completion and reasoning.
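One way to picture LLM-based KG construction is a prompt that asks for triples in a fixed format, followed by a parser for the reply. The sketch below is hypothetical: the prompt wording, the `head | relation | tail` line format, and the canned `fake_response` are assumptions, and the call to an actual model is deliberately left out.

```python
def build_extraction_prompt(text):
    """Ask a model to emit one 'head | relation | tail' triple per line."""
    return (
        "Extract (head | relation | tail) triples from the text, one per line.\n"
        f"Text: {text}\n"
        "Triples:\n"
    )


def parse_triples(response):
    """Parse 'head | relation | tail' lines, skipping malformed ones."""
    triples = []
    for line in response.splitlines():
        parts = [p.strip() for p in line.split("|")]
        # Keep only lines with exactly three non-empty fields.
        if len(parts) == 3 and all(parts):
            triples.append(tuple(parts))
    return triples


# Stand-in for a model reply; no real LLM is called here.
fake_response = "Alice | WORKS_AT | Acme\nnot a triple\nAcme | LOCATED_IN | Lisbon\n"
print(parse_triples(fake_response))
```

Skipping malformed lines instead of raising an error is a design choice for this sketch, since model output does not always follow the requested format.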

Synergized LLMs + KGs

Researchers are increasingly interested in combining LLMs and KGs due to their complementary nature. To explore this integration, a unified framework called “Synergized LLMs + KGs” is proposed, consisting of four layers: Data, Synergized Model, Technique, and Application.

LLMs handle textual data, KGs handle structural data, and with multi-modal LLMs and KGs, this framework can extend to other data types like video and audio. These layers collaborate to enhance capabilities and improve performance for various applications like search engines, recommender systems, and AI assistants.

Multi-Hop Question Answering

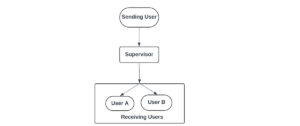

Typically, when we use an LLM to retrieve information from documents, we divide them into chunks and then convert the chunks into vector embeddings. With this approach, we might not be able to find information that spans multiple documents. This is known as the problem of multi-hop question answering.

This issue can be solved using a knowledge graph. We can construct a structured representation of the information by processing each document separately and connecting the results in a knowledge graph. This makes it easier to move around and find connected documents, making it possible to answer complex questions that require multiple steps.

In the above example, if we want the LLM to answer the question, “Did any former employee of OpenAI start their own company?” the LLM might return some duplicated information, or other relevant information could be ignored. Extracting entities and relationships from text to construct a knowledge graph makes it easy for the LLM to answer questions spanning multiple documents.
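The multi-hop question above can be sketched as a two-step traversal over triples extracted from separate documents. Everything here is illustrative: `Person A`, `Person B`, and `Company X` are placeholders instead of real people or companies, and the relation names `FORMERLY_WORKED_AT` and `FOUNDED` are assumed.

```python
# Each document contributes its own triples; names are hypothetical placeholders.
doc1_triples = [("Person A", "FORMERLY_WORKED_AT", "OpenAI")]
doc2_triples = [("Person A", "FOUNDED", "Company X")]
doc3_triples = [("Person B", "FORMERLY_WORKED_AT", "OpenAI")]

# Merging the triples connects facts that no single document contains.
triples = doc1_triples + doc2_triples + doc3_triples


def former_employees_who_founded(org, triples):
    """Two hops: find former employees of `org`, then companies they founded."""
    former = {h for h, r, t in triples if r == "FORMERLY_WORKED_AT" and t == org}
    return sorted((h, t) for h, r, t in triples if r == "FOUNDED" and h in former)


print(former_employees_who_founded("OpenAI", triples))
```

No single document states both facts about `Person A`, yet the merged graph answers the question directly, and `Person B` is correctly excluded because no `FOUNDED` edge exists for that node.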

Combining Textual Data with a Knowledge Graph

Another advantage of using a knowledge graph with an LLM is that the graph can store both structured and unstructured data and connect them with relationships. This makes information retrieval easier.

In the above example, a knowledge graph has been used to store:

- Structured data: Past employees of OpenAI and the companies they started.

- Unstructured data: News articles mentioning OpenAI and its employees.

With this setup, we can answer questions like “What’s the latest news about Prosper Robotics founders?” by starting from the Prosper Robotics node, moving to its founders, and then retrieving recent articles about them.
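The traversal just described (company node, then founders, then recent articles) might look like the sketch below. The founder names, article titles, and dates are made-up placeholders, and the dictionary layout and the `latest_news_about_founders` function are assumptions for illustration only.

```python
from datetime import date

# Structured data: company -> founders (placeholder names, not real people).
founded_by = {"Prosper Robotics": ["Founder A", "Founder B"]}

# Unstructured data attached to the graph as article nodes (made-up examples).
articles = [
    {"title": "Article 1", "published": date(2023, 1, 10), "mentions": ["Founder A"]},
    {"title": "Article 2", "published": date(2023, 6, 5), "mentions": ["Founder B"]},
    {"title": "Article 3", "published": date(2023, 3, 1), "mentions": ["Someone Else"]},
]


def latest_news_about_founders(company, limit=5):
    """Hop company -> founders -> articles, returning titles newest first."""
    founders = set(founded_by.get(company, []))
    hits = [a for a in articles if founders & set(a["mentions"])]
    hits.sort(key=lambda a: a["published"], reverse=True)
    return [a["title"] for a in hits[:limit]]


print(latest_news_about_founders("Prosper Robotics"))
```

The retrieved article text could then be passed to an LLM as context, so the structured hops select the documents and the model handles the summarization.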

This adaptability makes a knowledge graph suitable for a wide range of LLM applications, as it can handle various data types and relationships between entities. The graph structure provides a clear visual representation of knowledge, making it easier for both developers and users to understand and work with.

Researchers are increasingly exploring the synergy between LLMs and KGs, with three main approaches: KG-enhanced LLMs, LLM-augmented KGs, and Synergized LLMs + KGs. These approaches aim to leverage the strengths of both technologies to address various language and knowledge-related tasks.

The integration of LLMs and KGs offers promising possibilities for applications such as multi-hop question answering, combining textual and structured data, and enhancing transparency and interpretability. As technology advances, this collaboration between LLMs and KGs holds the potential to drive innovation in fields like search engines, recommender systems, and AI assistants, ultimately benefiting users and developers alike.

